GPT-4o: Unlocking Multimodal AI Capabilities

The landscape of artificial intelligence is in a perpetual state of flux, continuously redefined by groundbreaking innovations that push the boundaries of what machines can perceive, understand, and generate. From the rudimentary rule-based systems of yesteryear to the intricate neural networks of today, each evolutionary leap has brought us closer to a future where AI acts as a seamless extension of human intellect and creativity. Among these transformative advancements, the emergence of large language models (LLMs) has marked a particularly profound shift, revolutionizing how we interact with technology, process information, and automate complex tasks. These models, initially celebrated for their remarkable proficiency in natural language understanding and generation, have steadily evolved, demonstrating an insatiable appetite for broader forms of data and a deeper understanding of the world.

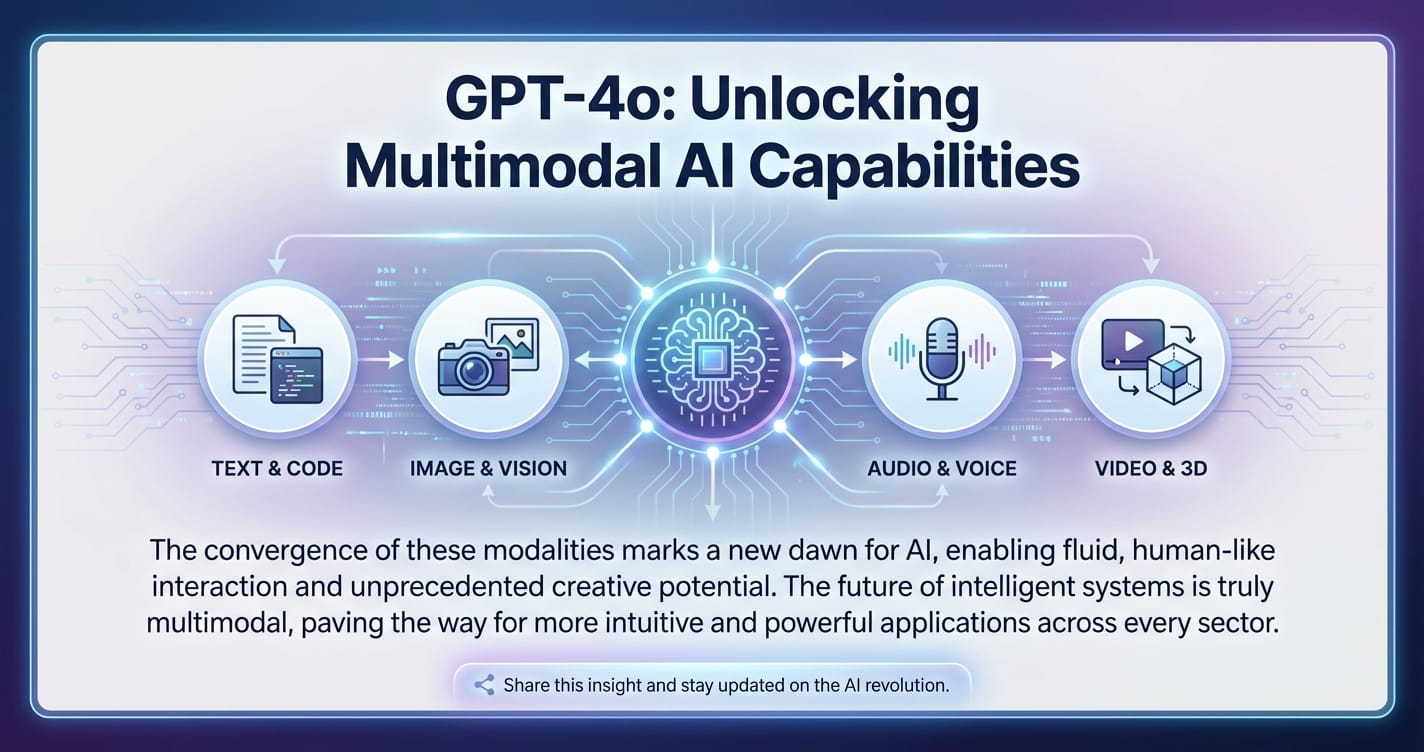

For years, the dream of truly multimodal AI – systems capable of processing and generating information across text, audio, and visual domains simultaneously and coherently – remained largely an aspirational goal. While significant progress had been made in individual modalities, integrating them into a single, fluid experience presented formidable technical hurdles. We saw models excelling in text-to-image generation, others in speech recognition, and still others in visual question answering. Yet, the ability for an AI to natively weave together these diverse sensory inputs and outputs in real-time, engaging in a dialogue that truly mimics human conversational fluidity, remained elusive. This challenge stemmed from the inherent complexity of translating disparate data formats into a unified representation that a single neural network could process without significant latency or loss of context.

Then came GPT-4o, a monumental release from OpenAI that fundamentally redefines the scope of what a generative AI model can achieve. The 'o' in GPT-4o stands for "omni," a moniker that perfectly encapsulates its ambition and capability: to function optimally across all modalities (text, audio, and vision) as a singular, cohesive entity. This isn't merely an incremental upgrade; it represents a paradigm shift from models that cobbled together separate components for different modalities to a truly unified architecture. GPT-4o marks a significant stride towards human-like AI interaction, capable of understanding nuances in speech, interpreting visual cues, and generating appropriate responses, all within a few hundred milliseconds. This breakthrough promises to unlock unprecedented possibilities across virtually every sector, from revolutionizing customer service and personalized education to democratizing access to sophisticated AI tools for developers and everyday users alike. The ripple effect of GPT-4o’s capabilities is already being felt, signaling a new era where AI doesn't just process information, but truly perceives and interacts with the world in a richer, more integrated manner, paving the way for even more accessible versions like gpt-4o mini and the widely anticipated chatgpt 4o mini.

This comprehensive exploration will delve into the intricacies of GPT-4o, dissecting its architectural innovations, exploring its unparalleled performance, and showcasing the myriad applications it enables. We will also examine the strategic introduction of more accessible derivatives like gpt-4o mini and chatgpt 4o mini, understanding their role in democratizing this powerful technology. Furthermore, we will critically evaluate the ethical considerations and potential societal impacts of such a potent AI, ultimately peering into the future it helps to shape.

The Dawn of Truly Multimodal AI – Understanding GPT-4o's Core Innovation

The journey of AI from specialized, unimodal systems to generalist, multimodal intelligence has been long and arduous. Early AI models were largely confined to single data types – text processors for natural language, image recognition systems for visual data, and speech-to-text engines for audio. While each achieved impressive feats within its domain, the ability to seamlessly integrate these capabilities into a coherent whole remained a significant hurdle. Previous attempts at multimodality, such as the GPT-4V (vision) model, often involved separate processing pipelines where visual inputs were first processed by a dedicated vision encoder, and the resulting embeddings were then fed into a language model alongside text. While effective, this approach introduced latency, potential information loss during translation between modalities, and a less "native" understanding of the intertwined nature of sensory data.

GPT-4o shatters this paradigm by introducing a truly native multimodal architecture. Unlike its predecessors, which treated different modalities as separate inputs requiring distinct pre-processing steps before being combined, GPT-4o was trained end-to-end across text, audio, and vision. This means that a single neural network learns to process raw audio, visual, and textual data simultaneously and inherently. It doesn't translate an image into a description before processing it; it sees the image, hears the sound, and reads the text all within its foundational layers, allowing for a much deeper and more integrated understanding of context.

This unified approach fundamentally changes how the model perceives and interacts with information. Imagine a child learning about the world: they don't process "the cat's meow" as separate audio data and "the cat's furry appearance" as separate visual data. They experience "the cat" as a holistic entity with specific sounds, visuals, and behaviors. GPT-4o attempts to mimic this holistic perception. Its training regimen involved vast datasets encompassing interconnected text, audio, and video, teaching the model to identify correlations and nuances across these disparate forms of information directly. This intrinsic understanding allows for a level of cross-modal reasoning that was previously unattainable, enabling it to not just process but genuinely comprehend and generate information that spans multiple sensory dimensions.

The implications of this native multimodality are profound. For instance, if you show GPT-4o a video of someone struggling to open a jar while describing their frustration, it can process both the visual cues of their effort and the auditory cues of their speech simultaneously. It can then offer advice, or even crack a joke, with an understanding of both the literal problem and the underlying emotional state, demonstrating empathy and contextual awareness far beyond what earlier models could provide. This integrated processing significantly reduces the latency traditionally associated with multimodal interactions, making conversations and commands feel remarkably fluid and natural.

Key Features of GPT-4o's Multimodal Prowess:

- Text Generation and Understanding: At its core, GPT-4o retains and even enhances the extraordinary text capabilities of its predecessors. It excels in complex reasoning, nuanced language generation, summarization, translation, and creative writing, often matching or surpassing the performance of GPT-4 Turbo on standard text-based benchmarks.

- Advanced Audio Processing: This is where GPT-4o truly shines. It can process audio inputs directly, understanding speech with incredible accuracy, discerning emotional tone, and even recognizing multiple speakers. Its audio output is equally impressive: it generates natural-sounding speech in various voices and expressive styles, and it can handle real-time translation and even singing. This is a leap beyond simple speech-to-text or text-to-speech; it's true audio understanding and generation.

- Superior Image and Video Understanding: GPT-4o can interpret visual information with unprecedented detail and contextual awareness. It can identify objects, analyze scenes, read text within images, describe complex visual relationships, and even provide real-time commentary on video streams. This makes it invaluable for tasks ranging from detailed image captioning and visual search to assisting visually impaired individuals and analyzing complex visual data.

- Cross-Modal Reasoning: Perhaps the most compelling feature is its ability to reason across modalities. It can answer questions about an image based on audio input, generate an image based on text and audio cues, or explain visual phenomena using both spoken and written words. This holistic understanding allows for rich, interactive experiences where the AI can follow a conversation even as the input modality shifts fluidly. The "omni" aspect isn't just about handling all data types; it's about handling them together, intelligently, as a single, cohesive cognitive unit.

By treating all these sensory inputs as intrinsically linked, GPT-4o moves beyond mere task-specific performance into a realm of genuine, integrated intelligence, laying the groundwork for AI systems that can interact with the world in a manner far more akin to human cognition.
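As a rough illustration of how mixed inputs reach the model in practice, the sketch below builds a single user message that carries text and an image together, using the content-parts layout of OpenAI's Chat Completions API. The helper name `build_vision_message` and the placeholder bytes are assumptions made for illustration, and nothing is sent over the network.

```python
import base64
import json


def build_vision_message(question: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Return one user message that carries text and an inline image together."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ],
    }


# Placeholder bytes stand in for a real image file (assumption for illustration).
message = build_vision_message("What is shown in this picture?", b"\x89PNG-placeholder")
payload = {"model": "gpt-4o", "messages": [message]}
print(json.dumps(payload, indent=2)[:200])
```

Because both parts sit in the same message, the question and the image arrive as one unit of context instead of two separate requests.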

Diving Deep into GPT-4o's Architecture and Performance

The revolutionary capabilities of GPT-4o are not merely a result of more training data or minor tweaks; they stem from fundamental advancements in its underlying architecture and optimization strategies. While OpenAI has not released the full technical details of its proprietary architecture, the public demonstrations and performance metrics provide strong clues about the ingenuity baked into this "omni" model. At its heart, GPT-4o likely utilizes a highly optimized transformer architecture, similar to its predecessors, but with crucial modifications to natively handle multimodal inputs and outputs within a unified framework.

Unified Transformer Architecture for Multimodal Processing:

Traditional transformer models excel at sequential data processing, typically text. To handle multimodal inputs, GPT-4o likely employs a sophisticated tokenizer that can convert raw audio, image, and text data into a common, high-dimensional token space. This is not simply converting an image to text, but rather representing the visual features, auditory patterns, and linguistic elements as tokens that can all be processed by the same core attention mechanisms of the transformer. The model's attention layers are then trained to find relationships not only within a single modality (e.g., words in a sentence, pixels in an image) but also between different modalities (e.g., how a specific sound corresponds to a visual event, or how spoken words relate to objects in a scene). This cross-modal attention is pivotal, allowing the model to build a rich, contextual understanding that spans sensory boundaries.
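To make the idea of a shared token space concrete, here is a toy sketch of scaled dot-product attention over a single sequence that mixes text, audio, and image tokens. It is a generic textbook illustration, not OpenAI's undisclosed architecture; the three-dimensional embeddings, token labels, and function names are all made up for the example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


# Toy 3-dimensional embeddings; real models learn these (values are made up).
tokens = [
    ("text", "cat", [1.0, 0.0, 0.5]),
    ("text", "sat", [0.0, 1.0, 0.0]),
    ("audio", "meow-frame", [0.9, 0.1, 0.6]),
    ("image", "fur-patch", [0.8, 0.0, 0.7]),
]

keys = [vec for _, _, vec in tokens]
weights = attention_weights(tokens[0][2], keys)  # the text token "cat" as the query

for (modality, label, _), w in zip(tokens, weights):
    print(f"{modality:5s} {label:11s} {w:.3f}")
```

In this toy setup the query for the word "cat" places more weight on the audio and image tokens with similar embeddings than on the unrelated text token, which is the kind of cross-modal link the paragraph above describes.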

Furthermore, the output layer of GPT-4o is equally versatile, capable of generating text, speech, or images directly from its internal representations. This eliminates the need for separate decoding modules for each modality, leading to faster inference and a more coherent, integrated output experience. The training process for such a model is immensely complex, requiring massive, carefully curated multimodal datasets that pair text, audio, and visual information in meaningful ways, allowing the model to learn the intricate correlations that define human perception.

Unprecedented Performance Metrics: Speed, Accuracy, and Multilingualism:

The most striking aspect of GPT-4o's public debut was its real-time audio interaction, which felt uncannily human-like in its responsiveness and fluidity. This wasn't just impressive; it represented a quantum leap in performance.

- Speed and Latency: OpenAI reported that GPT-4o can respond to audio inputs in as little as 232 milliseconds (ms), with an average response time of 320 ms. For context, the average human response time in a conversation is approximately 200-300 ms. This puts GPT-4o firmly within the range of natural human conversational speed, a monumental achievement that was not possible with previous sequential multimodal approaches. Such low-latency AI is critical for real-time applications like voice assistants, customer service, and interactive educational tools, where delays can quickly disrupt the user experience.

- Accuracy Across Modalities: On traditional text-based benchmarks, GPT-4o matches or surpasses the performance of GPT-4 Turbo on a wide array of tasks, including MMLU (Massive Multitask Language Understanding), GPQA (Graduate-Level Google-Proof Q&A), Math, and Coding benchmarks. What's truly remarkable is that it achieves this while also demonstrating state-of-the-art performance in entirely new domains:

- Audio Understanding: Its speech recognition capabilities significantly outperform existing models, accurately transcribing and understanding diverse accents, languages, and even emotional nuances in spoken input.

- Vision Understanding: On visual question-answering benchmarks, it sets new records, demonstrating a sophisticated ability to analyze complex images, identify subtle details, and reason about visual content.

- Multilingual Support: GPT-4o boasts significantly enhanced performance in over 50 languages, demonstrating superior translation quality and the ability to understand and generate speech in multiple tongues. This global accessibility broadens its utility and impact, making advanced AI capabilities available to a much wider user base worldwide.

Efficiency and Optimization:

Efficiency is another part of the story. Despite its expanded capabilities, GPT-4o is designed to be more efficient than its predecessors. For example, it is twice as fast and 50% cheaper in the API compared to GPT-4 Turbo, a testament to OpenAI's continuous efforts in model compression, inference optimization, and hardware utilization. This cost-effective AI approach makes sophisticated multimodal capabilities more accessible to developers and businesses, reducing the barrier to entry for integrating advanced LLMs into their products and services.
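To see what a 50% price reduction means for a budget, the following back-of-the-envelope sketch compares monthly spend before and after the discount. The baseline per-token prices and the workload size are placeholder assumptions, not a quoted price list; only the 50% figure comes from the text above.

```python
def request_cost(input_tokens, output_tokens, input_price, output_price):
    """Cost in dollars, with prices quoted per one million tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Placeholder baseline prices per 1M tokens (assumption, not a quoted price list).
BASELINE_INPUT, BASELINE_OUTPUT = 10.0, 30.0
DISCOUNT = 0.5  # the "50% cheaper" figure from the text

monthly_input, monthly_output = 200_000_000, 50_000_000  # hypothetical workload

baseline = request_cost(monthly_input, monthly_output, BASELINE_INPUT, BASELINE_OUTPUT)
discounted = request_cost(
    monthly_input,
    monthly_output,
    BASELINE_INPUT * (1 - DISCOUNT),
    BASELINE_OUTPUT * (1 - DISCOUNT),
)
print(f"baseline: ${baseline:,.2f}  discounted: ${discounted:,.2f}")
```

Swapping in your provider's current prices and your own token counts turns this into a quick sanity check before committing to a model.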

The following table provides a high-level comparison of key attributes between GPT-4o and its immediate predecessor, GPT-4 Turbo, highlighting the significant advancements:

| Feature/Metric | GPT-4 Turbo (GPT-4T) | GPT-4o |
|---|---|---|
| Core Modalities | Primarily text-based; visual input (GPT-4V) was a separate processing pipeline. Audio via separate ASR/TTS. | Native Multimodal (text, audio, vision processed by a single model). |
| Audio Processing | Relied on separate speech-to-text and text-to-speech models. High latency for real-time interaction. | Real-time, end-to-end audio processing. Average 320ms response time. Understands tone and emotion. |
| Vision Processing | Handled by GPT-4V, which encoded images into text tokens before language model processing. | Direct, native vision understanding. Enhanced scene analysis, visual reasoning. |
| Latency (Audio) | Multiple seconds for full round-trip audio interaction. | ~0.32 seconds average. Feels conversational. |
| Text Performance | High | Matches/surpasses GPT-4T on most benchmarks (MMLU, GPQA, Math, Coding). |
| Multilingual | Strong, but GPT-4o shows significant improvements. | Enhanced performance in 50+ languages. |
| API Cost | Higher pricing. | 50% cheaper than GPT-4 Turbo. |
| API Speed | Standard | 2x faster than GPT-4 Turbo. |
| Use Cases | Advanced text generation, code, complex reasoning, image description. | Real-time voice assistants, dynamic video analysis, interactive education, enhanced customer service. |

The advancements in GPT-4o represent not just an improvement in individual metrics but a fundamental shift in how AI can interact with the world, making it a truly versatile and groundbreaking tool.

The Versatility of GPT-4o: Diverse Applications and Use Cases

The advent of GPT-4o’s native multimodal capabilities is not merely a technical marvel; it is a catalyst for transformative innovation across an expansive array of industries and everyday scenarios. Its ability to fluidly process and generate text, audio, and visual information in real-time unlocks a new dimension of human-computer interaction and automated intelligence. The applications are virtually limitless, ranging from enhancing creative endeavors to revolutionizing enterprise operations and making sophisticated AI accessible to all.

Creative Industries: Igniting Imagination with Multimodal Tools

For artists, writers, musicians, and content creators, GPT-4o is poised to become an indispensable collaborator.

- Content Generation: Imagine an author dictating story ideas while sketching character concepts, with GPT-4o simultaneously generating narrative prose, suggesting plot twists, and even creating visual mood boards based on the dialogue. It can assist in drafting articles, scripts, poems, and marketing copy, enriching the creative process by offering instant feedback across modalities.

- Multimedia Content Creation: Users can describe a scene, specifying visual elements, desired audio effects, and narrative dialogue, and GPT-4o could generate preliminary images, soundscapes, or even short video snippets. This accelerates pre-production for film, animation, and game development, turning abstract ideas into tangible drafts in moments.

- Interactive Storytelling and Gaming: Developers can leverage GPT-4o to create dynamic non-player characters (NPCs) in games that respond to player speech with nuanced dialogue and visually acknowledge their surroundings. Interactive fiction can become truly immersive, with the AI adapting the narrative based on spoken choices and visual cues from the user, crafting personalized adventures that feel alive.

Education: Personalized Learning and Global Accessibility

The education sector stands to be profoundly reshaped by GPT-4o's interactive and adaptive capabilities.

- Personalized Tutoring: GPT-4o can act as a highly adaptive tutor, understanding student queries not just from text, but also from spoken questions, drawings, or even photos of their homework. It can gauge a student's frustration from their tone of voice or guide them through a complex problem by pointing out specific elements in a diagram, offering tailored explanations and exercises.

- Language Learning: For language learners, GPT-4o offers real-time pronunciation feedback, engaging conversational practice with a virtual partner, and instant translation while explaining cultural nuances. It can identify specific areas where a learner struggles and provide targeted practice, making language acquisition more dynamic and effective.

- Accessibility Tools: GPT-4o can provide real-time audio descriptions of visual content for the visually impaired or translate spoken language into sign language representations on screen for the hearing impaired. Its ability to understand diverse inputs also makes technology more accessible to individuals with various learning or physical challenges.

Business & Enterprise: Streamlining Operations and Enhancing Customer Experiences

For businesses, GPT-4o offers a competitive edge by enhancing efficiency, improving customer engagement, and automating complex workflows.

- Enhanced Customer Service: Chatbots powered by GPT-4o can move beyond scripted responses. They can understand a customer's tone of voice, interpret screenshots or video clips of their issue (e.g., a broken product, an error message), and respond with empathy and precise solutions, significantly reducing resolution times and improving satisfaction. Imagine a bot guiding a user through a technical issue by analyzing a live video feed of their device, all while speaking naturally.

- Data Analysis and Reporting: Business intelligence can be supercharged. Users can verbally query vast datasets, show charts or graphs, and have GPT-4o summarize trends, identify anomalies, and generate natural language reports or even new visual representations of the data. This democratizes access to complex analytics, allowing non-technical users to derive insights quickly.

- Marketing and Advertising: From generating creative briefs based on market research data to producing ad copy, visual concepts, and even voiceovers for campaigns, GPT-4o can streamline the entire marketing pipeline. It can analyze audience reactions to ad prototypes in real-time, suggesting optimizations.

Integrating such advanced LLMs into existing enterprise systems, however, often presents its own set of complexities – managing multiple API keys, handling diverse model versions, optimizing for latency, and ensuring cost-effectiveness. This is where a unified API platform like XRoute.AI becomes invaluable. XRoute.AI is specifically designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers, including cutting-edge models like GPT-4o. Businesses seeking to leverage the power of GPT-4o for their customer service, data analysis, or creative workflows can use XRoute.AI to manage their AI integrations efficiently. The platform's focus on low latency AI ensures that real-time interactions powered by GPT-4o remain fluid, while its cost-effective AI solutions allow enterprises to optimize their spending. This robust platform empowers users to build intelligent solutions without the complexity of managing multiple API connections, enabling seamless development of AI-driven applications, chatbots, and automated workflows. Whether it's for high throughput demands or scaling an AI-driven application, XRoute.AI provides the necessary infrastructure to harness GPT-4o's capabilities effectively.
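In practice, "OpenAI-compatible" means a client only has to change the base URL and API key to target a different provider. The sketch below prepares a Chat Completions request without sending it; the endpoint `https://api.example.com/v1`, the key, and the helper name `build_chat_request` are placeholders assumed for illustration, so check your provider's documentation for the real values.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Prepare (but do not send) a Chat Completions request for a compatible endpoint."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder endpoint and key (assumptions); substitute your provider's real values.
request = build_chat_request("https://api.example.com/v1", "YOUR_API_KEY", "gpt-4o", "Hello!")
print(request.get_method(), request.full_url)
# Sending would be: urllib.request.urlopen(request)
```

Keeping the base URL as a parameter means switching providers or models becomes a configuration change instead of a code change.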

Healthcare: Enhancing Diagnostics and Patient Care

While always requiring human oversight, GPT-4o can assist healthcare professionals in numerous ways.

- Medical Image Analysis: In preliminary stages, GPT-4o could help interpret medical images (X-rays, MRIs) by highlighting potential anomalies and cross-referencing findings with patient history presented verbally or in text.

- Assistive Technology: It can serve as an intuitive interface for patients with mobility challenges, allowing them to verbally communicate needs, control smart devices, or access health information with greater ease.

- Therapeutic Support: AI companions could provide conversational support, helping individuals manage stress or adhere to treatment plans by understanding their emotional state from voice and tone.

Daily Life: Intelligent Assistants and Personal Productivity

Beyond specialized applications, GPT-4o promises to make daily life more efficient and enjoyable.

- Smart Assistants: Imagine a truly intelligent home assistant that can "see" what you're doing, "hear" your commands, and anticipate your needs. You could point to ingredients in your fridge and ask for recipe ideas, or show it a broken appliance and get immediate troubleshooting steps, all in a natural, conversational manner.

- Personal Productivity Tools: From summarizing complex video meetings to helping you organize photos by understanding their content and your spoken instructions, GPT-4o can transform how we manage information and tasks.

The sheer versatility of GPT-4o is its defining characteristic. By breaking down the barriers between modalities, it offers developers and innovators a canvas upon which to paint a new generation of truly intelligent, responsive, and intuitive applications, making technology feel less like a tool and more like an extension of our own senses and minds.


Introducing gpt-4o mini and chatgpt 4o mini: Accessibility and Scalability

While the full power of GPT-4o represents the pinnacle of OpenAI's multimodal AI research, the practical realities of deployment, cost, and specific use cases often demand more specialized and accessible alternatives. Recognizing this, OpenAI has strategically introduced more streamlined versions, notably gpt-4o mini and chatgpt 4o mini. These models are not merely stripped-down versions but carefully optimized derivatives designed to democratize access to advanced multimodal AI, ensuring that its benefits can be leveraged across a wider spectrum of applications and budgets.

The Need for Smaller, More Focused Models:

The development of "mini" versions stems from several crucial considerations:

- Cost-Efficiency: Full-fledged flagship models like GPT-4o are inherently resource-intensive, both in terms of computational power for inference and associated API costs. For high-volume applications or use cases where extreme sophistication isn't paramount, a more cost-effective AI solution is highly desirable. gpt-4o mini addresses this by offering a significant performance-to-cost ratio for certain tasks.

- Faster Inference for Specific Tasks: While GPT-4o boasts impressive general speed, smaller models can often achieve even faster inference times for less complex tasks or when deployed in environments with limited computational resources, such as edge devices.

- Broadening Access: By providing more affordable and efficient models, OpenAI can make advanced AI capabilities accessible to a broader range of developers, startups, and educational institutions who might otherwise be constrained by budget or technical complexity. This aligns with the goal of democratizing AI.

- Targeted Optimization: Smaller models can be specifically optimized for particular types of tasks, such as conversational AI or quick data processing, potentially outperforming larger models in those specialized niches due to their focused design.

gpt-4o mini: The Agile Multimodal Workhorse

gpt-4o mini is positioned as a highly efficient and more affordable variant of the flagship GPT-4o model. While it may not possess the absolute cutting-edge reasoning capabilities or the full breadth of nuanced understanding of its larger counterpart, it inherits the core multimodal architecture and retains a significant portion of its capabilities.

- What it is: gpt-4o mini is designed to be a compact, faster, and more cost-effective AI solution for a wide range of tasks that benefit from multimodal processing but don't require the maximal performance of GPT-4o. It is still capable of understanding and generating text, audio, and visual content, albeit with potentially reduced complexity or depth compared to the full model.

- Targeted Use Cases: This model is ideal for applications requiring high-volume processing where cost and speed are critical. Examples include:

- Basic customer support chatbots that need to understand voice commands and simple visual cues.

- Automated content moderation (identifying inappropriate content across text, image, and audio).

- Simple data extraction from documents containing both text and basic visuals.

- Personalized content recommendation systems that process user preferences across various media.

- Educational apps for basic language translation or interactive quizzes.

- Performance Trade-offs: The trade-off for its enhanced efficiency and lower cost typically involves slightly less sophisticated reasoning, a reduced capacity for extremely complex cross-modal tasks, or a potentially smaller context window. However, for the vast majority of common applications, gpt-4o mini still offers a substantial upgrade over older, unimodal models.

chatgpt 4o mini: The Conversational Multimodal Specialist

chatgpt 4o mini is a further specialization, explicitly optimized for conversational AI applications. While gpt-4o mini offers general multimodal capabilities, chatgpt 4o mini refines these for the unique demands of real-time, engaging dialogue.

- Focus on Conversational AI: This model is fine-tuned to excel in chat-based interactions, understanding turn-taking, maintaining conversational context, and generating highly natural and relevant responses. It leverages the multimodal core of gpt-4o to interpret not just spoken words but also tone, implicit visual cues in a video call, or user intent from subtle facial expressions.

- Enhanced for Chat Applications: chatgpt 4o mini is perfect for:

- Interactive virtual assistants that can understand complex spoken queries and provide visually rich responses.

- Intelligent customer support agents that can seamlessly switch between voice and text, and interpret screenshots of customer issues.

- Personalized learning companions that engage students in dynamic, multimodal dialogues.

- Accessibility tools that provide richer, more intuitive conversational interfaces.

- User Experience: The goal of chatgpt 4o mini is to deliver a highly responsive and human-like conversational experience at a practical cost, making advanced AI dialogue more widely deployable.

Strategic Importance: Democratizing Access and Fostering Innovation

The introduction of gpt-4o mini and chatgpt 4o mini is a strategic move by OpenAI to ensure that the transformative power of multimodal AI is not limited to large enterprises or research institutions. By offering models that balance advanced capabilities with accessibility and efficiency, they are:

- Lowering the Barrier to Entry: More developers and organizations, including startups and individual innovators, can experiment with and deploy cutting-edge multimodal AI without prohibitive costs or computational demands.

- Encouraging Broader Adoption: These models facilitate the integration of AI into a wider array of consumer products, educational tools, and business solutions.

- Fostering Diverse Innovation: Developers can now build new applications that leverage multimodal interactions for specific, focused tasks, leading to a more diverse and innovative ecosystem of AI-powered products.

The table below summarizes the key distinctions and typical use cases for GPT-4o, gpt-4o mini, and chatgpt 4o mini, illustrating their complementary roles in the multimodal AI landscape:

| Feature/Metric | GPT-4o | GPT-4o Mini | ChatGPT 4o Mini |
|---|---|---|---|
| Core Capability | Flagship, highest performance native multimodal AI. | Cost-effective, agile multimodal model. | Optimized for conversational multimodal interactions. |
| Complexity Handled | Most complex reasoning, nuanced multimodal understanding. | Good for moderately complex multimodal tasks. | Excellent for complex, fluid human-AI conversations. |
| Latency | Very low (avg. 320ms for audio). | Low, often faster for specific, simpler tasks. | Very low, optimized for real-time dialogue. |
| API Cost | Higher (but 50% cheaper than GPT-4T). | Significantly lower than GPT-4o. | Competitive pricing for conversational use cases. |
| Typical Use Cases | Advanced research, high-end creative tools, complex enterprise solutions, sophisticated assistants. | High-volume automated tasks, basic customer service, content moderation, general multimodal processing. | AI chatbots, virtual assistants, language learning, interactive guides, customer support. |
| Primary Advantage | Unparalleled multimodal depth and flexibility. | Accessibility, cost-efficiency for broad applications. | Highly natural, engaging, and efficient conversational AI. |

These "mini" versions ensure that the groundbreaking advancements of GPT-4o are not confined to a niche, but become foundational elements in the everyday development and deployment of intelligent applications, truly democratizing the future of multimodal AI.
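One way to act on these trade-offs is a small routing rule that sends each workload to the cheapest tier likely to handle it. The sketch below is a hypothetical rule of thumb, not an official guideline: the `Workload` fields, the 1-to-5 complexity rating, and the volume threshold are all assumptions, and it covers only the two API model identifiers `gpt-4o` and `gpt-4o-mini`.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    complexity: int  # 1 (simple) to 5 (hardest); a hypothetical rating
    monthly_requests: int


def pick_model(workload: Workload, high_volume: int = 1_000_000) -> str:
    """Pick a tier with a hypothetical rule of thumb, not an official guideline."""
    if workload.complexity >= 4:
        return "gpt-4o"  # hardest tasks justify the flagship's cost
    if workload.complexity == 3 and workload.monthly_requests < high_volume:
        return "gpt-4o"  # mid-complexity work is affordable at low volume
    return "gpt-4o-mini"  # everything else favors cost and speed


workloads = [
    Workload("contract analysis", complexity=5, monthly_requests=20_000),
    Workload("support triage", complexity=3, monthly_requests=5_000_000),
    Workload("content moderation", complexity=2, monthly_requests=50_000_000),
]
for w in workloads:
    print(f"{w.name}: {pick_model(w)}")
```

A real deployment would tune the thresholds against measured quality and spend, but even a simple rule like this keeps the flagship model reserved for the work that needs it.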

The Ethical Implications and Future Outlook of GPT-4o

As GPT-4o ushers in a new era of deeply integrated, multimodal AI, it simultaneously brings to the forefront a complex web of ethical considerations and societal implications. The power to understand and generate information across text, audio, and video with human-like fluidity carries immense potential for good, but also significant risks that demand careful foresight, robust safeguards, and ongoing public discourse.

Safety and Bias: Navigating the Complexities of Multimodal AI

OpenAI, like other leading AI developers, has publicly committed to responsible AI development, emphasizing safety, transparency, and fairness. With GPT-4o, these commitments are more critical than ever due to its enhanced capabilities:

- Bias Amplification: Multimodal models are trained on vast datasets reflecting the real world, which inherently contain human biases (racial, gender, cultural, socio-economic). GPT-4o’s ability to process and generate nuanced visual and audio content means it could potentially amplify these biases in new and insidious ways. For example, if training data over-represents certain demographics in specific roles, the model might inadvertently perpetuate stereotypes when generating images or responding to queries. Addressing this requires continuous auditing of training data, robust bias detection mechanisms, and post-deployment monitoring.

- Misinformation and Deepfakes: The capacity for GPT-4o to generate highly realistic audio and visual content, seamlessly integrated with text, raises serious concerns about the proliferation of misinformation, deepfakes, and synthetic media. Fabricated videos and audio can be used to spread propaganda, defame individuals, or manipulate public opinion. Developing robust detection methods for AI-generated content, promoting digital literacy, and implementing watermarking or provenance tracking technologies are crucial countermeasures.

- Privacy Concerns: The real-time processing of audio and visual streams, especially in interactive applications, poses significant privacy challenges. How is sensitive personal data handled? What are the consent mechanisms? Data anonymization, secure processing, and strict adherence to privacy regulations (like GDPR and CCPA) are paramount.

- Security Vulnerabilities: The power of GPT-4o could be exploited for malicious purposes, such as sophisticated phishing attacks that leverage personalized audio or video to impersonate trusted individuals, or automated social engineering campaigns. Robust security protocols and vigilance against emerging threats will be essential.

- Hallucination in Multimodal Contexts: While LLMs are known to "hallucinate" (generate factually incorrect information), multimodal models present new dimensions to this problem. A model might generate visually plausible but factually incorrect images, or combine auditory and visual information in a misleading way. This underscores the importance of human oversight and validation, especially in critical applications.

OpenAI's approach to safety includes extensive red teaming – actively trying to break the model and uncover its vulnerabilities – and implementing safety filters and guardrails. However, the rapidly evolving nature of AI means these efforts must be continuous and adaptive.
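To make the provenance-tracking countermeasure mentioned above more concrete, here is a minimal sketch of one possible approach: attaching a keyed signature (HMAC-SHA256) to generated content so that later tampering can be detected. This is an illustrative assumption, not a description of how OpenAI or any particular provenance standard works; the function names and the record layout are hypothetical.

```python
import hashlib
import hmac


def sign_content(content: bytes, generator: str, secret_key: bytes) -> dict:
    """Return a provenance record binding the content hash to its generator."""
    digest = hashlib.sha256(content).hexdigest()
    message = f"{generator}:{digest}".encode("utf-8")
    tag = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    return {"generator": generator, "sha256": digest, "tag": tag}


def verify_content(content: bytes, record: dict, secret_key: bytes) -> bool:
    """Check that the content is unchanged and the record's tag is authentic."""
    digest = hashlib.sha256(content).hexdigest()
    message = f"{record['generator']}:{digest}".encode("utf-8")
    expected = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, record["tag"])
```

Because HMAC is symmetric, only parties holding the secret key can verify a record. A system meant for public verification would need asymmetric signatures instead, which this sketch does not cover.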

Societal Impact: Reshaping Work, Interaction, and Identity

The widespread adoption of GPT-4o and its derivatives will undoubtedly have profound societal impacts:

- Job Displacement and Augmentation: While concerns about AI replacing human jobs persist, it's more likely that GPT-4o will augment human capabilities. Repetitive or data-intensive tasks across creative, administrative, and technical fields can be automated, freeing up humans for more complex, creative, and interpersonal work. However, this shift requires investment in reskilling and education to ensure a just transition for the workforce.

- Evolving Human-Computer Interaction: GPT-4o pushes human-computer interaction into a more natural, intuitive realm. Conversing with AI will feel less like using a tool and more like interacting with a highly intelligent assistant, blurring the lines between digital and human communication. This could lead to new forms of social interaction and cognitive dependence.

- Cognitive Load and Decision Making: With AI assisting in more decision-making processes, understanding the limitations and biases of the AI becomes critical. Over-reliance on AI without critical evaluation could lead to "automation bias" or a reduction in human problem-solving skills.

- Defining AI Identity: As AI becomes more sophisticated in mimicking human interaction across modalities, questions about the "identity" of AI and the nature of human-AI relationships will inevitably arise. Clear identification of AI-generated content and transparent AI interactions will be crucial for maintaining trust and distinguishing between human and machine.

Future Developments: Towards More Integrated and Intelligent Systems

The launch of GPT-4o is not an endpoint but a significant milestone on the path to even more advanced AI. The future trajectory includes:

- Even More Seamless Integration: Future models will likely achieve even greater fluidity in processing and generating across modalities, potentially incorporating other sensory inputs like touch or smell, leading to a truly comprehensive understanding of the physical world.

- Personalization and Customization: We can expect highly personalized versions of these models, fine-tuned for individual preferences, specific industries, or even unique cognitive styles.

- Enhanced Reasoning and AGI: As models continue to improve their ability to reason across diverse forms of information, they may move closer to Artificial General Intelligence (AGI), meaning systems capable of performing any intellectual task a human can.

- Ethical AI Governance: The rapid pace of AI development necessitates equally rapid advancements in ethical AI governance, policy frameworks, and international collaboration to ensure these powerful technologies are developed and deployed for the benefit of all humanity.

In navigating this complex and exciting future, platforms like XRoute.AI will play an increasingly vital role. By providing a unified API platform that simplifies access to a rapidly expanding universe of LLMs – including advanced models like GPT-4o and its smaller counterparts – XRoute.AI allows developers and businesses to focus on innovation and ethical application rather than the intricate infrastructure of model integration. Its focus on low latency AI and cost-effective AI will be crucial for scaling these powerful multimodal solutions responsibly and efficiently, ensuring that the transformative potential of GPT-4o can be harnessed securely and effectively by a global community of innovators, fostering a future where AI truly empowers and enhances human endeavor.

Conclusion: A Leap Towards Human-Like AI Interaction

The introduction of GPT-4o stands as a landmark achievement in the ongoing saga of artificial intelligence, representing a pivotal moment in our quest to build machines that can truly understand and interact with the world in a human-like fashion. By pioneering a natively multimodal architecture, GPT-4o has transcended the limitations of its predecessors, seamlessly integrating text, audio, and visual processing within a single, unified neural network. This "omni" approach has not only dramatically reduced latency, making real-time conversational AI a tangible reality, but has also unlocked unprecedented levels of accuracy, nuance, and cross-modal reasoning.

The implications of this breakthrough are vast and far-reaching. From revolutionizing creative industries and transforming educational paradigms to streamlining enterprise operations and enhancing daily life, GPT-4o's versatility promises to redefine our interaction with technology. Its ability to perceive emotional cues in speech, interpret complex visual information, and generate contextually rich responses opens up new frontiers for personalized assistance, sophisticated content creation, and highly intuitive user experiences.

Furthermore, OpenAI's strategic deployment of more accessible derivatives like gpt-4o mini and chatgpt 4o mini underscores a commitment to democratizing this powerful technology. These specialized models ensure that the benefits of multimodal AI – particularly its low-latency and cost-effective advantages – can be leveraged across a broader spectrum of applications and by a wider community of developers and businesses. By offering tailored solutions, they enable widespread adoption, fostering an ecosystem of diverse and innovative AI-powered products and services.

Yet, with such immense power comes profound responsibility. The ethical considerations surrounding bias, misinformation, privacy, and societal impact demand continuous vigilance, robust safety measures, and open dialogue. As GPT-4o pushes the boundaries of AI, it also compels us to reflect on the future of human-computer interaction and the critical importance of responsible development.

In this rapidly evolving landscape, platforms like XRoute.AI emerge as essential enablers, simplifying the integration of advanced LLMs like GPT-4o into real-world applications. By offering a unified API platform that provides a single, OpenAI-compatible endpoint to over 60 AI models, XRoute.AI empowers developers to focus on innovation rather than infrastructure, ensuring that the transformative potential of GPT-4o can be harnessed efficiently, cost-effectively, and securely.

GPT-4o is more than just an advanced AI model; it is a testament to human ingenuity and a powerful harbinger of a future where AI acts as a truly intelligent, empathetic, and integrated partner in our daily lives. As we continue to explore its capabilities and navigate its challenges, one thing is clear: the era of truly multimodal AI has arrived, and it promises to reshape our world in ways we are only just beginning to imagine.

Frequently Asked Questions (FAQ)

1. What is GPT-4o's main advantage over previous models like GPT-4 Turbo?

GPT-4o's main advantage is its native multimodal architecture, meaning it processes and generates text, audio, and vision directly within a single neural network. This allows for significantly lower latency in real-time interactions (average 320ms for audio responses), a more holistic understanding of context across modalities, and enhanced performance in generating human-like speech and interpreting visual cues. Previous models handled these tasks through separate, slower processing pipelines.

2. How does gpt-4o mini differ from the full GPT-4o model?

gpt-4o mini is a more compact, faster, and significantly more cost-effective version of the flagship GPT-4o. While it retains a substantial portion of GPT-4o's multimodal capabilities, it's optimized for high-volume, moderately complex tasks where extreme sophistication isn't required. This makes it ideal for broader accessibility and integration into a wider range of applications with budget or speed constraints.

3. Can GPT-4o understand emotions from audio/video inputs?

Yes, GPT-4o is designed with enhanced capabilities to understand nuances in human communication. This includes discerning emotional tone from audio inputs and interpreting subtle visual cues (like facial expressions) from video, allowing it to provide more empathetic and contextually aware responses. This contributes significantly to a more natural and human-like interaction experience.

4. Is GPT-4o available for everyone?

GPT-4o is available to developers through OpenAI's API, and its capabilities are being integrated into ChatGPT for general users, including free tiers with certain usage limits. OpenAI aims to make its advanced models broadly accessible, often through tiered pricing structures and more accessible versions like gpt-4o mini and chatgpt 4o mini.

5. How can developers integrate GPT-4o into their applications?

Developers can integrate GPT-4o through OpenAI's API, which provides endpoints for accessing its multimodal functionalities. For streamlined integration, especially when managing multiple AI models or requiring high throughput and low latency, platforms like XRoute.AI offer a unified API platform. XRoute.AI simplifies access to GPT-4o and over 60 other AI models from various providers through a single, OpenAI-compatible endpoint, making development and deployment of AI-driven applications significantly easier and more cost-effective.

🚀 You can securely and efficiently connect to over 60 large language models through XRoute.AI in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.
2. Upon registration, explore the platform.
3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

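    # Assumes your XRoute API KEY is stored in the shell variable $apikey.
    # The Authorization header uses double quotes so the shell expands $apikey.
    curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
      --header "Authorization: Bearer $apikey" \
      --header 'Content-Type: application/json' \
      --data '{
        "model": "gpt-5",
        "messages": [
          {
            "content": "Your text prompt here",
            "role": "user"
          }
        ]
      }'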

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.
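For applications written in Python, the same request can be sketched with the standard library alone. This is a minimal illustration rather than an official client: the endpoint URL and model name are copied from the curl sample, the `XROUTE_API_KEY` environment variable and the function names are hypothetical, and the response parsing assumes the usual OpenAI-compatible shape (`choices[0].message.content`).

```python
import json
import os
import urllib.request

# Endpoint taken from the curl sample above.
XROUTE_URL = "https://api.xroute.ai/openai/v1/chat/completions"


def build_request(prompt: str, model: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions POST request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        XROUTE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_reply(response_body: dict) -> str:
    """Pull the assistant text out of an OpenAI-style response body."""
    return response_body["choices"][0]["message"]["content"]


def ask(prompt: str, model: str = "gpt-5") -> str:
    """Send the request; requires network access and a valid key."""
    api_key = os.environ["XROUTE_API_KEY"]  # hypothetical variable name
    request = build_request(prompt, model, api_key)
    with urllib.request.urlopen(request, timeout=30) as response:
        return extract_reply(json.load(response))
```

Keeping request construction and response parsing separate from the network call makes both easy to check offline. Calling `ask("Your text prompt here")` performs the actual round trip once the key is set.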

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.