GPT-5: Unlocking the Future of AI

The realm of artificial intelligence is in a perpetual state of acceleration, continuously pushing the boundaries of what machines can perceive, understand, and create. In this rapidly evolving landscape, few developments capture the collective imagination quite like the prospect of a new, more powerful iteration of a foundational model. As we stand at the precipice of another transformative leap, the anticipation surrounding GPT-5 is palpable. Following the groundbreaking successes of its predecessors, particularly GPT-4, the next generation of OpenAI's large language model is poised not merely to refine existing capabilities but to fundamentally redefine our interaction with intelligent systems. This article embarks on an extensive exploration of what GPT-5 might entail, dissecting its potential innovations, the profound impact it could have across diverse industries, the technical challenges that lie ahead, and the broader societal implications of such an advanced AI. Prepare to journey into a future where conversational intelligence reaches unprecedented levels of sophistication, where the distinction between human and artificial understanding begins to blur in fascinating and sometimes challenging ways.

The Legacy of GPT: A Brief History and Evolution

To truly appreciate the impending arrival of GPT-5, it is essential to contextualize it within the rich tapestry of the Generative Pre-trained Transformer series. OpenAI's journey began with a bold vision: to build safe and beneficial artificial general intelligence. The GPT series has been a cornerstone of this endeavor, demonstrating consistent, exponential progress.

The initial GPT models, GPT-1 and GPT-2, laid the foundational groundwork. GPT-1 applied generative pre-training to the Transformer architecture, which had been introduced a year earlier in the 2017 paper "Attention Is All You Need" and which uses self-attention mechanisms to weigh the importance of different tokens in an input sequence. This architecture allowed models to capture long-range dependencies in text much more effectively than previous recurrent neural networks. GPT-2, while controversial at its release due to its impressive text generation capabilities and concerns about misuse, significantly scaled up the model parameters and training data, showcasing astonishing fluency and coherence across a wide array of text generation tasks. It suggested that simply scaling up transformers could lead to emergent capabilities previously thought to require more explicit architectural designs.

GPT-3 marked another monumental leap. With 175 billion parameters, it dwarfed its predecessors and demonstrated remarkable few-shot and zero-shot learning abilities. This meant the model could perform tasks it hadn't been explicitly trained for, often with just a few examples or even none at all. It revolutionized the understanding of what a pre-trained language model could achieve, turning it into a versatile engine for summarization, translation, question-answering, and even code generation. Its broad applicability sparked a wave of innovation, inspiring countless developers and researchers to explore new frontiers in AI.

Then came GPT-4, a testament to OpenAI's continuous pursuit of excellence and safety. Although OpenAI did not disclose its parameter count, GPT-4 demonstrated significant improvements in reliability, creativity, and the ability to handle much more nuanced instructions than GPT-3.5. Its most significant public-facing advancements were its enhanced reasoning capabilities and, crucially, its initial foray into multimodality, allowing it to process not just text but also images (though this feature was not widely available at launch). GPT-4 became the backbone of more sophisticated applications, delivering more accurate and contextually relevant responses, reducing hallucinations, and proving invaluable in complex problem-solving scenarios. It further solidified the dominance of the transformer architecture and the scaling hypothesis in AI development. The version of ChatGPT powered by GPT-4 quickly became a household name, showcasing the practical utility and transformative potential of these models in everyday life, from creative writing to complex data analysis.

The evolution from GPT-1 to GPT-4 represents not just an increase in scale but a deepening of understanding, a refinement of reasoning, and an expansion of utility. Each iteration has chipped away at the limitations of the last, pushing us closer to truly intelligent and adaptable AI. It is against this backdrop of exponential progress that the anticipation for GPT-5 takes on such profound significance. We are not merely expecting an incremental upgrade; we are looking towards a potential paradigm shift that builds on this formidable legacy to unlock capabilities previously confined to the realm of science fiction. The journey so far has been nothing short of extraordinary, and as we look ahead to GPT-5, we do so with a mixture of excitement, trepidation, and a profound sense of wonder at the boundless possibilities that lie before us.

Anticipated Innovations and Breakthroughs in GPT-5

The whispers and informed speculations surrounding GPT-5 suggest a model that transcends the current state of the art, promising advancements that could fundamentally alter how we interact with and utilize artificial intelligence. While concrete details remain under wraps, a synthesis of research trends, community discussions, and the evolutionary trajectory of the GPT series allows us to paint a vivid picture of its potential breakthroughs.

Scale and Parameters: Beyond Imagination

One of the most immediate and expected advancements for GPT-5 is its sheer scale. While GPT-4's parameter count was kept private, it is widely believed to be significantly larger than GPT-3's 175 billion. GPT-5 is anticipated to push these boundaries even further, potentially reaching trillions of parameters. This increase in scale is not just about raw power; it implies a far deeper understanding of language, more nuanced contextual awareness, and the ability to learn from an even broader and more diverse dataset. With more parameters, the model can encode more complex patterns and relationships, leading to more sophisticated reasoning and generation capabilities. The scaling hypothesis, the idea that larger models trained on more data keep getting more capable, has been a driving force in large language model development, and GPT-5 is expected to be its most compelling demonstration yet, allowing for an unprecedented level of detail and coherence in its outputs.
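To make these parameter counts concrete, the sketch below uses a common back-of-the-envelope rule for decoder-only Transformers: roughly 12 × d_model² weights per layer. The function name is illustrative, the GPT-3 figures are its published configuration, and the second configuration is purely hypothetical; nothing here reflects an actual GPT-5 specification.

```python
def estimate_decoder_params(n_layers: int, d_model: int, vocab_size: int = 0) -> int:
    """Rough parameter count for a decoder-only Transformer.

    Each layer holds about 4*d_model^2 attention weights (query, key, value
    and output projections) plus 8*d_model^2 feed-forward weights (two
    matrices with a 4x hidden expansion), i.e. roughly 12*d_model^2.
    Biases and layer norms are ignored. The token-embedding table is only
    counted when vocab_size is given.
    """
    per_layer = 12 * d_model * d_model
    embeddings = vocab_size * d_model
    return n_layers * per_layer + embeddings


# Published GPT-3 configuration: 96 layers, d_model = 12288.
gpt3 = estimate_decoder_params(n_layers=96, d_model=12288)
print(f"GPT-3 estimate: {gpt3 / 1e9:.1f}B parameters")  # close to the quoted 175B

# Hypothetical larger configuration, for illustration only (not a GPT-5 spec).
bigger = estimate_decoder_params(n_layers=128, d_model=25600)
print(f"Hypothetical model: {bigger / 1e12:.2f}T parameters")
```

The estimate lands within about one percent of GPT-3's quoted 175 billion, which is why the rule is handy for sanity-checking claims about "trillions of parameters": reaching that range requires both many more layers and a much wider model.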

True Multimodality: Perceiving and Generating Across Senses

While GPT-4 hinted at multimodality, particularly with its image understanding capabilities, GPT-5 is expected to fully embrace it, moving beyond text-centric interactions. Imagine an AI that can not only comprehend and generate natural language but also understand and create images, video, audio, and even 3D models with equal fluency. This would mean:

* Visual Reasoning: The ability to analyze complex images or video feeds, interpret their content, identify objects, understand spatial relationships, and even infer emotional states or intentions from visual cues, then respond intelligently in text or even generate new visual content.
* Audio Processing and Generation: Understanding spoken language with high accuracy, distinguishing nuances in tone and emotion, and generating lifelike synthetic voices or even musical compositions that align with specific creative prompts.
* Unified Understanding: A single model that can seamlessly transition between different modalities, drawing insights from a video, summarizing it in text, and then generating a relevant image, all within a coherent dialogue.

A truly multimodal, GPT-5-powered ChatGPT would unlock entirely new forms of interaction and creativity.

Enhanced Reasoning and Logic: Beyond Pattern Matching

Current LLMs are extraordinary pattern matchers, but they often struggle with true common sense reasoning, abstract thought, and complex logical deductions. GPT-5 is expected to make significant strides in these areas. This could manifest as:

* Deeper Causal Understanding: Moving beyond correlation to grasp genuine cause-and-effect relationships, enabling it to better explain phenomena and predict outcomes.
* Abstract Problem Solving: Tackling complex scientific problems, mathematical proofs, or strategic planning scenarios with greater accuracy and insight, perhaps even proposing novel solutions.
* Common Sense Reasoning: Navigating the implicit rules and understandings of the real world, reducing nonsensical outputs and making its interactions feel far more intuitive and "human-like." This capability would be crucial for a more reliable and trustworthy GPT-5-powered ChatGPT.

Long-Context Understanding: Sustained Coherence

One of the limitations of previous models has been their context window: the amount of text they can "remember" and process at any given time. While GPT-4 expanded this significantly, GPT-5 is anticipated to offer far larger context windows, potentially allowing it to understand and generate entire novels, extensive research papers, or prolonged multi-turn conversations with sustained coherence and accuracy. This capability would be transformative for:

* Complex Document Analysis: Summarizing, analyzing, and extracting information from vast amounts of unstructured data, like legal briefs, scientific literature, or entire company archives.
* Personalized Learning: Engaging in long-term tutoring relationships, remembering a student's progress, strengths, and weaknesses over weeks or months.
* Advanced Conversational Agents: Maintaining context across hours-long discussions, making ChatGPT interactions feel natural and consistent, akin to speaking with an attentive human.
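A context window is measured in tokens rather than words, so it helps to see what "an entire novel" means in those terms. The sketch below assumes the widely used rule of thumb that one token is about three quarters of an English word; the function names, the 90,000-word novel, and the 1,000,000-token window are illustrative assumptions, not published GPT-5 figures.

```python
import math

# Rule of thumb for English text: 1 token is roughly 0.75 words.
# Real tokenizers vary with language and content, so treat this as approximate.
WORDS_PER_TOKEN = 0.75


def estimated_tokens(word_count: int) -> int:
    """Approximate number of tokens needed to encode `word_count` words."""
    return math.ceil(word_count / WORDS_PER_TOKEN)


def fits_in_context(word_count: int, context_window: int,
                    reserve_for_output: int = 0) -> bool:
    """True if the text, plus room reserved for the reply, fits in the window."""
    return estimated_tokens(word_count) + reserve_for_output <= context_window


novel_words = 90_000  # a typical full-length novel (assumed figure)
print(estimated_tokens(novel_words))            # about 120,000 tokens
print(fits_in_context(novel_words, 32_768))     # False: too long for a 32K window
print(fits_in_context(novel_words, 1_000_000))  # True for a hypothetical 1M window
```

For an exact count, a real tokenizer should replace the word-based estimate; the point here is only the order of magnitude.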

Reduced Hallucinations and Increased Factual Accuracy

A persistent challenge for LLMs is their tendency to "hallucinate", that is, to generate plausible-sounding but factually incorrect information. While GPT-4 made improvements, GPT-5 is expected to further mitigate this issue. This could be achieved through:

* Improved Grounding Mechanisms: Better integration with real-world data, knowledge graphs, and verifiable sources, allowing the model to cross-reference its generations.
* Enhanced Self-Correction: The ability for the model to identify potential factual inaccuracies in its own outputs and refine them, perhaps through internal deliberation or external verification processes.
* Uncertainty Quantification: The ability to express confidence levels in its answers, informing users when information is less certain or speculative.
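One simple way to approximate the uncertainty quantification described above with today's models is to ask the same question several times and measure how often the answers agree. The sketch below is a minimal version of that idea, not a description of how GPT-5 would do it; `ask` stands in for any function that sends a prompt to a model, and `fake_model` is a hypothetical stub so the example runs without network access.

```python
from collections import Counter
from typing import Callable, Tuple


def answer_with_confidence(ask: Callable[[str], str], question: str,
                           n_samples: int = 5) -> Tuple[str, float]:
    """Sample the model several times and return the majority answer
    together with the fraction of samples that agreed with it."""
    answers = [ask(question).strip().lower() for _ in range(n_samples)]
    best, count = Counter(answers).most_common(1)[0]
    return best, count / n_samples


# Hypothetical stand-in for a real model call: replays canned replies.
canned = iter(["Paris", "paris", "Lyon", "Paris ", "Paris"])


def fake_model(prompt: str) -> str:
    return next(canned)


answer, confidence = answer_with_confidence(fake_model, "What is the capital of France?")
print(answer, confidence)  # paris 0.8
```

Agreement between samples is only a proxy: a model can be consistently wrong, so a high score signals stability rather than a calibrated probability of being correct.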

Personalization and Adaptability: Tailored AI Experiences

GPT-5 could herald a new era of highly personalized AI. Imagine an AI that truly understands your preferences, learning style, and specific needs over time, adapting its responses and capabilities accordingly. This could involve:

* Continuous Learning: The model continually refines its understanding based on your interactions, making each experience more relevant than the last.
* Adaptive Persona: The ability to adopt different tones, styles, and expertise levels based on the context or your explicit request, making a GPT-5-powered ChatGPT a highly versatile tool.
* Proactive Assistance: Anticipating your needs and offering relevant information or actions before you explicitly ask, moving from reactive to proactive assistance.

Ethical AI and Safety: A Central Design Principle

As AI models become more powerful, the imperative for ethical design and robust safety measures grows with them. GPT-5 is expected to incorporate advanced mechanisms for:

* Bias Mitigation: More sophisticated techniques to identify and reduce biases inherited from training data, ensuring fairer and more equitable outputs.
* Safety Alignment: More robust safeguards against generating harmful, hateful, or misleading content, with improved control over undesirable outputs.
* Transparency and Explainability: While still a challenge for large neural networks, efforts will likely be made to provide some level of insight into the model's decision-making process, fostering greater trust.

The leap to GPT-5 is not merely an incremental step; it represents a profound evolution in artificial intelligence. From its scale and true multimodality to its enhanced reasoning and ethical considerations, GPT-5 promises to be a transformative force, reshaping industries, inspiring creativity, and challenging our very definitions of intelligence. The potential for a GPT-5-powered ChatGPT experience that is hard to distinguish from highly intelligent human interaction, capable of profound understanding and complex problem-solving, is both exhilarating and a testament to the relentless pace of AI innovation.

Potential Applications Across Industries – What GPT-5 Could Transform

The arrival of GPT-5, with its anticipated enhancements, is poised to trigger a ripple effect across virtually every sector, ushering in an era of unprecedented automation, intelligence, and personalized experiences. Its multimodality, advanced reasoning, and expansive context window will move AI from a specialized tool to a ubiquitous, integrated intelligence that augments human capabilities in profound ways.

Healthcare: Revolutionizing Diagnosis, Treatment, and Research

In healthcare, GPT-5 could be a game-changer. Imagine an AI capable of:

* Accelerated Diagnosis: Analyzing vast amounts of patient data, including medical images, genomic sequences, electronic health records, and even conversational symptoms (from a ChatGPT-style interface), to identify subtle patterns indicative of diseases, potentially earlier and with greater accuracy than human physicians alone.
* Personalized Treatment Plans: Tailoring drug regimens, therapy approaches, and preventive care strategies based on an individual's unique biological makeup, lifestyle, and response to previous treatments, significantly enhancing precision medicine.
* Drug Discovery and Development: Rapidly sifting through chemical compounds, simulating molecular interactions, and predicting efficacy and side effects, drastically reducing the time and cost associated with bringing new medicines to market.
* Patient Engagement and Education: Providing empathetic and accurate information to patients, answering complex medical questions, and guiding them through treatment plans, potentially even offering mental health support through intelligent conversational interfaces.
* Medical Research: Automatically synthesizing findings from millions of research papers, identifying gaps in knowledge, and even generating hypotheses for new studies, accelerating scientific discovery.

Education: Personalizing Learning and Empowering Educators

The education sector stands to be profoundly transformed by GPT-5, offering a truly personalized learning experience:

* Intelligent Tutors: Acting as an infinitely patient and knowledgeable tutor, adapting its teaching style to each student's learning pace, preferences, and knowledge gaps, offering explanations in multiple modalities (text, visuals, audio).
* Content Creation: Generating bespoke educational materials, exercises, and assessments tailored to specific curricula or individual student needs, including interactive simulations and virtual learning environments.
* Research Assistance: Helping students and academics sift through vast libraries of information, summarize complex theories, and formulate research questions, significantly streamlining academic work.
* Language Learning: Providing immersive and highly contextualized language practice, correcting grammar, pronunciation, and even cultural nuances in real-time conversations. A GPT-5-powered chatbot specialized for language exchange could make fluency far easier to reach.

Creative Arts: Unlocking New Dimensions of Expression

GPT-5 will not just automate; it will also empower human creativity:

* Advanced Content Generation: Moving beyond text, the model could generate high-quality music compositions across genres, intricate visual art pieces, cinematic storyboards, and even fully realized short films based on detailed prompts.
* Creative Collaboration: Acting as a co-creator, brainstorming ideas, suggesting plot twists for novelists, composing harmonies for musicians, or generating visual concepts for designers, pushing artistic boundaries.
* Personalized Entertainment: Creating dynamic, interactive narratives that adapt in real-time to user choices, or generating unique digital art pieces and musical scores on demand.
* Restoration and Archiving: Digitally restoring damaged historical texts, photographs, or audio recordings with unprecedented accuracy, preserving cultural heritage.

Software Development: Automating and Innovating the Codebase

For developers, GPT-5 promises to be an indispensable assistant:

* Automated Code Generation and Debugging: Generating complex code snippets, entire functions, or even complete applications from natural language descriptions. It could also identify and fix bugs, suggest optimizations, and refactor code with human-level understanding.
* Intelligent Programming Assistants: Offering real-time coding suggestions, documentation generation, and even explaining complex codebases in simple terms, making GPT-5 an essential tool for developers.
* Natural Language Interfaces for Software: Enabling users to interact with software and data through natural language commands, democratizing access to complex tools and functionalities.
* System Design and Architecture: Assisting in the conceptualization and design of complex software systems, evaluating different architectural choices, and identifying potential bottlenecks.

Customer Service & Sales: Hyper-Personalized and Proactive Engagement

The customer experience will be profoundly transformed:

* Hyper-Personalized Support: AI agents powered by GPT-5 that understand customer history, preferences, and emotional state, providing empathetic, context-aware, and highly effective support across all channels.
* Proactive Problem Resolution: Identifying potential customer issues before they arise, for example by predicting equipment failures or service interruptions, and proactively offering solutions.
* Intelligent Sales & Marketing: Generating personalized marketing content, identifying high-potential leads, and assisting sales representatives with tailored product recommendations and objection handling in real-time.
* Multilingual Global Support: Providing near-instantaneous, highly accurate, and culturally nuanced communication across languages, breaking down international barriers for businesses.

Research & Development: Accelerating Scientific Discovery

Beyond specific industries, GPT-5's ability to process and synthesize information will accelerate R&D across the board:

* Hypothesis Generation: Identifying novel connections between disparate research fields, suggesting new avenues for scientific inquiry, and generating testable hypotheses.
* Data Analysis and Interpretation: Automatically processing and interpreting massive, complex datasets from experiments, simulations, or observations, identifying trends and insights that might be missed by human analysis.
* Automated Experiment Design: Suggesting optimal experimental parameters, designing sequences of experiments, and even simulating their outcomes to refine methodologies before costly physical trials.

Robotics & Automation: Towards Truly Intelligent Machines

The integration of GPT-5 into robotics could lead to a new generation of intelligent, adaptable machines:

* Natural Language Control: Allowing humans to interact with robots using intuitive spoken commands, leading to more flexible and user-friendly automation in manufacturing, logistics, and domestic settings.
* Enhanced Situational Awareness: Robots equipped with GPT-5 could better interpret complex environments, understand human intentions, and adapt their actions in real-time, leading to safer and more efficient human-robot collaboration.
* Adaptive Task Execution: Robots capable of learning new tasks from demonstrations or verbal instructions, and even planning complex sequences of actions to achieve goals in dynamic environments.

The transformative power of GPT-5 lies in its ability to understand, reason, and create across modalities and contexts, making it an unprecedented tool for innovation. Its applications are not merely an extension of current AI; they represent a fundamental shift in what we can achieve with artificial intelligence, blurring the lines between human intuition and machine capability.

To illustrate the anticipated leap, consider the following comparison:

| Feature/Capability | GPT-4 (Current State/Widely Available) | Anticipated GPT-5 Breakthroughs | Impact |
|---|---|---|---|
| Context Window | ~32K tokens (around 25,000 words) | >1M tokens (entire books, extended conversations, multi-document analysis) | Enables sustained, coherent interactions over hours/days; processes entire legal briefs, research archives; deeper narrative understanding. |
| Multimodality | Text input/output, limited image understanding (visual input to text output) | Full multimodal input/output (text, image, audio, video, 3D) | AI can truly "see, hear, and speak"; unified understanding across senses; advanced creative generation (e.g., generating video from text). |
| Reasoning & Logic | Strong logical reasoning for well-defined problems; some common sense | Advanced abstract reasoning, true common sense, causal inference | Solves complex scientific problems; understands nuanced social situations; fewer illogical "hallucinations"; explains "why" not just "what." |
| Factual Accuracy | Significantly improved over GPT-3.5, but still prone to "hallucinations" | Greatly reduced hallucinations; robust grounding in verifiable data | More reliable for critical applications (medical, legal, financial); increased trust in AI-generated information. |
| Personalization | Limited personalization based on immediate chat history | Deep, adaptive personalization over long periods; learns user preferences | AI acts as a dedicated, long-term assistant; highly tailored educational experiences; proactive task assistance. |
| Ethical Alignment/Safety | Improved safety filters, bias mitigation efforts | Proactive bias detection, advanced safeguards against misuse, ethical reasoning | Safer deployment in sensitive areas; more equitable and less harmful AI outputs; AI helps identify and mitigate ethical dilemmas. |
| Training Data | Massive text and code datasets | Vastly expanded, higher quality, diverse multimodal datasets | Deeper, broader knowledge base; more nuanced understanding of the world; better performance across all tasks. |
| Latency/Efficiency | Can be high for complex prompts | Optimized for low latency, higher throughput, more efficient inference | Real-time applications (e.g., live interpretation, instantaneous content generation); more cost-effective for large-scale deployments. |
| Creativity | High-quality text, code, basic image ideas | Novel artistic creations (music, complex visuals, interactive stories) | AI as a true creative partner, pushing the boundaries of human artistic expression; personalized, dynamic entertainment. |

The Technical Underpinnings and Challenges

The journey to GPT-5 is not without its formidable technical hurdles. Building an AI model of this predicted scale and capability pushes the boundaries of current computational science, demanding innovation across hardware, software, and fundamental AI research. Understanding these challenges provides crucial context for appreciating the monumental effort involved.

Architectural Enhancements: Beyond the Conventional Transformer?

While the Transformer architecture has proven incredibly powerful, simply scaling it indefinitely yields diminishing returns and increasing computational costs. For GPT-5, OpenAI researchers are likely exploring or implementing several architectural advancements:

* Sparse Attention Mechanisms: Traditional transformers use full self-attention, where every token attends to every other token, which becomes computationally prohibitive for very long sequences. Sparse attention reduces this quadratic complexity by allowing tokens to attend only to a subset of other tokens, making longer context windows feasible.
* Mixture-of-Experts (MoE) Models: This architecture allows the model to selectively activate only a portion of its parameters for any given input, significantly reducing the computational cost per inference while maintaining a massive total parameter count. This could be key to achieving the scale of GPT-5 without an equivalently massive increase in inference cost.
* Novel Positional Encodings: The Transformer relies on positional encodings to understand token order. As context windows grow, more effective ways to encode position for extremely long sequences become critical.
* Adaptive Computation: Developing architectures that can dynamically allocate computational resources based on the complexity of the input, ensuring efficiency for simpler tasks and allocating more power for harder problems.
* Multimodal Fusion Architectures: Designing neural network modules specifically engineered to integrate and process information from disparate modalities (text, vision, audio) rather than simply concatenating their embeddings. This involves intricate cross-attention mechanisms and unified representational spaces.
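Two of the ideas above, sparse attention and Mixture-of-Experts routing, can be illustrated with a few lines of plain Python. This is a toy sketch under stated assumptions: the sparse pattern shown is a causal sliding window (one of several known sparse patterns), the router is a simple top-k softmax gate, and all function names are illustrative. It says nothing about which variants, if any, GPT-5 would actually use.

```python
import math
from typing import List, Tuple


def full_attention_pairs(n_tokens: int) -> int:
    """Query-key pairs in causal full attention: token i sees tokens 0..i."""
    return n_tokens * (n_tokens + 1) // 2


def sliding_window_pairs(n_tokens: int, window: int) -> int:
    """Pairs when each token attends only to itself and the previous
    `window - 1` tokens (a simple sparse-attention pattern)."""
    if n_tokens <= window:
        return full_attention_pairs(n_tokens)
    return full_attention_pairs(window) + (n_tokens - window) * window


def top_k_route(router_logits: List[float], k: int = 2) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and softmax-normalize their weights.
    Only these k experts would run for the token; the rest stay idle."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]  # stable softmax
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


n, w = 100_000, 512
ratio = full_attention_pairs(n) / sliding_window_pairs(n, w)
print(f"Full attention does about {ratio:.0f}x more pair computations")

# Router scores for 4 hypothetical experts; experts 1 and 3 are selected.
print(top_k_route([0.1, 2.0, -1.0, 2.0], k=2))
```

The first half shows why quadratic cost dominates at long context lengths: full attention grows with the square of the sequence length, while the windowed variant grows linearly. The second half shows the MoE trade-off: total parameters scale with the number of experts, but per-token compute scales only with `k`.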

Training Data: Scale, Quality, and Ethical Sourcing

The performance of large language models is inextricably linked to the quality and quantity of their training data. For GPT-5, the demand for data will be unprecedented:

* Exponentially Larger Datasets: Moving beyond the current web-scale text corpora, GPT-5 will require an even larger collection of text, code, images, video, and audio from diverse sources to achieve true multimodality and deeper world knowledge. This includes highly curated datasets for specific reasoning tasks and safety alignment.
* Data Quality and Curation: The "garbage in, garbage out" principle becomes even more critical. Cleaning, filtering, and curating such vast multimodal datasets to remove noise, bias, and harmful content is an immense undertaking. This involves sophisticated automated processes combined with human oversight.
* Ethical Sourcing and Copyright: The ethical implications of scraping the internet for training data are a growing concern. OpenAI will face increasing scrutiny regarding copyright, data privacy, and consent. Developing methods to ethically source and compensate data creators, or training on licensed datasets, will be a major challenge. The risk of perpetuating or amplifying societal biases present in the data also necessitates careful data selection and augmentation strategies.

Computational Demands: Hardware, Energy, and Environmental Impact

The training of models like GPT-5 requires staggering computational resources, primarily in the form of graphics processing units (GPUs) or specialized AI accelerators:

* Hardware Infrastructure: Training GPT-5 could demand thousands, if not tens of thousands, of the most advanced AI chips (such as NVIDIA's H100 GPUs or custom accelerators like Google's TPUs), requiring massive data centers and robust interconnects for efficient distributed training.
* Energy Consumption: The energy required to train and run these models is immense, leading to significant carbon footprints. Optimizing model architectures for efficiency and developing more energy-efficient hardware are critical challenges for sustainable AI development.
* Cost: The financial cost of training GPT-5 will likely run into hundreds of millions, if not billions, of dollars, encompassing hardware acquisition, energy, and personnel. This places enormous pressure on organizations like OpenAI to secure substantial funding and ensure a return on investment through robust applications.
* Inference Costs: While training is the most computationally intensive single phase, deploying GPT-5 for inference (generating responses) at scale for millions of users still requires substantial resources. Optimizing models for faster, cheaper inference is crucial for widespread adoption and accessibility, especially for ChatGPT-style services.
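The scale of these demands can be estimated with a widely used approximation: training a dense Transformer costs about 6 × N × D floating-point operations, where N is the parameter count and D the number of training tokens. The sketch below applies it to GPT-3's published figures. The accelerator throughput and utilization values are round placeholder assumptions, not vendor specifications, and no GPT-5 figures are implied.

```python
SECONDS_PER_DAY = 86_400


def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute for a dense Transformer: about 6 * N * D."""
    return 6.0 * n_params * n_tokens


def gpu_days(total_flops: float, peak_flops_per_gpu: float,
             utilization: float) -> float:
    """Days of work for a single accelerator running at the given
    fraction of its peak throughput."""
    effective = peak_flops_per_gpu * utilization
    return total_flops / effective / SECONDS_PER_DAY


# GPT-3 as reported: 175B parameters trained on roughly 300B tokens.
flops = training_flops(175e9, 300e9)
print(f"Training compute: {flops:.2e} FLOPs")

# Assumed hardware: 1e15 FLOP/s peak per chip at 40% utilization (placeholders).
days = gpu_days(flops, peak_flops_per_gpu=1e15, utilization=0.4)
print(f"About {days:,.0f} accelerator-days, or {days / 1024:.1f} days on 1,024 chips")
```

Because the estimate is linear in both N and D, a model with ten times the parameters trained on ten times the data needs roughly a hundred times the compute, which is what drives the hardware, energy, and cost figures above.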

Fine-tuning and Alignment: Ensuring Safety and Utility

Training a powerful base model is only one part of the equation. Aligning it with human values and ensuring its safety and helpfulness is arguably the harder challenge:

* Reinforcement Learning from Human Feedback (RLHF) Evolution: GPT-4 significantly benefited from RLHF. For GPT-5, this process will need to become even more sophisticated, involving more diverse human annotators, more nuanced feedback mechanisms, and potentially more complex reward models to guide the AI towards desirable behaviors.
* Robustness to Adversarial Attacks: As models become more powerful, they also become more attractive targets for adversarial attacks designed to elicit harmful or biased responses. Developing robust defenses against attacks such as prompt injection and data poisoning is crucial.
* Controllability and Steerability: Giving users and developers more fine-grained control over the model's behavior, style, and safety parameters will be vital for its widespread and responsible application. This includes mechanisms for enforcing ethical guidelines, preventing misuse, and ensuring compliance with regulations.
* Explainability and Interpretability: Understanding why GPT-5 generates a particular output remains a significant challenge for large neural networks. Progress in this area is crucial for building trust, debugging errors, and ensuring accountability, especially in high-stakes applications like healthcare.
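The reward models mentioned above are typically trained on pairs of responses that human annotators have ranked. A standard formulation in published RLHF work is a pairwise loss, -log σ(r_chosen - r_rejected), which is small when the reward model scores the preferred response higher. The sketch below implements just that loss on scalar scores; the function name is illustrative, and whether GPT-5's alignment pipeline would use this exact objective is not known.

```python
import math


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """-log(sigmoid(reward_chosen - reward_rejected)), computed stably.

    Uses the identity -log(sigmoid(m)) = log(1 + exp(-m)) and avoids
    overflow when the margin m is a large negative number.
    """
    margin = reward_chosen - reward_rejected
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# The reward model agrees with the annotator: small loss.
print(round(pairwise_preference_loss(2.0, 0.0), 4))

# The reward model prefers the rejected response: large loss.
print(round(pairwise_preference_loss(0.0, 2.0), 4))

# No preference either way: loss equals log(2).
print(round(pairwise_preference_loss(1.0, 1.0), 4))
```

In a real pipeline the two rewards would come from a neural network scoring full responses, and the loss would be averaged over many annotated pairs; the trained reward model then supplies the signal for the reinforcement learning step.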

Ethical Considerations Revisited: Mitigating Societal Risks

Beyond the technical challenges, the development of GPT-5 brings renewed and heightened ethical concerns:

* Misinformation and Deepfakes: The ability to generate highly realistic text, images, and video could exacerbate the spread of misinformation, propaganda, and deepfake content, posing significant societal risks. Robust detection and mitigation strategies are paramount.
* Job Displacement: The enhanced capabilities of GPT-5 could automate a wider range of cognitive tasks, potentially leading to significant job displacement across various sectors. Society needs to prepare for this economic shift with education, reskilling programs, and social safety nets.
* Autonomous Decision-Making: As AI becomes more capable of reasoning and independent action, establishing clear ethical guidelines for its deployment in autonomous systems (e.g., self-driving cars, judicial systems) becomes critical.
* Existential Risks: The ultimate concern with advanced AI revolves around control and alignment. Ensuring that superintelligent AI systems remain aligned with human values and goals is an ongoing philosophical and technical challenge that OpenAI and the broader AI community are committed to addressing.

The development of GPT-5 is a grand scientific and engineering endeavor, pushing the limits of what is currently possible. Overcoming these technical and ethical challenges will require not only brilliant minds and immense resources but also a deep commitment to responsible innovation, collaboration, and a clear understanding of the societal implications of building increasingly powerful artificial intelligence.

Preparing for the GPT-5 Era – Strategies for Individuals and Businesses

The impending arrival of GPT-5 isn't just a technological event; it's a societal one that demands preparation from individuals, businesses, and policymakers alike. The models are becoming too powerful and pervasive to ignore, and those who proactively adapt will be best positioned to thrive in the forthcoming AI-accelerated world.

Upskilling and Reskilling: Adapting to New AI Tools

For individuals, the most crucial step is to embrace continuous learning and develop a robust "AI literacy."

* Understand AI's Capabilities and Limitations: Learn what AI can (and cannot) do. This involves understanding the basics of how LLMs work, their strengths in tasks like summarization, generation, and analysis, and their weaknesses, such as occasional inaccuracies or lack of true common sense.
* Develop Prompt Engineering Skills: As GPT-5 and GPT-5-powered chat assistants become more sophisticated, the ability to craft precise, effective prompts to elicit desired outputs will be a highly valued skill. This goes beyond simple queries to involve strategic thinking about context, persona, format, and iterative refinement.
* Focus on Complementary Skills: AI will automate many routine cognitive tasks. Humans will increasingly excel in areas that require uniquely human attributes: critical thinking, creativity, emotional intelligence, complex problem-solving, ethical reasoning, and interpersonal communication. Develop these skills to become an indispensable AI collaborator.
* Embrace AI as a Co-Pilot: View AI not as a replacement, but as a powerful assistant. Learn to integrate AI tools into your daily workflows to augment your productivity, creativity, and decision-making. Experiment with various AI applications relevant to your field.
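As a small illustration of the prompt engineering point, the sketch below assembles a prompt from the four ingredients named above: context, persona, format, and iterative refinement. The `PromptSpec` class and its field names are hypothetical, chosen only for this example; they are not part of any OpenAI or XRoute.AI API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of a structured prompt."""
    persona: str
    context: str
    task: str
    output_format: str
    revisions: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the parts into one prompt string, one labeled section each."""
        sections = [
            f"Persona: {self.persona}",
            f"Context: {self.context}",
            f"Task: {self.task}",
            f"Format: {self.output_format}",
        ]
        # Iterative refinement: feedback on earlier outputs is appended
        # as revision notes on the next attempt.
        if self.revisions:
            notes = "\n".join(f"- {note}" for note in self.revisions)
            sections.append("Revision notes:\n" + notes)
        return "\n\n".join(sections)


spec = PromptSpec(
    persona="You are a patient technical writer.",
    context="The audience is new to large language models.",
    task="Explain what a context window is.",
    output_format="Three short bullet points.",
)
first_prompt = spec.render()

# After reviewing the first answer, refine and render again.
spec.revisions.append("Avoid jargon such as 'tokenization'.")
second_prompt = spec.render()
print(second_prompt)
```

Keeping the parts separate makes it easy to change one element, such as the persona, and compare outputs without rewriting the whole prompt.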

Strategic Integration for Businesses: Identifying Opportunities

Businesses need to develop comprehensive AI strategies that go beyond mere experimentation to fundamental transformation.

* Identify High-Impact Use Cases: Don't just implement AI for AI's sake. Conduct thorough assessments to pinpoint specific business processes where GPT-5's capabilities (e.g., multimodality for customer service, enhanced reasoning for R&D, long context for legal analysis) can deliver significant value, efficiency gains, or competitive advantage.
* Pilot Projects and Iteration: Start with small, manageable pilot projects. This allows teams to gain hands-on experience, identify challenges, measure ROI, and iterate on their AI integration strategy before a wider rollout.
* Build an AI-Ready Data Strategy: High-quality data is the lifeblood of AI. Ensure your organization has robust data governance policies, clean and accessible data stores, and a strategy for continuously collecting and curating relevant information that can feed into and be processed by advanced models like GPT-5.
* Invest in Talent and Training: Upskill your existing workforce in AI literacy and prompt engineering. Consider hiring AI specialists who can bridge the gap between business needs and technological capabilities. Create an organizational culture that encourages experimentation and learning with AI.
* Develop Internal Ethical AI Frameworks: Proactively establish guidelines for the responsible use of AI within your organization, addressing concerns like data privacy, bias, transparency, and accountability. This builds trust with customers and employees and mitigates regulatory risks.

Leveraging Unified AI Platforms: Simplifying Access to the Future

The proliferation of powerful AI models from various providers, each with its own API and nuances, can create significant integration complexity for developers and businesses. This is where unified API platforms become indispensable.

For developers and organizations looking to harness the power of not just GPT-5 but a wide array of cutting-edge language models, managing multiple API connections can be a daunting and time-consuming task. Each model might have slightly different input/output formats, authentication methods, and rate limits, complicating the development of robust AI-driven applications. This is precisely the challenge that XRoute.AI addresses.

XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers, enabling seamless development of AI-driven applications, chatbots, and automated workflows. Imagine having the flexibility to switch between GPT-5, other advanced OpenAI models, or models from Anthropic, Google, and other leaders, all through a consistent interface.

With a focus on low-latency, cost-effective AI and developer-friendly tools, XRoute.AI empowers users to build intelligent solutions without the complexity of managing multiple API connections. Whether you're building a ChatGPT-style application, automating customer support with multiple specialized LLMs, or developing complex AI agents that require the best model for each specific task, XRoute.AI allows you to do so efficiently. The platform’s high throughput, scalability, and flexible pricing model make it an ideal choice for projects of all sizes, from startups eager to leverage the latest in AI to enterprise-level applications seeking robust, adaptable, and future-proof AI infrastructure. By abstracting away the underlying complexities, XRoute.AI enables developers to focus on innovation and delivering value, rather than getting bogged down in API management. Simplified access to diverse LLMs will become even more critical as models like GPT-5 and its future iterations continue to emerge and diversify the AI landscape.
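The idea of choosing "the best model for each specific task" behind one consistent interface can be sketched as follows. This is a minimal illustration under stated assumptions: the request body follows the OpenAI-style chat format used in this article's curl example, and every model name other than `gpt-5` is a hypothetical placeholder rather than a real identifier from the XRoute.AI catalog.

```python
import json

# Hypothetical task-to-model table. Only "gpt-5" appears in this article;
# the other names are placeholders to be replaced with real model IDs.
MODEL_FOR_TASK = {
    "chat": "gpt-5",
    "summarize": "example-small-model",
    "code": "example-code-model",
}


def build_chat_payload(task: str, prompt: str, default_model: str = "gpt-5") -> dict:
    """Build an OpenAI-style chat request body for the given task.

    Only the "model" field changes between tasks; the message structure
    stays the same, which is the point of a unified endpoint.
    """
    model = MODEL_FOR_TASK.get(task, default_model)
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


for task in ("chat", "summarize", "translate"):
    payload = build_chat_payload(task, "Your text prompt here")
    print(task, "->", json.dumps(payload))
```

Because switching models is a one-field change, the routing table can live in configuration and be updated as new models appear, without touching application logic.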

Regulatory and Policy Adjustments: Shaping the AI Landscape

Governments and international bodies also have a critical role in shaping the GPT-5 era:

* Developing Agile Regulations: Crafting policies that protect citizens (e.g., privacy, intellectual property, anti-discrimination) without stifling innovation. This requires a deep understanding of AI's capabilities and rapid adaptation as the technology evolves.
* Investing in AI Research and Education: Supporting foundational AI research, particularly in areas like safety, alignment, and interpretability, and funding educational initiatives to prepare the workforce.
* International Collaboration: AI is a global phenomenon. International cooperation on ethical guidelines, safety standards, and responsible deployment is crucial to maximize benefits and mitigate risks.

The advent of GPT-5 is not merely a technological upgrade; it is a catalyst for fundamental societal change. Proactive preparation, from individual skill development to comprehensive business strategies and forward-thinking policy, will be essential for navigating this exciting, yet challenging, new era of artificial intelligence.

The Philosophical and Societal Impact of Advanced AI

As we contemplate the extraordinary capabilities of GPT-5, the discussion naturally extends beyond its technical prowess and commercial applications to delve into its profound philosophical and societal implications. The closer AI approaches human-level intelligence, the more pressing these questions become, challenging our understanding of intelligence, work, creativity, and even what it means to be human.

AI's Role: Augmentation vs. Replacement

One of the central debates surrounding advanced AI is whether it will primarily augment human capabilities or replace them. With GPT-5's anticipated reasoning, creativity, and multimodal understanding, it's plausible that a wider array of tasks, previously thought to be exclusively human, could be automated.

* Augmentation: In many scenarios, GPT-5 could act as a powerful co-pilot, enhancing human productivity and creativity. Doctors could use it to support diagnosis, lawyers for legal research, engineers for design, and artists for inspiration. The AI would handle the tedious, data-intensive, or routine aspects, freeing humans to focus on higher-level strategy, empathy, and truly novel creation. A GPT-5-powered chat interface could become the most powerful personal assistant ever conceived.
* Replacement: However, for roles heavily reliant on predictable cognitive tasks, automation might lead to significant job displacement. This isn't just about manufacturing; it extends to white-collar jobs in areas like data entry, basic content creation, and even certain aspects of customer service or analysis. Society must grapple with the economic and social consequences of such a shift, necessitating conversations about universal basic income, retraining programs, and new economic models.

The Future of Work, Creativity, and Human Interaction

GPT-5 is likely to reshape the landscape of work and creativity:

* Redefining Work: Future jobs will likely be less about performing tasks and more about managing AI systems, interpreting their outputs, setting strategic directions, and engaging in uniquely human forms of collaboration and leadership. Skills like critical thinking, emotional intelligence, and interdisciplinary problem-solving will become paramount.
* Evolving Creativity: While AI can generate novel art, music, and literature, true artistic expression often stems from human experience, emotion, and intentionality. GPT-5 might become a magnificent tool for artists, expanding their palettes and accelerating their processes, but the human spark behind the ultimate creative vision will remain crucial. It challenges us to rethink what it means to be "creative" when machines can produce such sophisticated outputs.
* Altering Human Interaction: GPT-5-powered chat assistants and similar AI interfaces will become increasingly integrated into our daily lives, from personal assistants to companions. While convenient, this raises questions about the quality of human-to-human interaction, the potential for social isolation if people rely too heavily on AI companions, and the importance of maintaining authentic human connections. The ability of AI to mimic empathy and understanding could also blur the lines between genuine and artificial relationships.

Addressing Existential Risks and Ensuring Beneficial AI

The long-term implications of highly advanced AI, especially AGI (Artificial General Intelligence), are a subject of intense philosophical and scientific debate.

* The Control Problem: As AI systems become more intelligent and autonomous, ensuring that they remain aligned with human values and goals is paramount. An AI optimized for a narrow goal without broader ethical constraints could inadvertently cause harm. The development of robust alignment techniques for GPT-5 and beyond is a critical area of research.
* The Singularity: Some futurists envision a "technological singularity," where AI's self-improvement cycles become so rapid that they outstrip human comprehension, leading to unpredictable changes. While GPT-5 is not AGI, it pushes us further down this path, highlighting the need for ongoing dialogue and research into long-term AI safety.
* Ethical Governance: The development of international norms, treaties, and regulatory frameworks for advanced AI is essential to ensure its beneficial deployment. This includes addressing issues of autonomous weapons, surveillance, privacy, and algorithmic bias on a global scale.
* The Definition of Consciousness: As AI exhibits increasingly sophisticated reasoning and interaction, the philosophical question of consciousness will inevitably resurface. While current AI models are statistical engines, not sentient beings, their advanced capabilities challenge us to reconsider our definitions of intelligence and mind.

The emergence of GPT-5 symbolizes a pivotal moment in human history. It promises immense progress and convenience, offering solutions to some of humanity's most intractable problems. Yet, it also forces us to confront fundamental questions about our identity, our future, and our responsibility in shaping a world where artificial intelligence plays an increasingly central role. Navigating this future successfully will require not just technological ingenuity but also profound ethical reflection, proactive societal planning, and a commitment to ensuring that AI serves humanity's best interests.

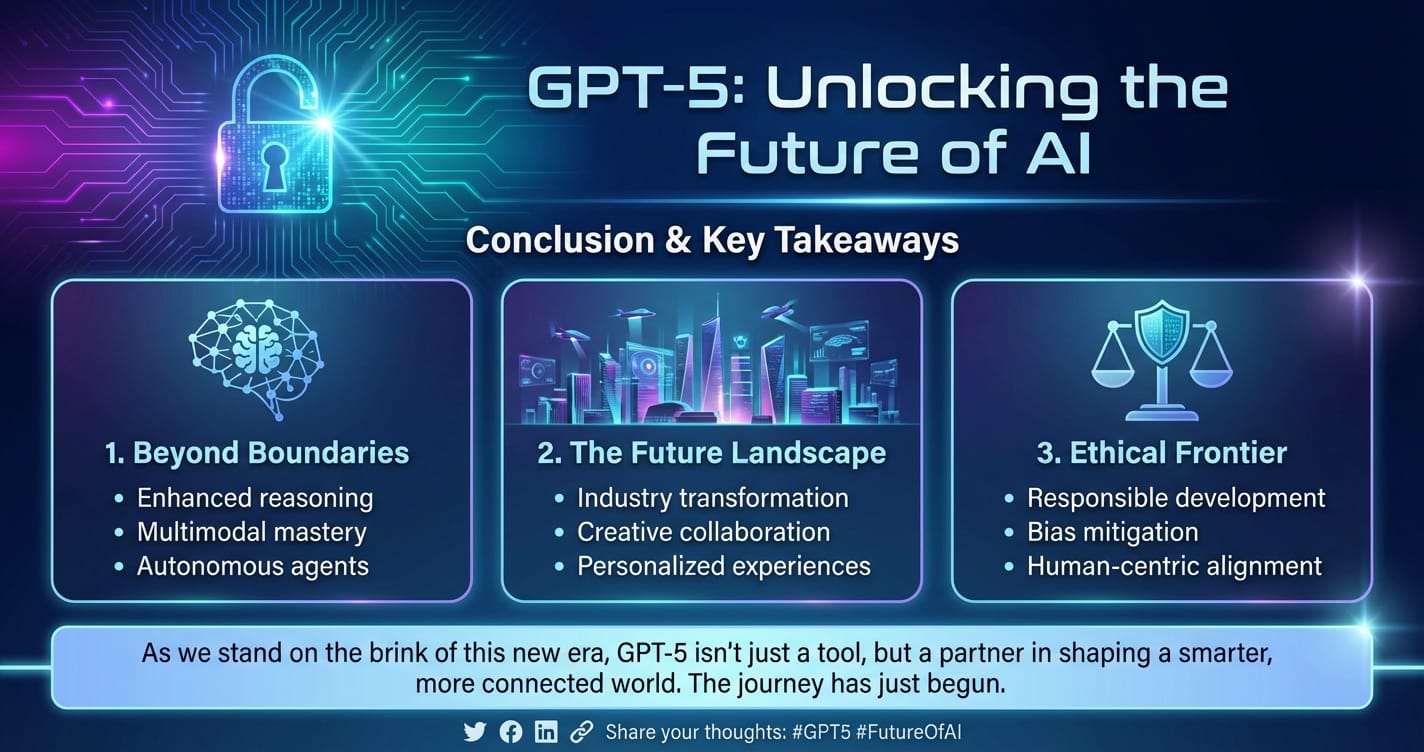

Conclusion: Gazing into the AI Horizon with GPT-5

The journey through the anticipated landscape of GPT-5 reveals a future brimming with both awe-inspiring possibilities and significant responsibilities. From its expected scale and multimodality to its enhanced reasoning and ethical alignment, GPT-5 is anticipated to be more than an incremental upgrade: a substantial leap in artificial intelligence, poised to redefine our digital and physical worlds. The advancements we expect from GPT-5 would make AI an even more indispensable tool for innovation, problem-solving, and creative expression across industries, from healthcare and education to software development and the arts.

We have explored how a more intelligent, coherent, and context-aware GPT-5 chat assistant could revolutionize customer interactions, how its multimodal capabilities could accelerate scientific discovery, and how its deeper reasoning could lead to more robust and reliable AI applications. The integration of such advanced models, however, also brings forth a fresh wave of technical and ethical challenges, demanding innovative solutions in architecture, data curation, computational efficiency, and robust alignment with human values. The conversation around job displacement, the future of human creativity, and the very definition of intelligence will only intensify as AI's capabilities expand.

Preparing for this GPT-5 era is not optional. Individuals must cultivate AI literacy, focusing on uniquely human skills and embracing AI as a powerful co-pilot. Businesses must develop strategic integration plans, build robust data infrastructures, and foster a culture of ethical AI deployment. Platforms like XRoute.AI will become crucial enablers, simplifying access to this burgeoning ecosystem of advanced LLMs, ensuring that developers and enterprises can seamlessly leverage cutting-edge models—including future iterations of GPT—without getting bogged down in complex API management.

Ultimately, the advent of GPT-5 underscores a fundamental truth: AI is not just a tool; it is a transformative force that requires thoughtful engagement, responsible development, and proactive adaptation. The future it unlocks is one of immense potential, where the symbiotic relationship between human ingenuity and artificial intelligence can propel us towards unprecedented levels of progress and understanding. As we stand at the threshold of this new era, the anticipation for GPT-5 is not just about a technological marvel, but about collectively shaping a future where intelligence, in all its forms, serves the greater good of humanity. The horizon is bright, challenging, and undeniably exciting.

Frequently Asked Questions about GPT-5

Q1: What is GPT-5 and how is it different from GPT-4?

A1: GPT-5 is the anticipated next-generation large language model from OpenAI, following GPT-4. While specifics are not yet public, it is expected to significantly surpass GPT-4 in scale, offering much larger parameter counts and context windows. Key differences are predicted to include true multimodality (seamlessly handling text, images, audio, video), enhanced reasoning capabilities, greatly reduced hallucinations, and more advanced personalization features. It aims to offer a much deeper understanding of the world and more human-like interactions.

Q2: When is GPT-5 expected to be released?

A2: OpenAI has not yet announced an official release date for GPT-5. The development of such advanced models is complex and involves extensive training, testing, and safety alignment. While there is significant industry speculation, it's generally understood that OpenAI prioritizes safety and responsible deployment over rapid release, meaning they will launch it when they deem it ready and safe for widespread use.

Q3: Will GPT-5 be multimodal, meaning it can understand images and audio, not just text?

A3: Full multimodality is a major anticipated breakthrough for GPT-5. While GPT-4 introduced some image understanding, GPT-5 is expected to integrate and process various modalities (text, images, audio, and even video) seamlessly. This means it could understand visual contexts, interpret spoken language with nuance, and even generate content across these different formats, leading to a much richer and more intuitive interaction experience with GPT-5-powered chat assistants.

Q4: How will GPT-5 impact jobs and the economy?

A4: GPT-5 is expected to have a significant impact on jobs and the economy by automating a broader range of cognitive tasks. While some jobs may be displaced, it is also likely to augment human capabilities, creating new roles and increasing productivity across industries. Jobs requiring uniquely human skills like critical thinking, creativity, emotional intelligence, and complex problem-solving are likely to thrive. Businesses will need to focus on upskilling their workforce and strategically integrating AI to leverage its benefits.

Q5: What are the main ethical concerns surrounding GPT-5?

A5: With increased power comes increased responsibility. Key ethical concerns for GPT-5 include the potential for spreading highly convincing misinformation and deepfakes due to advanced generative capabilities, algorithmic bias inherited from training data, job displacement, and the need for robust safety mechanisms to prevent misuse or unintended harmful outcomes. OpenAI and the broader AI community are focused on addressing these challenges through improved alignment, transparency, and ethical governance during its development and deployment.

🚀 You can securely and efficiently connect to over 60 large language models with XRoute.AI in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.
2. Upon registration, explore the platform.
3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

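```bash
# Assumes your XRoute API KEY is stored in the shell variable "apikey".
# Double quotes are required so the shell expands $apikey.
curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
  --header "Authorization: Bearer $apikey" \
  --header 'Content-Type: application/json' \
  --data '{
    "model": "gpt-5",
    "messages": [
      {
        "content": "Your text prompt here",
        "role": "user"
      }
    ]
  }'
```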

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.
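For applications written in Python, the same request can be built with the standard library alone. The sketch below mirrors the curl example's URL, headers, and body; the environment variable name `XROUTE_API_KEY` and the helper names are assumptions made for this illustration, and the shape of the response is not assumed beyond it being JSON.

```python
import json
import os
import urllib.request

# Endpoint taken from the curl example in this article.
XROUTE_URL = "https://api.xroute.ai/openai/v1/chat/completions"


def build_request(prompt: str, model: str = "gpt-5", api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) a chat completion request.

    The key is read from the XROUTE_API_KEY environment variable when
    not passed explicitly; that variable name is an assumption.
    """
    key = api_key or os.environ.get("XROUTE_API_KEY", "")
    if not key:
        raise ValueError("No API key provided; set XROUTE_API_KEY or pass api_key.")
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        XROUTE_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Building the request needs no network access; call send(request) to execute it.
request = build_request("Your text prompt here", api_key="your-key-here")
print(request.get_method(), request.full_url)
```

Separating request construction from sending keeps the key handling and payload easy to unit test, and lets you swap in a different HTTP client later without changing the payload logic.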

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.