How Much Does OpenAI API Cost? A Detailed Breakdown

In the rapidly evolving landscape of artificial intelligence, OpenAI's API has emerged as a foundational technology, powering an immense variety of applications from sophisticated chatbots and intelligent content creation tools to complex data analysis systems. For developers, startups, and established enterprises alike, integrating these powerful models offers unparalleled opportunities for innovation. However, a crucial question that inevitably arises before embarking on such integration is: how much does OpenAI API cost? Understanding the intricate details of OpenAI's pricing structure is not merely an exercise in budgeting; it's a strategic imperative that can significantly impact the financial viability and scalability of your AI-driven projects.

The cost associated with utilizing OpenAI's API is multifaceted, influenced by a combination of factors including the specific model chosen, the volume of data processed, and the nature of the task (e.g., text generation, image creation, speech-to-text). Unlike a simple subscription fee, OpenAI employs a token-based pricing model, which can initially seem daunting but offers granular control over expenditure once understood. This comprehensive guide aims to demystify OpenAI's API costs, providing a detailed breakdown of pricing for different models, offering insights into factors that drive expenses, and outlining practical strategies for cost optimization. By the end of this article, you will possess a clear understanding of what to expect when building with OpenAI, enabling you to make informed decisions that align with both your technical aspirations and budgetary constraints. We'll delve into the nuances of each model, from the cutting-edge GPT-4o to the highly efficient gpt-4o mini, and explore how strategic choices can lead to substantial savings without compromising performance.

Understanding OpenAI's API Pricing Model: The Foundation of Your AI Budget

OpenAI's pricing philosophy revolves primarily around a "pay-as-you-go" token-based system. This model means you only pay for the computational resources you consume, measured in "tokens." Grasping the concept of tokens is fundamental to estimating your API expenses accurately.

What are Tokens?

In the context of large language models (LLMs), a token is a fundamental unit of text. It can be a word, part of a word, a punctuation mark, or even a space. For English text, a general rule of thumb is that 1,000 tokens equate to approximately 750 words. This conversion rate is not exact and can vary slightly based on the complexity and structure of the text.

OpenAI distinguishes between two types of tokens for most of its language models:

- Input Tokens (Prompt Tokens): These are the tokens sent to the model as part of your request, including the prompt itself, any system instructions, and previous conversation history in a chat context. You pay for every token you send to the API.

- Output Tokens (Completion Tokens): These are the tokens generated by the model as its response. You also pay for every token the model generates.

This distinction is crucial because the pricing per token often differs between input and output, with output tokens generally being more expensive, reflecting the computational effort required for generation.

Model Tiers and Specializations

OpenAI offers a diverse suite of models, each designed for specific tasks and varying in capability, speed, and, consequently, cost. These models can be broadly categorized:

- Generative Pre-trained Transformers (GPT) Models: These are the flagship language models, including GPT-4, GPT-3.5, and the latest GPT-4o family. They excel at natural language understanding, generation, summarization, translation, and complex reasoning.

- Embedding Models: Designed to convert text into numerical vector representations (embeddings) that capture semantic meaning. These are vital for tasks like search, recommendation systems, and clustering.

- Image Generation Models (DALL-E): Capable of creating original images from text descriptions.

- Speech-to-Text Models (Whisper): Transcribes audio into written text.

- Fine-tuning Models: Allows users to adapt specific GPT models to their own datasets, improving performance on niche tasks.

Each model within these categories has its own pricing structure, reflecting its underlying complexity and performance. More powerful and capable models, like GPT-4, typically come with a higher per-token cost than their less complex counterparts, such as GPT-3.5 or the newly introduced gpt-4o mini.

Usage Tiers and Potential Discounts

While OpenAI's primary pricing is pay-as-you-go, they do offer some mechanisms that can indirectly affect costs for high-volume users:

- Tiered Access: New users typically start with lower rate limits. As your usage grows and you establish a payment history, your access tiers and associated rate limits might increase, allowing for higher throughput. While this doesn't directly offer a per-token discount, it enables scaling without hitting artificial caps.

- Enterprise Agreements: For very large organizations with substantial and consistent usage, direct enterprise agreements might be negotiable, potentially offering customized pricing or dedicated infrastructure. These are typically not publicly advertised and require direct engagement with OpenAI.

Understanding these foundational elements is the first step in accurately projecting your OpenAI API expenditure. Now, let's dive into the specifics of each model family.

Detailed Cost Breakdown by Model Family

The core of understanding how much does OpenAI API cost lies in a detailed examination of each model's pricing. We'll explore the various models, their capabilities, and their associated token costs, paying close attention to the latest innovations like GPT-4o and gpt-4o mini.

GPT-4 Family: The Apex of Language Understanding and Generation

The GPT-4 series represents the pinnacle of OpenAI's language models, offering unparalleled reasoning, creativity, and instruction-following capabilities. These models are generally more expensive due to their advanced performance but are indispensable for tasks requiring high accuracy, nuanced understanding, or complex problem-solving.

GPT-4 and GPT-4 Turbo

- GPT-4: The original flagship model, known for its extensive general knowledge and advanced reasoning. It comes with varying context window sizes (e.g., 8K, 32K tokens), impacting how much information it can "remember" and process in a single interaction.

- GPT-4 Turbo: An evolution of GPT-4, offering improved performance, a much larger context window (up to 128K tokens), and typically lower prices than the original GPT-4. It also has more up-to-date knowledge cutoff dates. GPT-4 Turbo is designed for higher throughput and reduced costs for many common GPT-4 use cases. It supports multimodal inputs (like image understanding via "GPT-4V").

Typical Pricing (as of recent updates, always check official OpenAI docs for latest): For GPT-4 Turbo with 128k context: * Input Tokens: ~$0.01 / 1K tokens * Output Tokens: ~$0.03 / 1K tokens

This means if you send a 5,000-token prompt and receive a 2,000-token response, the cost would be (5 * $0.01) + (2 * $0.03) = $0.05 + $0.06 = $0.11. This might seem small, but it scales quickly with high volume.

GPT-4o (GPT-4 Omni) and GPT-4o mini: The New Frontier of Multimodality and Efficiency

The introduction of GPT-4o (Omni) marked a significant leap, offering native multimodality across text, audio, and vision. It's designed to be faster, more capable, and crucially, more cost-effective than previous GPT-4 models.

- GPT-4o: This model is revolutionary because it's "natively multimodal," meaning it's trained across modalities, processing text, audio, and vision inputs and outputs directly. This leads to more natural and efficient interactions. For instance, it can process image inputs for analysis and generate text outputs, or even process audio and respond in audio. It also offers twice the speed and half the cost of GPT-4 Turbo for text processing.

- Input Tokens: ~$0.005 / 1K tokens

- Output Tokens: ~$0.015 / 1K tokens

- Vision Input: Pricing for images depends on resolution, with a base cost for standard resolution and higher costs for high-resolution images. For example, a 1080p image might cost around $0.01275.

- gpt-4o mini: This is arguably one of the most exciting developments for cost-conscious developers. gpt-4o mini is a significantly smaller, faster, and much cheaper version of GPT-4o, specifically optimized for simpler tasks where the full power of its larger sibling isn't required. It retains many of the GPT-4o’s multimodal capabilities but at a fraction of the cost, making it an ideal choice for high-volume, less complex applications like basic summarization, translation, simple chatbots, or data extraction where accuracy requirements are not extremely stringent. Its cost-effectiveness makes it a game-changer for projects that previously found GPT-4 family models too expensive for scale.

- Input Tokens: ~$0.00015 / 1K tokens

- Output Tokens: ~$0.0006 / 1K tokens

- Vision Input: Costs are also significantly lower than full GPT-4o.

Why gpt-4o mini is a game-changer: The price difference is staggering. For input, gpt-4o mini is approximately 33 times cheaper than GPT-4o, and for output, it's 25 times cheaper. This makes it an incredibly attractive option for developers looking to integrate powerful AI capabilities into their applications without incurring prohibitive costs. Imagine building an internal knowledge base query system or a customer support bot where 80% of queries can be handled by gpt-4o mini, reserving the more expensive GPT-4o for complex escalations. This hybrid approach allows for significant cost savings while maintaining a high level of user experience.

GPT-3.5 Family: The Workhorse of Cost-Effective AI

The GPT-3.5 family, particularly gpt-3.5-turbo, remains a highly popular choice for its balance of performance, speed, and affordability. While not as powerful as GPT-4 models in complex reasoning, it excels at a vast range of common NLP tasks.

gpt-3.5-turbo: This model is excellent for general-purpose tasks such as basic content generation, summarization, chatbots, data augmentation, and code generation. It’s significantly faster and cheaper than GPT-4, making it suitable for applications requiring high throughput and lower latency at a reasonable cost. It also comes in various context window sizes, with 16k tokens being a popular option.

Typical Pricing (for gpt-3.5-turbo with 16k context): * Input Tokens: ~$0.0005 / 1K tokens * Output Tokens: ~$0.0015 / 1K tokens

Comparing this to GPT-4o, gpt-3.5-turbo is still cheaper, especially for input tokens. However, the release of gpt-4o mini now positions it as an even more cost-effective option for many tasks where its performance is sufficient. This indicates a strong shift towards highly optimized, specialized models at different price points.

Embedding Models: Vectorizing Text for Semantic Search and More

Embedding models transform text into high-dimensional vectors (numerical representations) that capture the semantic meaning of the text. These embeddings are crucial for various AI applications that involve understanding relationships between pieces of text, rather than just generating text.

text-embedding-3-smallandtext-embedding-3-large: OpenAI's latest embedding models are designed to be more efficient and performant than previous versions.text-embedding-3-smallis a good balance of cost and performance, whiletext-embedding-3-largeoffers higher dimensionality and potentially better accuracy for more complex semantic tasks, at a slightly higher cost.

Typical Pricing: * Input Tokens: ~$0.00002 to ~$0.00013 / 1K tokens (depending on small vs. large)

For context, if you process a document with 10,000 tokens for embedding, the cost would be as low as $0.0002 for text-embedding-3-small. These models are incredibly cost-efficient given their critical role in retrieval-augmented generation (RAG) systems, personalized recommendations, and similarity searches.

Image Generation Models: DALL-E

The DALL-E models allow you to generate original images from text prompts. The pricing is per image generated, and it varies based on the resolution and quality desired.

- DALL-E 3: The latest iteration, known for its ability to generate high-quality, diverse images that closely adhere to the given prompt. It's integrated directly into GPT-4 through the API.

Typical Pricing (for DALL-E 3): * Standard Quality: * 1024x1024 resolution: ~$0.04 / image * 1792x1024 or 1024x1792 resolution: ~$0.08 / image * HD Quality (higher detail): * 1024x1024 resolution: ~$0.08 / image * 1792x1024 or 1024x1792 resolution: ~$0.12 / image

The costs for image generation can add up quickly if you're generating many images or requiring high-definition outputs. Careful planning of prompt variations and image regeneration is necessary.

Speech-to-Text Models: Whisper API

The Whisper API enables the conversion of audio into text. This is highly useful for transcribing meetings, voice messages, generating captions, or processing voice commands.

- Whisper: The model uses advanced deep learning techniques to achieve high accuracy across various languages and accents.

Typical Pricing: * Audio Transcription: ~$0.006 / minute

This is a straightforward pricing model. If you process an hour of audio, it would cost $0.006 * 60 = $0.36. This makes Whisper a very affordable option for integrating speech-to-text capabilities into applications.

Fine-tuning Models: Tailoring AI to Your Specific Needs

Fine-tuning allows you to take a base GPT model (currently GPT-3.5 Turbo and some legacy GPT-3 models) and train it further on your own domain-specific data. This can significantly improve the model's performance on tasks unique to your business, such as generating content in a specific brand voice, classifying specialized documents, or handling particular jargon.

Fine-tuning involves two main cost components:

- Training Costs: A one-time (per training run) cost based on the number of tokens in your training data and the chosen model.

- GPT-3.5 Turbo Fine-tuning: ~$0.008 / 1K tokens (training)

- Usage Costs: Once fine-tuned, you pay for inference (input and output tokens) using your customized model, which is typically more expensive than using the base model.

- GPT-3.5 Turbo Fine-tuned:

- Input Tokens: ~$0.003 / 1K tokens

- Output Tokens: ~$0.006 / 1K tokens

- GPT-3.5 Turbo Fine-tuned:

Fine-tuning is an investment. While the training itself might not be excessively expensive for moderately sized datasets, the increased inference cost needs to be weighed against the performance benefits. It's best suited for scenarios where a base model simply doesn't perform adequately for critical, repetitive tasks.

Token Price Comparison: A Comprehensive Overview

To help you quickly grasp the relative costs, here’s a Token Price Comparison table for the primary OpenAI API models. This table highlights the significant differences and helps underscore the value proposition of models like gpt-4o mini.

| Model Family | Specific Model | Input Tokens (per 1K) | Output Tokens (per 1K) | Key Characteristics & Best Use Cases |

|---|---|---|---|---|

| GPT-4 Family | GPT-4 Turbo (128k) | ~$0.01 | ~$0.03 | Most capable, advanced reasoning, large context, multimodal (vision). Best for complex analysis, creative generation, highly accurate tasks. |

| GPT-4o | ~$0.005 | ~$0.015 | Native multimodal (text, audio, vision), faster, more cost-effective than GPT-4 Turbo for text. Ideal for real-time multimodal applications, advanced chatbots, complex data interpretation. | |

| gpt-4o mini | ~$0.00015 | ~$0.0006 | Highly cost-effective, fast, smaller multimodal capabilities. Excellent for high-volume, simpler tasks like basic summarization, rapid prototyping, initial tier chatbots, specific content generation where full GPT-4o power isn't needed. | |

| GPT-3.5 Family | gpt-3.5-turbo (16k) | ~$0.0005 | ~$0.0015 | Good balance of speed, capability, and cost. Workhorse for general content generation, basic chatbots, data augmentation, code generation. |

| Embedding Models | text-embedding-3-small | ~$0.00002 | N/A (input only) | Cost-effective semantic search, recommendations, clustering for smaller contexts. |

| text-embedding-3-large | ~$0.00013 | N/A (input only) | Higher dimensionality for improved accuracy in complex semantic tasks. | |

| Image Generation | DALL-E 3 | N/A | ~$0.04 - $0.12/image | High-quality image generation from text prompts. Cost varies by resolution and quality (standard/HD). |

| Speech-to-Text | Whisper | N/A | ~$0.006/minute | Accurate audio transcription across languages. |

Note: Prices are approximate and subject to change by OpenAI. Always refer to the official OpenAI pricing page for the most current information.

This table clearly illustrates the significant cost advantage of gpt-3.5-turbo and especially gpt-4o mini for tasks where their capabilities are sufficient. For instance, if you're building an application that generates short, straightforward responses or categorizes simple queries, opting for gpt-4o mini over GPT-4o could reduce your token costs by factors of 25-33, making your application significantly more scalable and affordable. This direct Token Price Comparison highlights the importance of strategic model selection.

XRoute is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers(including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more), enabling seamless development of AI-driven applications, chatbots, and automated workflows.

Factors Influencing Your OpenAI API Bill: Beyond the Per-Token Cost

While understanding per-token pricing is essential, several other operational factors can dramatically influence your final OpenAI API expenditure. Overlooking these can lead to unexpected surges in your bill.

1. Token Consumption: The Core Driver

The most direct factor is, of course, the total number of tokens consumed. This isn't just about how many requests you make, but how "chatty" those requests are.

- Prompt Length and Complexity: Longer and more detailed prompts consume more input tokens. While good prompt engineering often requires detail, aim for conciseness without sacrificing clarity. Each word, even in system instructions, counts.

- Context Window Management: For conversational AI, every turn of the conversation (both user input and model output) is typically sent back to the model as context to maintain continuity. As the conversation progresses, the context window fills up, meaning more input tokens are sent with each new request, even if the user's current query is short. Efficient context management (e.g., summarizing previous turns, retrieving relevant snippets rather than sending entire history) is critical.

- Output Length: The length of the model's generated response directly dictates the output token cost. If your application often requests lengthy articles or detailed code, your output token usage will be high. Fine-tuning models to be more concise or implementing post-processing to trim verbose outputs can help.

- Temperature and Repetition Penalties: While not directly affecting token count, experimenting with these parameters can influence the length and verbosity of outputs. Higher temperatures might lead to more creative, but potentially longer, responses.

2. Model Choice: The Performance-Cost Trade-off

As seen in the Token Price Comparison, selecting the right model for the job is paramount.

- Over-speccing: Using a GPT-4 or GPT-4o model for a task that

gpt-3.5-turboor even gpt-4o mini could handle effectively is a common pitfall. For example, simple text summarization or sentiment analysis often doesn't require the full reasoning power of the most advanced models. - Multi-Model Strategy: A sophisticated application often benefits from a multi-model approach. A routing layer could direct simple queries to gpt-4o mini or

gpt-3.5-turboand only escalate complex, reasoning-heavy tasks to GPT-4o or GPT-4 Turbo. This intelligent routing ensures you only pay for the necessary computational power.

3. Request Volume and Frequency: Scaling Costs

The sheer number of API calls your application makes directly translates to higher costs.

- User Base: A larger user base naturally means more interactions and, therefore, more API calls.

- Application Design: Certain application architectures might make more frequent calls than necessary. For example, constantly checking for updates or re-generating content that hasn't changed.

- Retries and Error Handling: Inefficient error handling that leads to repeated, failed API calls can also accrue costs, as you're still paying for the tokens sent in the failed request.

4. Image Generation Parameters: Resolution and Quality

For DALL-E, the cost is tied directly to the number of images, their resolution, and the chosen quality (standard vs. HD).

- Experimentation vs. Production: When prototyping or experimenting, lower resolution and standard quality images can save significant costs compared to immediately defaulting to HD and high resolution.

- Batch Generation: If you need multiple variations of an image, carefully consider if all variations require the highest quality.

5. Data Size for Embeddings and Fine-tuning

These specialized services have their own cost drivers related to data volume.

- Embeddings: The more documents or text chunks you embed, the higher your costs will be. Efficient chunking strategies and only embedding truly relevant data can help.

- Fine-tuning Data: While a one-time cost, larger datasets for fine-tuning mean higher training expenses. Ensure your training data is clean, relevant, and as concise as possible to maximize impact per token.

By carefully considering these factors, developers and businesses can gain a more holistic understanding of their potential API costs and identify areas for optimization.

Strategies to Optimize OpenAI API Costs: Building Smarter, Spending Less

Managing OpenAI API costs effectively requires a proactive and strategic approach. By implementing smart practices, you can significantly reduce your expenditure without sacrificing the quality or functionality of your AI applications.

1. Smart Model Selection and Tiering

This is perhaps the most impactful strategy.

- Leverage gpt-4o mini: For a vast array of common tasks—simple summarization, initial chatbot responses, data extraction from structured text, basic content generation, or even translation—gpt-4o mini offers an incredible cost-performance ratio. Always default to the cheapest model that meets your performance requirements. Don't use a sledgehammer to crack a nut.

- Utilize GPT-3.5 Turbo for General Tasks: For tasks slightly more complex than what gpt-4o mini can handle but not requiring GPT-4o's full power,

gpt-3.5-turboremains an excellent and economical choice. - Reserve GPT-4o/GPT-4 Turbo for High-Value, Complex Tasks: Only use the most powerful (and expensive) models for tasks demanding advanced reasoning, deep understanding, highly creative output, or multimodal processing where the superior performance justifies the higher cost. Think of a tiered system where simpler queries are routed to cheaper models and only escalated to more powerful ones when necessary.

- Dynamic Model Switching: Implement logic in your application to dynamically select the appropriate model based on the complexity or type of user request. For instance, if a user asks a simple factual question, use

gpt-4o mini. If they ask for a comprehensive market analysis, switch to GPT-4o.

2. Efficient Prompt Engineering and Context Management

Every token counts, so optimize how you craft your prompts and manage conversational context.

- Concise and Clear Prompts: Write prompts that are as short as possible while still being unambiguous. Remove unnecessary words or filler.

- Instruction Optimization: Experiment with different ways of phrasing instructions to achieve the desired output with fewer tokens. Sometimes, a well-placed keyword or a specific format request can replace several sentences of explanation.

- Summarize Context: For long conversations, instead of sending the entire chat history with every turn, summarize previous interactions or extract only the most relevant snippets to include in the prompt. This significantly reduces input token usage.

- System Messages: Use system messages effectively to set the persona and behavior of the AI, guiding its responses without needing repetitive instructions in every user prompt.

3. Caching and Deduplication

Avoid re-generating or re-processing content that has already been created or processed.

- Cache API Responses: If your application frequently asks the same question or requests the same type of content, cache the API's response. When the identical request comes again, serve the cached response instead of making a new API call. Implement smart caching strategies with time-to-live (TTL) to ensure data freshness.

- Deduplicate Requests: Before sending a prompt to the API, check if a similar request was recently made and if its output can be reused. This is particularly useful for tasks like summarization or factual lookups where the output for the same input is deterministic.

4. Batching Requests

For certain tasks, batching multiple individual requests into a single API call can sometimes reduce overhead or be more efficient. OpenAI sometimes offers batch processing endpoints for specific services (e.g., embeddings). Check their documentation for current capabilities.

5. Monitoring Usage and Setting Limits

Transparency into your API usage is critical for cost control.

- OpenAI Dashboard: Regularly check your usage dashboard on the OpenAI platform. It provides detailed breakdowns of token usage per model and spending.

- Set Hard and Soft Limits: Configure spending limits within your OpenAI account. Set a "soft" limit for alerts and a "hard" limit to automatically stop API usage once a certain threshold is reached, preventing runaway costs.

- Custom Monitoring: Integrate OpenAI usage data into your internal monitoring systems (e.g., Prometheus, Grafana) to track costs in real-time and correlate them with application usage patterns.

6. Leveraging Open-Source Alternatives and Hybrid Approaches

For certain tasks, open-source models or local LLMs can be a viable and much cheaper alternative.

- On-Premise LLMs: For highly sensitive data or tasks requiring absolute cost predictability, consider self-hosting open-source LLMs like Llama 3, Mistral, or Falcon.

- Hybrid Architectures: Combine OpenAI's powerful models for complex tasks with open-source models for simpler, high-volume tasks. This can be challenging to manage due to different APIs, but offers maximum flexibility.

7. Considering Unified API Platforms for Cost-Effective AI (Introducing XRoute.AI)

Managing multiple LLM APIs, whether from OpenAI or various other providers, can introduce significant complexity. Different API endpoints, authentication methods, rate limits, and pricing structures create an integration and management headache. This is where unified API platforms become invaluable tools for cost optimization and streamlined development.

Platforms like XRoute.AI are specifically designed to abstract away this complexity. XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers, enabling seamless development of AI-driven applications, chatbots, and automated workflows.

One of the key advantages of XRoute.AI in the context of cost management is its ability to facilitate a dynamic, cost-aware routing strategy. Instead of being locked into a single provider's pricing, XRoute.AI allows you to leverage the most cost-effective model for a given task, even across different providers. For example, if gpt-4o mini offers the best price-performance for a particular query, you can configure XRoute.AI to route requests to it. If another provider suddenly offers a better deal for a similar capability, you can switch with minimal code changes. This inherent flexibility contributes to cost-effective AI by allowing you to:

- Provider Agnosticism: Easily switch between OpenAI models or even to models from other providers (e.g., Anthropic, Google, Mistral) without rewriting your codebase. This creates competitive pressure among providers, allowing you to always choose the most economical option.

- Smart Routing: Implement logic to automatically route requests to the cheapest available model that meets your performance criteria, potentially routing simpler tasks to less expensive models and more complex ones to premium options.

- Simplified Management: Reduce development and maintenance overhead by managing all your LLM integrations through a single, consistent API. This leads to faster development cycles and fewer integration bugs, indirectly contributing to cost savings.

- Low Latency AI: While focused on cost, XRoute.AI also prioritizes performance, ensuring that even with intelligent routing, your applications maintain low latency AI responses, which is crucial for user experience and real-time applications.

With a focus on low latency AI, cost-effective AI, and developer-friendly tools, XRoute.AI empowers users to build intelligent solutions without the complexity of managing multiple API connections. The platform’s high throughput, scalability, and flexible pricing model make it an ideal choice for projects of all sizes, from startups to enterprise-level applications, providing a powerful mechanism to control and optimize your overall LLM API spending.

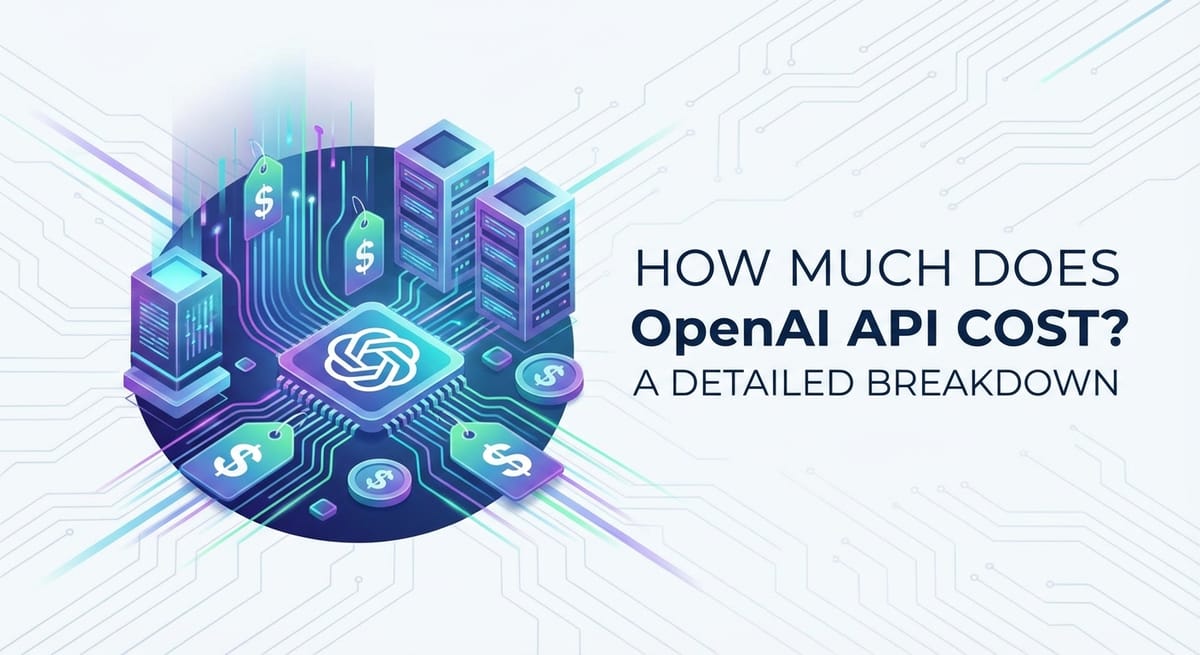

Conclusion: Mastering OpenAI API Costs for Sustainable AI Innovation

Navigating the landscape of OpenAI API costs requires more than just knowing the per-token price; it demands a strategic understanding of the underlying pricing model, the nuances of each model's capabilities, and the practical levers for cost optimization. As we've thoroughly explored, the question of how much does OpenAI API cost is answered by a confluence of factors, from the specific GPT-4 family model you choose—be it GPT-4o for its multimodal prowess or the remarkably economical gpt-4o mini for high-volume, simpler tasks—to your prompt engineering efficiency, and your overall API request volume.

The introduction of models like gpt-4o mini signifies a clear trend towards more granular and accessible AI, making powerful language models available at unprecedented price points. This allows developers to craft sophisticated, multi-tiered AI solutions where the computational power is precisely matched to the task's complexity, thereby ensuring cost-effective AI without sacrificing capability.

By adopting strategies such as intelligent model selection, rigorous prompt optimization, effective caching, diligent usage monitoring, and leveraging innovative platforms like XRoute.AI, businesses and developers can not only forecast their OpenAI API expenditure accurately but also significantly reduce it. Embracing a unified API platform like XRoute.AI further amplifies these efforts by offering unparalleled flexibility to switch between providers, ensuring you always access the most performant and cost-effective AI models for your specific needs, all while maintaining low latency AI and simplifying integration.

Ultimately, mastering your OpenAI API costs isn't about cutting corners; it's about building smarter. It's about making informed architectural decisions that enable sustainable AI innovation, allowing you to unlock the full potential of large language models while keeping your budget in check. The future of AI development is both powerful and financially intelligent, and with the right strategies, your applications can thrive in this dynamic environment.

Frequently Asked Questions (FAQ)

Q1: Is there a free tier for OpenAI API?

A1: Yes, OpenAI typically offers a free trial period with a certain amount of free credit upon signing up. This credit usually has an expiration date (e.g., 3 months). After the free trial, you transition to the pay-as-you-go model. Always check the official OpenAI pricing page for the most current free trial offerings and terms.

Q2: What is the cheapest OpenAI model for text generation?

A2: Currently, the gpt-4o mini model is the most cost-effective for text generation tasks within the OpenAI API ecosystem. It offers significantly lower input and output token prices compared to other GPT models, making it ideal for high-volume, simpler text-based applications.

Q3: How can I monitor my OpenAI API usage and costs?

A3: You can monitor your OpenAI API usage and costs directly through your OpenAI developer dashboard. It provides detailed graphs and breakdowns of your token consumption by model, along with your total expenditure. You can also set usage limits and receive email notifications when you approach or exceed these limits.

Q4: What is the difference between input and output tokens, and why does it matter for cost?

A4: Input tokens are the text you send to the AI model (your prompt, instructions, conversation history), while output tokens are the text the model generates in response. It matters for cost because OpenAI typically charges different prices per 1,000 tokens for input versus output, with output tokens usually being more expensive due to the higher computational cost of generating new text. Efficient prompt engineering (reducing input tokens) and concise responses (reducing output tokens) are key to cost saving.

Q5: Can I get discounts for high-volume usage of OpenAI API?

A5: OpenAI's public pricing is generally pay-as-you-go, based on your token consumption. While there aren't explicit public "volume discounts" on the per-token price for typical users, very large enterprise customers might be able to negotiate custom agreements directly with OpenAI. However, managing your model selection and optimizing usage, as discussed in this article, is the primary way to achieve cost-effective AI for most users. Platforms like XRoute.AI can also help optimize costs across multiple providers.

🚀You can securely and efficiently connect to thousands of data sources with XRoute in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it: 1. Visit https://xroute.ai/ and sign up for a free account. 2. Upon registration, explore the platform. 3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \

--header 'Authorization: Bearer $apikey' \

--header 'Content-Type: application/json' \

--data '{

"model": "gpt-5",

"messages": [

{

"content": "Your text prompt here",

"role": "user"

}

]

}'

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.