Mastering LLM Routing: Optimize Your AI Performance

The dawn of large language models (LLMs) has ushered in an era of unprecedented innovation, transforming how we interact with technology, process information, and automate complex tasks. From crafting compelling marketing copy and generating intricate code to providing intelligent customer support and synthesizing vast datasets, LLMs are proving to be indispensable tools across virtually every industry. However, the sheer proliferation of these powerful models – each with its unique strengths, limitations, pricing structures, and performance characteristics – presents a formidable challenge for developers and businesses striving to harness their full potential. The critical question isn't just how to use LLMs, but how to use them intelligently and efficiently.

This is where LLM routing becomes a cornerstone of modern AI architecture. LLM routing is the practice of dynamically directing each AI request to the most appropriate large language model based on factors such as the nature of the task, the desired output quality, real-time performance metrics, and, crucially, cost. In a world where milliseconds and pennies can dictate competitive advantage, mastering LLM routing is no longer a luxury but a necessity for optimizing both the performance and the cost of your AI systems.

This comprehensive guide will delve deep into the mechanics, benefits, and advanced strategies of llm routing. We will explore the diverse landscape of LLMs, demystify the core components of intelligent routing systems, and unpack how these systems drive superior performance, reduce operational expenses, and enhance the overall reliability of AI-driven applications. By understanding and implementing effective llm routing strategies, you will be equipped to build more resilient, scalable, and economically viable AI solutions, truly mastering the art of leveraging generative AI to its fullest.

The Diverse Landscape of Large Language Models (LLMs)

Before we can effectively route requests, we must first understand the terrain: the vast and varied ecosystem of Large Language Models. The past few years have witnessed an explosion in the number and capabilities of LLMs, each vying for dominance in different niches. This diversity is a double-edged sword: it offers unparalleled flexibility and power but also introduces significant complexity in selection and management.

A Spectrum of Capabilities and Providers

At the forefront are models from established AI research powerhouses like OpenAI, Anthropic, Google, and Meta, alongside a burgeoning array of open-source initiatives and specialized offerings.

- OpenAI's GPT Series (e.g., GPT-4, GPT-3.5): Renowned for their general-purpose understanding, strong reasoning capabilities, and versatility across a wide range of tasks, from creative writing to complex problem-solving and coding. They often set the benchmark for quality and coherence.

- Anthropic's Claude Series (e.g., Claude 3 Opus, Sonnet, Haiku): Developed with a strong emphasis on safety, helpfulness, and harmlessness. Claude models often excel in long-context understanding, nuanced conversations, and robust ethical alignment, making them suitable for sensitive applications.

- Google's Gemini Series (e.g., Gemini 1.5 Pro, Flash): Designed to be multimodal from the ground up, capable of processing and understanding various types of information, including text, images, audio, and video. They offer compelling performance in complex reasoning and integration across different data modalities.

- Meta's Llama Series (e.g., Llama 2, Llama 3): Primarily open-source models that have democratized access to powerful LLM capabilities. Their open nature fosters a vibrant community of developers, leading to extensive fine-tuning and specialized applications, often offering a cost-effective alternative for deployment.

- Specialized Models: Beyond these generalists, a growing number of models are fine-tuned for specific tasks, such as code generation (e.g., StarCoder, Code Llama), medical applications, legal document analysis, or even specific languages. These specialized models can often outperform generalists within their domain due to their focused training.

Understanding Key Differentiators

The choice of LLM is rarely arbitrary. Several factors distinguish these models and inform routing decisions:

- Performance (Quality and Accuracy): How well does the model perform the intended task? This can be subjective but is often measured by metrics like coherence, factual accuracy, relevance, and ability to follow instructions. Top-tier models often deliver superior quality but at a higher cost.

- Latency: The time it takes for a model to process a request and return a response. Low latency is critical for real-time applications like chatbots and interactive interfaces.

- Throughput: The number of requests a model can handle per unit of time. High throughput is essential for applications with heavy concurrent usage.

- Context Window Size: The maximum amount of text (tokens) an LLM can process in a single input. Larger context windows enable models to handle extensive documents, maintain longer conversations, and perform more complex reasoning over broader information.

- Pricing Model: LLMs are typically priced based on token usage (input and output tokens), and sometimes by requests or compute time. Prices vary significantly between providers and models, often with cheaper models offering lower quality or smaller context windows.

- Availability and Reliability: The uptime, rate limits, and regional availability of a model API.

- Fine-tuning Capabilities: The ease and effectiveness with which a model can be fine-tuned on custom datasets to improve performance on specific tasks or domains.

The Challenges of Direct Integration

Without llm routing, directly integrating multiple LLMs into an application presents a host of challenges:

- API Sprawl and Management: Each provider has its own API specifications, authentication methods, and SDKs. Managing multiple integrations becomes cumbersome, prone to errors, and increases development overhead.

- Vendor Lock-in Risk: Relying on a single provider can expose businesses to future price hikes, service disruptions, or a lack of specific features.

- Suboptimal Model Selection: Without a systematic approach, developers might default to a single familiar model, even if it's not the most efficient or cost-effective for every task. This leads to wasted potential and inflated costs.

- Inconsistent Performance: Manually switching between models based on observed performance is impractical in dynamic environments.

- Lack of Centralized Observability: Monitoring the performance, cost, and usage patterns across disparate LLM integrations becomes fragmented and difficult to aggregate.

Recognizing these challenges underscores the fundamental need for an intelligent orchestration layer that can abstract away the complexity and enable dynamic decision-making. This orchestration layer is precisely what llm routing aims to provide.

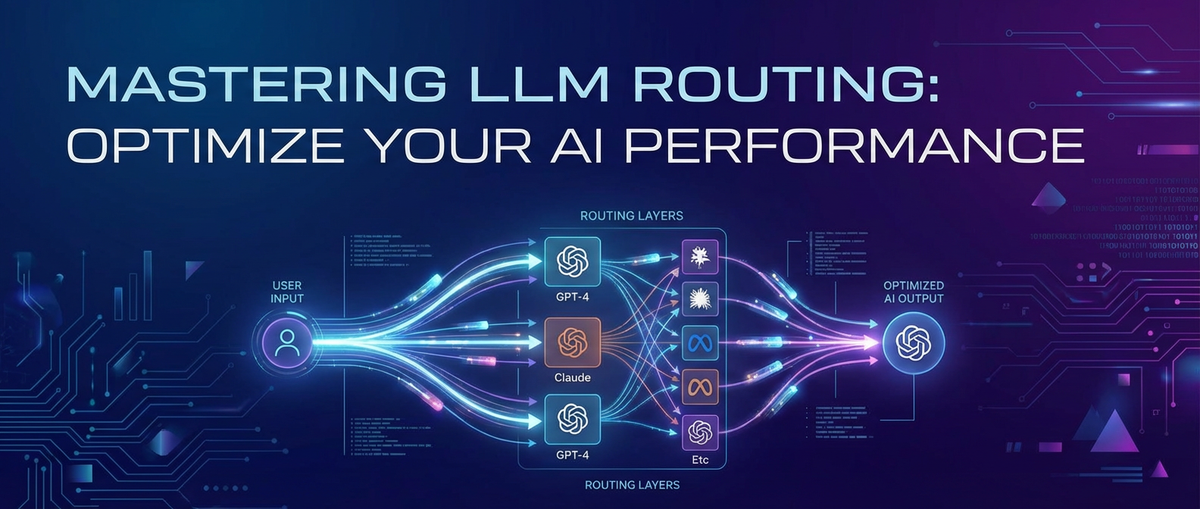

What is LLM Routing? A Deep Dive

At its heart, llm routing is an intelligent orchestration layer designed to manage and optimize interactions with multiple large language models. Imagine it as the traffic controller of your AI ecosystem, meticulously directing each incoming request to the most suitable LLM based on predefined rules, real-time performance data, and strategic objectives. This process is far more sophisticated than a simple round-robin load balancer; it's about making smart, context-aware decisions that drive both efficiency and effectiveness.

Defining LLM Routing

Formally, llm routing is the process of programmatically directing an incoming API request to one or more backend Large Language Models from a pool of available models, based on a set of criteria. The goal is to maximize desired outcomes—be it speed, accuracy, cost-efficiency, or a combination thereof—for each specific query or task.

To draw an analogy, consider a sophisticated logistics company. They don't send every package via the same truck, train, or plane. Instead, they analyze each package's destination, urgency, size, cost constraints, and even weather conditions to select the optimal route and carrier. Similarly, an llm routing system analyzes an AI request to determine the best "carrier" (LLM) and "route" (API call) to deliver the desired "package" (response).

Core Components of an LLM Routing System

A robust llm routing infrastructure typically comprises several key components that work in concert:

- Request Interception & Analysis:

  - All incoming requests from the application are first directed to the routing layer.

  - This component then analyzes the request's content (e.g., keywords, intent, sentiment, length), metadata (e.g., user ID, source application), and context (e.g., historical interactions, session data).

  - This analysis is crucial for understanding the nature of the task and its specific requirements.

- Model Pool Management:

  - The routing system maintains a comprehensive registry of all available LLMs, including their providers, API endpoints, authentication keys, performance characteristics (e.g., typical latency, context window), pricing details, and any observed limitations or specializations.

  - This pool can include models from various providers (OpenAI, Anthropic, Google, custom fine-tuned models) and even different versions of the same model.

- Decision Engine (The Router Itself):

  - This is the brain of the llm routing system. Based on the analysis of the incoming request and the knowledge of the model pool, the decision engine applies a set of rules, algorithms, or even machine learning models to select the optimal LLM.

  - Decision logic can range from simple rule-based conditions (e.g., "if intent is code generation, route to Model X") to complex, dynamic algorithms that consider real-time load, cost-effectiveness, and predicted performance.

  - Advanced engines might use A/B testing, reinforcement learning, or multi-armed bandit algorithms to continuously refine their routing decisions.

- Request Transformation & Forwarding:

  - Once an LLM is selected, the routing system may need to transform the original request to conform to the selected LLM's specific API format and prompt engineering requirements. This could involve adjusting the prompt structure, adding system messages, or handling different parameter names.

  - The transformed request is then forwarded to the chosen LLM's API endpoint.

- Response Aggregation & Normalization:

  - Upon receiving a response from the LLM, the routing system might process it before returning it to the originating application. This could involve:

    - Normalization: Ensuring the output format is consistent, regardless of the LLM used, simplifying the application's parsing logic.

    - Post-processing: Applying filters, moderation, or additional processing steps.

    - Fallback Handling: If the primary LLM fails or returns an unsatisfactory response, the router can initiate a fallback mechanism, sending the request to an alternative model.

    - Caching: Storing responses for identical or similar requests to reduce latency and cost.

- Monitoring and Analytics:

  - Integral to any effective routing system is robust monitoring. This component tracks key metrics such as:

    - Latency for each LLM and request type.

    - Success and error rates.

    - Token usage and associated costs.

    - Throughput and concurrency.

    - Quality scores (if feedback mechanisms are in place).

  - These analytics provide invaluable insights for continuous Performance optimization and Cost optimization of the routing strategy.
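To make the interplay of these components more concrete, here is a minimal sketch in Python. It is not a reference implementation: `ModelSpec`, `Router`, the model names used with it, and the `call_model` callable are all hypothetical, the intent check is a crude keyword test standing in for real request analysis, and the token estimate is a simple word count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSpec:
    name: str                  # hypothetical model identifier
    context_window: int        # maximum tokens per request
    cost_per_1k_tokens: float  # illustrative price, not a real quote
    tags: tuple = ()           # specializations, e.g. ("code",)


class Router:
    def __init__(self, pool, call_model):
        self.pool = list(pool)        # model pool management
        self.call_model = call_model  # assumed callable(model_name, prompt) -> str
        self.cache = {}               # exact-match response cache

    def analyze(self, prompt):
        """Request analysis: a keyword check stands in for intent detection."""
        lowered = prompt.lower()
        intent = "code" if ("code" in lowered or "function" in lowered) else "general"
        return {"intent": intent, "approx_tokens": len(prompt.split())}

    def rank(self, analysis):
        """Decision engine: drop models whose context window is too small,
        then order specialists first and cheaper models before pricier ones."""
        fits = [m for m in self.pool if m.context_window >= analysis["approx_tokens"]]
        return sorted(
            fits,
            key=lambda m: (analysis["intent"] not in m.tags, m.cost_per_1k_tokens),
        )

    def route(self, prompt):
        if prompt in self.cache:  # caching
            return self.cache[prompt]
        for model in self.rank(self.analyze(prompt)):
            try:
                text = self.call_model(model.name, prompt)  # forwarding
            except Exception:
                continue  # fallback handling: try the next candidate
            result = {"model": model.name, "text": text}  # normalized response
            self.cache[prompt] = result
            return result
        raise RuntimeError("every candidate model failed")
```

In this sketch the ranked list doubles as the fallback order, so a failing specialist degrades to the cheapest general model rather than to an error.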

Why LLM Routing is Crucial for Modern AI Applications

The complexity of today's AI landscape demands intelligent orchestration. LLM routing addresses this need by providing:

- Agility and Flexibility: Easily integrate new models or swap out underperforming ones without disrupting the application logic.

- Resilience: Implement robust failover strategies to ensure continuous service even if a primary LLM provider experiences downtime or rate limits.

- Scalability: Distribute load across multiple models and providers to handle increasing request volumes efficiently.

- Strategic Advantage: Move beyond one-size-fits-all LLM usage to a tailored, dynamic approach that maximizes the value derived from each AI interaction.

Ultimately, llm routing empowers developers and businesses to build more intelligent, adaptable, and economically sound AI solutions, moving towards a future where AI is not just powerful, but also pragmatic and highly optimized.

The Pillars of LLM Routing: Performance Optimization

In the fast-paced world of AI applications, performance is paramount. Users expect instant responses, accurate information, and seamless interactions. Performance optimization through llm routing is about achieving these goals by intelligently managing how requests are processed by large language models. It encompasses reducing latency, enhancing accuracy, and ensuring high throughput and scalability.

1. Latency Reduction: The Need for Speed

Latency, the delay between sending a request and receiving a response, is a critical factor in user experience, especially for real-time applications like chatbots, virtual assistants, and interactive content generation tools. High latency can lead to frustrated users and abandoned sessions. LLM routing significantly contributes to latency reduction through several mechanisms:

- Dynamic Routing to Fastest Available Model: The routing engine can monitor the real-time performance (response times, queue lengths) of all available LLMs. If Model A is currently experiencing high load and slow responses, the router can dynamically direct new requests to Model B, which might be faster at that moment, even if Model A is typically preferred.

- Geographic Considerations (Edge Deployment): For global applications, routing requests to LLM endpoints geographically closer to the user can dramatically reduce network latency. An llm routing system can identify the user's location and select an LLM instance or provider with data centers in the nearest region.

- Caching Strategies: For frequently asked questions or highly repetitive requests, the routing layer can implement a caching mechanism. If an identical or very similar request has been processed recently, the router can serve the cached response immediately, bypassing the LLM entirely, thereby achieving near-zero latency and saving costs.

- Load Balancing Across Instances: Even within a single LLM provider, large volumes of requests can be distributed across multiple instances or regional endpoints to prevent any single point from becoming a bottleneck, ensuring consistent low latency.

- Pre-emptive Model Warm-up: For applications with predictable peak times, an llm routing system can strategically "warm up" less-used models by sending dummy requests, ensuring they are ready to handle live traffic instantly when demand spikes.
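As a rough illustration of dynamic routing to the fastest available model, the sketch below keeps an exponentially weighted moving average (EWMA) of observed response times per model and picks the current minimum. The class name, the smoothing factor `alpha`, and the choice of EWMA itself are assumptions made for this example; a production router would likely also consider queue depth and error rates.

```python
class LatencyTracker:
    """Tracks a smoothed latency estimate per model (hypothetical helper)."""

    def __init__(self, alpha=0.2):
        self.alpha = alpha  # weight given to the newest observation
        self.ewma = {}      # model name -> smoothed latency in seconds

    def record(self, model, seconds):
        previous = self.ewma.get(model)
        if previous is None:
            self.ewma[model] = seconds
        else:
            self.ewma[model] = self.alpha * seconds + (1 - self.alpha) * previous

    def fastest(self, candidates):
        # Models with no observations default to 0.0 so they get tried at least once.
        return min(candidates, key=lambda m: self.ewma.get(m, 0.0))
```

A larger `alpha` makes the router react faster to a sudden slowdown but also makes it more sensitive to a single slow response.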

2. Accuracy and Quality Enhancement: The Pursuit of Precision

While speed is important, it cannot come at the expense of quality. An AI response that is fast but inaccurate or irrelevant is ultimately useless. LLM routing helps in Performance optimization by ensuring that requests are handled by the LLM best suited for the specific task, thereby boosting accuracy and output quality.

- Routing to Specialized Models for Specific Tasks: Different LLMs excel at different tasks. For instance:

  - Code generation tasks might be routed to models specifically trained on vast code repositories.

  - Creative writing or storytelling prompts could go to models known for their imaginative flair.

  - Factual summarization or data extraction might be best handled by models optimized for precision and factual consistency.

  The routing engine can analyze the intent of the prompt (e.g., through prompt classification or keyword detection) and direct it to the most appropriate specialized model.

- A/B Testing of Models: An llm routing platform can facilitate A/B testing by routing a percentage of similar requests to different LLMs and collecting feedback on the quality of their responses. This allows for continuous evaluation and identification of the best-performing models for various use cases.

- Feedback Loops for Continuous Improvement: Integrating user feedback (e.g., "Was this helpful?", up/down votes) into the routing decision engine allows the system to learn and adapt. If a particular model consistently receives poor feedback for a certain type of query, the router can automatically deprioritize it for those queries or direct them to an alternative that has performed better.

- Ensemble Methods and Fallback: For mission-critical tasks requiring extremely high accuracy, the routing system can implement ensemble methods. A request might be sent to two or more LLMs simultaneously, and a consensus mechanism or a secondary verification LLM could be used to select the best response, or to flag inconsistencies. Additionally, if a primary model's response is deemed low quality (e.g., too short, off-topic), a fallback to a different, potentially more robust model can be triggered.
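One simple way to combine A/B-style exploration with a feedback loop is an epsilon-greedy policy: most requests go to the model with the best smoothed helpfulness score, while a small fraction is sent to a random model so that scores keep being refreshed. The sketch below is only one possible approach; `FeedbackRouter`, its parameters, and the add-one smoothing are assumptions for illustration.

```python
import random


class FeedbackRouter:
    """Epsilon-greedy model choice driven by helpful / not-helpful votes."""

    def __init__(self, models, epsilon=0.1, rng=None):
        self.models = list(models)
        self.epsilon = epsilon  # share of traffic used for exploration
        self.rng = rng or random.Random()
        self.votes = {m: [0, 0] for m in self.models}  # [helpful, total]

    def score(self, model):
        helpful, total = self.votes[model]
        # Add-one smoothing so a model with no votes starts at 0.5.
        return (helpful + 1) / (total + 2)

    def choose(self):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.models)    # explore
        return max(self.models, key=self.score)    # exploit

    def record(self, model, helpful):
        self.votes[model][0] += int(helpful)
        self.votes[model][1] += 1
```

In practice the votes would be kept per query type, so that a model that is weak at one kind of task is deprioritized only for that task.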

3. Throughput and Scalability: Handling High Demands

Modern AI applications often face fluctuating and potentially massive loads. An effective llm routing strategy ensures that the system can handle a high volume of concurrent requests (throughput) and effortlessly scale to meet peak demands without degradation in performance.

- Load Balancing Across Multiple Models and Providers: Instead of relying on a single LLM, which could become a bottleneck or hit rate limits, the router can distribute incoming requests across a pool of diverse models and providers. This parallel processing capability significantly increases the overall system throughput.

- Intelligent Queue Management: When an LLM or provider experiences temporary capacity issues or rate limits, the routing system can intelligently queue requests, hold them for a short period, or direct them to less-utilized alternatives, preventing errors and ensuring that requests are eventually processed.

- Dynamic Resource Allocation: For self-hosted or cloud-managed LLMs, the routing layer can integrate with infrastructure orchestration tools to dynamically scale up or down the number of LLM instances based on real-time demand, ensuring resources are available when needed and de-provisioned when not, contributing to Cost optimization.

- Failover Mechanisms: A robust llm routing system includes sophisticated failover logic. If an LLM becomes unresponsive, returns an error, or exceeds its rate limits, the router can automatically redirect the request to an alternative, healthy model or provider, ensuring uninterrupted service and system resilience. This is crucial for maintaining high availability.
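The failover behaviour described above can be sketched as an ordered list of models plus a cooldown that temporarily skips any model that has just failed. Everything named here (`HealthAwareFailover`, `cooldown_s`, the `send` callable) is hypothetical, and the fixed cooldown is a deliberate simplification of a full circuit breaker.

```python
import time


class HealthAwareFailover:
    def __init__(self, models, cooldown_s=30.0, clock=time.monotonic):
        self.models = list(models)  # ordered by preference
        self.cooldown_s = cooldown_s
        self.clock = clock          # injectable so the logic can be tested
        self.unhealthy_until = {}   # model name -> time it may be retried

    def call(self, send, prompt):
        """send is an assumed callable(model, prompt) that raises on failure."""
        errors = []
        for model in self.models:
            if self.unhealthy_until.get(model, float("-inf")) > self.clock():
                continue  # still cooling down after a recent failure
            try:
                return model, send(model, prompt)
            except Exception as exc:
                # Mark the model unhealthy and move on to the next candidate.
                self.unhealthy_until[model] = self.clock() + self.cooldown_s
                errors.append((model, repr(exc)))
        raise RuntimeError(f"no healthy model answered: {errors}")
```

Skipping a recently failed model avoids paying its timeout on every request while it is down, at the price of sending some traffic to the backup after the primary has already recovered.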

By meticulously addressing latency, accuracy, and scalability, llm routing elevates the overall performance of AI applications, delivering a superior user experience and unlocking the full potential of large language models.

Table 1: Performance Metrics for LLM Routing

To effectively implement and monitor Performance optimization through llm routing, it's crucial to track specific metrics. This table outlines key performance indicators (KPIs) and their relevance.

| Metric | Description | Why it's Important for LLM Routing | Target Range (Illustrative) |
|---|---|---|---|
| Average Latency | The average time taken from sending a request to receiving a full response. | Directly impacts user experience. Routing strategies aim to minimize this by selecting the fastest available model or leveraging caching. | < 1-2 seconds (for interactive apps) |
| P95/P99 Latency | The latency below which 95% / 99% of requests complete. | Reveals tail latency. A high P95/P99 means the slowest 5% or 1% of requests are taking at least this long, even if the average looks good. Routing can address this by avoiding overloaded models. | < 3-5 seconds |
| Throughput (RPS) | Requests per second successfully processed. | Measures the system's capacity to handle load. Routing distributes requests across models/providers to maximize aggregate throughput and prevent bottlenecks. | Varies (e.g., 100-1000+ RPS) |
| Error Rate | Percentage of requests resulting in API errors or failed responses. | Indicates the reliability of models and routing logic. Effective routing implements failover to minimize errors, especially during model outages or rate limits. | < 0.1% |
| Quality Score | A quantitative or qualitative measure of response accuracy, relevance, etc. | Direct indicator of model effectiveness for specific tasks. Routing directs requests to models known to produce high-quality outputs for specific prompt types. Can be derived from human feedback or automated evaluation metrics. | > 80-90% (task-dependent) |
| Cache Hit Rate | Percentage of requests served directly from the cache. | Higher hit rates mean fewer requests sent to LLMs, dramatically reducing latency and costs. Routing intelligently identifies cacheable requests. | > 10-20% (for repetitive queries) |
| Model Utilization | The percentage of time a specific LLM is actively processing requests. | Helps understand if resources are being efficiently used. Routing balances load to prevent under-utilization or over-utilization of expensive models. | 50-80% optimal |
| Fallback Rate | Percentage of requests that required a fallback to an alternative model. | High fallback rates might indicate issues with the primary model or overly aggressive routing rules. Helps identify models needing replacement or re-evaluation. | < 1-2% |
| Average Token Count | Average number of tokens in input/output. | While not a direct performance metric, it heavily influences latency and cost. Routing can select models with better token efficiency or use prompt engineering to reduce token usage without sacrificing quality. | Varies by task |

Monitoring these metrics continuously allows organizations to fine-tune their llm routing strategies, ensuring that their AI applications are always running at peak performance, satisfying user expectations, and maintaining system integrity.
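Several of the metrics in Table 1 can be computed directly from a request log. The sketch below assumes each log entry is a tuple of latency, success flag, fallback flag, and cache-hit flag, and uses the nearest-rank definition of a percentile; both the record layout and the function names are assumptions for illustration.

```python
import math


def percentile(sorted_values, p):
    """Nearest-rank percentile of an ascending list; p is an integer from 1 to 100."""
    if not sorted_values:
        raise ValueError("no samples")
    rank = math.ceil(p * len(sorted_values) / 100)
    return sorted_values[rank - 1]


def summarize(samples):
    """samples: list of (latency_seconds, succeeded, used_fallback, cache_hit)."""
    n = len(samples)
    latencies = sorted(s[0] for s in samples)
    return {
        "avg_latency": sum(latencies) / n,
        "p95_latency": percentile(latencies, 95),
        "p99_latency": percentile(latencies, 99),
        "error_rate": sum(1 for s in samples if not s[1]) / n,
        "fallback_rate": sum(1 for s in samples if s[2]) / n,
        "cache_hit_rate": sum(1 for s in samples if s[3]) / n,
    }
```

Computing these per model and per request type, rather than globally, is what makes them usable as routing inputs.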

The Strategic Advantage: Cost Optimization through LLM Routing

While performance is often the immediate concern, the long-term sustainability and profitability of AI applications hinge significantly on Cost optimization. Large language models, especially the cutting-edge ones, can incur substantial operational expenses due to their complex computational requirements. LLM routing provides a powerful strategic lever to manage and reduce these costs without compromising quality or performance. It transforms expenditure from an uncontrolled outflow into a strategic investment.

1. Dynamic Pricing & Model Selection: Smart Spending

The pricing models for LLMs are diverse and dynamic, typically based on token usage (input and output), context window size, and sometimes even the specific model capabilities. LLM routing empowers organizations to navigate this complex pricing landscape intelligently.

- Choosing the Cheapest Model That Meets Requirements: Not every task requires the most advanced, and thus most expensive, LLM. A simple summarization task, a basic sentiment analysis, or a quick factual lookup might be perfectly handled by a smaller, cheaper model. The routing engine can analyze the complexity and quality requirements of an incoming request. If a prompt falls within the capabilities of a more affordable model, the router will direct it there, reserving premium models for truly complex or critical tasks. This "right-sizing" of model usage is a primary driver of Cost optimization.

- Leveraging Tiered Pricing Structures: Many LLM providers offer tiered pricing, with different costs for different models (e.g., a "fast" model vs. a "large" model, or different generations like GPT-3.5 vs. GPT-4). An llm routing system can be configured to prioritize lower-tier models for routine requests, or whenever top-tier quality is not strictly required, switching to higher-tier models only when necessary (e.g., high-stakes queries or complex reasoning).

- Spot Instance/On-Demand vs. Reserved Capacity: For self-hosted or cloud-managed LLMs, routing can make decisions based on the cost implications of the underlying infrastructure. It can prioritize routing to models running on cheaper spot instances (if available and suitable for the workload) or leverage pre-purchased reserved capacity effectively to avoid expensive on-demand pricing spikes.

- Provider-Specific Discounts and Deals: Over time, providers might offer discounts or introduce new models with more competitive pricing. An agile llm routing system allows quick integration of these new options, enabling immediate cost savings without requiring application-level code changes.

2. Token Management & Cost Efficiency: Reducing the Billable Unit

Tokens are the fundamental unit of billing for most LLMs. Efficient token management is thus central to Cost optimization. LLM routing contributes to this by:

- Routing to Models with Lower Token Costs: As mentioned, different models have different input and output token costs. By routing less complex requests to models with a lower cost per token, the overall expenditure for the same volume of processed text can be significantly reduced.

- Advanced Prompt Engineering within Routing: The routing layer can dynamically modify prompts before sending them to the selected LLM. This could involve:

  - Summarization/Condensation: For very long user inputs that only need a brief LLM interaction, the router could first pass the input through a cheaper, faster LLM to summarize it, then send the condensed version to the primary LLM. This reduces the input token count for the expensive LLM.

  - Extraction: If only a specific piece of information is needed from a large text, the router can use a smaller model to extract that information, then send only the extracted data to the main LLM.

  - Context Truncation: Smartly truncating or selecting the most relevant parts of the historical conversation or document context based on the current query, ensuring only necessary tokens are sent.

- Eliminating Redundant Calls (Caching): As discussed under performance, caching not only reduces latency but also significantly lowers costs by preventing identical requests from being sent to LLMs multiple times, thereby saving on token usage.

- Fallback to Cheaper Models for Retries: If an initial request to an expensive model fails or returns an unsatisfactory response, a smart router can be configured to retry the request with a cheaper, potentially less capable, model as a last resort, avoiding repeated expenditure on the premium model for a failed query.
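Context truncation is the easiest of these techniques to illustrate. The sketch below keeps the most recent messages that fit within a token budget. It assumes messages are dictionaries with a `content` field and, importantly, uses a whitespace word count as a crude stand-in for a real tokenizer; a relevance-based selection would replace the simple recency rule.

```python
def truncate_history(messages, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the newest messages whose combined size fits within max_tokens.

    count_tokens defaults to a word count, which only approximates real
    tokenization; pass a tokenizer-backed function for accurate budgets.
    """
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        size = count_tokens(message["content"])
        if used + size > max_tokens:
            break  # the budget is exhausted, drop this and all older messages
        kept.append(message)
        used += size
    kept.reverse()  # restore chronological order
    return kept
```

Because input tokens are billed on every call, trimming history this way reduces cost on each turn of a long conversation, not just once.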

3. Resource Utilization: Maximize ROI on Infrastructure

For organizations hosting their own LLMs or managing dedicated instances, Cost optimization extends to efficient hardware and software resource utilization.

- Avoiding Over-provisioning: Without intelligent routing, a common defensive strategy is to over-provision resources to handle peak loads, leading to significant idle capacity and wasted expenditure during off-peak times. Routing allows for more precise scaling by dynamically directing traffic, enabling more accurate resource allocation.

- Dynamic Scaling of Infrastructure: By integrating with cloud autoscaling groups or Kubernetes, the llm routing layer can trigger the dynamic provisioning and de-provisioning of LLM inference endpoints. When demand is low, instances can be scaled down, saving compute costs. When demand spikes, instances scale up to maintain performance, ensuring optimal use of expensive GPU or CPU resources.

- Batching Requests: For asynchronous or less latency-sensitive tasks, the routing layer can batch multiple smaller requests into a single, larger request to an LLM. This can reduce the overhead per request and potentially lower overall token costs if the LLM provider offers efficiencies for larger batches.
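The grouping step of request batching can be sketched as follows. The function name, the two limits, and the word-count token estimate are assumptions for this example; whether batching actually lowers cost depends on the provider or serving stack in use.

```python
def batch_requests(prompts, max_batch_size, max_batch_tokens,
                   count_tokens=lambda text: len(text.split())):
    """Group prompts into batches bounded by item count and approximate token count."""
    batches = []
    current = []
    current_tokens = 0
    for prompt in prompts:
        size = count_tokens(prompt)
        batch_is_full = (
            len(current) >= max_batch_size
            or current_tokens + size > max_batch_tokens
        )
        if current and batch_is_full:
            batches.append(current)  # close the current batch
            current, current_tokens = [], 0
        current.append(prompt)
        current_tokens += size
    if current:
        batches.append(current)      # flush the final partial batch
    return batches
```

A prompt that is larger than `max_batch_tokens` on its own still gets a batch to itself here, so oversized inputs need to be handled before this step.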

By strategically implementing these Cost optimization techniques through sophisticated llm routing, businesses can significantly reduce their AI operational expenses, making their LLM-powered applications not just powerful, but also economically sustainable and highly profitable. This turns the burgeoning cost of LLM usage into a controllable, predictable, and strategically managed resource.

Table 2: Cost Comparison of Example LLMs (Hypothetical, Illustrative)

This table illustrates how different LLMs might compare in terms of cost and key characteristics, highlighting the data points an llm routing system would leverage for Cost optimization. Note: Actual prices and capabilities are subject to change by providers.

| Model Name | Provider | Context Window (Tokens) | Input Cost/1M Tokens (USD) | Output Cost/1M Tokens (USD) | Strengths / Best For | Latency (Illustrative) |
|---|---|---|---|---|---|---|
| GPT-4o | OpenAI | 128,000 | $5.00 | $15.00 | Complex reasoning, creativity, multimodal, high quality | Low-Medium |
| GPT-3.5 Turbo | OpenAI | 16,385 | $0.50 | $1.50 | General purpose, cost-effective, good for basic tasks | Very Low |
| Claude 3 Opus | Anthropic | 200,000 | $15.00 | $75.00 | Advanced reasoning, long context, safety, nuanced tasks | Medium-High |
| Claude 3 Sonnet | Anthropic | 200,000 | $3.00 | $15.00 | Balanced, versatile, good for enterprise workloads | Low-Medium |
| Gemini 1.5 Pro | Google | 1,000,000 | $3.50 | $10.50 | Multimodal, extremely large context, strong for analysis | Low-Medium |
| Llama 3 70B (API) | Meta (via third-party) | 8,192 | $0.70 | $0.80 | Open-source flexibility, strong performance, general | Medium |
| Mistral Large | Mistral AI | 32,768 | $8.00 | $24.00 | Advanced reasoning, multilingual, compact and efficient | Low |
| Mixtral 8x7B (API) | Mistral AI | 32,768 | $0.24 | $0.24 | Sparse Mixture of Experts, fast, highly cost-effective | Very Low |

This table is for illustrative purposes. Actual prices and capabilities from providers like OpenAI, Anthropic, Google, and others can change frequently. Open-source models like Llama 3 often have varying API costs depending on the hosting provider.

An llm routing system would use this kind of data to make informed decisions. For example:

- A complex legal document review query requiring a 200,000-token context window might be routed to Claude 3 Opus or Gemini 1.5 Pro despite their higher costs, due to their superior capability in long-context understanding and reasoning.

- A basic customer support query asking for a product's return policy might go to GPT-3.5 Turbo or Mixtral 8x7B for a rapid, low-cost response, as these models are highly efficient for simpler interactions.

- A creative brainstorming session might leverage GPT-4o for its advanced creative capabilities, tolerating a slightly higher cost for superior output quality.

The key is dynamic selection based on the query's demands and the model's profile, always aiming to balance performance, quality, and cost.
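The selection logic behind these examples can be sketched as "the cheapest model that satisfies the constraints". The prices and context windows below are the illustrative figures from Table 2, not current quotes, and the `tier` field is an invented quality ranking added purely for this example.

```python
# Illustrative figures copied from Table 2; "tier" is a hypothetical quality rank.
MODELS = [
    {"name": "GPT-4o", "context": 128_000, "input": 5.00, "output": 15.00, "tier": 3},
    {"name": "GPT-3.5 Turbo", "context": 16_385, "input": 0.50, "output": 1.50, "tier": 1},
    {"name": "Claude 3 Opus", "context": 200_000, "input": 15.00, "output": 75.00, "tier": 3},
    {"name": "Claude 3 Sonnet", "context": 200_000, "input": 3.00, "output": 15.00, "tier": 2},
    {"name": "Gemini 1.5 Pro", "context": 1_000_000, "input": 3.50, "output": 10.50, "tier": 3},
    {"name": "Llama 3 70B (API)", "context": 8_192, "input": 0.70, "output": 0.80, "tier": 2},
    {"name": "Mistral Large", "context": 32_768, "input": 8.00, "output": 24.00, "tier": 3},
    {"name": "Mixtral 8x7B (API)", "context": 32_768, "input": 0.24, "output": 0.24, "tier": 1},
]


def request_cost(model, input_tokens, output_tokens):
    """USD cost of one request; prices are quoted per one million tokens."""
    return (input_tokens * model["input"] + output_tokens * model["output"]) / 1_000_000


def cheapest_capable(models, input_tokens, output_tokens, min_tier):
    """Pick the lowest-cost model that fits the context and meets the quality tier."""
    eligible = [
        m for m in models
        if m["tier"] >= min_tier and m["context"] >= input_tokens + output_tokens
    ]
    if not eligible:
        raise LookupError("no model satisfies the context and quality constraints")
    return min(eligible, key=lambda m: request_cost(m, input_tokens, output_tokens))
```

With these figures, a 180,000-token legal review with 2,000 output tokens costs about $0.65 on Gemini 1.5 Pro versus $2.85 on Claude 3 Opus, while a 200-token support question with a 150-token answer costs $0.000084 on Mixtral 8x7B versus $0.000325 on GPT-3.5 Turbo.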


Advanced LLM Routing Strategies and Techniques

Beyond the basic principles, sophisticated llm routing systems employ advanced strategies and techniques to refine decision-making, adapt to evolving conditions, and unlock even greater levels of Performance optimization and Cost optimization. These methods move beyond simple "if-then" rules to incorporate more intelligent, data-driven approaches.

1. Rule-Based Routing: The Foundation

Rule-based routing forms the fundamental layer of most llm routing systems. It involves defining explicit conditions that determine which LLM to use.

- Keyword/Phrase Detection: If a prompt contains specific keywords (e.g., "generate code," "summarize article," "translate to Spanish"), it can be routed to a model specialized in that domain or language.

- Intent Detection: Using a smaller, faster LLM or a natural language understanding (NLU) model, the routing system can classify the user's intent (e.g., "customer support," "creative writing," "data analysis") and route the request accordingly.

- Sentiment Analysis: For customer service applications, prompts expressing negative sentiment might be routed to a more empathetic LLM or flagged for human review, while positive feedback goes to a standard model.

- Prompt Length/Complexity: Short, simple prompts can be routed to cheaper, faster models (e.g., GPT-3.5 Turbo), while longer, more complex prompts requiring extensive context or reasoning are directed to more powerful, albeit more expensive, models (e.g., GPT-4o, Claude 3 Opus).

While straightforward to implement, rule-based routing can become cumbersome to manage as the number of rules grows, and it lacks the adaptability of more advanced methods.
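As a rough sketch of what such rules can look like in practice, the snippet below checks an ordered list of predicates and falls back to a default. The model labels, the 300-word threshold, and the rule names are all hypothetical placeholders.

```python
# Ordered rules: (rule name, predicate over the prompt, target model label).
# The first matching rule wins, so more specific rules should come first.
RULES = [
    ("code", lambda p: "generate code" in p.lower(), "code-model"),
    ("translate", lambda p: "translate to" in p.lower(), "multilingual-model"),
    ("long", lambda p: len(p.split()) > 300, "large-model"),
]
DEFAULT_MODEL = "small-model"

def route_by_rules(prompt):
    """Return (model label, name of the rule that fired)."""
    for name, predicate, model in RULES:
        if predicate(prompt):
            return model, name
    return DEFAULT_MODEL, "default"
```

Returning the rule name alongside the model makes it easier to audit why a request was routed the way it was, which matters once the rule list grows.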

2. Content-Based Routing: Intelligent Analysis

Content-based routing takes the analysis of the incoming request a step further, often employing machine learning techniques to understand the nuanced characteristics of the input.

- Embedding Similarity: The input prompt can be converted into a vector embedding. This embedding is then compared to embeddings of various "exemplar" prompts associated with different LLMs. The request is routed to the model whose exemplar prompt has the highest similarity. This allows for more flexible routing than strict keyword matching.

- Topic Modeling: Latent Dirichlet Allocation (LDA) or similar techniques can identify the underlying topics within a prompt. Requests related to specific topics (e.g., "finance," "healthcare," "technology") can then be routed to LLMs fine-tuned or known to excel in those domains.

- Entity Recognition: If the prompt mentions specific entities (e.g., "Microsoft," "S&P 500," "Quantum Physics"), the router can infer the domain and select an appropriate specialist LLM.

- Prompt Complexity Scoring: Algorithms can assess the cognitive load required by a prompt (e.g., number of clauses, presence of logical operators, depth of questions) and route it to an LLM with sufficient reasoning capabilities.
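The embedding-similarity idea can be illustrated with a toy example. To stay self-contained, the sketch below uses simple word counts as a stand-in for a real embedding model; the exemplar prompts, model labels, and the `min_score` threshold are assumptions made for illustration.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical exemplar prompts, one per target model.
EXEMPLARS = {
    "code-model": "write a python function to parse a json file",
    "finance-model": "explain the quarterly earnings and stock market outlook",
    "general-model": "tell me a short story about a friendly dragon",
}

def route_by_similarity(prompt, min_score=0.1):
    """Route to the model whose exemplar is most similar to the prompt."""
    query = embed(prompt)
    scores = {model: cosine(query, embed(text)) for model, text in EXEMPLARS.items()}
    best = max(scores, key=scores.get)
    # Fall back to the general model when nothing is similar enough.
    return best if scores[best] >= min_score else "general-model"
```

In a real system, `embed` would call an actual embedding model and each target would typically have several exemplars, but the routing step (nearest exemplar by cosine similarity) stays the same.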

3. User-Context Based Routing: Personalization and History

Leveraging information about the user and their past interactions adds another layer of intelligence to llm routing, enabling personalized and more effective responses.

- User Profile and Preferences: If a user has a preference for a specific tone (e.g., formal, casual) or has a history of engaging with a particular type of content (e.g., technical, creative), the router can select an LLM known to align with those preferences.

- Session History: For multi-turn conversations, the router can maintain a session state. If a user is discussing a technical issue, subsequent turns might continue to be routed to a technical LLM, even if a single turn might appear less technical in isolation.

- A/B Testing Groups: Users can be segmented into different groups for A/B testing, where each group is consistently routed to a specific LLM or routing strategy to evaluate its long-term impact on engagement, satisfaction, and cost.
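Two of these ideas, consistent A/B assignment and session stickiness, are straightforward to sketch. The following is one possible approach under stated assumptions: users are bucketed by hashing their ID, and a hypothetical `classify` callable returns a model label, or `None` when a turn is ambiguous.

```python
import hashlib

def ab_bucket(user_id, experiment, treatment_share=0.5):
    """Deterministically assign a user to 'treatment' or 'control'."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big")  # uniform integer in [0, 2**64)
    return "treatment" if value < treatment_share * 2**64 else "control"

class SessionRouter:
    """Keeps a session on its last confidently chosen model."""

    def __init__(self, classify):
        self._classify = classify  # callable: prompt -> model label, or None if unsure
        self._sticky = {}          # session id -> last chosen model label

    def route(self, session_id, prompt, default="general-model"):
        label = self._classify(prompt)
        if label is not None:
            self._sticky[session_id] = label
        # Ambiguous turns reuse the model chosen earlier in the session.
        return self._sticky.get(session_id, default)
```

Hashing the experiment name together with the user ID keeps each user in the same group for a given experiment while letting group membership differ across experiments.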

4. Reinforcement Learning (RL) for Routing: Adaptive Intelligence

This is perhaps the most advanced and dynamic form of llm routing. RL agents learn the optimal routing policy through trial and error, based on rewards and penalties derived from actual system performance and user feedback.

- Reward Functions: The RL agent receives rewards for positive outcomes (e.g., low latency, high quality score, low cost, successful task completion) and penalties for negative outcomes (e.g., high latency, errors, expensive token usage without justification).

- Exploration vs. Exploitation: The RL agent continuously balances exploiting known good routing paths with exploring new or less-used LLMs and strategies to discover potentially better outcomes.

- Continuous Learning: As new LLMs emerge, pricing changes, or user preferences evolve, the RL agent can adapt its routing policies in real-time, ensuring continuous Performance optimization and Cost optimization.

- Multi-armed Bandit Algorithms: A simpler form of RL, these algorithms are excellent for dynamically choosing the best LLM among a set for a given task, balancing the need to leverage the best-performing models (exploitation) with the need to test new models or strategies (exploration).
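The multi-armed bandit variant is the easiest of these to show concretely. Below is a minimal epsilon-greedy sketch; the class name and the idea of collapsing quality, latency, and cost into a single numeric reward are assumptions for illustration, not a prescribed design.

```python
import random

class EpsilonGreedyRouter:
    """Epsilon-greedy bandit over a fixed set of model labels."""

    def __init__(self, models, epsilon=0.1, seed=None):
        self.models = list(models)
        self.epsilon = epsilon
        self.counts = {m: 0 for m in self.models}
        self.mean_reward = {m: 0.0 for m in self.models}
        self._rng = random.Random(seed)

    def choose(self):
        # Try every model once before trusting the running averages.
        untried = [m for m in self.models if self.counts[m] == 0]
        if untried:
            return untried[0]
        # Explore with probability epsilon, otherwise exploit the best average.
        if self._rng.random() < self.epsilon:
            return self._rng.choice(self.models)
        return max(self.models, key=lambda m: self.mean_reward[m])

    def update(self, model, reward):
        """Fold an observed reward into the model's running mean."""
        self.counts[model] += 1
        n = self.counts[model]
        self.mean_reward[model] += (reward - self.mean_reward[model]) / n
```

A typical loop calls `choose()`, sends the request to that model, scores the outcome, and passes the score to `update()`. How the reward is computed (for example, a quality score minus a cost penalty) is the main design decision and is left open here.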

5. Hybrid Approaches and Ensemble Methods

Often, the most effective llm routing systems combine multiple strategies.

- Layered Routing: A request might first go through a rule-based layer (e.g., "if system message indicates moderation, route to moderation LLM"), then a content-based layer (e.g., "if content is code, route to code LLM"), and finally an RL layer to fine-tune the decision based on real-time metrics.

- Cascading Fallback: If the primary LLM fails or is too expensive for a particular task, the request can automatically cascade down a predefined list of alternative models, moving from high-quality/high-cost to lower-quality/lower-cost options.

- Parallel Queries & Consensus: For critical tasks, a request can be sent to multiple LLMs simultaneously. The routing system then aggregates the responses, perhaps using a smaller LLM to identify the best response, resolve conflicts, or synthesize a final answer. This enhances robustness and quality but increases cost and potentially latency.

- Prompt Engineering within the Routing Layer: The routing layer can dynamically adapt the prompt format or add specific instructions based on the selected LLM. For instance, if routing to a model known to be sensitive to tone, the router might prepend an instruction to maintain a neutral tone.
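Cascading fallback is simple enough to sketch directly. In the example below, the chain is an ordered list of hypothetical `(name, cost_estimate, call)` entries, and `ModelError` stands in for whatever exception a real client library would raise.

```python
class ModelError(Exception):
    """Placeholder for provider failures such as timeouts or rate limits."""

def call_with_fallback(prompt, chain, max_cost=None):
    """Try each model in order and return (model name, response) from the first success.

    chain: list of (name, cost_estimate, call), ordered from preferred to last resort.
    max_cost: optional budget; models with a higher cost estimate are skipped.
    """
    errors = []
    for name, cost, call in chain:
        if max_cost is not None and cost > max_cost:
            errors.append((name, "over budget"))
            continue
        try:
            return name, call(prompt)
        except ModelError as exc:
            errors.append((name, str(exc)))
    raise ModelError(f"all models failed: {errors}")
```

Collecting the reasons each model was skipped or failed gives the monitoring layer something concrete to log when the whole chain is exhausted.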

By mastering these advanced llm routing strategies, developers and organizations can create highly intelligent, adaptable, and efficient AI systems that truly deliver on the promise of generative AI, maximizing both performance and cost-effectiveness. The future of AI application development lies in this sophisticated orchestration.

Implementing LLM Routing: Tools and Best Practices

Bringing llm routing to life requires careful consideration of tools, architecture, and operational best practices. The decision often boils down to building a custom solution in-house versus leveraging a specialized platform.

Build vs. Buy: Weighing the Options

Building a Custom LLM Routing Solution:

- Pros:

  - Maximum Customization: Tailor every aspect to your exact needs, integrating with unique internal systems and proprietary models.

  - Full Control: Complete ownership of the logic, data flow, and security posture.

  - Potentially Lower Variable Costs (Long-term): If you have the engineering talent and infrastructure, you might avoid third-party service fees for high volumes.

- Cons:

  - High Development Overhead: Requires significant engineering resources, time, and expertise in AI, distributed systems, and API management.

  - Ongoing Maintenance: Responsible for monitoring, updating integrations, handling LLM API changes, and ensuring scalability and reliability.

  - Slower Time to Market: Building from scratch takes time, delaying the benefits of Performance optimization and Cost optimization.

  - Risk of Reinventing the Wheel: Many common routing problems have already been solved by specialized platforms.

Buying or Using a Specialized LLM Routing Platform:

- Pros:

  - Faster Time to Value: Quickly integrate and start benefiting from intelligent routing.

  - Reduced Engineering Overhead: Offload much of the integration, monitoring, and maintenance burden to the platform provider.

  - Access to Advanced Features: Platforms often come with sophisticated routing algorithms, analytics, caching, and fallback mechanisms built-in.

  - Scalability and Reliability: Built to handle high loads and ensure high availability, with expert teams managing the underlying infrastructure.

  - Unified API: A single point of access to multiple LLMs simplifies development.

- Cons:

  - Platform Lock-in (to some extent): While reducing LLM vendor lock-in, you introduce a dependency on the routing platform.

  - Pricing: Incurs recurring service fees, which may become substantial at very high volumes.

  - Less Customization: May have limitations on how deeply you can customize specific routing logic or integrate niche models.

For most businesses, especially those focusing on core product development rather than AI infrastructure, a specialized platform offers a compelling balance of speed, capability, and cost-effectiveness.

Key Features to Look For in an LLM Routing Platform

When evaluating platforms, consider these essential capabilities:

- Unified API Interface: A single, consistent API endpoint (ideally OpenAI-compatible) that abstracts away the complexity of integrating with various LLM providers.

- Broad Model and Provider Support: The ability to connect to a wide range of LLMs from different providers (OpenAI, Anthropic, Google, open-source models) and easily add new ones as they emerge.

- Flexible Routing Rules: Support for various routing strategies, including rule-based, content-based, context-based, and ideally, dynamic learning (e.g., A/B testing, multi-armed bandit).

- Real-time Monitoring and Analytics: Comprehensive dashboards to track key metrics like latency, throughput, error rates, token usage, and costs across all models and routing paths. This is vital for continuous Performance optimization and Cost optimization.

- Caching Capabilities: Intelligent caching to reduce redundant LLM calls, thereby improving latency and saving costs.

- Fallback and Retry Mechanisms: Robust logic to automatically switch to alternative models or retry requests in case of errors, rate limits, or poor performance.

- Rate Limiting and Quota Management: Tools to manage API usage across different LLMs and prevent unexpected cost spikes or service interruptions.

- Scalability and Reliability: The platform itself should be highly available, fault-tolerant, and capable of scaling to meet your application's demands.

- Security and Data Privacy: Robust security measures, data encryption, compliance certifications, and clear policies on data handling.

- Developer-Friendly Tools: SDKs, clear documentation, and easy-to-use interfaces that streamline the integration process.

Introducing XRoute.AI: A Unified Solution for LLM Routing

Among the cutting-edge solutions emerging in this space, XRoute.AI stands out as a powerful, developer-centric platform specifically designed to master llm routing and unlock the full potential of your AI applications. It embodies many of the essential features listed above, offering a robust foundation for both Performance optimization and Cost optimization.

XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers, enabling seamless development of AI-driven applications, chatbots, and automated workflows. With a focus on low latency AI, cost-effective AI, and developer-friendly tools, XRoute.AI empowers users to build intelligent solutions without the complexity of managing multiple API connections. The platform’s high throughput, scalability, and flexible pricing model make it an ideal choice for projects of all sizes, from startups to enterprise-level applications. Its comprehensive suite of features addresses the core challenges of LLM proliferation, allowing you to focus on building innovative applications rather than managing complex API integrations.

Best Practices for Implementing LLM Routing

Regardless of whether you build or buy, adhere to these best practices for a successful llm routing implementation:

- Define Clear KPIs: Before starting, clearly define what "optimized performance" and "cost-effective" mean for your application. Establish measurable KPIs for latency, accuracy, throughput, and cost per token/request.

- Start Small and Iterate: Don't try to implement the most complex routing strategy on day one. Begin with simpler rules, monitor their impact, and gradually introduce more sophisticated logic.

- Continuous Monitoring and Alerting: Implement robust monitoring for all LLM calls and routing decisions. Set up alerts for anomalies in latency, error rates, or cost spikes. This proactive approach is crucial for maintaining Performance optimization and catching issues early.

- A/B Test Routing Strategies: Routinely test different routing algorithms or model combinations with a subset of your traffic to identify superior strategies in a controlled environment.

- Prioritize Security and Data Privacy: Ensure your routing layer complies with all relevant data privacy regulations (e.g., GDPR, CCPA). Implement strong authentication, authorization, and encryption for all data flowing through the system.

- Implement Robust Fallback Strategies: Always have a plan B. Define clear fallback models for when primary models fail, hit rate limits, or return unsatisfactory responses. Consider implementing human-in-the-loop mechanisms for critical failures.

- Optimize Prompt Engineering: Even with routing, well-crafted prompts are essential. The routing layer can dynamically modify prompts based on the chosen LLM, but the foundational prompt should be designed for clarity and efficiency.

- Stay Informed on LLM Evolution: The LLM landscape is constantly changing. Regularly review new models, pricing updates, and performance benchmarks to keep your routing strategy current and competitive.
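The monitoring and alerting practice above can be made concrete with a small rolling-window tracker. This is only a sketch: the class name, window size, and thresholds are hypothetical, and a production setup would more likely feed these numbers into an existing metrics system.

```python
from collections import defaultdict, deque

class RoutingMonitor:
    """Tracks recent latency and errors per model and flags anomalies."""

    def __init__(self, window=100, max_avg_latency_s=2.0, max_error_rate=0.1):
        self.max_avg_latency_s = max_avg_latency_s
        self.max_error_rate = max_error_rate
        # Each model keeps only its most recent `window` samples.
        self._samples = defaultdict(lambda: deque(maxlen=window))

    def record(self, model, latency_s, ok):
        """Store the latency and success flag of one completed call."""
        self._samples[model].append((latency_s, ok))

    def alerts(self, model):
        """Return the list of alert names currently triggered for a model."""
        samples = self._samples.get(model)
        if not samples:
            return []
        avg_latency = sum(s[0] for s in samples) / len(samples)
        error_rate = sum(1 for s in samples if not s[1]) / len(samples)
        found = []
        if avg_latency > self.max_avg_latency_s:
            found.append("high_latency")
        if error_rate > self.max_error_rate:
            found.append("high_error_rate")
        return found
```

The same alert signals can double as routing inputs, for example by temporarily deprioritizing a model while it reports `high_latency`.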

By following these guidelines and leveraging powerful platforms like XRoute.AI, organizations can effectively implement and manage llm routing strategies that drive significant Performance optimization and Cost optimization, ensuring their AI applications are robust, scalable, and economically viable in the long run.

Real-World Applications and Case Studies (Conceptual)

The power of llm routing truly shines when applied to real-world scenarios, transforming theoretical benefits into tangible improvements. Let's explore some conceptual case studies demonstrating how intelligent routing can optimize diverse AI applications.

1. Customer Support Chatbots: Enhancing Experience, Reducing Costs

Challenge: A large e-commerce company operates a customer support chatbot. Queries range from simple "What's my order status?" to complex "My product arrived damaged, how do I initiate a return and get a refund, and what's your policy on perishable goods?" Using a single, powerful LLM for all queries is expensive and overkill for simple tasks, while a cheaper, less capable LLM struggles with complexity. High latency on complex queries frustrates customers.

LLM Routing Solution:

- Intent-Based Routing: An initial, fast, and inexpensive LLM (like GPT-3.5 Turbo or Mixtral 8x7B) or a specialized NLU model first classifies the customer's intent.

  - Simple Queries (Order Status, FAQ): Routed to the cheapest available LLM (e.g., GPT-3.5 Turbo, or a highly fine-tuned, smaller model) or a knowledge base lookup for immediate, low-latency, cost-optimized responses.

  - Complex Queries (Damaged product, refunds, technical issues): Routed to a more powerful, accurate, and context-aware LLM (e.g., GPT-4o, Claude 3 Sonnet).

- Sentiment Analysis: If sentiment is detected as highly negative or frustrated, the query might be routed to a premium LLM capable of more empathetic responses, or even directly to a human agent queue, reducing churn.

- Latency-Based Fallback: If the primary LLM for complex queries is experiencing high latency due to peak load, the routing system can automatically fall back to a slightly less capable but faster alternative to maintain response times, or offer the customer an option for email support.

- Caching: Common simple queries and their answers are cached, providing instant responses and significantly reducing LLM calls and costs.
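The caching step in this scenario might look like the sketch below, which normalizes prompts so that near-identical questions share one cache entry. The normalization rules, the LRU eviction policy, and all names are assumptions chosen for illustration.

```python
import re
from collections import OrderedDict

def normalize(prompt):
    """Lowercase, drop punctuation, and collapse whitespace."""
    no_punct = re.sub(r"[^\w\s]", "", prompt.lower())
    return re.sub(r"\s+", " ", no_punct).strip()

class ResponseCache:
    """Small LRU cache in front of an LLM call."""

    def __init__(self, max_entries=1000):
        self.max_entries = max_entries
        self._entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_call(self, prompt, call_model):
        key = normalize(prompt)
        if key in self._entries:
            self._entries.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._entries[key]
        self.misses += 1
        answer = call_model(prompt)
        self._entries[key] = answer
        if len(self._entries) > self.max_entries:
            self._entries.popitem(last=False)  # evict the least recently used entry
        return answer
```

This kind of cache suits answers that are the same for everyone, such as a return policy. User-specific answers like order status would need the user or order ID in the key, or should bypass the cache entirely.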

Outcome:

- Performance optimization: Faster responses for simple queries, higher accuracy for complex ones, leading to improved customer satisfaction.

- Cost optimization: Significant reduction in overall LLM API costs by minimizing calls to expensive models for trivial tasks. Efficient resource utilization across the board.

2. Content Generation Platforms: Balancing Creativity, Speed, and Budget

Challenge: A marketing agency uses an AI platform to generate various content types: short social media posts, lengthy blog articles, detailed product descriptions, and creative ad copy. Each task has different requirements for creativity, factual accuracy, and length. Using one LLM for all tasks either leads to mediocre results or excessive costs.

LLM Routing Solution:

- Content Type Routing:

  - Short Social Media Posts/Headlines: Routed to a fast, cost-effective model known for concise output (e.g., GPT-3.5 Turbo).

  - Long Blog Articles/Technical Content: Routed to an LLM with a large context window and strong factual reasoning, potentially with enhanced research capabilities (e.g., Gemini 1.5 Pro, Claude 3 Sonnet).

  - Creative Ad Copy/Storytelling: Routed to LLMs known for their creativity and stylistic flexibility (e.g., GPT-4o, Claude 3 Opus).

  - Fact-Checking/Summarization: Could be handled by a specialized model or a different routing path for verification.

- Prompt Complexity Routing: If a prompt requires deep analysis of provided source material, it's routed to a model with advanced capabilities. If it's a simple rephrasing, a cheaper model is used.

- User Tier-Based Routing: Premium users might have their requests prioritized or always routed to top-tier models for guaranteed high quality, while standard users might be served by more cost-optimized routing strategies.

Outcome:

- Performance optimization: Higher quality and more relevant content generation across all types. Faster turnaround for less complex content.

- Cost optimization: Reduced expenditure on premium LLMs by strategically allocating tasks to the most appropriate and cost-efficient models.

3. Code Generation and Development Tools: Precision and Efficiency

Challenge: A software development platform integrates AI for code generation, bug fixing, and documentation. Different programming languages, frameworks, and complexity levels exist. A general-purpose LLM might make mistakes or be slow, while managing multiple specialized code models manually is cumbersome.

LLM Routing Solution:

- Language/Framework Detection: The routing system analyzes the user's input (e.g., "Python function for data processing," "React component," "SQL query") to detect the programming language and framework.

- Specialized Model Routing:

  - Python Code Generation/Refactoring: Routed to a code LLM highly proficient in Python.

  - JavaScript/TypeScript (Frontend): Routed to an LLM optimized for web development languages.

  - Database Queries (SQL): Routed to an LLM strong in structured query languages.

- Context Window Optimization: For large codebases or complex refactoring, the router ensures the request goes to an LLM with a sufficient context window (e.g., Gemini 1.5 Pro, or a specialized code LLM with a large context).

- Error-Based Fallback: If a generated code snippet fails to compile or fails its unit tests, the routing system can automatically retry the request with a different, perhaps more powerful, code LLM or trigger a deeper diagnostic process.
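The error-based fallback in this scenario can be sketched for Python output using the standard library's `ast` module as a cheap "does it compile" check. The generator callables and their ordering are hypothetical, and a syntax check is only a first gate; running unit tests against the result would be a separate, stricter step.

```python
import ast

def is_valid_python(source):
    """Return True if the source parses as Python, False on a syntax error."""
    try:
        ast.parse(source)
        return True
    except SyntaxError:
        return False

def generate_with_retry(prompt, generators):
    """Try code generators in order until one returns syntactically valid Python.

    generators: ordered list of (name, callable prompt -> source code string).
    Returns (model name, source, names attempted), or (None, None, names attempted).
    """
    attempts = []
    for name, generate in generators:
        source = generate(prompt)
        attempts.append(name)
        if is_valid_python(source):
            return name, source, attempts
    return None, None, attempts
```

Ordering the generators from cheapest to most capable means the more expensive model is only paid for when the cheaper one produces unusable output.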

Outcome:

- Performance optimization: More accurate and relevant code generation, faster bug fixing, improved developer productivity.

- Cost optimization: By routing code requests to models specifically trained on code, overall token usage can be more efficient, and general-purpose LLMs are reserved for tasks they excel at, minimizing wasteful expenditure.

These conceptual case studies underscore how llm routing moves AI applications beyond a one-size-fits-all approach to a dynamic, intelligent, and highly optimized ecosystem. It's the strategic layer that makes sophisticated AI not just possible, but truly practical and economically viable.

Conclusion

The era of large language models has fundamentally reshaped the technological landscape, offering unprecedented opportunities for innovation and efficiency. However, realizing the full promise of these powerful AI tools requires a deliberate and sophisticated approach to their management and utilization. As we've explored throughout this guide, llm routing stands as the indispensable strategy for navigating the complexities of the LLM ecosystem, transforming potential chaos into structured, optimized performance.

By intelligently directing AI requests to the most appropriate models, llm routing acts as the central nervous system of modern AI applications. It is the critical enabler of Performance optimization, ensuring that applications are fast, accurate, and scalable enough to meet the demanding expectations of users and businesses alike. From minimizing latency in real-time interactions and enhancing the quality of generated content to bolstering throughput under heavy loads, intelligent routing ensures that every AI interaction is executed with precision and efficiency.

Equally vital, llm routing is the cornerstone of robust Cost optimization. In a world where token usage can quickly escalate into substantial operational expenses, dynamic model selection, smart token management, and efficient resource utilization become paramount. By strategically choosing the most cost-effective LLM for each task, leveraging tiered pricing, and implementing intelligent caching, businesses can significantly reduce their AI expenditure without compromising on quality or performance. This translates directly into improved ROI and sustainable growth for AI initiatives.

The ability to seamlessly integrate new models, adapt to evolving pricing structures, and maintain uninterrupted service through resilient fallback mechanisms provides an unparalleled level of agility and future-proofing. Platforms like XRoute.AI exemplify this paradigm shift, offering developers a unified, OpenAI-compatible API to effortlessly manage over 60 models from more than 20 providers, focusing on low latency AI and cost-effective AI. Such solutions empower organizations to abstract away the underlying complexity, allowing them to focus on building truly intelligent, impactful applications.

As the LLM landscape continues to expand and evolve, the strategic importance of llm routing will only grow. It is not merely a technical solution but a foundational philosophy for building adaptable, resilient, and economically viable AI systems. By mastering llm routing, developers and businesses are not just optimizing their AI performance and costs; they are unlocking the true, scalable potential of artificial intelligence, paving the way for a future where AI-driven innovation is both limitless and deeply practical.

Frequently Asked Questions (FAQ)

Q1: What exactly is LLM routing and why is it so important now?

A1: LLM routing is an intelligent system that dynamically directs incoming AI requests to the most suitable large language model (LLM) from a pool of available models. It's crucial now because the LLM landscape is vast and diverse, with each model having different strengths, weaknesses, and pricing. Routing helps optimize for factors like speed, accuracy, and cost, ensuring you use the "right tool for the right job" rather than a one-size-fits-all approach. This directly leads to Performance optimization and Cost optimization.

Q2: How does LLM routing contribute to Performance optimization?

A2: LLM routing enhances performance by reducing latency (e.g., routing to the fastest available model or using caching), improving accuracy (e.g., sending requests to specialized models for specific tasks), and increasing throughput (e.g., load balancing across multiple models). It ensures your AI applications respond quickly and accurately, providing a better user experience.

Q3: Can LLM routing significantly reduce my AI operational costs?

A3: Absolutely. Cost optimization is one of the primary benefits. LLM routing achieves this by dynamically selecting the cheapest model that meets the required quality and performance standards for a given task. It also leverages techniques like intelligent caching to reduce redundant calls, optimizes token usage by routing simple requests to models with lower token costs, and helps manage overall resource allocation efficiently, ultimately leading to substantial savings.

Q4: Is it better to build my own LLM routing solution or use a platform?

A4: The "build vs. buy" decision depends on your resources and specific needs. Building a custom solution offers maximum control and customization but requires significant engineering effort and ongoing maintenance. Using a specialized platform, like XRoute.AI, provides faster time to value, reduces development overhead, offers advanced features out-of-the-box, and ensures scalability and reliability, often proving more cost-effective in the long run for most organizations.

Q5: What are some advanced strategies used in LLM routing?

A5: Advanced llm routing strategies go beyond simple rules. They include content-based routing (analyzing prompt content via embeddings or topic modeling), user-context based routing (personalizing model selection based on user history or preferences), and even reinforcement learning (RL) based routing, where an AI system learns optimal routing policies through continuous feedback and adaptation, ensuring ongoing Performance optimization and Cost optimization. Many systems also use hybrid approaches, combining multiple strategies for robustness.

🚀 You can connect to large language models through XRoute.AI in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.

2. Upon registration, explore the platform.

3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
--header "Authorization: Bearer $apikey" \
--header 'Content-Type: application/json' \
--data '{
    "model": "gpt-5",
    "messages": [
        {
            "content": "Your text prompt here",
            "role": "user"
        }
    ]
}'

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.
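For applications written in Python, the same request can be assembled with the standard library alone. The sketch below reuses the endpoint, model name, and payload from the curl sample; the function name is hypothetical, and the response shape mentioned in the comments is an assumption based on the endpoint being described as OpenAI-compatible.

```python
import json
import urllib.request

# Endpoint taken from the curl sample above.
API_URL = "https://api.xroute.ai/openai/v1/chat/completions"

def build_chat_request(api_key, prompt, model="gpt-5"):
    """Build (but do not send) a chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

# Sending the request needs network access and a valid key, for example:
#
#   with urllib.request.urlopen(build_chat_request(api_key, "Hello")) as resp:
#       reply = json.load(resp)
#
# An OpenAI-compatible response would usually carry the text under
# reply["choices"][0]["message"]["content"]; check the platform docs to confirm.
```

Keeping request construction separate from sending makes the payload easy to inspect and test without calling the API.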

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.