o1 mini vs 4o: Which Is Right For You?

The artificial intelligence landscape is evolving at an unprecedented pace, marked by a fascinating dichotomy: the rise of increasingly powerful, general-purpose models capable of multimodal understanding, and the simultaneous emergence of highly specialized, ultra-efficient "mini" models designed for specific tasks. This dual trajectory presents both incredible opportunities and complex decision-making challenges for developers, businesses, and researchers. As these advanced systems become more accessible, understanding their distinct strengths and ideal applications is crucial for harnessing their full potential.

In this comprehensive exploration, we delve into the core differences and unique advantages of two prominent paradigms shaping the future of AI: GPT-4o, OpenAI's latest flagship "omni-model," and the conceptual "o1 mini" – a representative archetype of the new generation of compact, hyper-efficient AI models. While GPT-4o pushes the boundaries of multimodal interaction and general intelligence, models embodying the "o1 mini" philosophy prioritize speed, cost-effectiveness, and resource efficiency for targeted applications. This detailed comparison, encompassing everything from architectural design to real-world deployment scenarios, aims to provide clarity and guidance, helping you determine which of these innovative AI solutions is the optimal choice for your specific needs. The conversation naturally extends to considering the potential impact of future iterations like a dedicated "GPT-4o Mini," further complicating the choice but also offering new avenues for optimization.

Understanding GPT-4o: The Omni-Model Paradigm Shift

OpenAI’s GPT-4o (the "o" stands for "omni") represents a significant leap forward in the quest for truly versatile artificial intelligence. Unveiled as a single, natively multimodal model, GPT-4o is engineered to process and generate content across text, audio, and visual domains seamlessly and in real time. This departure from previous architectures, where separate models were often chained together for multimodal tasks, positions GPT-4o as a cornerstone for building highly interactive and context-aware AI applications.

What is GPT-4o? Its Multimodal Nature in Depth

At its core, GPT-4o is a transformer-based neural network, but with a crucial distinction: it was trained end-to-end across text, audio, and image data. Instead of chaining separate models together (as in the earlier ChatGPT Voice Mode pipeline, which transcribed audio to text, passed that text to GPT-3.5 or GPT-4, and then converted the reply back to speech), a single network handles all inputs and outputs. When a user speaks to GPT-4o, the model processes the audio itself rather than only a transcription, so cues such as tone and background sound are not discarded along the way. Similarly, when presented with an image, it ingests the visual data directly. This native multimodal understanding is what allows for its speed and fluency across different forms of communication.

The "omni" aspect is not just about input; it also extends to output. GPT-4o can respond with natural language text or with speech that carries emotive qualities, whether the prompt was typed, spoken, or shown as an image. This cohesive integration of sensory information and generation capabilities opens up a vast new array of possibilities for human-computer interaction, making the AI feel more present and intuitive.

Key Features and Capabilities: Beyond Traditional Language Models

The advancements in GPT-4o manifest in several groundbreaking features:

- Real-Time Multimodal Interaction: Perhaps the most striking capability is its ability to engage in real-time voice conversations, detecting emotions, pauses, and nuances in human speech, and responding with remarkably natural intonation. This goes far beyond simple voice assistants, aspiring to a conversational fluency akin to human interaction. For instance, a user could show the model a live video feed of a complex problem, describe the situation verbally, and receive instant, contextually rich advice, all within seconds. This has profound implications for customer support, personal tutoring, and accessibility tools.

- Enhanced Vision Capabilities: GPT-4o can interpret complex visual information with high accuracy. This includes not just object recognition but also understanding spatial relationships, text within images, charts, graphs, and even subtle emotional cues from faces. It can analyze intricate diagrams to explain their components, or understand a user's drawing to provide creative suggestions. This makes it invaluable for tasks ranging from medical image analysis to architectural design feedback.

- Superior Text Performance: Despite its multimodal focus, GPT-4o maintains, and in many cases, surpasses, the high text-based performance of its predecessors. It excels in complex reasoning, code generation, creative writing, summarization, and translation, handling long contexts and nuanced queries with remarkable proficiency. Its ability to switch effortlessly between generating code based on a verbal description and debugging it by analyzing a screenshot of an error message exemplifies its power.

- Speed and Efficiency: Compared to previous multimodal pipelines, GPT-4o significantly reduces latency, especially for audio interactions. This is a direct benefit of its natively multimodal architecture, which eliminates the conversion steps that previously slowed down cross-modal understanding. For voice interactions, OpenAI reports that it can respond in as little as 232 milliseconds, with an average of 320 milliseconds, which is similar to human response time in conversation.

- Broad Language Support: OpenAI has also emphasized GPT-4o's improved performance and speed across a wider range of languages, making it a more globally accessible tool for communication and content generation. This is crucial for international businesses and multicultural applications.
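As a rough illustration of the combined text-and-image input described in the list above, the sketch below builds a Chat Completions request that pairs a question with an image. The helper names `build_vision_messages` and `ask_gpt4o`, the example URL, and the prompt are illustrative assumptions. The message shape (a `content` list mixing `text` and `image_url` parts) follows OpenAI's Chat Completions format, and the network call sits inside a function so nothing is sent unless you call it with a valid API key.

```python
from typing import Any, Dict, List


def build_vision_messages(question: str, image_url: str) -> List[Dict[str, Any]]:
    """Return one user message that combines a text part and an image part."""
    return [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url", "image_url": {"url": image_url}},
            ],
        }
    ]


def ask_gpt4o(question: str, image_url: str) -> str:
    """Send the request. Needs the `openai` package and OPENAI_API_KEY set."""
    from openai import OpenAI  # imported lazily so the sketch loads without it

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model="gpt-4o",
        messages=build_vision_messages(question, image_url),
    )
    return response.choices[0].message.content or ""


# Hypothetical inputs, for illustration only; no request is sent here.
messages = build_vision_messages(
    "What error is shown in this screenshot?",
    "https://example.com/screenshot.png",
)
print(messages[0]["content"][0]["text"])
```

Keeping payload construction separate from the call makes the request easy to inspect and unit-test before any tokens are billed.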

Use Cases: Where GPT-4o Shines

GPT-4o's versatility makes it suitable for a wide array of demanding applications:

- Advanced Customer Service and Support: Imagine AI agents that can not only understand spoken queries but also interpret screenshots of user interfaces or product issues, providing highly personalized and effective support in real time.

- Interactive Education and Tutoring: A student struggling with a math problem could verbally explain their confusion, show their handwritten work, and receive immediate, empathetic, and visually guided explanations from an AI tutor.

- Creative Content Generation: From brainstorming marketing campaigns based on visual mood boards to generating scripts for videos and even composing music, GPT-4o can act as a powerful creative partner.

- Healthcare and Accessibility: Interpreting medical images, assisting visually impaired users by describing their surroundings, or providing real-time language translation in critical scenarios.

- Complex Problem-Solving and R&D: Aiding researchers in interpreting complex data visualizations, debugging code by examining error messages and code snippets simultaneously, or designing experiments.

- Personal AI Assistants: Developing highly sophisticated personal assistants that can interact with users through speech, understand their environment via camera input, and manage tasks across multiple modalities.

Strengths: Versatility, Cutting-Edge Performance, Multimodal Fluency

The primary strengths of GPT-4o lie in its unparalleled versatility, its ability to maintain cutting-edge performance across diverse tasks, and its fluid multimodal fluency. It represents the pinnacle of general-purpose AI, capable of handling a vast spectrum of challenges that require understanding and generating human-like responses across various sensory inputs. Its unified architecture not only speeds up processing but also leads to more coherent and contextually rich outputs.

Limitations: Resource Intensity, Potential Cost, Latency for Certain Applications

Despite its revolutionary capabilities, GPT-4o is not without its limitations. Being a large, complex model, it inherently demands significant computational resources for training and inference. While OpenAI has worked to make it more efficient than previous models, its operational costs can still be substantial, especially for high-volume applications or those with extremely tight budget constraints. Furthermore, while its real-time audio response is impressive, there might still be specific, highly latency-sensitive edge computing scenarios where even GPT-4o's speed isn't sufficient without local optimization. Its vast generalization might also mean it's "overqualified" and thus inefficient for highly specialized, narrow tasks that could be handled by a much smaller model.

Introducing the "o1 mini" Philosophy: The Rise of Specialized Efficiency

In stark contrast to the expansive, general-purpose nature of GPT-4o, the "o1 mini" stands for a trend in AI development that is becoming increasingly tangible: the creation of compact, highly optimized models tailored for specific tasks where efficiency, speed, and cost are paramount. While "o1 mini" itself is a conceptual placeholder in this discussion, it embodies the characteristics of many emerging, smaller-footprint AI models that are gaining traction across various industries. These models are not designed to be omni-capable but rather to excel within a defined scope, delivering strong performance with minimal resource expenditure. This category also naturally includes the anticipated "GPT-4o Mini" or similar compact versions of larger models, aimed at striking a balance between capability and efficiency.

Defining "o1 mini": A Representative Model for the "Mini" AI Trend

"o1 mini" can be thought of as a hypothetical model that exemplifies the design principles of the "mini" AI movement. This movement is driven by the need for AI solutions that can operate effectively in resource-constrained environments, provide instant responses, and be deployed economically at scale. These models are typically characterized by:

- Reduced Parameter Count: Significantly fewer parameters than large foundation models, leading to smaller model sizes.

- Optimized Architectures: Often employ novel architectural designs, quantization techniques, pruning, or knowledge distillation to shrink their footprint without drastically sacrificing performance on their target tasks.

- Domain-Specific Training: Frequently fine-tuned or pre-trained on highly specific datasets relevant to their intended application, allowing them to achieve high accuracy in a narrow domain with fewer resources.

- Focus on Inference Efficiency: Engineered for extremely fast inference times and high throughput, making them ideal for real-time applications.
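To make one of the footprint-reduction techniques named above concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is not how any particular "mini" model is built; the function names and the weight values are made up for illustration. Each weight is stored as an integer in [-127, 127] plus one shared floating-point scale, which cuts storage to roughly a quarter of 32-bit floats at the price of a small rounding error.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.02, 1.0]  # made-up values, not from a real model
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The per-weight rounding error is bounded by half of the scale.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Production toolchains typically add per-channel scales and calibration data, but the trade-off is the same: fewer bits per weight in exchange for bounded approximation error.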

The "o1 mini" isn't one specific product but rather a banner under which many innovative, smaller AI models operate, each aiming to provide focused intelligence efficiently. This could include lightweight language models, vision models optimized for edge devices, or specialized speech processing units.

Core Design Principles: Balancing Power with Practicality

The philosophy behind "o1 mini" models revolves around a set of guiding principles:

- Efficiency as a Priority: Every design choice, from architecture to training data, is made with the goal of maximizing performance per computational unit. This means lower energy consumption, reduced memory footprint, and faster execution.

- Speed and Low Latency: For applications requiring instantaneous responses, such as real-time anomaly detection, in-car voice commands, or quick chatbot interactions, low latency is non-negotiable. "o1 mini" models are built from the ground up to minimize processing delays.

- Cost-Effectiveness: A smaller model requires less computational power for inference, which directly translates to lower cloud computing costs, reduced energy bills, and more affordable deployment at scale. This makes AI accessible to a broader range of businesses and use cases where the economic viability of larger models might be prohibitive.

- Specialization and Task-Specificity: Instead of aiming for general intelligence, "o1 mini" models are often purpose-built. They might be exceptionally good at sentiment analysis for short texts, image classification for a specific set of objects, or generating concise summaries of particular document types. Because their capacity is spent entirely on one domain, they can match or even outperform larger, general models on their niche tasks, although this has to be verified per task rather than assumed.

- Edge AI Suitability: Their compact size and low resource demands make "o1 mini" models ideal for deployment on edge devices – smartphones, IoT sensors, embedded systems, and even smart appliances. This enables offline capabilities, enhances privacy by keeping data local, and reduces reliance on cloud connectivity.

Potential Use Cases: Where "o1 mini" Models Make a Difference

The application spectrum for "o1 mini" models is vast and growing, particularly in areas where resource constraints or real-time demands are critical:

- Embedded Systems and IoT Devices: Enabling smart home devices to understand basic voice commands locally, performing on-device image recognition for security cameras, or facilitating predictive maintenance on industrial equipment without constant cloud connectivity.

- High-Volume, Low-Latency API Calls: Powering millions of daily interactions for basic chatbots, rapid content moderation (tagging, classification), real-time sentiment analysis in customer feedback systems, or instantly summarizing short queries for search engines.

- Resource-Constrained Environments: Deploying AI on older hardware, in regions with limited internet infrastructure, or in situations where energy efficiency is a primary concern.

- Cost-Sensitive Applications: Businesses looking to integrate AI into their products or services without incurring the high operational costs associated with large language models, especially for routine, high-frequency tasks.

- Mobile Applications: Implementing intelligent features directly on smartphones, such as personalized recommendations, local language processing, or on-device translation, reducing dependence on cloud APIs.

- Autonomous Systems: Providing quick, local decision-making capabilities for drones, robots, or autonomous vehicles for tasks like object avoidance or path planning in specific scenarios.

Strengths: Unparalleled Efficiency, Speed, Cost Savings, Niche Expertise

The principal strengths of "o1 mini" models lie in their remarkable efficiency, delivering lightning-fast inference speeds, substantial cost savings, and unparalleled expertise within their specialized domains. They are the workhorses of AI, providing reliable and economical intelligence for well-defined problems, enabling broad AI adoption where general-purpose models might be overkill or too expensive.

Limitations: Narrower Scope, Less Generalizable, Might Lack Multimodal Breadth

The trade-off for their efficiency and specialization is a narrower scope of application. "o1 mini" models are inherently less generalizable than their larger counterparts. They might struggle significantly outside their training domain or when faced with tasks requiring broad contextual understanding or multimodal interpretation. While a specific "o1 mini" might be excellent at text summarization, it won't be able to generate images from text, understand spoken commands, or debug code. They lack the multimodal breadth of models like GPT-4o, making them unsuitable for complex, open-ended tasks that require switching between different forms of data input and output. Their intelligence is deep but not wide.

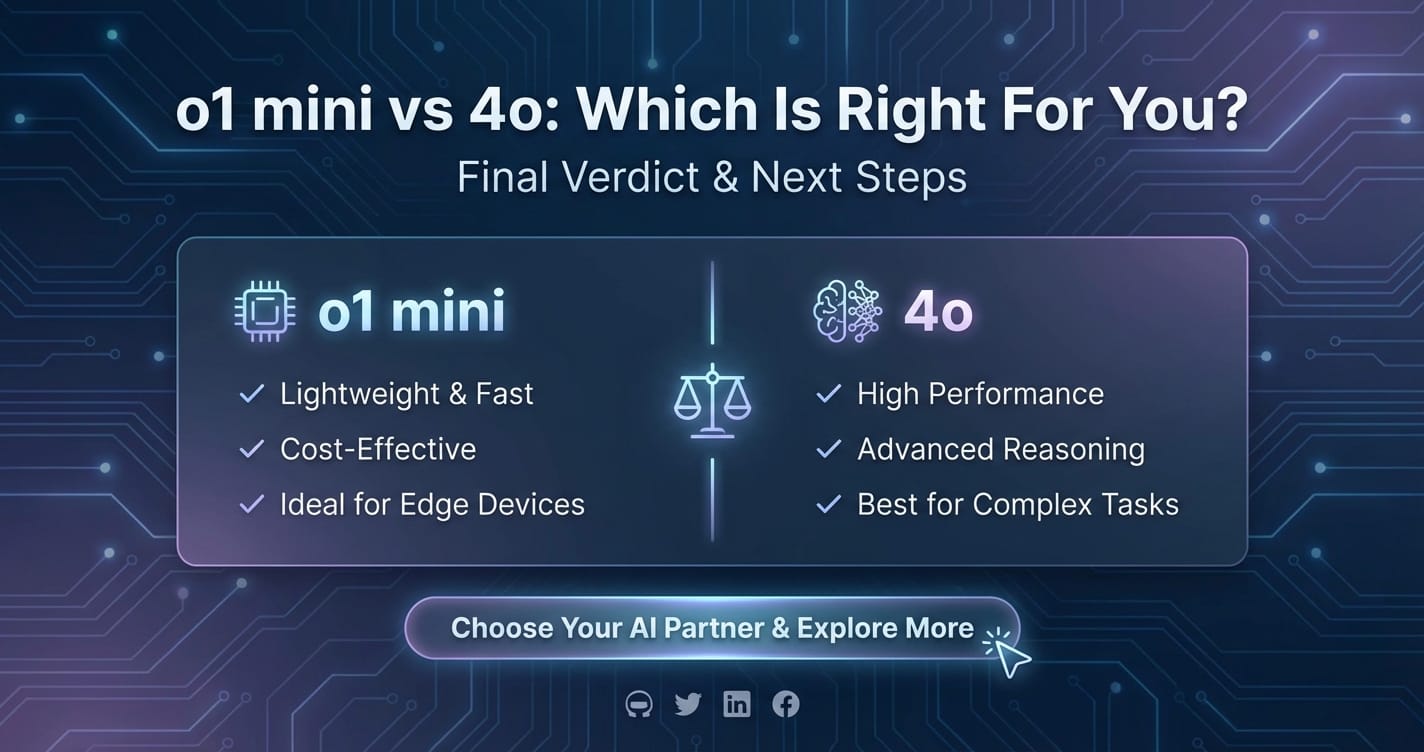

A Head-to-Head Comparison: o1 mini vs 4o

Choosing between GPT-4o and an "o1 mini"-type model is not about identifying a universally "better" solution, but rather about aligning the model's capabilities with specific project requirements. Each paradigm excels in different arenas, offering distinct advantages in performance, resource consumption, and flexibility. This comparative analysis will highlight these critical distinctions.

Performance Metrics: Speed, Accuracy, and Latency

When evaluating AI models, performance is often broken down into several key metrics:

- Speed (Throughput): How many queries or tasks can the model process per unit of time?

- Accuracy: How correct or relevant are the model's outputs for a given task?

- Latency: How quickly does the model provide a response to a single query?

GPT-4o, with its multimodal architecture, boasts impressive speed, especially in real-time conversational contexts where it integrates audio, vision, and text simultaneously. Its accuracy across a vast range of general tasks is exceptionally high, making it a reliable choice for complex reasoning and content generation. However, for extremely high-volume, repetitive text-based tasks or inference on edge devices, the "o1 mini" philosophy prioritizes sheer speed and minimal latency above all else for its specific domain. A lightweight "o1 mini" model designed for simple text classification could classify thousands of inputs per second at millisecond-scale latency on appropriate hardware, potentially outperforming GPT-4o in pure throughput for that particular, narrow task.
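The latency and throughput metrics defined above are straightforward to measure for any model you can call from code. The sketch below times a callable over a batch of inputs; `benchmark` and `toy_classifier` are hypothetical names, and the keyword rule is only a stand-in for a real model so that the example runs offline.

```python
import statistics
import time
from typing import Callable, Dict, Sequence


def benchmark(model_fn: Callable[[str], object], inputs: Sequence[str]) -> Dict[str, float]:
    """Time each call and report per-request latency and overall throughput."""
    latencies = []
    start = time.perf_counter()
    for text in inputs:
        t0 = time.perf_counter()
        model_fn(text)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start
    return {
        "mean_latency_ms": 1000 * statistics.mean(latencies),
        "max_latency_ms": 1000 * max(latencies),
        "throughput_per_s": len(inputs) / elapsed,
    }


def toy_classifier(text: str) -> str:
    """Stand-in for a real model: a trivial keyword rule."""
    return "positive" if "good" in text.lower() else "negative"


stats = benchmark(toy_classifier, ["Good service", "Too slow"] * 500)
print(stats)
```

Running the same harness against each candidate model, with inputs drawn from your own workload, gives numbers that are directly comparable, which published averages rarely are.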

Here’s a comparative table illustrating hypothetical performance attributes:

| Feature/Metric | GPT-4o | "o1 mini" (Representative) |
|---|---|---|
| Primary Focus | General intelligence, multimodal fluency | Specialized efficiency, speed, low cost |
| Latency (Typical) | Sub-second for complex text queries; 232-320 ms for audio | Milliseconds for specific tasks (e.g., text classification) |
| Throughput | High for varied complex tasks | Extremely high for targeted, repetitive tasks |
| Accuracy | Excellent across broad general knowledge & tasks | Excellent within its specialized domain, can exceed general models |
| Multimodality | Native (text, audio, vision) | Typically single-modal or very limited multimodal (e.g., text+simple image) |
| Reasoning Depth | Advanced, complex, abstract reasoning | Sufficient for specialized task, limited general reasoning |
| Context Window | Very large (e.g., 128K tokens) | Smaller, optimized for task-relevant context |

Multimodality vs. Specialization

This is arguably the most fundamental differentiating factor.

- GPT-4o's Strength in Diverse Input/Output: GPT-4o's native multimodality is its superpower. It can fluidly switch between understanding spoken words, analyzing images, and generating detailed textual responses or even synthesizing speech. This makes it indispensable for applications requiring a holistic understanding of human communication and context, where the inputs and outputs are dynamic and varied. It doesn't just process text; it experiences the world through different sensory data, allowing for richer interactions and more nuanced understanding.

- "o1 mini"'s Strength in Targeted, Efficient Processing: Conversely, an "o1 mini" model typically operates within a single modality (e.g., text-only, or vision-only for a specific task) or with very limited cross-modal capabilities. Its strength lies in performing that specific task with unparalleled efficiency. It's like a highly trained specialist surgeon compared to a general practitioner. While the general practitioner (GPT-4o) can diagnose a wide range of ailments, the specialist (o1 mini) can perform a specific operation with greater precision and speed because their focus is so narrow. For instance, a dedicated "o1 mini" image classifier for identifying specific medical conditions might offer faster inference and better accuracy than GPT-4o when solely performing that classification, as it wouldn't be burdened with understanding general visual scenes or generating descriptive text.

Resource Consumption and Cost Implications

The operational cost of an AI model is a critical factor for long-term sustainability and scalability, especially for businesses.

- Computational Footprint: Large language models like GPT-4o require significant computational resources (GPUs, memory) for both training and inference. While OpenAI works to optimize this, the inherent complexity of a multimodal, general-purpose model means it will always consume more power and memory than a highly distilled, specialized model.

- API Costs: This directly translates to higher per-token or per-call API costs for GPT-4o. When running millions of inferences daily, these costs can quickly accumulate, becoming a significant line item in an operational budget.

- "o1 mini"'s Cost-Effectiveness: "o1 mini" models, by their very design, are geared towards cost-effectiveness. Their smaller parameter counts and optimized architectures lead to drastically lower inference costs. They can often run on less powerful hardware, or even on CPUs/edge devices, further reducing infrastructure expenses. For high-volume, repetitive tasks, the cumulative cost savings of using an "o1 mini" can be immense, making AI integration feasible for businesses with tight budgets.
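A quick back-of-the-envelope calculation shows how per-token pricing compounds at volume. The prices below are hypothetical placeholders, not quotes from any provider, and `monthly_cost` is an illustrative helper; substitute the current rates for the models you are comparing.

```python
def monthly_cost(
    requests_per_day: int,
    tokens_per_request: int,
    price_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from request volume and a per-token price."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * price_per_million_tokens


# Hypothetical prices in USD per million tokens (assumptions, not real quotes).
LARGE_MODEL_PRICE = 10.00
COMPACT_MODEL_PRICE = 0.50

large = monthly_cost(1_000_000, 500, LARGE_MODEL_PRICE)
compact = monthly_cost(1_000_000, 500, COMPACT_MODEL_PRICE)
print(f"large: ${large:,.0f}/month, compact: ${compact:,.0f}/month")
```

Under these assumed prices the gap is a factor of twenty, so the ratio of the two prices, multiplied by your volume, is usually the first number worth computing.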

Here's another table comparing their cost-efficiency:

| Feature/Metric | GPT-4o | "o1 mini" (Representative) |
|---|---|---|
| API Cost/Token | Higher, reflecting advanced capabilities | Significantly lower, optimized for volume |
| Inference Cost | Moderate to high, depending on task complexity | Very low, ideal for high-frequency operations |
| Energy Consumption | Higher per inference | Lower per inference, environmentally friendly for specific tasks |
| Hardware Requirements | Powerful GPUs (cloud-based inference often) | Can run on CPUs, edge devices, or lighter GPUs |
| Deployment Flexibility | Primarily cloud-based for full capabilities | Cloud, on-premises, edge, mobile |
| Total Cost of Ownership (TCO) | Higher for large-scale or continuous usage | Much lower for high-volume, specialized tasks |

Ease of Integration and Developer Experience

Both types of models aim for developer-friendliness, but their integration paths can differ.

- GPT-4o: Typically accessed via well-documented APIs (like OpenAI's), offering straightforward integration into existing applications. The complexity lies more in effectively leveraging its multimodal capabilities and managing the potential for vast, nuanced responses. Frameworks and SDKs are readily available.

- "o1 mini": Integration can vary. Some "o1 mini" models might come as pre-trained, easily deployable libraries or containerized solutions. Others might require more specific tooling or fine-tuning to perfectly align with a unique use case. However, once integrated, their smaller size often means quicker loading times and less resource overhead within an application. The developer experience is often focused on embedding these compact models efficiently.

It's also worth noting that the burgeoning ecosystem of AI development platforms is significantly simplifying the integration of both types of models. Platforms that abstract away the complexities of different APIs and model providers are becoming invaluable, allowing developers to switch between models or combine them with minimal effort. This is where unified API platforms play a crucial role, allowing developers to experiment and choose the right model for the right job without getting bogged down in integration headaches.

Scalability and Deployment Scenarios

- Cloud-Based Dominance for GPT-4o: Given its computational demands and massive scale, GPT-4o is predominantly a cloud-based model. Its full capabilities, especially real-time multimodal processing, require robust server infrastructure and are reached through OpenAI's API or Microsoft's Azure OpenAI Service rather than self-hosted. Scaling GPT-4o applications therefore involves managing API quotas and rate limits and optimizing the requests you send to that infrastructure.

- Versatile Deployment for "o1 mini": "o1 mini" models offer far greater flexibility in deployment. They can be deployed in the cloud for high throughput, on-premises for data privacy and control, or critically, directly on edge devices. This capability to run locally without constant internet connectivity makes them ideal for offline applications, IoT devices, and scenarios where immediate, private processing is required. Scaling "o1 mini" deployments can involve distributing instances across many edge devices or optimizing cloud instances for parallel processing of specialized tasks.
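Managing API quotas in practice usually means retrying rate-limited requests with increasing delays. The sketch below shows exponential backoff with jitter; `RateLimited` is a hypothetical stand-in for whatever rate-limit exception your client library raises, and the retry counts and delays are arbitrary starting points.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Hypothetical stand-in for a provider's rate-limit error."""


def call_with_backoff(
    request: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `request` on RateLimited, doubling the wait each time plus jitter."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * (2 ** attempt) + random.uniform(0, 0.1))
    raise RuntimeError("max_attempts must be at least 1")


# Demo: a fake request that is rate-limited twice and then succeeds.
attempts = {"count": 0}


def flaky_request() -> str:
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimited()
    return "ok"


waits = []  # record the delays instead of actually sleeping
result = call_with_backoff(flaky_request, sleep=waits.append)
print(result, len(waits))
```

Injecting `sleep` as a parameter keeps the helper testable without real waiting; in production the default `time.sleep` applies.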

XRoute.AI is a unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers (including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more), enabling seamless development of AI-driven applications, chatbots, and automated workflows.

Use Cases and Ideal Scenarios: Who Benefits Most?

Understanding the technical distinctions is only one part of the equation; translating these into practical application scenarios is where the true value lies. The choice between a sophisticated, general-purpose model like GPT-4o and a specialized, efficient "o1 mini" hinges entirely on the specific problem you are trying to solve and the constraints you operate under.

When GPT-4o Shines: Unlocking New Frontiers of Interaction

GPT-4o is the unequivocal choice when your application demands broad intelligence, nuanced understanding, creative generation, and especially seamless multimodal interaction.

- Complex Creative Tasks: For generating intricate stories, composing sophisticated music (with multimodal cues), designing novel marketing copy based on visual inspiration, or developing game narratives, GPT-4o’s expansive knowledge and creative flexibility are unmatched. Artists, writers, and designers can leverage its capabilities for brainstorming and content creation that transcends simple text.

- Advanced Customer Service Bots (Multimodal): Imagine a customer support bot that can not only understand a customer’s frustrated tone of voice but also analyze a screenshot of their error message, identify the problem, and guide them verbally through a solution. This level of empathetic and context-rich interaction is precisely where GPT-4o excels, moving beyond rudimentary chatbots to truly intelligent virtual assistants.

- Research and Development: In scientific research, GPT-4o can assist in interpreting complex experimental data presented in graphs and tables, summarize vast quantities of academic papers, or even help formulate hypotheses by drawing connections across disparate fields of knowledge. Its ability to process and synthesize information from various modalities can accelerate discovery.

- Interactive Education and Personalized Tutoring: An AI tutor powered by GPT-4o could understand a student’s verbal explanation of a concept, see their handwritten notes or diagrams, and then provide tailored, interactive explanations, ask probing questions, and adapt its teaching style in real time. This dynamic, responsive learning environment is a game-changer for education.

- Sophisticated Data Analysis and Interpretation: While "o1 mini" might classify data, GPT-4o can analyze and interpret complex datasets, identify trends, explain anomalies, and even generate natural language reports from raw numbers and visualizations. Its reasoning capabilities can uncover deeper insights.

- Prototyping and Exploration: When you're unsure of the exact AI capabilities needed, or you require a versatile model for rapid prototyping of diverse AI features, GPT-4o provides a robust foundation. Its breadth allows for quick iteration and testing of various AI-driven ideas.

When "o1 mini" is the Go-To Choice: Efficiency at Scale

The "o1 mini" philosophy becomes the preferred solution when efficiency, speed, cost, and specialization are the most critical factors. These models are built for purpose, not for generality, delivering focused intelligence where it matters most.

- High-Frequency Transactional AI: For applications requiring millions of daily inferences for simple, repetitive tasks such as classifying emails as spam/not-spam, moderating user comments for objectionable content, or tagging product reviews with sentiment, "o1 mini" models offer unparalleled cost-effectiveness and speed.

- Embedded AI and IoT Devices: Deploying AI directly onto hardware like smart cameras for local object detection, smart appliances for voice control, or industrial sensors for real-time anomaly detection is a perfect fit for "o1 mini" models. Their low power consumption and ability to operate offline are key advantages.

- Real-Time Monitoring and Alerting: In cybersecurity, financial fraud detection, or infrastructure monitoring, the need for immediate analysis and alerting is paramount. "o1 mini" models can process streams of data (e.g., network traffic, sensor readings, transaction logs) with minimal latency to identify threats or critical events as they happen.

- Cost-Optimized Batch Processing: For large-scale data processing tasks that don't require multimodal understanding or complex reasoning – such as categorizing vast libraries of documents, performing bulk sentiment analysis on customer feedback, or translating standard texts – "o1 mini" models can complete these tasks significantly faster and at a much lower cost than larger, more complex models.

- Basic Chatbots and Virtual Assistants (Text-Only): For simple FAQ bots, lead qualification tools, or customer support that primarily relies on text-based interactions for predefined queries, an "o1 mini" language model can provide quick, accurate responses without the overhead of a multimodal giant.

- Mobile Applications with On-Device AI: Integrating AI features directly into mobile apps, such as local language translation, personalized recommendations based on on-device behavior, or image recognition for accessibility features, where cloud connectivity might be intermittent or privacy is a concern.
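The selection criteria from the two lists above can be condensed into a small decision helper. This is only one possible encoding of the article's guidance: the `TaskProfile` fields, the rule order, and the `"gpt-4o"` and `"compact"` labels are assumptions chosen for illustration, and real projects will weigh more factors.

```python
from dataclasses import dataclass


@dataclass
class TaskProfile:
    """Coarse description of a workload; all fields are illustrative."""
    needs_multimodal: bool            # audio or image input/output required
    needs_open_ended_reasoning: bool  # creative or complex analytical work
    must_run_on_device: bool          # offline, edge, or strict data locality
    requirements_unclear: bool        # still prototyping and exploring


def choose_model(task: TaskProfile) -> str:
    """Return "gpt-4o" or "compact" following the heuristics in the text."""
    if task.must_run_on_device:
        # A hard deployment constraint that a cloud-only model cannot meet.
        return "compact"
    if (
        task.needs_multimodal
        or task.needs_open_ended_reasoning
        or task.requirements_unclear
    ):
        return "gpt-4o"
    # Narrow, well-defined, text-only work: favour cost and speed.
    return "compact"


spam_filter = TaskProfile(False, False, False, False)
voice_support_bot = TaskProfile(True, True, False, False)
print(choose_model(spam_filter), choose_model(voice_support_bot))
```

Treating on-device deployment as the first check reflects that it is a hard constraint, whereas the other criteria are trade-offs.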

The "GPT-4o Mini" Factor: Bridging the Gap?

The idea of a "GPT-4o Mini" points to an intriguing and highly probable development: a more compact, efficient version of GPT-4o. This concept highlights a growing trend where even leading AI developers aim to distill the power of their flagship models into more accessible and resource-friendly packages.

A hypothetical "GPT-4o Mini" would likely aim to strike a balance between the broad multimodal capabilities of the full GPT-4o and the efficiency and cost-effectiveness of "o1 mini"-type models. It wouldn't necessarily be as specialized or as small as a dedicated "o1 mini" model, but it would be significantly more efficient and potentially more affordable than the full GPT-4o.

How would it position itself?

- Compromise on Scale, Retain Key Features: It might have a smaller parameter count, potentially a slightly reduced context window, or perhaps a more focused set of multimodal capabilities (e.g., excellent text and audio, but less sophisticated vision than the full 4o). The goal would be to retain the most impactful features of GPT-4o – like natural voice interaction and strong text generation – while shedding some of the computational overhead.

- Targeting Mid-Range Applications: "GPT-4o Mini" would likely target applications that require more general intelligence and multimodal interaction than an "o1 mini" can offer, but where the full power and cost of GPT-4o are overkill. This could include moderately complex customer service, more advanced mobile AI assistants, or interactive educational tools that need responsiveness without the full complexity of high-end research.

- Expanding Accessibility: By offering a more affordable and efficient version, OpenAI could make advanced multimodal AI accessible to a wider range of developers and businesses, democratizing access to cutting-edge technology.

- Direct Competition with "o1 mini" and Other Compact Models: If released, a "GPT-4o Mini" would directly compete with models embodying the "o1 mini" philosophy, especially for tasks that require a slightly broader scope of intelligence but still prioritize efficiency. The decision then becomes: pure specialization and ultimate efficiency ("o1 mini") versus a slightly broader, more generalized capability with good efficiency ("GPT-4o Mini").

The potential arrival of "GPT-4o Mini" underscores the dynamic nature of the AI market, where innovation isn't just about building bigger models, but also about making powerful AI more practical, affordable, and widely deployable.

Navigating the Ecosystem: The Role of Unified API Platforms

The proliferation of AI models, from foundational giants like GPT-4o to specialized "o1 mini" counterparts and potential future offerings like "GPT-4o Mini," creates both immense opportunity and significant challenges. For developers, integrating these diverse models into applications can be a complex, time-consuming, and resource-intensive endeavor. Each model often comes with its own API, authentication methods, rate limits, and data formats, leading to integration headaches and vendor lock-in concerns. This is where unified API platforms become indispensable.

The challenge of integrating diverse AI models is multi-faceted:

- API Proliferation: Every new model or provider means learning a new API, writing new integration code, and maintaining separate connections.

- Vendor Lock-in: Relying on a single provider’s API can limit flexibility and make switching models difficult if better options emerge or pricing changes.

- Performance Optimization: Manually managing latency, throughput, and cost optimization across multiple models from different providers is a daunting task.

- Experimentation Overhead: Trying out different models to find the best fit for a specific task becomes a heavy engineering effort rather than a quick test.

This is precisely the problem that XRoute.AI is designed to solve. XRoute.AI is a cutting-edge unified API platform that streamlines access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI dramatically simplifies the integration process, allowing users to leverage a vast array of AI capabilities without the typical complexities.

How XRoute.AI Empowers Developers and Businesses:

- Single, OpenAI-Compatible Endpoint: This is the game-changer. Developers familiar with OpenAI's API can easily connect to XRoute.AI and gain access to over 60 AI models from more than 20 active providers. This uniformity eliminates the need to learn and integrate dozens of different APIs, drastically accelerating development cycles.

- Unrivaled Model Access: Whether you need the general intelligence of a GPT-4o, the focused efficiency of an "o1 mini"-type model for a specific task, or want to explore other cutting-edge models (including potential "GPT-4o Mini" offerings as they emerge), XRoute.AI acts as your central gateway. It allows seamless development of AI-driven applications, chatbots, and automated workflows.

- Low Latency AI: XRoute.AI prioritizes performance, ensuring that your applications benefit from low latency AI across its diverse model offerings. This is crucial for real-time interactions and highly responsive user experiences, regardless of which underlying model you choose.

- Cost-Effective AI: The platform is engineered to facilitate cost-effective AI. By abstracting away provider-specific pricing and offering optimized routing, XRoute.AI helps users get the best performance for their budget. It supports flexible pricing models and lets users switch between providers to find the most economical option for any given task.

- High Throughput and Scalability: Built for enterprise-level demands, XRoute.AI ensures high throughput and scalability, enabling your applications to handle large volumes of requests without performance degradation. This is vital for growing businesses and applications with fluctuating user loads.

- Developer-Friendly Tools: With a focus on simplifying the developer experience, XRoute.AI provides the tools and infrastructure needed to build intelligent solutions efficiently, without the complexity of managing multiple API connections. This frees up engineering teams to focus on core product innovation rather than integration plumbing.

- Experimentation and Future-Proofing: In a rapidly evolving landscape, XRoute.AI allows for easy experimentation with new models and seamless switching between providers. This future-proofs your applications, ensuring you can always adapt to the latest advancements and optimize for performance and cost as the market shifts. If a "GPT-4o Mini" emerges, XRoute.AI would likely be among the first to integrate it, offering it alongside other efficient models, allowing users to compare its performance against existing "o1 mini" options with minimal effort.

In essence, XRoute.AI transforms the complex task of AI model integration into a streamlined, efficient process. It empowers developers and businesses to focus on building innovative applications rather than grappling with the intricacies of a fragmented AI ecosystem, making the decision between models like GPT-4o and "o1 mini" a strategic choice rather than an engineering nightmare.
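Because every model sits behind the same OpenAI-style request shape, trying several models on one prompt comes down to changing a single string. The sketch below illustrates that idea under stated assumptions: `compare_models`, the `send` callable, and the model name "small-model" are hypothetical, and `send` stands in for whatever HTTP client actually posts the payload, so the example runs offline.

```python
import time
from typing import Callable, Dict, List


def compare_models(
    prompt: str,
    models: List[str],
    send: Callable[[dict], str],
) -> Dict[str, dict]:
    """Send the same prompt to each model and record the reply and latency.

    `send` is a hypothetical callable that posts an OpenAI-style chat payload
    and returns the reply text. It is injected so the sketch needs no network.
    """
    results = {}
    for model in models:
        payload = {
            "model": model,  # the only field that changes between runs
            "messages": [{"role": "user", "content": prompt}],
        }
        start = time.perf_counter()
        reply = send(payload)
        elapsed = time.perf_counter() - start
        results[model] = {"reply": reply, "seconds": elapsed}
    return results


def fake_send(payload: dict) -> str:
    """Stand-in for a real HTTP call: reports which model was asked."""
    return f"[{payload['model']}] ok"


# Usage: swap fake_send for a real client to compare actual models.
report = compare_models("Summarize this ticket.", ["gpt-4o", "small-model"], fake_send)
for name, row in report.items():
    print(name, row["reply"])
```

Keeping the transport behind a callable is a deliberate choice here: the comparison logic stays the same whether the payload goes to one provider or to a unified endpoint.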

Future Outlook: The Evolving Landscape of AI Models

The current dynamic between powerful, general-purpose models like GPT-4o and efficient, specialized models exemplified by "o1 mini" is not a temporary phase but rather a foundational trend shaping the future of artificial intelligence. This dual evolution is critical for the widespread, sustainable, and responsible deployment of AI across all sectors.

Continued Specialization vs. Generalization

The tension and synergy between specialization and generalization will continue to drive innovation:

- Deepening Specialization: We will likely see an explosion of even more specialized "o1 mini"-type models. These might be ultra-compact, hyper-optimized for specific industries (e.g., legal, medical diagnostics, manufacturing control) or particular modalities (e.g., specific dialects, obscure image types). The push for "edge AI" and on-device intelligence will fuel this growth, as will the need for extremely low-cost, high-volume automation.

- Advancing Generalization: Models like GPT-4o will continue to push the boundaries of multimodal understanding, reasoning, and creativity. Future iterations might incorporate more sensory inputs (e.g., touch, smell), develop even more nuanced emotional intelligence, or achieve higher levels of common sense reasoning. The pursuit of Artificial General Intelligence (AGI) will remain a guiding star for these efforts.

- Hybrid Approaches: The most impactful advancements might come from hybrid architectures that intelligently combine both approaches. Imagine a primary application powered by a general-purpose model, offloading highly repetitive or latency-critical sub-tasks to specialized "o1 mini" components. This modularity could offer the best of both worlds: broad intelligence where needed, and razor-sharp efficiency for specific functions.

Emphasis on Efficiency and Sustainable AI

As AI models grow in complexity and usage, the environmental and economic costs become increasingly salient. The "o1 mini" philosophy directly addresses this by prioritizing efficiency:

- Green AI: The demand for "Green AI" – models that consume less energy during training and inference – will intensify. This means continued research into more efficient neural architectures, sparse models, hardware-aware optimization, and techniques like quantization and pruning. Because they need less compute per request, "o1 mini"-type models tend to use less energy per inference.

- Cost Democratization: Reducing the cost of AI inference is crucial for democratizing access to this technology. Efficient models allow smaller businesses, startups, and developers in emerging markets to leverage AI without prohibitive expenses.

- Regulatory Pressures: As AI becomes more regulated, there will be increasing scrutiny on its resource consumption and environmental impact, further accelerating the drive for efficiency.
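Quantization, one of the techniques named above, is easy to illustrate at toy scale. The following is a minimal sketch of symmetric 8-bit quantization over a plain list of weights. It shows the general idea only and is not how any model mentioned in this article is built; the function names are made up for the example.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero list, where any scale works.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.01, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding to the nearest integer step bounds the error by half a step.
worst_error = max(abs(a - b) for a, b in zip(weights, restored))
print("worst-case error:", worst_error, "step size:", scale)
```

Storing each weight in one byte instead of the four bytes of a 32-bit float is where the memory saving comes from; the price is the small rounding error the sketch measures.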

The Rise of Adaptive and Personalized AI

Future AI systems will likely be more adaptive and personalized. Imagine models that can dynamically load smaller, specialized modules ("o1 mini" components) based on the user's specific query or context, only engaging larger models like GPT-4o for truly complex, open-ended tasks. This dynamic routing, facilitated by platforms like XRoute.AI, will allow for highly optimized resource utilization and bespoke AI experiences.
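The dynamic routing idea can be sketched as a small dispatcher that sends short, text-only requests to a compact model and escalates everything else. The thresholds, the `Request` fields, and the name "small-specialized-model" below are illustrative assumptions, not documented behavior of XRoute.AI or of any specific model.

```python
from dataclasses import dataclass

SMALL_MODEL = "small-specialized-model"  # hypothetical model name
LARGE_MODEL = "gpt-4o"


@dataclass
class Request:
    text: str
    has_image: bool = False
    has_audio: bool = False
    needs_reasoning: bool = False  # set by the caller or an upstream classifier


def choose_model(req: Request, max_small_words: int = 200) -> str:
    """Pick the cheapest model that plausibly fits the request."""
    # Multimodal input goes straight to the general-purpose model.
    if req.has_image or req.has_audio:
        return LARGE_MODEL
    # Open-ended reasoning is also escalated.
    if req.needs_reasoning:
        return LARGE_MODEL
    # Long inputs are treated as complex; the word limit is an arbitrary cutoff.
    if len(req.text.split()) > max_small_words:
        return LARGE_MODEL
    return SMALL_MODEL


print(choose_model(Request("Classify this review as positive or negative.")))
print(choose_model(Request("Describe what is in this photo.", has_image=True)))
```

A production router would more likely rely on a learned classifier or on observed quality metrics than on fixed rules, but the control flow would look much the same.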

The emergence of a "GPT-4o Mini" would be a clear indicator of this trend, showing that even the leading general-purpose AI labs recognize the importance of distilling their power into more practical, efficient forms for broader adoption. This isn't just about scaling up capabilities, but also scaling down to meet diverse needs effectively.

Conclusion

The choice between a powerful, all-encompassing AI model like GPT-4o and a highly efficient, specialized model embodying the "o1 mini" philosophy is a strategic one, deeply intertwined with the specific goals and constraints of your project. GPT-4o stands as a beacon of multimodal general intelligence, ideal for complex, creative, and interactive applications that demand nuanced understanding across text, audio, and vision. Its ability to engage in real-time, human-like conversations and interpret diverse data streams opens up unprecedented possibilities for innovation.

Conversely, "o1 mini"-type models are the champions of efficiency, speed, and cost-effectiveness. They excel in high-volume, low-latency, and resource-constrained environments, delivering precise intelligence for specialized tasks with minimal overhead. Whether it's for edge computing, rapid classification, or economical batch processing, their focused expertise makes them invaluable workhorses in the AI ecosystem. The potential future arrival of a "GPT-4o Mini" further blurs these lines, aiming to bridge the gap by offering a more efficient version of a powerful general model.

Ultimately, there is no single "best" model. The optimal choice depends on a careful assessment of your application's requirements:

- Complexity and Scope: Do you need broad, multimodal intelligence for open-ended problems (GPT-4o), or targeted, efficient intelligence for specific tasks ("o1 mini")?

- Performance vs. Cost: Are you prioritizing cutting-edge capabilities regardless of cost, or seeking maximum efficiency and affordability for scaled operations?

- Deployment Environment: Will your AI operate in the cloud, on-premises, or on resource-limited edge devices?

- Latency Requirements: Is instantaneous response critical for your user experience?
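For the performance-versus-cost question, a back-of-the-envelope estimate is often enough to narrow the choice. The sketch below assumes simple per-token pricing. The prices are placeholders chosen for easy arithmetic, not real rates for GPT-4o or any other model, and `monthly_cost` is a hypothetical helper.

```python
def monthly_cost(
    requests_per_day: int,
    tokens_per_request: int,
    price_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from traffic volume and a per-token price."""
    total_tokens = requests_per_day * tokens_per_request * days
    return total_tokens / 1_000_000 * price_per_million_tokens


# Placeholder prices in dollars per million tokens (assumptions, not quotes).
LARGE_MODEL_PRICE = 5.0
SMALL_MODEL_PRICE = 0.5

# 10,000 requests/day * 500 tokens * 30 days = 150 million tokens per month.
large = monthly_cost(10_000, 500, LARGE_MODEL_PRICE)
small = monthly_cost(10_000, 500, SMALL_MODEL_PRICE)
print(f"large model: ${large:.2f}/month, small model: ${small:.2f}/month")
```

With these placeholder numbers the same traffic costs ten times more on the larger model, which is the kind of gap that makes routing simple tasks to a compact model worthwhile at scale.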

Navigating this increasingly diverse AI landscape is made significantly easier with unified API platforms like XRoute.AI. By simplifying access to a multitude of models from various providers through a single, OpenAI-compatible endpoint, XRoute.AI empowers developers to experiment, integrate, and switch between models seamlessly. This flexibility ensures that you can always leverage the most suitable AI solution – be it a powerful general model, a highly efficient specialized model, or a future "mini" iteration – optimizing for performance, cost, and innovation without integration complexities.

Both GPT-4o and models embodying the "o1 mini" philosophy contribute significantly to the broader AI ecosystem, driving progress from different angles. Understanding their unique strengths and strategic applications is key to building the next generation of intelligent, impactful solutions. The future of AI is not a monolithic entity but a rich tapestry woven from diverse, powerful, and increasingly accessible models.

Frequently Asked Questions (FAQ)

Q1: What are the primary differences between o1 mini and GPT-4o?

A1: The primary differences lie in their scope and design philosophy. GPT-4o is a large, general-purpose, natively multimodal AI model capable of understanding and generating text, audio, and vision in real-time. It excels at complex reasoning, creative tasks, and nuanced human-computer interaction. In contrast, "o1 mini" represents a class of highly specialized, compact, and efficient AI models designed for specific tasks (e.g., text classification, basic summarization, specific image recognition). "o1 mini" models prioritize speed, low latency, and cost-effectiveness, often at the expense of broad generalization or multimodal capabilities.

Q2: Is "o1 mini" a real product or a conceptual model?

A2: In this article, "o1 mini" is used as a conceptual placeholder to represent the growing trend and philosophy behind compact, highly optimized, and specialized AI models. OpenAI does offer a reasoning model named o1-mini, but the term here is not tied to that product; it stands for the characteristics of many efficient models from various developers that are designed for niche applications where resources are constrained or specific performance metrics (like latency) are paramount.

Q3: When should I choose GPT-4o over an "o1 mini"-type model?

A3: You should choose GPT-4o when your application requires:

1. Multimodal understanding: Seamlessly processing and generating across text, audio, and visual inputs.

2. Complex reasoning and problem-solving: Tasks that demand deep understanding, abstract thought, and logical inference.

3. Creative content generation: Crafting unique stories, code, or other original content.

4. Highly interactive experiences: Real-time conversational AI with natural language and emotional intelligence.

5. Broad general knowledge: When the AI needs to handle a wide variety of topics without prior specialization.

Its strengths lie in versatility and cutting-edge performance for diverse, open-ended tasks.

Q4: How does a platform like XRoute.AI help in this decision-making process?

A4: XRoute.AI simplifies the process of choosing and integrating AI models by providing a unified API platform. It offers a single, OpenAI-compatible endpoint to access over 60 AI models from more than 20 providers. This allows developers to easily experiment with different models (including powerful general models like GPT-4o and efficient specialized models that fit the "o1 mini" profile) without the hassle of integrating multiple APIs. XRoute.AI also optimizes for low latency, cost-effectiveness, and high throughput, enabling users to seamlessly switch between models to find the best fit for their specific application's performance and budget requirements.

Q5: Will there be a "GPT-4o Mini" and how would it compare?

A5: While OpenAI has not officially announced a "GPT-4o Mini," the trend in AI development suggests that more compact, efficient versions of flagship models are likely. A hypothetical "GPT-4o Mini" would likely aim to bridge the gap between the full GPT-4o's multimodal power and the efficiency of "o1 mini"-type models. It would probably offer a subset of GPT-4o's capabilities (e.g., strong text and audio, perhaps less advanced vision) with a smaller parameter count, leading to lower latency and reduced costs. It would be less specialized than a true "o1 mini" but more efficient than the full GPT-4o, ideal for applications requiring a balance of general intelligence and resource optimization.

🚀 You can connect to the large language models available through XRoute.AI in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.

2. Upon registration, explore the platform.

3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample request that calls an LLM; substitute your XRoute API KEY for $apikey:

curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
--header "Authorization: Bearer $apikey" \
--header 'Content-Type: application/json' \
--data '{
  "model": "gpt-5",
  "messages": [
    {
      "content": "Your text prompt here",
      "role": "user"
    }
  ]
}'

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.
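For applications written in Python, the same request can be made with the standard library alone. This is a minimal sketch that mirrors the curl sample above: the URL, model name, and payload come from that sample, while the `XROUTE_API_KEY` environment variable name and the helper functions are assumptions made for illustration. The response is printed as returned, since its exact shape is not described here.

```python
import json
import os
import urllib.request

# Endpoint taken from the curl sample.
API_URL = "https://api.xroute.ai/openai/v1/chat/completions"


def build_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build the POST request without sending it."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and parse the JSON response body."""
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


# XROUTE_API_KEY is a hypothetical variable name; nothing is sent without it.
key = os.environ.get("XROUTE_API_KEY")
if key:
    print(send(build_request(key, "gpt-5", "Your text prompt here")))
else:
    print("Set XROUTE_API_KEY to send the request.")
```

Reading the key from the environment keeps it out of source control, and separating `build_request` from `send` makes the payload easy to inspect or test before any network call is made.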

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.