O1 Mini vs O1 Preview: Which Is Right For You?

The artificial intelligence landscape is evolving at a breakneck pace, with new models and capabilities emerging seemingly every week. For developers, businesses, and researchers, navigating this complex ecosystem can be a significant challenge, especially when faced with choices between powerful yet specialized tools. Two recent contenders generating considerable buzz are the hypothetical O1 Mini and O1 Preview models. While both promise to push the boundaries of what's possible with AI, they are designed with distinct philosophies and target different use cases. Understanding the nuanced differences between O1 Mini vs O1 Preview is crucial for making an informed decision that aligns with your project's specific needs and strategic objectives.

This comprehensive AI model comparison aims to dissect O1 Mini and O1 Preview, examining their core strengths, ideal applications, performance characteristics, and underlying design principles. We will delve into their architectural differences, explore their potential impact on various industries, and provide a framework to help you determine which model is the optimal choice for your next groundbreaking AI initiative. From cost-effectiveness and inference speed to advanced reasoning capabilities and multimodal understanding, every aspect will be scrutinized to ensure you have a clear picture of their respective advantages.

The Evolving Landscape of AI Models: A Context for Choice

Before we dive into the specifics of O1 Mini and O1 Preview, it's essential to appreciate the broader context in which these models operate. The past few years have witnessed an explosion in large language models (LLMs) and other generative AI architectures. From general-purpose giants like GPT-3.5 and GPT-4 to specialized models for code generation, image synthesis, and scientific discovery, the diversity is immense. This proliferation is driven by several factors: advancements in deep learning algorithms, access to vast computational resources, and the ever-growing demand for intelligent automation and creative assistance.

However, this abundance also presents a dilemma. Not every project requires the most powerful, most expensive, or most resource-intensive model. Many applications benefit significantly from leaner, faster, and more cost-effective solutions. This is where the concept of "model families" comes into play, often featuring a spectrum from "mini" or "turbo" versions designed for efficiency to "large" or "preview" versions focused on maximal capability. The recent introduction of models like gpt-4o mini exemplifies this trend, offering a blend of power and efficiency tailored for specific developer needs. Our comparison of O1 Mini vs O1 Preview falls squarely within this critical consideration, addressing the fundamental trade-off between raw capability and practical deployment constraints.

The choice of an AI model is no longer a one-size-fits-all decision. It involves a careful evaluation of numerous factors: the complexity of the task, the required latency, the acceptable cost per inference, the volume of requests, the need for fine-tuning, and the overall developer experience. As we explore O1 Mini and O1 Preview, keep these broader considerations in mind, as they form the bedrock of any successful AI implementation strategy.

Deep Dive into O1 Mini: The Agile Powerhouse

O1 Mini emerges as a compelling answer to the growing demand for efficient, scalable, and cost-effective AI solutions. Conceived with a singular focus on performance optimization without sacrificing essential capabilities, O1 Mini is designed to be the workhorse for high-volume, latency-sensitive applications. Its architecture is meticulously engineered to deliver rapid inference speeds and significantly reduced operational costs, making it an attractive option for businesses operating at scale.

Core Philosophy and Design Goals

The fundamental philosophy behind O1 Mini is to achieve maximum utility with minimal resource expenditure. It's not about being the largest or the most comprehensive model; rather, it's about being the most efficient for a broad spectrum of common AI tasks. The design goals are clear:

- Speed and Low Latency: Prioritize quick response times, crucial for real-time applications like chatbots, recommendation systems, and interactive agents.

- Cost-Effectiveness: Optimize computational requirements to lower the cost per token or per inference, making AI more accessible for high-throughput scenarios.

- Scalability: Enable seamless scaling to handle millions of requests without performance degradation, supporting enterprise-level deployments.

- Robustness for Common Tasks: Excel at general language understanding, text generation, summarization, and classification, which constitute the bulk of AI workloads.

- Developer Friendliness: Offer a straightforward API and minimal configuration overhead for rapid integration and deployment.

Key Features and Capabilities

Despite its "Mini" designation, O1 Mini is far from simplistic. It boasts a robust set of features that empower developers to build sophisticated applications:

- Optimized Language Understanding: O1 Mini demonstrates strong capabilities in comprehending natural language queries, identifying entities, and extracting key information. This makes it suitable for semantic search, intent recognition, and data categorization.

- Efficient Text Generation: While not designed for open-ended creative writing at the highest artistic level, it excels at generating coherent, contextually relevant text for specific purposes such as drafting emails, generating product descriptions, or populating templates.

- Summarization and Paraphrasing: It can effectively condense lengthy documents into concise summaries or rephrase content, proving invaluable for content creation pipelines and information processing.

- Classification and Sentiment Analysis: O1 Mini is highly adept at classifying text into predefined categories and analyzing sentiment, which is critical for customer service automation, market research, and content moderation.

- Multilingual Support (Focused): While not as broad as larger models, O1 Mini offers strong performance in a select set of major global languages, catering to international business needs without the overhead of universal coverage.

Ideal Use Cases

O1 Mini shines in scenarios where efficiency and rapid processing are paramount. Its strengths make it an ideal candidate for:

- Customer Support Chatbots: Providing instant, accurate responses to common customer queries, reducing call volumes and improving satisfaction.

- Content Moderation: Automatically identifying and flagging inappropriate or harmful content across platforms at scale.

- Real-time Data Processing: Analyzing incoming data streams for trends, anomalies, or categorization in applications like financial trading or IoT analytics.

- Personalized Recommendations: Generating quick, relevant recommendations for e-commerce platforms, streaming services, or content feeds.

- Automated Report Generation: Compiling data-driven reports or summaries from structured information, saving significant manual effort.

- Developer Tooling: Assisting with code auto-completion, simple bug identification, or documentation generation within integrated development environments.

Performance Metrics and Benchmarking

In a hypothetical performance assessment, O1 Mini would stand out for its:

- Inference Speed: Consistently low latency, often measured in milliseconds, even under heavy load. This responsiveness is critical for user-facing applications where delays are unacceptable.

- Cost per Inference: Significantly lower computational cost compared to larger models, making it economically viable for applications requiring millions or billions of API calls. This is a game-changer for budget-conscious organizations.

- Throughput: High requests per second (RPS) capability, allowing a single instance or a small cluster to handle substantial traffic.

- Memory Footprint: Smaller model size, leading to reduced memory usage and potentially lower infrastructure costs if self-hosted.

Comparing O1 Mini to existing benchmarks like gpt-4o mini provides a helpful context. While GPT-4o Mini is known for its remarkable balance of speed, cost, and capability, O1 Mini would likely be positioned as an even more optimized solution for a narrower set of tasks, pushing the boundaries of efficiency further. It might achieve even faster inference times or lower costs for specific use cases, potentially by specializing its architecture or training data. For example, if GPT-4o Mini can summarize a document in 100ms at $X cost, O1 Mini might do it in 80ms at $X/2 cost, with a slight trade-off in nuanced understanding for highly complex or ambiguous texts. This focus on hyper-efficiency makes O1 Mini a strong contender for applications where every millisecond and every penny counts.

Deep Dive into O1 Preview: The Frontier Explorer

In stark contrast to O1 Mini's emphasis on efficiency, O1 Preview represents the cutting edge of AI research and development. It is designed for those who need to push the boundaries of what AI can achieve, explore new paradigms, and tackle problems that demand the utmost in intelligence, creativity, and nuanced understanding. O1 Preview isn't just about iteration; it's about innovation, often incorporating experimental features and advanced architectural designs that are still being refined.

Core Philosophy and Design Goals

O1 Preview's existence is driven by the ambition to unlock next-generation AI capabilities. Its philosophy centers on exploration, pushing the limits of current technology, and providing a platform for advanced research and development. The design goals reflect this forward-thinking approach:

- Maximum Capability: Prioritize comprehensive understanding, complex reasoning, and advanced generative abilities over raw speed or cost efficiency.

- Multimodal Integration: Seamlessly process and generate content across different modalities – text, images, audio, and potentially video – to mimic human-like perception and interaction.

- Nuanced Understanding and Contextual Awareness: Excel at handling subtle linguistic cues, implicit meanings, and maintaining long-context coherence, crucial for intricate tasks.

- Creative and Open-ended Generation: Produce highly original, imaginative, and diverse outputs across various domains, from storytelling to design.

- Research and Development Platform: Serve as a sandbox for exploring new AI applications, enabling users to experiment with emerging features and push the boundaries of current paradigms.

Key Features and Capabilities

O1 Preview's feature set is designed to tackle challenges that require deep cognitive abilities and broad general intelligence:

- Advanced Language Understanding and Generation: It demonstrates superior performance in tasks requiring intricate understanding of human language, including complex reasoning, inferring intent, and generating highly coherent and creative long-form text. This includes sophisticated dialogue management, legal document analysis, and academic writing assistance.

- Multimodal Reasoning: One of O1 Preview's standout features would likely be its ability to integrate and reason across different data types. For example, it could analyze an image, understand the context described in text, and then generate an audio narration that summarizes both. This capability opens doors to truly interactive and immersive AI experiences.

- Problem Solving and Code Generation: Excelling in logical deduction, mathematical problem-solving, and generating highly optimized, contextually relevant code snippets or entire functions, even for complex programming challenges.

- Creative Content Creation: From drafting compelling marketing copy and intricate fictional narratives to generating artistic prompts and even contributing to musical compositions, O1 Preview aims for unparalleled creative output.

- Long-Context Window: Possessing an extremely large context window, allowing it to process and recall information from extensive documents, conversations, or codebases, maintaining coherence over extended interactions.

- Fine-grained Control and Customization: Offering advanced parameters and fine-tuning options, enabling expert users to sculpt its behavior and output with precision for highly specialized applications.

Ideal Use Cases

O1 Preview is best suited for applications where innovation, depth of understanding, and the ability to handle complex, unstructured problems are paramount. These include:

- Advanced Research and Development: Serving as a powerful assistant for scientific discovery, hypothesis generation, and literature review synthesis.

- High-Value Content Creation: Generating original articles, marketing campaigns, video scripts, or even entire books with sophisticated narrative arcs and stylistic nuances.

- Complex Problem Solving: Aiding in legal document analysis, medical diagnostics, financial modeling, or strategic planning where nuanced interpretation and multi-faceted reasoning are required.

- Cutting-Edge AI Applications: Powering next-generation virtual assistants that can understand emotional cues, multimodal interaction systems, or highly sophisticated game AI.

- Personalized Learning and Tutoring: Creating dynamic, adaptive learning paths and providing deep explanations tailored to individual student needs, potentially even assessing nuanced understanding through varied modalities.

- Creative Industries: Assisting artists, designers, and musicians in overcoming creative blocks, generating novel ideas, and even automating parts of the creative process.

Performance Metrics and Considerations

While O1 Mini prioritizes speed and cost, O1 Preview's performance metrics tell a different story, focusing on depth and breadth:

- Accuracy and Nuance: Extremely high accuracy in complex tasks, with a remarkable ability to grasp subtleties and context that smaller models might miss.

- Reasoning Depth: Superior performance in logical reasoning, multi-step problem solving, and tasks requiring abstract thought.

- Output Quality: Exceptionally high-quality, creative, and coherent outputs across various modalities, often indistinguishable from human-generated content.

- Computational Cost: Generally higher cost per inference due to its larger size, more complex architecture, and greater computational demands. This is the trade-off for its advanced capabilities.

- Latency: Potentially higher latency compared to O1 Mini, especially for very complex queries or multimodal interactions, as more processing is required. However, for applications where quality and depth are more critical than millisecond responses, this is an acceptable compromise.

O1 Preview, by its nature, would not be directly comparable to gpt-4o mini. Instead, it would be positioned against models like the full GPT-4 or future, even more advanced research models. It's about pushing the frontier, where the goal isn't just to be efficient but to be intelligent in ways previously unachievable by machines. Its "Preview" designation also hints that it might still be in active development, with features evolving and performance being continuously optimized, reflecting its role as a pioneering technology.

O1 Mini vs O1 Preview: A Comprehensive AI Model Comparison

The fundamental distinction between O1 Mini and O1 Preview boils down to a classic engineering trade-off: efficiency and accessibility versus comprehensive capability and frontier-pushing innovation. This AI model comparison aims to provide a structured analysis across several critical dimensions, helping you understand where each model excels and where its limitations lie.

1. Performance: Speed, Accuracy, and Token Limits

- O1 Mini:

- Speed: Extremely fast inference speeds, often sub-100ms for typical requests, making it ideal for real-time applications. This speed is a direct result of its optimized architecture and potentially smaller parameter count.

- Accuracy: High accuracy for common, well-defined tasks (e.g., classification, simple summarization, basic Q&A). Its performance might degrade when faced with highly ambiguous, complex, or open-ended prompts requiring deep reasoning or creative synthesis.

- Token Limits (Context Window): Sufficiently large context window for most routine tasks, handling typical conversations or document excerpts. Designed for efficiency, not for processing entire novels in a single prompt.

- O1 Preview:

- Speed: Generally slower inference speeds, potentially ranging from hundreds of milliseconds to several seconds for complex, multimodal queries. This is due to its larger size and more intricate processing requirements.

- Accuracy: Exceptional accuracy and nuance across a vast range of complex tasks, including advanced reasoning, creative generation, and multimodal understanding. It excels where O1 Mini might falter, providing deeper insights and more coherent, high-quality outputs.

- Token Limits (Context Window): Likely boasts a significantly larger context window, enabling it to process and maintain context over extensive documents, long-form conversations, or large codebases, facilitating more sophisticated interactions and analyses.

2. Cost-Effectiveness

- O1 Mini: Designed to be highly cost-effective. Its optimized architecture and faster inference reduce computational resource consumption per query, resulting in a lower price point per token or per API call. This makes it an excellent choice for applications with high query volumes and tight budgets.

- O1 Preview: Expect a higher cost per inference due to its increased computational complexity, larger model size, and the cutting-edge nature of its capabilities. It's an investment in superior quality and advanced features, suitable for high-value applications where the quality of output justifies the higher expense.

3. Latency

- O1 Mini: Offers industry-leading low latency, crucial for real-time user experiences like chatbots, live recommendations, or interactive voice assistants. Minimizing delay is a core design principle.

- O1 Preview: Will typically exhibit higher latency, especially when processing complex requests involving multiple modalities or requiring extensive reasoning. While optimizations are continuous, its primary goal is depth and quality, not instantaneous response.

4. Specific Use Cases and Applicability

- O1 Mini: Best suited for:

- High-throughput, low-latency applications.

- Routine text processing (summarization, classification).

- Customer service automation.

- Basic content generation (e.g., product descriptions, simple emails).

- Large-scale data analysis and insights extraction from structured text.

- Complementing existing systems where speed and cost are bottlenecks.

- Benchmarking against models like gpt-4o mini for similar use cases, often aiming to surpass it in specific efficiency metrics.

- O1 Preview: Ideal for:

- Advanced research and development.

- Creative content generation (long-form articles, scripts, novels).

- Complex problem-solving (legal, medical, scientific domains).

- Multimodal applications (image understanding, video analysis, audio generation).

- Highly nuanced and contextual understanding tasks.

- Applications requiring deep reasoning, strategic planning, or critical thinking.

- Developing entirely new AI capabilities and paradigms.

5. Ease of Integration and Developer Experience

- O1 Mini: Likely offers a very straightforward API, minimal setup, and extensive documentation, making it easy for developers to integrate into existing workflows. Its focus on common tasks means fewer edge cases and more predictable behavior.

- O1 Preview: While offering robust API access, its advanced features and potential experimental nature might require a steeper learning curve. Developers might need to understand more complex prompting techniques, manage multimodal inputs, or handle more varied output formats, potentially requiring more specialized knowledge.

6. Scalability

- O1 Mini: Designed for immense scalability. Its efficiency allows for handling massive query volumes with fewer computational resources, making it highly suitable for large-scale enterprise deployments and applications with fluctuating traffic.

- O1 Preview: While scalable in theory, scaling to the same cost-efficient degree as O1 Mini for high-volume, real-time applications might be more challenging due to its higher resource demands per inference. It's more about scaling capability to complex problems rather than scaling efficiency across millions of simple requests.

7. Future Prospects and Evolution

- O1 Mini: Its evolution will likely focus on further architectural optimizations, even greater speed, reduced cost, and expansion into more specialized "mini" domains (e.g., O1 Mini for Code, O1 Mini for Healthcare). It's about refining efficiency for established AI tasks.

- O1 Preview: As a "Preview" model, its future is dynamic and potentially revolutionary. It will likely see rapid iteration on its core capabilities, introduction of new multimodal features, improvements in reasoning, and integration of cutting-edge research findings. It represents a living, evolving platform at the forefront of AI innovation.

Comparative Summary Table

To further clarify the distinctions, here's a comparative overview:

| Feature/Aspect | O1 Mini | O1 Preview |

|---|---|---|

| Primary Goal | Efficiency, Speed, Cost-Effectiveness | Maximum Capability, Nuance, Advanced Reasoning |

| Target Use Cases | High-volume, low-latency, routine tasks | Complex, creative, multimodal, research-oriented |

| Inference Speed | Very Fast (sub-100ms) | Slower (hundreds of ms to seconds) |

| Cost per Inference | Low | High |

| Accuracy | High for common tasks, good generalist | Exceptional for complex, nuanced tasks |

| Reasoning | Good for straightforward logic, fact retrieval | Superior for complex, multi-step, abstract reasoning |

| Creativity | Functional, template-based generation | Highly imaginative, open-ended, diverse outputs |

| Multimodal | Limited or None | Integrated (text, image, audio, etc.) |

| Context Window | Sufficient for typical interactions | Very Large, long-form coherence |

| Scalability | Excellent for high-volume, cost-sensitive | Good for complex tasks, higher resource demand |

| Developer Focus | Ease of integration, predictable performance | Cutting-edge features, fine-grained control |

| Analogy (Car) | Efficient commuter car, sports compact | Luxury performance sedan, concept car |

| Benchmark Context | gpt-4o mini (focus on efficiency) | GPT-4, frontier research models (focus on capability) |

XRoute is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers(including OpenAI, Anthropic, Mistral, Llama2, Google Gemini, and more), enabling seamless development of AI-driven applications, chatbots, and automated workflows.

Real-World Applications and Scenarios: Where Each Model Shines

To truly grasp the implications of choosing between O1 Mini and O1 Preview, let's explore concrete real-world scenarios where each model would be the superior choice.

Scenarios for O1 Mini

- E-commerce Customer Service Automation:

- Challenge: A large online retailer receives millions of customer inquiries daily regarding order status, product returns, and basic technical support. Human agents are overwhelmed, leading to long wait times and customer dissatisfaction.

- O1 Mini Solution: Integrate O1 Mini into an AI-powered chatbot. It can instantly understand customer intent, retrieve relevant information from databases, and generate accurate, polite responses in milliseconds. For example, "What's the status of my order #12345?" would be processed and answered immediately. The high throughput and low cost of O1 Mini make it economically viable to handle such a massive volume of interactions without breaking the bank.

- Why O1 Mini: Its speed, cost-effectiveness, and reliability for routine, well-defined tasks are perfectly aligned with the demands of high-volume customer service.

- Real-time Content Moderation for Social Media:

- Challenge: A social media platform needs to monitor billions of user-generated posts, comments, and images in real-time to identify and remove harmful content (hate speech, spam, misinformation) to maintain a safe environment.

- O1 Mini Solution: O1 Mini can be deployed as the first line of defense. Its rapid text classification capabilities allow it to quickly scan vast amounts of text, flag potentially problematic content with high accuracy, and prioritize it for human review. It can efficiently differentiate between benign and malicious intent.

- Why O1 Mini: The sheer volume and real-time nature of content moderation demand a model that is incredibly fast and cheap per inference. O1 Mini's efficiency is paramount here.

- Automated Product Description Generation:

- Challenge: An online marketplace with millions of products needs unique, engaging, and SEO-friendly product descriptions generated quickly and at scale. Manual writing is impossible, and template-based approaches lack variety.

- O1 Mini Solution: Provide O1 Mini with structured product data (features, specifications, keywords). It can then generate unique, coherent, and grammatically correct product descriptions optimized for e-commerce, ensuring consistency and saving immense time.

- Why O1 Mini: Generating large volumes of relatively straightforward, repetitive text is its strong suit. The focus is on consistency, speed, and cost, not deep creative narrative.

- Backend Data Extraction and Structuring:

- Challenge: A financial institution needs to extract specific data points (e.g., company names, dates, financial figures, legal clauses) from thousands of unstructured PDF reports daily for analysis and compliance.

- O1 Mini Solution: Configure O1 Mini to identify and extract predefined entities and relationships from these documents. Its robust natural language understanding ensures accurate data extraction, which can then be fed into structured databases for further processing.

- Why O1 Mini: Its efficiency in understanding context and performing targeted information extraction makes it ideal for high-volume, data-intensive tasks.

Scenarios for O1 Preview

- Generative AI for Marketing Campaigns:

- Challenge: A marketing agency needs to develop highly creative, personalized, and multi-channel campaigns (text for ads, video scripts, social media posts, blog articles) for diverse client segments, often requiring novel ideas and intricate storytelling.

- O1 Preview Solution: Leverage O1 Preview's advanced creative generation capabilities. It can brainstorm innovative campaign concepts, write compelling long-form copy, generate engaging video scripts from brief prompts, and even suggest visual styles or audio cues, all while maintaining brand voice and target audience resonance.

- Why O1 Preview: Its superior creativity, nuanced understanding of marketing psychology, and ability to generate high-quality, diverse content across modalities are indispensable.

- Advanced Medical Diagnostics and Research Assistant:

- Challenge: Medical researchers are sifting through vast amounts of scientific literature, patient records, and genomic data to identify complex disease patterns, propose novel treatment hypotheses, or understand drug interactions. This requires deep contextual understanding and complex reasoning.

- O1 Preview Solution: O1 Preview can analyze extensive medical texts, patient histories, and even interpret medical images (if its multimodal capabilities extend to specialized medical imaging). It can synthesize information from disparate sources, identify subtle correlations, summarize complex research papers into actionable insights, and even suggest potential areas for further investigation or novel drug targets based on complex biological pathways.

- Why O1 Preview: Its deep reasoning, ability to handle vast and complex contexts, and potential for multimodal input processing are critical for high-stakes medical applications.

- Intelligent Virtual Assistant for Lawyers:

- Challenge: A law firm needs an AI assistant that can summarize lengthy legal briefs, identify relevant case precedents across thousands of documents, draft initial legal arguments, and answer complex jurisprudential questions, requiring precise language and deep legal reasoning.

- O1 Preview Solution: Integrate O1 Preview to serve as a legal research and drafting assistant. Given a complex legal scenario, it can retrieve pertinent clauses from contracts, analyze past judgments, highlight relevant statutes, and even draft initial arguments, understanding the subtle nuances of legal language and implications.

- Why O1 Preview: The need for extreme accuracy, deep contextual understanding of legal jargon, and advanced reasoning to synthesize complex information is a perfect fit for O1 Preview.

- Interactive Educational Content Creation for STEM:

- Challenge: An educational technology company wants to create highly interactive, personalized learning modules for complex STEM subjects, allowing students to ask open-ended questions, receive detailed explanations, and even interact with simulations.

- O1 Preview Solution: O1 Preview can generate dynamic explanations for complex scientific concepts, adapt its teaching style based on student interaction, solve multi-step mathematical problems with detailed working, and even generate visual aids or interactive simulations based on textual descriptions. Its multimodal capabilities could allow students to ask questions verbally, show their work via an image, and receive a rich, interactive explanation.

- Why O1 Preview: Its advanced reasoning, ability to generate diverse and high-quality content, and potential for multimodal interaction enable a truly personalized and effective learning experience.

These scenarios clearly illustrate that the choice between O1 Mini and O1 Preview is not about which model is "better" in an absolute sense, but rather which model is "right" for the specific demands and constraints of a given project.

Choosing Your Champion: Factors to Consider

Deciding between O1 Mini and O1 Preview requires a strategic approach, weighing your project's unique requirements against the strengths and limitations of each model. There's no single "best" choice; only the most appropriate one for your specific context. Here are the key factors to meticulously consider:

1. Project Requirements and Task Complexity

- For O1 Mini: If your project involves high-volume, repetitive tasks that require speed and efficiency (e.g., customer support, data entry automation, simple content generation, sentiment analysis of social media feeds), O1 Mini is likely your optimal choice. It excels at tasks with clear inputs, predictable outputs, and a need for rapid processing. Think of tasks that, while requiring intelligence, are largely "pattern recognition" or "information retrieval" focused.

- For O1 Preview: If your project demands deep understanding, creative generation, complex problem-solving, nuanced reasoning, or multimodal interaction (e.g., drafting legal documents, generating original marketing campaigns, scientific research assistance, advanced educational tools), O1 Preview is the clear winner. It's built for ambiguity, creativity, and tasks that require "cognitive leaps" beyond simple pattern matching.

2. Budget Constraints and Cost-Effectiveness

- For O1 Mini: If budget is a primary concern, especially for high-volume applications, O1 Mini's low cost per inference makes it incredibly attractive. The total cost of ownership for running an O1 Mini-powered application will generally be significantly lower, allowing for greater scalability within financial limits. This is particularly important for startups or projects with constrained resources that still need robust AI capabilities.

- For O1 Preview: Be prepared for a higher operational cost. The advanced capabilities of O1 Preview come with a premium, reflecting the increased computational resources required for its larger architecture and more complex processing. This model is best justified for high-value applications where the quality and depth of the AI's output directly translate into substantial business value or competitive advantage, making the higher investment worthwhile.

3. Latency Sensitivity

- For O1 Mini: If your application is user-facing and real-time responsiveness is paramount (e.g., interactive chatbots, live recommendations, gaming AI), O1 Mini's ultra-low latency is a non-negotiable advantage. Delays of even a few hundred milliseconds can degrade the user experience significantly in these scenarios.

- For O1 Preview: If your application can tolerate slightly longer response times (e.g., backend content generation, offline data analysis, complex research tasks where the user waits for a comprehensive answer), O1 Preview's higher latency is an acceptable trade-off for its superior output quality and depth. Focus on the value of the output rather than instantaneous delivery.

4. Need for Multimodal Capabilities

- For O1 Mini: If your project primarily involves text-based interactions or tasks, or if multimodal aspects can be handled by separate, specialized models, O1 Mini's lack of inherent multimodal integration (or limited scope) is not a deterrent.

- For O1 Preview: If your application requires seamless processing and generation across different modalities—such as understanding an image and generating a descriptive caption, or taking spoken input and responding with both text and a generated graphic—O1 Preview's integrated multimodal capabilities are essential. This is a significant differentiator for truly immersive and context-rich AI experiences.

5. Developer Experience and Integration Complexity

- For O1 Mini: If your development team prioritizes quick integration, straightforward API usage, and predictable performance, O1 Mini offers a smoother developer experience. Its simpler nature means fewer parameters to tweak and a more direct path from concept to deployment. This is ideal for agile development cycles.

- For O1 Preview: Developers working with O1 Preview should be prepared for potentially more complex interactions. Leveraging its advanced features might require deeper understanding of prompt engineering, managing multimodal inputs, and handling a wider array of output formats. It’s an ideal tool for experienced AI engineers pushing the boundaries, but might require a steeper learning curve for newcomers.

6. Scalability and Throughput Requirements

- For O1 Mini: For applications designed to serve millions or billions of requests, O1 Mini's design for high throughput and efficient resource utilization ensures scalable performance without exponential cost increases. Its "mini" nature is a huge advantage when scaling up.

- For O1 Preview: While capable of scaling, O1 Preview's higher resource demand per inference means that scaling to extremely high request volumes will be significantly more expensive. It's more suited for scaling the complexity of tasks it can handle across a range of users or projects, rather than simply processing a massive number of basic requests efficiently.

By carefully evaluating these factors against your project's specific context, you can confidently determine whether O1 Mini's agile efficiency or O1 Preview's frontier capabilities are the right fit. It's about optimizing for your definition of success.

Navigating the AI Ecosystem with Unified Platforms: Leveraging XRoute.AI

The decision of o1 mini vs o1 preview is a critical one, but it's just one piece of the puzzle in today's dynamic AI landscape. As businesses and developers increasingly rely on diverse AI models—perhaps using O1 Mini for high-volume customer service and O1 Preview for creative marketing—managing these disparate APIs can become a significant operational challenge. Each model often comes with its own unique API endpoints, authentication mechanisms, rate limits, and pricing structures. This complexity can hinder development speed, increase maintenance overhead, and make it difficult to switch between models or experiment with new ones efficiently.

This is where platforms like XRoute.AI become invaluable. XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. Imagine a single, consistent interface that allows you to access not just O1 Mini and O1 Preview, but also potentially other specialized models, without having to integrate each one individually.

How XRoute.AI Simplifies AI Model Management:

- Unified, OpenAI-Compatible Endpoint: XRoute.AI provides a single, OpenAI-compatible endpoint. This means that if you're already familiar with OpenAI's API, integrating O1 Mini, O1 Preview, or any of the 60+ AI models from over 20 active providers becomes remarkably straightforward. You write your code once, and you can switch between models with a simple configuration change, significantly reducing development time and effort.

- Seamless Integration for Development: By abstracting away the complexities of multiple API connections, XRoute.AI enables seamless development of AI-driven applications, chatbots, and automated workflows. Whether you're building a new app or enhancing an existing one, the developer-friendly tools offered by XRoute.AI ensure that your focus remains on innovation, not on managing API sprawl.

- Low Latency AI: When considering models like O1 Mini where low latency is paramount, XRoute.AI's focus on low latency AI ensures that the unified API layer doesn't introduce unnecessary delays. It's engineered to provide efficient routing and optimized performance, allowing your applications to maintain the speed and responsiveness critical for real-time interactions.

- Cost-Effective AI: For businesses weighing the cost implications of using models like O1 Mini for scale versus O1 Preview for advanced tasks, XRoute.AI offers advantages in cost-effective AI. By providing a flexible pricing model and potentially optimizing routing to the best-performing or most cost-efficient models for a given task, it helps you manage your AI budget effectively. This might include intelligent load balancing or dynamic model selection based on your pre-defined cost thresholds.

- High Throughput and Scalability: As your application grows, the ability to handle high volumes of requests efficiently is crucial. XRoute.AI’s platform is built for high throughput and scalability, ensuring that whether you're using O1 Mini for millions of simple queries or O1 Preview for complex, resource-intensive tasks, your infrastructure can keep pace with demand.

- Future-Proofing Your AI Strategy: The AI ecosystem is constantly evolving. Today it's O1 Mini vs O1 Preview; tomorrow it could be something entirely new. XRoute.AI helps empower users to build intelligent solutions without the complexity of managing multiple API connections, allowing you to easily experiment with new models and providers as they emerge, without extensive re-engineering. This makes your AI strategy adaptable and future-proof.

In essence, XRoute.AI acts as an intelligent intermediary, providing a robust, reliable, and flexible layer between your application and the myriad of AI models available. Whether your primary concern is the lightning speed of O1 Mini, the deep intelligence of O1 Preview, or the ability to dynamically switch between them based on task requirements, XRoute.AI simplifies the entire process, making advanced AI more accessible and manageable for projects of all sizes, from startups to enterprise-level applications. It transforms the daunting task of AI model comparison and integration into a seamless part of your development workflow.

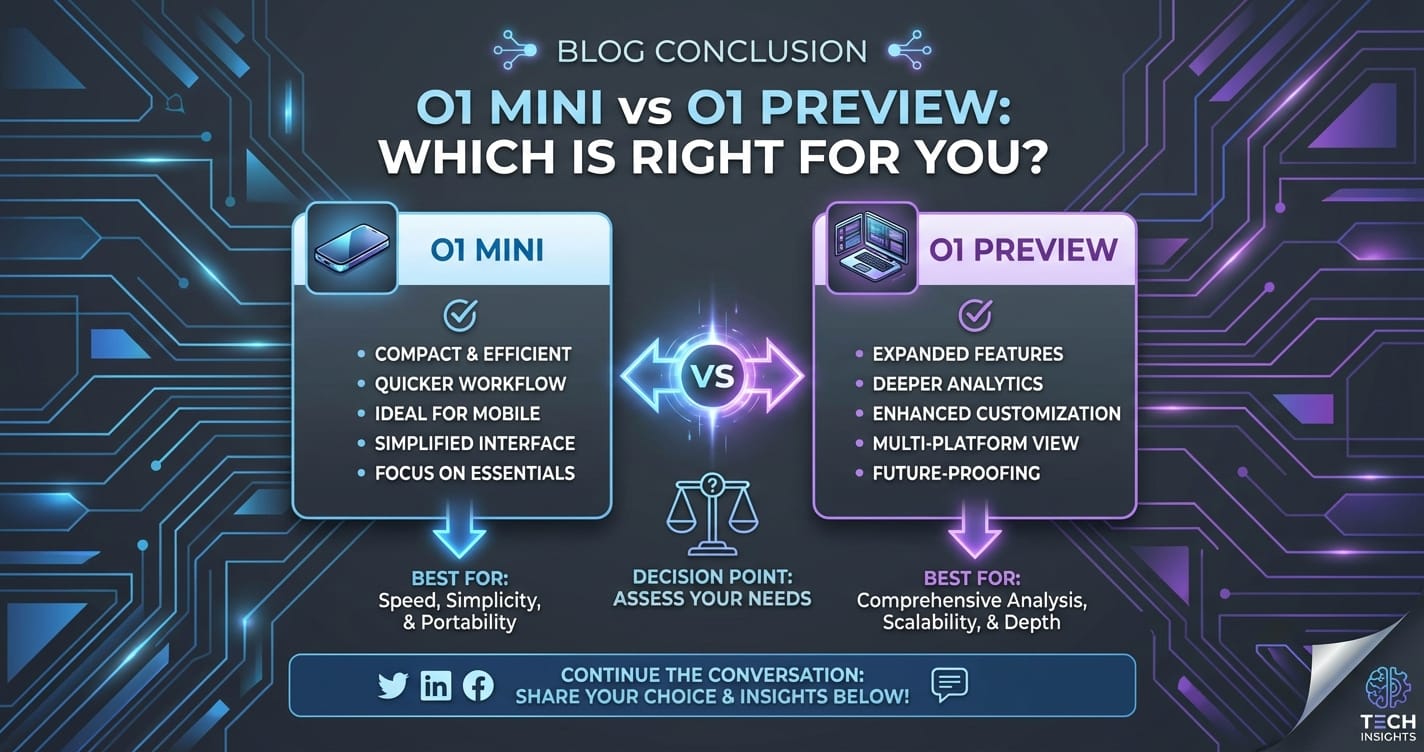

Conclusion: Making the Right AI Choice for Your Future

The decision between O1 Mini and O1 Preview is a microcosm of the broader challenges and opportunities presented by the rapidly advancing field of artificial intelligence. It highlights a fundamental dichotomy: the pursuit of hyper-efficiency and cost-effectiveness for widespread application versus the relentless quest for ultimate capability and groundbreaking innovation. Neither model is inherently "better"; rather, each is exquisitely designed for a specific set of purposes and under particular constraints.

O1 Mini stands as the champion of speed, scalability, and economical operation. It is the ideal choice for businesses and developers who require high-volume, low-latency processing of routine yet critical AI tasks. Its strength lies in democratizing advanced AI, making it accessible for applications where efficiency and a predictable cost structure are paramount. Think of it as the highly optimized engine for the everyday, yet demanding, workloads of the digital economy, much like how gpt-4o mini aims to bring powerful capabilities to wider use cases at an accessible price point.

Conversely, O1 Preview is the vanguard of AI intelligence. It offers unparalleled depth of understanding, advanced reasoning, and creative generation across modalities, pushing the boundaries of what AI can achieve. While it comes with a higher computational cost and potentially longer latency, its capabilities are indispensable for complex problem-solving, cutting-edge research, and truly innovative applications that require an AI to think, create, and reason in ways previously unimaginable.

Your ultimate choice hinges on a thorough understanding of your project's unique demands: its budget, desired latency, the complexity of the tasks, and the long-term strategic goals. Are you building a system that needs to operate at immense scale with minimal cost, or are you forging new ground, requiring the most intelligent and versatile AI possible?

Furthermore, recognizing that the AI landscape is diverse and often requires a combination of models, platforms like XRoute.AI offer a crucial advantage. By providing a unified, developer-friendly API to a vast array of models, XRoute.AI simplifies the integration, management, and cost-optimization across your AI portfolio. It ensures that regardless of whether you choose O1 Mini, O1 Preview, or a blend of multiple models, your development process remains agile and efficient, allowing you to focus on building intelligent solutions rather than wrestling with API complexities.

In the rapidly evolving world of AI, an informed decision today ensures a robust and future-proof solution tomorrow. By carefully considering the insights from this AI model comparison and leveraging platforms designed for flexibility, you are well-equipped to make the choice that propels your next AI endeavor to success.

Frequently Asked Questions (FAQ)

Q1: What is the main difference between O1 Mini and O1 Preview?

A1: The main difference lies in their primary design goals. O1 Mini is optimized for speed, cost-effectiveness, and high-volume, low-latency processing of routine AI tasks. O1 Preview, on the other hand, prioritizes maximum capability, nuanced understanding, advanced reasoning, and multimodal integration for complex, creative, and research-oriented applications, typically at a higher cost and potentially higher latency.

Q2: Which model should I choose if my application needs very fast response times, like a real-time chatbot?

A2: For applications requiring very fast response times and low latency, O1 Mini is the superior choice. Its architecture is specifically designed for rapid inference speeds and efficient handling of high query volumes, making it ideal for real-time user interactions like chatbots, live recommendations, and instant data processing.

Q3: Can O1 Preview handle image and audio inputs, or is it only for text?

A3: O1 Preview is designed with multimodal capabilities, meaning it can seamlessly process and generate content across different modalities, including text, images, and audio, and potentially even video. This allows for more comprehensive understanding and interaction compared to models primarily focused on text.

Q4: How does the cost compare between O1 Mini and O1 Preview?

A4: O1 Mini is significantly more cost-effective per inference due to its optimized architecture and lower computational demands. O1 Preview, with its larger size and advanced capabilities, will typically have a higher cost per inference. The choice depends on whether your project prioritizes budget for high-volume tasks or investment in superior quality and complex functionality.

Q5: How can a platform like XRoute.AI help me manage both O1 Mini and O1 Preview, or other AI models?

A5: XRoute.AI acts as a unified API platform that streamlines access to over 60 AI models, including hypothetical ones like O1 Mini and O1 Preview. It provides a single, OpenAI-compatible endpoint, simplifying integration and allowing you to switch between models easily. This reduces development complexity, helps with cost-effective AI management, ensures low latency routing, and offers scalability, making your AI strategy more flexible and future-proof.

🚀You can securely and efficiently connect to thousands of data sources with XRoute in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it: 1. Visit https://xroute.ai/ and sign up for a free account. 2. Upon registration, explore the platform. 3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \

--header 'Authorization: Bearer $apikey' \

--header 'Content-Type: application/json' \

--data '{

"model": "gpt-5",

"messages": [

{

"content": "Your text prompt here",

"role": "user"

}

]

}'

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.