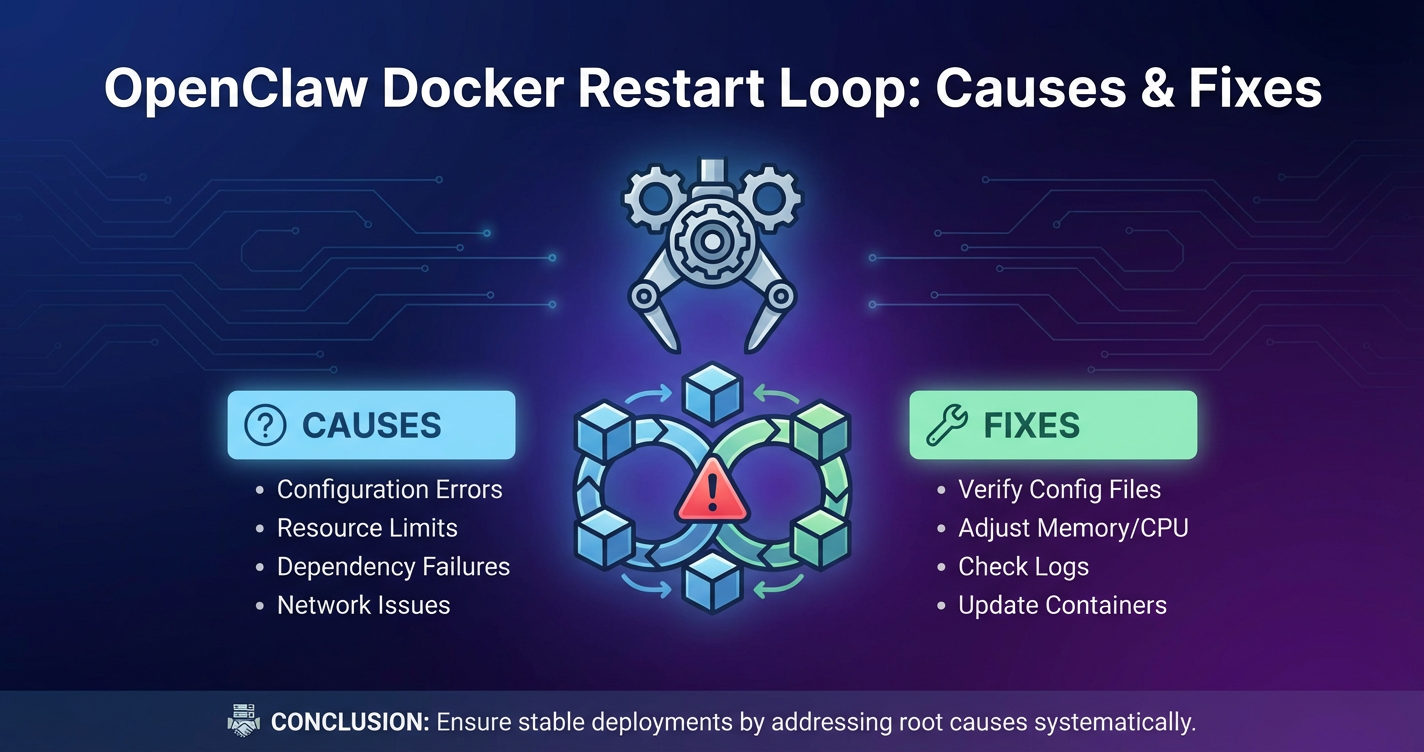

OpenClaw Docker Restart Loop: Causes & Fixes

The hum of servers, the steady flow of data, and the seamless operation of your applications are the hallmarks of a well-maintained infrastructure. Yet, for many developers and system administrators, this tranquility can be shattered by a dreaded sight: a Docker container caught in a relentless restart loop. This issue is particularly frustrating when it affects critical applications like OpenClaw, a hypothetical but representative high-performance data processing or AI inference engine that demands unwavering stability. A restart loop not only disrupts operations but also hints at deeper underlying problems, demanding immediate and thorough investigation.

OpenClaw, in this context, embodies an application critical to business functions—perhaps it's performing real-time analytics, orchestrating complex machine learning pipelines, or managing vital data transformations. Its consistent availability is paramount, and any interruption, especially a persistent restart loop within its Dockerized environment, can lead to significant data processing delays, service outages, and potential financial losses. The very promise of Docker—isolation, portability, and streamlined deployment—can turn into a nightmare when a container repeatedly crashes and attempts to restart, often without clear explanations in the immediate logs.

This comprehensive guide is designed to dissect the multifaceted causes behind OpenClaw Docker restart loops. We will navigate through a labyrinth of potential issues, ranging from subtle resource constraints and intricate application-level bugs to Docker engine misconfigurations and external service dependencies. More importantly, we will equip you with a robust arsenal of troubleshooting strategies and effective fixes. Our focus extends beyond merely stopping the loop; we aim for long-term stability, emphasizing performance optimization and cost optimization throughout our solutions, ensuring that your OpenClaw deployment not only runs reliably but also efficiently. By understanding the root causes and implementing best practices, you can transform a chaotic restart loop into a manageable, solvable challenge, ultimately fortifying your OpenClaw operations against future disruptions.

Understanding the OpenClaw Ecosystem and Docker's Role

Before diving into the intricacies of restart loops, it's crucial to establish a clear understanding of what OpenClaw represents and why Docker is so frequently chosen as its deployment platform. This foundational knowledge provides context for the subsequent troubleshooting steps.

What is OpenClaw? (A Hypothetical Application)

Let's envision OpenClaw as a sophisticated, resource-intensive application designed for high-throughput data processing and complex computational tasks. It might be:

- An AI/ML Inference Engine: Performing real-time predictions, natural language processing, or computer vision tasks, requiring significant GPU or CPU resources and often interacting with large language models (LLMs) or other specialized AI services.

- A Financial Trading Platform Component: Handling rapid transaction processing, market data analysis, or risk assessment, where low latency and high availability are non-negotiable.

- A Scientific Simulation Backend: Executing intricate mathematical models, requiring massive parallel processing capabilities and stable, uninterrupted runtime.

- A Large-Scale Data Transformation Pipeline Segment: Ingesting, cleaning, transforming, and loading vast datasets, demanding robust error handling and consistent resource allocation.

Regardless of its specific domain, OpenClaw's characteristic features would likely include:

- High Resource Demands: Extensive CPU, memory, or GPU usage.

- Complex Dependencies: Reliance on various libraries, databases, message queues, and potentially external APIs.

- Criticality: Its uninterrupted operation is vital for business continuity or research progress.

- Configuration Sensitivity: Specific settings and environment variables dictate its behavior and performance.

Why Docker for OpenClaw? The Advantages of Containerization

Docker has become the de facto standard for packaging and deploying applications, and for an application like OpenClaw, its benefits are particularly compelling:

- Portability and Consistency: Docker containers encapsulate OpenClaw and all its dependencies into a single, isolated unit. This ensures that OpenClaw runs identically across different environments—from a developer's laptop to a staging server, and finally to production—eliminating "it works on my machine" syndrome.

- Isolation: Each OpenClaw container operates in its own isolated environment, preventing conflicts with other applications or services running on the same host. This isolation extends to dependencies, network ports, and file systems, creating a clean slate for OpenClaw.

- Scalability: Docker makes it easy to scale OpenClaw instances horizontally. When demand increases, new containers can be spun up quickly to handle the load, and then scaled down when demand subsides. This dynamic scaling is crucial for performance optimization during peak times and contributes to cost optimization by utilizing resources only when needed.

- Resource Management: Docker allows defining precise resource limits (CPU, memory) for each container. This helps prevent a single OpenClaw instance from monopolizing host resources and affecting other applications, while also guiding efficient resource allocation.

- Faster Deployment and Rollbacks: Container images are lightweight and quick to deploy. If a new version of OpenClaw introduces issues, rolling back to a previous, stable image is straightforward and fast, minimizing downtime.

- Version Control and Reproducibility: Dockerfiles serve as blueprints for building OpenClaw images, allowing the entire build process to be version-controlled alongside the application code. This ensures reproducibility and simplifies auditing.

The Importance of Stable OpenClaw Operation

Given OpenClaw's hypothetical critical nature, its stability is paramount. A Docker restart loop signifies a severe breach of this stability, often leading to:

- Service Outages: If OpenClaw is a public-facing service or a backend for one, repeated restarts translate directly to unavailability.

- Data Inconsistencies or Loss: In data processing pipelines, a crashing OpenClaw could corrupt data in transit, fail to process critical batches, or leave data in an undefined state.

- Performance Degradation: Even if a container eventually recovers, the constant cycle of crashing and restarting consumes valuable host resources, degrades overall system performance, and can delay processing.

- Resource Wastage (Cost Implications): A container that fails to start or continuously restarts consumes CPU and memory resources without delivering value. This translates to inefficient infrastructure utilization and increased operational costs, directly impacting cost optimization efforts.

- Alert Fatigue: Persistent restart loops generate floods of alerts, desensitizing operations teams to genuine issues.

Understanding these implications underscores the urgency and meticulousness required in diagnosing and resolving OpenClaw Docker restart loops.

Decoding Docker Restart Loops - The Fundamentals

A Docker restart loop isn't just an inconvenience; it's a symptom, a flag indicating that something fundamental is amiss. To effectively troubleshoot, we must first understand what a restart loop is, how Docker handles container exits, and how to spot these issues using basic Docker commands.

What Constitutes a Restart Loop?

At its core, a Docker restart loop occurs when a container attempts to start, fails, exits with a non-zero status code (or sometimes even a zero status code if misconfigured), and then, due to its configured restart policy, immediately attempts to start again. This cycle repeats indefinitely until the underlying issue is resolved or the restart policy is manually overridden.

Common restart policies include:

- `no`: Do not automatically restart the container.

- `on-failure`: Restart only if the container exits with a non-zero exit code.

- `always`: Always restart the container, even if it exits cleanly.

- `unless-stopped`: Always restart unless the container is explicitly stopped.

For critical applications like OpenClaw, `always` or `unless-stopped` are common choices to ensure high availability, but they also mean that even a brief application crash will trigger a restart, potentially leading to a loop if the crash cause isn't ephemeral.

Common Docker Exit Codes and Their Meanings

The exit code of a container is a crucial piece of information, providing a hint about why the process inside failed. Understanding these codes is the first step in diagnosing a restart loop.

| Exit Code | Common Meaning | Potential OpenClaw Scenario |
|---|---|---|
| 0 | Success: The process exited cleanly. If a container exits with 0 and restarts, it usually indicates that the `CMD` or `ENTRYPOINT` process finished its work and exited, and the restart policy is set to `always` or `unless-stopped`. | A background worker that processes a single task and exits, but is expected to run continuously. Or, a misconfigured `CMD` in the Dockerfile that runs a script once and exits, rather than keeping OpenClaw's main process alive. |
| 1 | Catchall General Error: The process exited due to an unhandled error, typically an application-level failure, invalid arguments, or a generic program termination due to an issue. | OpenClaw application failed to initialize due to a critical configuration error (e.g., invalid database credentials, missing required environment variable), a syntax error in a configuration file, or an unhandled exception during startup that wasn't caught by the application's internal error handling. |
| 126 | Permission Problem/Command Not Invoked: Command invoked cannot execute (e.g., permission denied, not an executable file). | The `ENTRYPOINT` or `CMD` script for OpenClaw does not have execute permissions (`chmod +x`), or the shell specified in the shebang (`#!/bin/bash`) is missing or incorrect. |
| 127 | Command Not Found: The command specified in `ENTRYPOINT` or `CMD` does not exist in the container's `PATH`. | The main OpenClaw executable or a critical startup script is missing from the container image, or its path is not correctly specified in the `ENTRYPOINT`/`CMD`. This could happen if a dependency wasn't installed, or a `COPY` command in the Dockerfile failed. |
| 137 | SIGKILL (Out of Memory - OOM Killer): The container was sent a SIGKILL signal, typically by the host's Out Of Memory (OOM) killer because it exceeded its allocated memory limits. This is a common and critical exit code. | OpenClaw's memory consumption rapidly escalated during startup, exceeding the `--memory` limit set for the Docker container or the host's available RAM. This is especially prevalent with large datasets, complex AI models, or memory leaks within OpenClaw itself. This directly impacts performance optimization and needs careful resource allocation for cost optimization. |
| 139 | SIGSEGV (Segmentation Fault): The process tried to access a restricted memory location. Often indicates a bug in the application code, a corrupted library, or issues with native extensions. | A critical bug in OpenClaw's C/C++ components, a corrupted shared library it depends on, or an issue with its underlying runtime environment (e.g., JVM, Python interpreter) leading to a segmentation fault during initialization. |
| 143 | SIGTERM (Graceful Shutdown): The container received a SIGTERM signal, usually indicating a graceful shutdown request. If followed by a restart, it might be due to a health check failing or Docker daemon restarting. | OpenClaw might be shutting down gracefully because a health check has failed consistently, or the Docker daemon itself is cycling, causing all containers to be stopped and restarted. If OpenClaw isn't ready within a specified shutdown timeout, Docker might send a SIGKILL. |
| 255 | General Error/Application Specific: A generic error code, often used by applications to signify an unhandled error or a problem that doesn't fit into other specific categories. Can be highly application-dependent. | OpenClaw exited due to an internal exception not explicitly caught, or a critical system call failed during its startup sequence that the application simply reported as a generic failure before terminating. Could also indicate a failure to connect to an essential external service. |
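The codes above 128 in the table follow the common shell convention of 128 plus the signal number, so they can be decoded mechanically. The following is a minimal sketch of such a decoder; the function name `describe_exit_code` and the wording of the hints are illustrative choices, not part of Docker.

```python
import signal


def describe_exit_code(code: int) -> str:
    """Return a short, human-readable hint for a container exit code."""
    known = {
        0: "clean exit (check the restart policy and CMD/ENTRYPOINT)",
        1: "general application error",
        126: "command found but not executable",
        127: "command not found",
    }
    if code in known:
        return known[code]
    if code > 128:
        # By shell convention, 128 + N means "terminated by signal N".
        try:
            name = signal.Signals(code - 128).name
        except ValueError:
            # e.g. 255: not a signal, so treat it as application-defined.
            return f"exit code {code}: application-specific or unknown"
        return f"terminated by {name} (signal {code - 128})"
    return f"exit code {code}: application-specific"


if __name__ == "__main__":
    for code in (0, 1, 126, 127, 137, 139, 143, 255):
        print(code, "->", describe_exit_code(code))
```

A decoder like this is only a first hint: a 137, for example, tells you the process received SIGKILL, but not whether the OOM killer or a stop timeout sent it.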

How to Identify a Restart Loop

Identifying a restart loop involves monitoring the state and logs of your Docker containers.

- `docker ps`: shows currently running containers.

  ```bash
  docker ps
  ```

  Expected output snippet for a restarting container:

  ```
  CONTAINER ID   IMAGE             COMMAND                   CREATED         STATUS                         PORTS   NAMES
  a1b2c3d4e5f6   openclaw:latest   "/usr/local/bin/openc…"   2 minutes ago   Restarting (1) 2 seconds ago           openclaw-processor
  ```

  - If OpenClaw is in a restart loop, you'll see its `STATUS` column rapidly changing, often showing "Restarting (X) N seconds ago", where X is the last exit code, or brief periods of "Up". Add `-a` to also list containers that currently show "Exited (Y)".
  - `docker ps` does not print a restart counter, so read `RestartCount` from `docker inspect` instead. A rapidly incrementing number there is a clear indicator of a loop.

- `docker logs <container_id_or_name>`: your primary tool for understanding why the container is exiting.

  ```bash
  docker logs -f openclaw-processor
  ```

  This shows what OpenClaw prints to `stdout` and `stderr` before it crashes.

  - Run the command with the `--follow` (`-f`) flag to stream logs in real time.
  - Look for error messages, stack traces, warnings, or any output immediately preceding the container's exit.
  - Use `--tail X` to view only the last X lines of logs.

- `docker inspect <container_id_or_name>`: provides detailed low-level information about a container, including its restart count, exit code, and the error message (if any) from the last exit.

  ```bash
  docker inspect openclaw-processor | grep -E "ExitCode|OOMKilled|Error|RestartCount"
  ```

  Expected output snippet:

  ```json
  "OOMKilled": true,
  "ExitCode": 137,
  "Error": "",
  "RestartCount": 15,
  ```

  - Specifically, look for `State.ExitCode`, `State.OOMKilled`, `State.Error`, and `RestartCount`. An out-of-memory kill is reported through the boolean `State.OOMKilled`, not through the `Error` string.

- `docker events`: provides a real-time stream of events from the Docker daemon, including container `start`, `die`, `oom`, etc. This can be useful for seeing a broader picture of what's happening.

  ```bash
  docker events --filter "type=container" --filter "container=openclaw-processor"
  ```

By systematically using these commands and understanding the output, you can quickly move from identifying a restart loop to gathering crucial diagnostic information about its underlying cause.
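When the same checks are repeated across many containers, it can help to parse the JSON from `docker inspect` rather than grep it. The sketch below assumes the output has already been captured as a string; the helper names and the restart threshold of 3 are illustrative assumptions, while the field names (`State.Status`, `State.ExitCode`, `State.OOMKilled`, `State.Error`, `RestartCount`) are the ones `docker inspect` reports.

```python
import json


def summarize_inspect(inspect_json: str) -> dict:
    """Extract restart-loop indicators from `docker inspect` JSON output."""
    container = json.loads(inspect_json)[0]  # inspect returns a JSON array
    state = container.get("State", {})
    return {
        "status": state.get("Status"),
        "exit_code": state.get("ExitCode"),
        "oom_killed": state.get("OOMKilled", False),
        "error": state.get("Error", ""),
        "restart_count": container.get("RestartCount", 0),
    }


def looks_like_restart_loop(summary: dict, threshold: int = 3) -> bool:
    """Heuristic: flag a container that is restarting or has restarted often.

    The threshold of 3 is an arbitrary assumption, not a Docker default.
    """
    return summary["status"] == "restarting" or summary["restart_count"] >= threshold


if __name__ == "__main__":
    # Hand-written sample resembling `docker inspect openclaw-processor`.
    sample = json.dumps([{
        "State": {"Status": "restarting", "OOMKilled": True,
                  "ExitCode": 137, "Error": ""},
        "RestartCount": 15,
    }])
    summary = summarize_inspect(sample)
    print(summary, looks_like_restart_loop(summary))
```

In practice the input string would come from running `docker inspect <container>` and capturing its standard output.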

Deep Dive into Common Causes of OpenClaw Docker Restart Loops

The causes of Docker restart loops, especially for complex applications like OpenClaw, are diverse. They can stem from resource deprivation, misconfigurations, application bugs, or external dependencies. A systematic approach to diagnosis is crucial.

3.1 Resource Constraints: The Silent Killer

Resource starvation is one of the most insidious and common culprits behind container restarts. When OpenClaw demands more CPU, memory, or disk I/O than is available or allocated, the operating system or Docker itself might step in to terminate the process.

- CPU Starvation:
  - Cause: OpenClaw requires intensive computation, but its container is limited to a fraction of the available CPU (`--cpus` or `--cpu-shares`). If the application tries to burst beyond this, it might hang, become unresponsive, or internally time out on critical operations, leading to an application-level crash.
  - Symptoms: High `load average` on the host, `docker stats` showing CPU utilization frequently hitting its cap, OpenClaw logs showing timeouts or slow processing warnings before a crash.
  - Solution: Increase CPU limits if the host has spare capacity. Optimize OpenClaw's code for better CPU efficiency (e.g., parallelization, algorithm improvements) for better performance optimization. Consider profiling OpenClaw's CPU usage to pinpoint bottlenecks.

- Memory Exhaustion (OOM Killer):
  - Cause: This is perhaps the most frequent cause, leading to an exit code of `137`. OpenClaw consumes more RAM than the `--memory` limit set for its container or more than the host machine has available. The Linux kernel's Out Of Memory (OOM) killer then forcefully terminates the container's process to protect the host system.
  - Symptoms: `docker inspect` showing `"ExitCode": 137` and `"OOMKilled": true`. Logs might show the application crashing abruptly without a clean shutdown message. `docker stats` shows memory usage spiking near the limit just before the crash.
  - Solutions:
    - Increase Memory Limit: The simplest, though not always most sustainable, solution is to increase the `--memory` limit for the OpenClaw container if the host has sufficient free RAM.
    - Optimize OpenClaw's Memory Usage: This is the ideal, long-term solution. Identify and fix memory leaks within OpenClaw. Optimize data structures, reduce cached data, or process data in smaller chunks. For AI/ML models, explore techniques like quantization, pruning, or using smaller model architectures. This is a direct approach to performance optimization and critically important for cost optimization as memory is a premium resource.
    - Implement Swap Space: While not a direct fix for OOM, configuring swap space on the host can provide a buffer, preventing immediate OOM kills in some cases, though it significantly degrades performance.
    - Horizontal Scaling: If OpenClaw's workload can be distributed, running multiple smaller instances (each with lower memory requirements) across different hosts can alleviate pressure on a single host.

- Disk I/O Bottlenecks:
  - Cause: OpenClaw performs heavy read/write operations (e.g., logging, saving model checkpoints, processing large files) to a slow disk or an overloaded storage system. This can lead to application timeouts, unresponsiveness, and subsequent crashes.
  - Symptoms: `iostat` or `iotop` on the host showing high disk utilization, long queue lengths. OpenClaw logs might show "disk full" errors (if space is truly exhausted) or I/O timeout messages.
  - Solutions: Use faster storage (SSDs, NVMe). Separate I/O-intensive workloads. Optimize OpenClaw's disk access patterns (e.g., asynchronous I/O, reducing unnecessary writes, caching). Ensure the underlying storage volume type is appropriate for the workload (e.g., provisioned IOPS).
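Of the memory fixes listed above, "process data in smaller chunks" is the one that is easiest to illustrate in code. The sketch below is a generic pattern, not OpenClaw's actual pipeline: `iter_chunks` and `process_in_chunks` are hypothetical names, and summing integers stands in for real per-chunk work.

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def iter_chunks(items: Iterable[T], size: int) -> Iterator[List[T]]:
    """Yield successive lists of at most `size` items from any iterable."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(items)
    while True:
        chunk = list(islice(iterator, size))
        if not chunk:
            return
        yield chunk


def process_in_chunks(records: Iterable[int], size: int) -> int:
    """Toy workload: sum records chunk by chunk instead of all at once."""
    total = 0
    for chunk in iter_chunks(records, size):
        total += sum(chunk)  # stand-in for real per-chunk processing
    return total


if __name__ == "__main__":
    # range() is lazy, so at most `size` integers are materialized at a time.
    print(process_in_chunks(range(1_000_000), size=10_000))
```

The benefit depends on the input being lazy (a file handle, a database cursor, a generator); chunking a list that is already fully in memory does not lower peak usage.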

3.2 Application-Level Failures within OpenClaw

Even if Docker is perfectly configured, bugs or misconfigurations within OpenClaw itself can cause it to crash and restart. These are often the hardest to debug because the symptoms manifest within the application's runtime.

- Configuration Errors:
  - Cause: Incorrect database connection strings, missing API keys, malformed configuration files (JSON, YAML), incorrect file paths, or invalid environment variables. OpenClaw might fail to initialize essential components if these are wrong.
  - Symptoms: OpenClaw logs will explicitly mention configuration parsing errors, "connection refused," "file not found," or authentication failures. `ExitCode: 1` is common here.
  - Solutions:
    - Thorough Configuration Review: Double-check all configuration files and environment variables. Ensure they match the expected format and values.
    - Configuration Management: Use tools like Docker Compose `.env` files, Kubernetes ConfigMaps/Secrets, or environment variable injectors to manage configurations consistently.
    - Validation Logic: Implement robust configuration validation within OpenClaw's startup sequence to catch errors early and provide clear error messages.

- Dependency Issues:
  - Cause: Missing shared libraries, incorrect library versions (e.g., Python `pip install` issues, Java JAR conflicts), C/C++ runtime library mismatches, or system tool dependencies not present in the Docker image.
  - Symptoms: `Command not found` (`ExitCode: 127`), dynamic linker errors (`No such file or directory` for shared objects), or Python `ModuleNotFoundError`.
  - Solutions:
    - Precise Dockerfile: Use a minimal base image, explicitly install all required dependencies using package managers (apt, yum) and language-specific tools (pip, npm, maven). Pin dependency versions to avoid unexpected updates.
    - Multi-stage Builds: Reduce image size and ensure only necessary runtime dependencies are present in the final image.
    - `ldd` (for Linux): Inside a debugging container, `ldd /path/to/executable` can show missing shared libraries.

- Runtime Exceptions/Bugs in OpenClaw Code:
  - Cause: Unhandled exceptions (e.g., `NullPointerException`, `IndexOutOfBoundsException`, `ZeroDivisionError`), logical errors, or race conditions within OpenClaw's source code that cause it to terminate unexpectedly.
  - Symptoms: Detailed stack traces in OpenClaw's logs immediately preceding the crash. `ExitCode: 1`, `139` (segmentation fault) or `255` are common.
  - Solutions:
    - Thorough Log Analysis: This is paramount. Examine the stack trace carefully to pinpoint the exact line of code and the context of the error.
    - Debugging Mode: Run OpenClaw in a container with a debugger attached (if supported by the language/framework) or with verbose logging enabled.
    - Unit and Integration Tests: Robust test suites can catch many of these bugs before deployment.
    - Code Review: Peer reviews can often identify potential issues.
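The "validation logic" recommendation for configuration errors can be as small as a function that runs before anything else at startup. The following is a minimal sketch under stated assumptions: the variable names `OPENCLAW_DB_URL`, `OPENCLAW_API_KEY`, and `OPENCLAW_PORT` (and its default of 8080) are hypothetical, since OpenClaw itself is a hypothetical application.

```python
import sys
from typing import List, Mapping

# Hypothetical variable names; substitute whatever the application requires.
REQUIRED_VARS = ("OPENCLAW_DB_URL", "OPENCLAW_API_KEY")


def validate_config(env: Mapping[str, str]) -> List[str]:
    """Return human-readable problems; an empty list means the config is usable."""
    problems = []
    for name in REQUIRED_VARS:
        if not env.get(name, "").strip():
            problems.append(f"missing required environment variable: {name}")
    port = env.get("OPENCLAW_PORT", "8080")  # hypothetical setting and default
    try:
        port_ok = 1 <= int(port) <= 65535
    except ValueError:
        port_ok = False
    if not port_ok:
        problems.append(f"OPENCLAW_PORT must be an integer in 1-65535, got {port!r}")
    return problems


def startup_exit_code(env: Mapping[str, str]) -> int:
    """Log every problem to stderr and return the exit code startup should use."""
    problems = validate_config(env)
    for problem in problems:
        print(f"config error: {problem}", file=sys.stderr)
    return 1 if problems else 0


if __name__ == "__main__":
    # Demo with a deliberately incomplete environment; a real entrypoint would
    # pass os.environ and call sys.exit() with the returned code.
    demo_env = {"OPENCLAW_DB_URL": "postgres://db:5432/openclaw"}
    print("startup would exit with", startup_exit_code(demo_env))
```

Reporting all problems at once, rather than failing on the first, means a single look at `docker logs` is usually enough to fix the whole configuration.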

3.3 Docker Engine and Daemon Issues

Sometimes, the problem isn't with OpenClaw or its container configuration but with the Docker engine itself.

- Corrupted Docker Daemon:
  - Cause: The Docker daemon (`dockerd`) or its underlying storage (often `/var/lib/docker`) can become corrupted due to unexpected shutdowns, disk errors, or bugs in Docker itself.
  - Symptoms: Docker commands failing mysteriously, containers not starting, or the daemon crashing.
  - Solutions: Restart the Docker daemon (`sudo systemctl restart docker`). If persistent, consider pruning unused Docker objects (`docker system prune -a`) or, as a last resort, backing up and then cleaning `/var/lib/docker` (which will delete all images, containers, and volumes).

- Insufficient Disk Space for Docker Images/Containers:
  - Cause: The disk where Docker stores its images, container layers, and logs (typically `/var/lib/docker`) runs out of space. New containers cannot be created, or existing ones might fail to write logs or temporary files.
  - Symptoms: `docker logs` might show "No space left on device" errors. `df -h` on the host will show the partition being full.
  - Solutions:
    - Clean Up Docker: Use `docker system prune -a` to remove all stopped containers, unused networks, all unused images (not only dangling ones), and build cache. Volumes are left untouched unless you add `--volumes`.
    - Increase Disk Space: Extend the logical volume or add a new disk.
    - Optimize Image Size: Use multi-stage builds and minimal base images for OpenClaw to reduce its image footprint, which helps cost optimization by reducing storage needs.

- Network Configuration Problems:
  - Cause: Port conflicts (if OpenClaw tries to bind to a port already in use on the host), DNS resolution failures inside the container, or misconfigured Docker networks preventing OpenClaw from communicating with external services.
  - Symptoms: OpenClaw logs showing "Address already in use," "connection refused," or "hostname not found."
  - Solutions:
    - Check Port Mappings: Ensure the host port mapped to OpenClaw's internal port is free.
    - Verify DNS: Inside a running container, test DNS resolution (`ping google.com`, `cat /etc/resolv.conf`).
    - Inspect Docker Networks: Use `docker network inspect <network_name>` to verify network settings.
    - Firewall Rules: Ensure host firewalls (iptables, firewalld) are not blocking necessary traffic.

- Docker Version Incompatibilities:
  - Cause: OpenClaw's Dockerfile or runtime configuration relies on features only present in newer Docker versions, or conversely, a new Docker version introduces a breaking change.
  - Symptoms: Docker daemon errors during container startup, unexpected behavior, or specific features failing.
  - Solutions: Always test new Docker versions in a staging environment. Pin Docker engine versions in production. Consult Docker release notes for breaking changes.
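Running out of disk space is one of the few causes in this section that is cheap to detect before it triggers a restart loop. The sketch below uses only the standard library; the helper names and the 10% free-space threshold are assumptions chosen for illustration, not Docker defaults.

```python
import os
import shutil


def free_fraction(path: str) -> float:
    """Return the fraction of the filesystem containing `path` that is free."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total if usage.total else 0.0


def has_enough_space(path: str, min_free: float = 0.10) -> bool:
    """True if at least `min_free` (an assumed 10% default) of the disk is free."""
    return free_fraction(path) >= min_free


if __name__ == "__main__":
    # /var/lib/docker is Docker's usual data root on Linux; fall back to "/"
    # when it does not exist (for example, on a machine without Docker).
    target = "/var/lib/docker" if os.path.isdir("/var/lib/docker") else "/"
    print(f"{target}: {free_fraction(target):.1%} free, ok={has_enough_space(target)}")
```

A check like this could run from a cron job or monitoring agent on the host and alert well before "No space left on device" appears in the logs.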

3.4 Data Persistence and Volume Mounting Problems

OpenClaw, especially if it's stateful or needs to store processed data, will likely use Docker volumes. Issues with these volumes can easily trigger restarts.

- Incorrect Volume Mounts:
  - Cause: The host path specified for a volume mount doesn't exist, has incorrect permissions, or is empty when OpenClaw expects initial data. Or, a critical path is mounted as read-only (`:ro`) when OpenClaw needs to write to it.
  - Symptoms: OpenClaw logs showing "permission denied," "file not found," or errors related to inability to write to a specific directory.
  - Solutions: Double-check the `-v` or `volumes` mapping in `docker run` or Docker Compose. Ensure host paths exist and have correct permissions (e.g., `chown`, `chmod`). Test read/write access to the mounted host directory from within a temporary container.

- Data Corruption in Persistent Volumes:
  - Cause: The data stored in a volume (e.g., a database file, a model checkpoint) becomes corrupted due to a previous crash, disk error, or improper shutdown. OpenClaw tries to read this corrupted data during startup and fails.
  - Symptoms: Application logs showing errors related to database corruption, file parsing errors, or integrity checks failing.
  - Solutions: Back up the volume data before touching it. Attempt to repair the corrupted data using application-specific tools (e.g., database recovery tools). If repair is not possible, restore from a known good backup. Implement regular backups for all critical persistent data.

- Permissions Issues on Mounted Volumes:
  - Cause: The user or group ID (UID/GID) inside the OpenClaw container does not have the necessary read, write, or execute permissions on the files and directories mounted from the host.
  - Symptoms: "Permission denied" errors in OpenClaw logs for specific file operations.
  - Solutions:
    - Match UIDs/GIDs: Ensure the user running OpenClaw inside the container has a UID/GID that matches the permissions of the host's mounted directory. This can be done by using the `USER` instruction in the Dockerfile, or by adjusting host permissions.
    - Lax Permissions (Caution): As a temporary debugging step (not for production), you might set `chmod 777` on the host directory to rule out permissions, then narrow down the correct permissions.
    - `chown` in Entrypoint: In some cases, the entrypoint script might need to `chown` directories within the container to match the runtime user, especially for newly created directories.
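To tell a UID/GID mismatch apart from a read-only mount, it helps to compare the directory's owner with the process identity and then actually attempt a write. The sketch below could be run inside the container as a diagnostic; `check_mount` is a hypothetical helper and `/data` is an assumed mount point.

```python
import os
import tempfile


def check_mount(path: str) -> dict:
    """Report ownership and whether the current user can write to `path`."""
    info = os.stat(path)
    report = {
        "path": path,
        "owner_uid": info.st_uid,
        "owner_gid": info.st_gid,
        "process_uid": os.getuid(),
        "process_gid": os.getgid(),
        "mode": oct(info.st_mode & 0o777),
        "writable": False,
    }
    try:
        # Actually create a file: this also catches read-only (:ro) mounts,
        # which a check of the permission bits alone would miss.
        with tempfile.NamedTemporaryFile(dir=path):
            report["writable"] = True
    except OSError:
        pass
    return report


if __name__ == "__main__":
    # /data is a hypothetical mount point for the application's volume.
    target = "/data" if os.path.isdir("/data") else tempfile.gettempdir()
    print(check_mount(target))
```

If `writable` is false while `owner_uid` differs from `process_uid`, a UID mismatch is the likely cause; if the IDs match, look at the mount options instead.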

3.5 Entrypoint and Command Misconfigurations

The way a Docker container starts its main process is defined by ENTRYPOINT and CMD in the Dockerfile. Errors here are a common cause of immediate exits.

- Incorrect `ENTRYPOINT` or `CMD` in Dockerfile:
  - Cause: The specified command or script path is wrong, the arguments are incorrect, or the main OpenClaw process fails to launch.
  - Symptoms: `ExitCode: 127` (command not found) or `126` (permission denied/cannot execute).
  - Solutions:
    - Verify Paths: Ensure `ENTRYPOINT` and `CMD` point to correct executables or scripts inside the container image.
    - Test Interactively: Run the container with an interactive shell (`docker run -it --entrypoint /bin/bash openclaw:latest`) and manually try to execute the `ENTRYPOINT` and `CMD` commands to see what fails.

- Shell vs. Exec Form Nuances:
  - Cause: `CMD ["executable", "param1"]` (exec form) directly executes the process, while `CMD executable param1` (shell form) runs it within a shell (`/bin/sh -c "executable param1"`). In shell form the shell, not OpenClaw, becomes PID 1 and typically does not forward signals such as `SIGTERM` to the application, which makes graceful shutdown difficult and can mask errors. Conversely, exec form doesn't process environment variables within the command string.
  - Symptoms: `ENTRYPOINT` scripts not receiving signals, environment variables not being expanded, or unexpected `PID 1` behavior.
  - Solutions: Prefer exec form (`CMD ["python", "app.py"]`) for the main application process to ensure proper signal handling. Use shell form (`CMD /bin/bash -c "echo $MY_VAR && python app.py"`) if you need shell features like variable expansion or piping. Be aware of the implications.

- Processes Exiting Immediately:
  - Cause: The application's main process finishes its task and exits cleanly (e.g., a script that runs once and terminates), but the container's restart policy dictates that it should run continuously.
  - Symptoms: `ExitCode: 0`, the restart count increments, but OpenClaw appears to have done nothing wrong.
  - Solutions: Modify the `ENTRYPOINT` or `CMD` to keep OpenClaw's main process alive. This often means running OpenClaw in a "server" mode, or adding a command that prevents the container from exiting (e.g., `tail -f /dev/null` for debugging, but not for production unless it truly makes sense). For applications that are designed to run once, adjust the restart policy to `no` or `on-failure`.
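A main process that both stays alive and shuts down cleanly on `SIGTERM` addresses two of the problems in this section at once. The following is a minimal sketch of that pattern, assuming the application is started in exec form so that it receives signals directly; the `Worker` class and its counter are placeholders for real work.

```python
import signal
import time


class Worker:
    """Long-running loop that stops cleanly when asked to."""

    def __init__(self) -> None:
        self.running = True
        self.iterations = 0

    def request_stop(self, signum, frame) -> None:
        # Signal handlers should do as little as possible: just flip a flag.
        self.running = False

    def run(self, poll_seconds: float = 1.0, max_iterations=None) -> int:
        while self.running:
            self.iterations += 1  # stand-in for one unit of real work
            if max_iterations is not None and self.iterations >= max_iterations:
                break
            time.sleep(poll_seconds)
        return 0  # exit code to report once the loop has ended


if __name__ == "__main__":
    worker = Worker()
    # `docker stop` sends SIGTERM first; Ctrl+C in an attached terminal sends SIGINT.
    signal.signal(signal.SIGTERM, worker.request_stop)
    signal.signal(signal.SIGINT, worker.request_stop)
    # max_iterations keeps this demo finite; a real service would omit it and
    # run until a signal arrives.
    code = worker.run(poll_seconds=0.1, max_iterations=3)
    print("worker finished with exit code", code, "after", worker.iterations, "iterations")
```

Because the handler only sets a flag, the loop finishes its current unit of work before exiting, which is what allows the container to stop within Docker's shutdown timeout instead of being killed.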

3.6 Health Checks and Liveness/Readiness Probes

In orchestrated environments like Kubernetes or Docker Swarm, health checks are critical. Misconfigured checks can erroneously deem a healthy OpenClaw instance unhealthy, leading to premature termination and restarts.

- Misconfigured Health Checks:
  - Cause: The `HEALTHCHECK` instruction in the Dockerfile or the liveness/readiness probes in orchestrators might be too aggressive (short timeouts, frequent checks), use an incorrect command, or check the wrong port/endpoint. OpenClaw might be still initializing or temporarily overloaded, failing the check, and being killed.
  - Symptoms: Container logs might show the health check command failing. Orchestrator logs indicate "Unhealthy" or "Liveness probe failed." `ExitCode: 143` (SIGTERM) might precede a restart.
  - Solutions:
    - Refine Health Check Logic: Ensure the health check command accurately reflects OpenClaw's readiness. Check a specific API endpoint, a port, or an internal status file.
    - Adjust Parameters: Increase `interval`, `timeout`, and `retries` for the health check to give OpenClaw ample time to start up and respond.
    - Separate Liveness/Readiness: In Kubernetes, use separate liveness (is it alive?) and readiness (is it ready to serve traffic?) probes. OpenClaw might be alive but not yet ready to process requests.

- Too Aggressive Restart Policies:

- Cause: In combination with misconfigured health checks, an `always` or `unless-stopped` restart policy can exacerbate the problem: each time an orchestrator or watchdog kills a container that failed its health check, the policy restarts it immediately, even for transient issues. A standalone Docker Engine only marks the container as unhealthy; the restart policy itself reacts to the container exiting.

- Solutions: Consider `on-failure` if OpenClaw can recover from transient issues on its own, or increase the number of health check retries before termination.
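To make "refine health check logic" concrete, here is a minimal sketch of a probe script that a `HEALTHCHECK` instruction or an orchestrator probe could run. It assumes OpenClaw exposes an HTTP status endpoint; the `/healthz` path, the port, and the function names are hypothetical and not taken from OpenClaw itself.

```python
import http.client
import urllib.error
import urllib.request

# Ignore any proxy settings: a health probe should talk to the local process directly.
_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def is_healthy(url: str, timeout: float = 3.0) -> bool:
    """Return True only if the endpoint answers with HTTP 2xx within `timeout` seconds."""
    try:
        with _OPENER.open(url, timeout=timeout) as response:
            return 200 <= response.status < 300
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # Refused connections, timeouts, HTTP error statuses and malformed URLs
        # all count as "unhealthy" rather than crashing the probe.
        return False


def exit_status(healthy: bool) -> int:
    """Map the result to the exit status Docker expects: 0 = healthy, 1 = unhealthy."""
    return 0 if healthy else 1
```

A wrapper would typically end with `sys.exit(exit_status(is_healthy("http://localhost:8080/healthz")))`, where the URL is again an assumption. Keeping the probe's own timeout shorter than the health check `timeout` helps the probe report a clean failure instead of being cut off.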

3.7 External Service Dependencies

Modern applications rarely run in isolation. OpenClaw likely depends on external databases, message queues, caching layers, or other microservices. If these dependencies are unavailable or slow, OpenClaw might fail to start or crash mid-operation.

- Database Unavailability or Connection Issues:

- Cause: The database OpenClaw relies on is down, inaccessible due to network issues, or its credentials are wrong.

- Symptoms: OpenClaw logs full of "connection refused," "authentication failed," or "database unavailable" errors.

- Solutions: Verify database status, network connectivity from the OpenClaw container to the database, and credentials. Implement robust retry logic with exponential backoff in OpenClaw for database connections.

- Message Queue or Caching Layer Problems:

- Cause: Similar to databases, issues with services like Kafka, RabbitMQ, Redis, or Memcached can prevent OpenClaw from functioning correctly, especially if it relies on them for critical operations or state management.

- Symptoms: Application logs showing connection errors, timeouts, or failures to publish/consume messages or fetch/store data.

- Solutions: Check the status and connectivity of these services. Ensure OpenClaw has proper retry mechanisms and fallback strategies.

- External API Failures or Network Latency:

- Cause: OpenClaw might depend on external APIs (e.g., for identity management, payment processing, or even fetching models if it's an AI application). If these APIs are down, slow, or rate-limiting OpenClaw, it can lead to failures. High network latency can also cause timeouts.

- Symptoms: OpenClaw logs showing HTTP 5xx errors, connection timeouts, or specific API error codes.

- Solutions: Implement circuit breakers and graceful degradation for external API calls. Ensure network connectivity and sufficient bandwidth. Monitor external service health. For AI applications, ensuring reliable access to diverse models can be critical, and platforms like XRoute.AI can help by providing a robust, unified API gateway to LLMs, thereby reducing dependency-related failures.
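The "retry logic with exponential backoff" recommended above can be sketched as follows. This is an illustrative helper, not OpenClaw's actual connection code: the function name, the default delays, and the choice of "full jitter" are assumptions.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    retry_on: Tuple[Type[BaseException], ...] = (ConnectionError, TimeoutError),
    max_attempts: int = 5,
    base_delay: float = 0.5,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `operation` until it succeeds or `max_attempts` attempts have failed."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except retry_on:
            if attempt == max_attempts:
                raise  # Give up and let the caller decide what to do.
            # The delay ceiling doubles each attempt: base, 2*base, 4*base, ... capped at max_delay.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Full jitter: a random wait below the ceiling spreads out reconnect storms.
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```

Wrapping the initial database or queue connection in such a helper lets OpenClaw wait out a dependency that is still starting, instead of exiting and relying on the restart policy to try again.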

By methodically exploring each of these potential causes, you can narrow down the problem space and move closer to a definitive solution for your OpenClaw Docker restart loop.


Comprehensive Troubleshooting Strategies and Fixes

Resolving an OpenClaw Docker restart loop requires a systematic approach, combining effective diagnostic tools with targeted fixes. This section outlines advanced strategies for tackling these persistent issues, with a strong emphasis on proactive measures for performance optimization and cost optimization.

4.1 Systematic Logging and Monitoring

Logs and metrics are your eyes and ears into the container's internal state. Without them, troubleshooting is akin to navigating in the dark.

- Centralized Logging:

- Strategy: Instead of relying solely on `docker logs`, implement a centralized logging solution. Tools like ELK Stack (Elasticsearch, Logstash, Kibana), Grafana Loki, Splunk, or cloud-native logging services (AWS CloudWatch, Azure Monitor, Google Cloud Logging) can aggregate logs from all your OpenClaw containers.

- Benefit: Provides a holistic view, making it easier to correlate events across multiple services or identify patterns leading up to a crash. You can filter, search, and visualize logs, dramatically speeding up diagnosis.

- Implementation: Configure OpenClaw to log to `stdout`/`stderr` (Docker's standard practice), and use a logging agent (e.g., Filebeat, Fluentd, Promtail) on the host to ship these logs to your centralized system.

- Monitoring Tools for Resource Usage:

- Strategy: Deploy comprehensive monitoring solutions like Prometheus with Grafana, Datadog, or New Relic. Monitor host metrics (CPU, memory, disk I/O, network), for example with `node_exporter`, and, crucially, container-specific metrics, for example with `cAdvisor`.

- Benefit: Allows you to visualize resource usage patterns before and during a restart loop. Spikes in memory before an OOM kill, sustained high CPU, or disk saturation become immediately apparent. This data is invaluable for identifying bottlenecks and informs performance optimization efforts.

- Implementation: Configure monitoring agents to scrape metrics from Docker containers and the host, sending them to your monitoring backend for visualization and alerting.

- Using `docker logs --follow` and `docker inspect` Effectively:

- Strategy: When a loop occurs, immediately run `docker logs -f <container_name>` to capture real-time output. Simultaneously, in another terminal, use `docker inspect <container_name>` to check the `ExitCode` and `Error` fields.

- Benefit: Direct, raw insight into the container's last moments. The `ExitCode` combined with the final log messages often reveals the smoking gun.

- Tip: If logs are too verbose, consider temporarily reducing OpenClaw's logging level for clarity, or use `grep` to filter specific errors.
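Reading the `ExitCode` by hand gets tedious during a loop, so a small helper can interpret the `State` object that `docker inspect` prints. The sketch below assumes the standard JSON output (an array with a `State` object containing `ExitCode`, `OOMKilled`, and `Error`); the function names and the wording of the hints are illustrative.

```python
import json
from typing import Any, Dict

SIGNAL_NAMES = {9: "SIGKILL", 11: "SIGSEGV", 15: "SIGTERM"}
SPECIAL_CODES = {
    126: "command found but could not be invoked",
    127: "command not found",
}


def explain_state(state: Dict[str, Any]) -> str:
    """Turn a container's State object into a one-line troubleshooting hint."""
    code = int(state.get("ExitCode", 0))
    if state.get("OOMKilled"):
        return f"exit {code}: killed by the OOM killer; raise the memory limit or reduce usage"
    if code == 0:
        return "exit 0: main process finished cleanly; check the command and restart policy"
    if code in SPECIAL_CODES:
        return f"exit {code}: {SPECIAL_CODES[code]}"
    if code > 128:
        # Exit codes above 128 encode the terminating signal as 128 + signal number.
        signum = code - 128
        return f"exit {code}: terminated by {SIGNAL_NAMES.get(signum, f'signal {signum}')}"
    detail = state.get("Error") or "see the application logs"
    return f"exit {code}: application error ({detail})"


def explain_inspect_output(raw_json: str) -> str:
    """Accept the JSON array printed by `docker inspect <container_name>`."""
    containers = json.loads(raw_json)
    return explain_state(containers[0]["State"])
```

Piping `docker inspect <container_name>` into a script that calls `explain_inspect_output(sys.stdin.read())` gives a quick first classification before digging into the logs.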

4.2 Resource Management and Scaling for Performance and Cost Optimization

Efficient resource allocation is central to preventing restart loops and optimizing your infrastructure.

- Setting Appropriate CPU and Memory Limits:

- Strategy: Based on historical monitoring data, allocate just enough CPU (`--cpus` for a hard cap, `--cpu-shares` for a relative weight) and memory (`--memory`) to OpenClaw. Over-provisioning wastes resources, while under-provisioning leads to instability.

- Benefit: Prevents OOM kills (`ExitCode: 137`) and CPU starvation. Crucially, precise resource allocation is a core tenet of cost optimization, as you pay for exactly what OpenClaw needs, avoiding idle resources. It also contributes to performance optimization by ensuring OpenClaw has sufficient resources to operate efficiently without contention.

- Implementation: Specify limits in `docker run` commands, Docker Compose files, or Kubernetes deployment configurations. Regularly review and adjust these limits as OpenClaw's workload or code evolves.

- Horizontal Scaling (Docker Swarm, Kubernetes):

- Strategy: For stateless or horizontally scalable OpenClaw components, run multiple instances across different nodes.

- Benefit: Distributes load, provides redundancy (if one container crashes, others can handle the load), and improves overall throughput. This is a fundamental strategy for performance optimization under heavy loads.

- Implementation: Use orchestrators like Docker Swarm or Kubernetes to manage replica counts and distribute containers. Implement appropriate load balancing.

- Vertical Scaling:

- Strategy: If OpenClaw's workload is inherently monolithic or requires significant single-instance resources (e.g., large AI model inference requiring a single powerful GPU), deploy it on a more powerful host with more CPU, RAM, or specialized hardware.

- Benefit: Can provide a quick fix for resource issues, especially for resource-hungry OpenClaw instances that cannot be easily scaled horizontally.

- Consideration: Vertical scaling can be less cost-optimized than horizontal scaling if the resources are not fully utilized.

- Optimizing OpenClaw's Resource Consumption:

- Strategy: This involves profiling OpenClaw's application code to identify and eliminate bottlenecks.

- Benefit: The most sustainable form of performance optimization and cost optimization. Reducing OpenClaw's inherent resource footprint means it runs faster on less hardware, leading to lower cloud bills and improved responsiveness.

- Examples:

- Memory: Optimize data structures, implement garbage collection tuning, avoid loading entire datasets into memory if streaming is possible. For AI models, explore techniques like model compression (quantization, pruning), efficient batching, or using smaller, more efficient architectures.

- CPU: Optimize algorithms, utilize multi-threading/multi-processing where appropriate, and minimize I/O-bound operations.

- Disk I/O: Batch writes, use buffered I/O, or temporary in-memory storage for frequently accessed data.
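The memory advice above ("avoid loading entire datasets into memory if streaming is possible") can be illustrated with a generator that reads input in bounded chunks. This is a generic sketch; the function names and the newline-delimited record format are assumptions, not OpenClaw's data model.

```python
from typing import BinaryIO, Iterator


def iter_chunks(stream: BinaryIO, chunk_size: int = 1024 * 1024) -> Iterator[bytes]:
    """Yield the stream in chunks of at most `chunk_size` bytes."""
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            return
        yield chunk


def count_records(stream: BinaryIO, chunk_size: int = 1024 * 1024) -> int:
    """Count newline-terminated records while holding only one chunk in memory."""
    return sum(chunk.count(b"\n") for chunk in iter_chunks(stream, chunk_size))
```

Because peak memory depends on `chunk_size` rather than on the input size, a container running this pattern can keep a tight `--memory` limit even as datasets grow.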

4.3 Configuration Validation and Environment Variables

Configuration errors are a leading cause of startup failures.

- Best Practices for Managing Configurations:

- Strategy: Avoid hardcoding sensitive information. Use Docker Secrets for sensitive data (API keys, database passwords) and ConfigMaps (in Kubernetes) or `.env` files (with Docker Compose) for non-sensitive configurations.

- Benefit: Enhances security and makes configurations portable and easy to manage without rebuilding images.

- Tip: Never commit secrets directly into your Dockerfiles or version control systems.

- Validating Configuration Files Before Deployment:

- Strategy: Implement pre-deployment checks that validate OpenClaw's configuration files. This could involve schema validation (JSON Schema, YAML linting) or simple script-based checks.

- Benefit: Catches errors before they hit production, preventing potential restart loops due to malformed configurations.

- Implementation: Incorporate validation steps into your CI/CD pipeline.
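A "simple script-based check" of the kind described above might look like the following sketch, which a CI job or the container's entrypoint could run before OpenClaw starts. The variable names `OPENCLAW_DB_URL` and `OPENCLAW_WORKERS` are hypothetical placeholders, as are the rules applied to them.

```python
from typing import List, Mapping
from urllib.parse import urlparse

# Hypothetical settings; replace with the variables your OpenClaw build actually reads.
REQUIRED_VARS = ("OPENCLAW_DB_URL", "OPENCLAW_WORKERS")


def validate_config(env: Mapping[str, str]) -> List[str]:
    """Return human-readable problems; an empty list means the configuration passed."""
    problems: List[str] = []
    for name in REQUIRED_VARS:
        if not env.get(name, "").strip():
            problems.append(f"{name} is missing or empty")

    db_url = env.get("OPENCLAW_DB_URL", "").strip()
    if db_url:
        parsed = urlparse(db_url)
        if not parsed.scheme or not parsed.hostname:
            problems.append("OPENCLAW_DB_URL must look like scheme://host[:port]/name")

    workers = env.get("OPENCLAW_WORKERS", "").strip()
    if workers and (not workers.isdecimal() or int(workers) < 1):
        problems.append("OPENCLAW_WORKERS must be a positive integer")
    return problems
```

Calling `validate_config(os.environ)` and exiting with a clear message when the list is non-empty turns a vague crash into a diagnosable failure.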

4.4 Dependency Management and Image Building

A lean, robust Docker image is less prone to issues.

- Pinning Dependency Versions:

- Strategy: Explicitly specify exact versions for all OpenClaw's dependencies (e.g., `apt-get install python3.9=3.9.7-1~20.04.1`, `pip install requests==2.28.1`).

- Benefit: Prevents unexpected breakage when new versions of libraries are released, ensuring reproducible builds.

- Multi-Stage Builds for Smaller, More Secure Images:

- Strategy: Use multi-stage Dockerfiles. The first stage builds OpenClaw (compilation, dependency installation), and the second stage copies only the necessary runtime artifacts into a minimal base image (e.g., `alpine`, `scratch`).

- Benefit: Reduces image size, minimizes attack surface (fewer unnecessary tools/libraries), and speeds up image pulls. This significantly aids cost optimization by reducing storage requirements and network transfer times. It also reduces potential for dependency conflicts.

- Regular Image Updates and Vulnerability Scanning:

- Strategy: Keep your OpenClaw base images up-to-date to patch security vulnerabilities and benefit from bug fixes. Integrate image vulnerability scanners (e.g., Trivy, Clair) into your CI/CD pipeline.

- Benefit: Improves security posture and ensures you're not running on outdated, potentially unstable software versions.
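Version pinning is easy to enforce automatically. As a rough sketch, assuming OpenClaw's Python dependencies live in a pip-style requirements file, a CI step could flag every line that does not pin an exact version; the function name and the simplified pattern are illustrative and do not cover every requirement syntax pip accepts.

```python
import re
from typing import List

# Matches "name==version", optionally with extras such as "name[extra]==1.2.3".
PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[A-Za-z0-9._,\s-]+\])?==[^=\s]+")


def unpinned_requirements(requirements_text: str) -> List[str]:
    """Return the requirement lines that do not pin an exact version with `==`."""
    offenders = []
    for raw_line in requirements_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line or line.startswith("-"):
            continue  # skip blank lines and pip options such as "-r base.txt"
        if not PINNED.match(line):
            offenders.append(line)
    return offenders
```

Failing the build when the returned list is non-empty keeps unpinned dependencies from slipping into the image unnoticed.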

4.5 Docker Engine Health and Maintenance

A healthy Docker host is fundamental to stable container operations.

- Regular Docker Daemon Restarts:

- Strategy: While not a solution to application issues, occasionally restarting the Docker daemon (`sudo systemctl restart docker`) can clear up transient issues with the daemon itself or its networking components.

- Caution: Unless the daemon's live-restore option is enabled, this will temporarily stop all running containers. Plan during maintenance windows.

- Clearing Unused Images/Volumes (`docker system prune`):

- Strategy: Regularly clean up dangling images, stopped containers, unused networks, and volumes.

- Benefit: Frees up disk space, preventing "No space left on device" errors, and contributing to cost optimization by avoiding unnecessary storage usage.

- Command: `docker system prune -f` (removes stopped containers, unused networks, dangling images). Use `docker system prune -a` to also remove all unused images and build cache. Use `docker volume prune` to clean up unused volumes.

- Updating Docker Engine:

- Strategy: Keep your Docker engine up-to-date. Test new versions in a staging environment before rolling out to production.

- Benefit: Access to bug fixes, new features, and performance improvements.
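To decide when a cleanup is due, a scheduled job can check how much space is left on the filesystem that holds Docker's data. The sketch below uses `/var/lib/docker`, Docker's default data root on Linux, and a 15% free-space threshold; both the threshold and the function names are assumptions to adapt.

```python
import shutil


def free_fraction(path: str = "/var/lib/docker") -> float:
    """Fraction (0.0 to 1.0) of the filesystem holding `path` that is still free."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total


def should_prune(path: str = "/var/lib/docker", min_free: float = 0.15) -> bool:
    """True when free space has dropped below the `min_free` fraction."""
    return free_fraction(path) < min_free
```

A cron job or monitoring hook could alert, or trigger the prune commands listed above, once `should_prune()` returns `True`.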

4.6 Advanced Debugging Techniques

When logs aren't enough, you might need to get inside the container.

- Attaching to a Running Container (`docker attach`, `docker exec`):

- Strategy:

- `docker attach <container_name>`: Attach to the main process of a running container. Useful if the container sometimes stays up for a bit before crashing.

- `docker exec -it <container_name> /bin/bash`: Execute a new command (like `/bin/bash` or `/bin/sh`) inside a running container. This is safer as it doesn't attach to PID 1 and allows you to explore the environment.

- Benefit: Allows you to inspect the file system, check environment variables, run diagnostic commands (`ps aux`, `netstat`, `ls -l`), and manually try to start OpenClaw's process to observe its behavior.

- Running Container with an Interactive Shell for Debugging:

- Strategy: Temporarily modify your `ENTRYPOINT` or `CMD` to launch a shell instead of OpenClaw, or use `docker run -it --entrypoint /bin/bash openclaw:latest`.

- Benefit: Provides a fully interactive environment to explore the container, manually install debugging tools, and try to replicate the crash.

- Using `strace`, `lsof` inside Containers:

- Strategy: If your container has `strace` or `lsof` installed (or you can install them in a temporary debug image), these can provide deep insights.

- `strace <command>`: Traces system calls made by a process, revealing file access errors, network issues, or unexpected process termination.

- `lsof -i`: Lists open network connections.

- `lsof <file>`: Lists processes opening a specific file.

- Benefit: Uncovers low-level system interactions that might not be visible in application logs.

- Caveat: These tools might not be present in minimal base images. You might need to create a specialized debug image with these utilities.

By systematically applying these troubleshooting strategies, you can transform the complex challenge of an OpenClaw Docker restart loop into a methodical problem-solving exercise, ultimately leading to a stable and optimized deployment.

Proactive Measures and Best Practices for OpenClaw in Docker

Prevention is always better than cure. Implementing a set of best practices for OpenClaw's Docker environment can significantly reduce the likelihood of restart loops and ensure long-term stability, contributing to both performance optimization and cost optimization.

Robust Dockerfile Design

A well-crafted Dockerfile is the foundation of a stable container.

- Minimal Base Images:

- Practice: Start with the smallest possible base image (e.g., `alpine`, `distroless`, or a slim version of your chosen OS like `python:3.9-slim-buster`).

- Benefit: Reduces image size, leading to faster builds, pulls, and reduced attack surface. This directly impacts cost optimization by minimizing storage and network bandwidth usage.

- Clear `CMD`/`ENTRYPOINT`:

- Practice: Explicitly define the main process OpenClaw needs to run using `ENTRYPOINT` (for the primary command) and `CMD` (for default arguments). Use the exec form (`["executable", "param1"]`) to ensure proper signal handling.

- Benefit: OpenClaw runs as the container's main process (PID 1) rather than as a child of a shell, so it receives `SIGTERM` directly and can shut down gracefully.

- Specific Versioning for Dependencies:

- Practice: Always pin versions of libraries, packages, and tools in your Dockerfile (e.g., `RUN pip install requests==2.28.1`, `RUN apt-get install -y python3.9=3.9.7-1~20.04.1`).

- Benefit: Ensures reproducible builds, preventing unexpected failures due to new, incompatible dependency versions.

- Multi-Stage Builds:

- Practice: Leverage multi-stage builds to separate build-time dependencies from runtime dependencies.

- Benefit: Results in a smaller, more secure, and more efficient final image, aiding cost optimization and reducing the chance of runtime conflicts.

- User Isolation:

- Practice: Create a non-root user and switch to it using the `USER` instruction in the Dockerfile.

- Benefit: Enhances security by limiting the potential damage if the container is compromised. Ensure this user has the necessary permissions for OpenClaw's operations on mounted volumes.

Implementing Graceful Shutdown

Applications need to be able to shut down cleanly to prevent data corruption or resource leaks.

- Practice: Configure OpenClaw to gracefully handle `SIGTERM` signals. This typically involves catching the signal and performing cleanup tasks like flushing buffered data, closing database connections, and finishing in-progress requests before exiting.

- Benefit: Prevents `ExitCode: 1` or `137` from sudden terminations. Ensures data integrity and faster, more reliable restarts when necessary.

- Docker `STOPSIGNAL` and Stop Timeout:

- Practice: Use `STOPSIGNAL SIGTERM` in your Dockerfile to explicitly tell Docker which signal to send for graceful shutdown (`SIGTERM` is also the default). The stop timeout is not a Dockerfile instruction; set it at run time with `docker run --stop-timeout <seconds>`, `docker stop -t <seconds>`, or `stop_grace_period` in Docker Compose. The default grace period is 10 seconds.

- Benefit: Provides OpenClaw with sufficient time to shut down before Docker resorts to a forceful `SIGKILL`.
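For a Python-based service, the signal handling described above can be sketched as below. It assumes OpenClaw's main loop processes discrete units of work; the class and function names are hypothetical, and `do_work` and `cleanup` stand in for the application's own logic.

```python
import signal
from types import FrameType
from typing import Callable, Optional


class GracefulShutdown:
    """Records SIGTERM/SIGINT so the main loop can stop at a safe point."""

    def __init__(self) -> None:
        self.requested = False

    def install(self) -> None:
        # Must be called from the main thread; Python only allows handlers to be set there.
        signal.signal(signal.SIGTERM, self.handle)
        signal.signal(signal.SIGINT, self.handle)

    def handle(self, signum: int, frame: Optional[FrameType]) -> None:
        # Keep the handler tiny: only record the request, do the cleanup elsewhere.
        self.requested = True


def run_worker(
    shutdown: GracefulShutdown,
    do_work: Callable[[], None],
    cleanup: Callable[[], None],
) -> None:
    """Process work until a stop is requested, then always run cleanup."""
    try:
        while not shutdown.requested:
            do_work()
    finally:
        cleanup()  # e.g. flush buffers, close database connections
```

Each `do_work()` call should finish well within the stop timeout, otherwise Docker sends `SIGKILL` before `cleanup()` gets a chance to run.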

Automated Testing for OpenClaw and its Docker Container

Comprehensive testing is the ultimate proactive measure.

- Unit and Integration Tests:

- Practice: Develop robust unit tests for OpenClaw's code and integration tests to verify its interactions with dependencies.

- Benefit: Catches application-level bugs before they ever reach a Docker environment, preventing `ExitCode: 1` or `139`.

- Docker-Specific Tests (Container/Acceptance Tests):

- Practice: Write tests that spin up the OpenClaw Docker container and verify its startup, basic functionality, and health checks.

- Benefit: Ensures the Docker image is correctly built, all dependencies are present, and the entrypoint/CMD works as expected. Tools like Testcontainers can be invaluable here.

- Load and Stress Testing:

- Practice: Simulate production loads on your OpenClaw Docker deployments.

- Benefit: Identifies resource bottlenecks, potential memory leaks, or concurrency issues that might lead to `ExitCode: 137` under stress, allowing for performance optimization and resource tuning before critical failure.

Version Control for Dockerfiles and OpenClaw Code

Treat your Docker infrastructure as code.

- Practice: Store Dockerfiles, Docker Compose files, and deployment scripts in the same version control system (e.g., Git) as your OpenClaw application code.

- Benefit: Ensures reproducibility, allows for easy rollbacks, and simplifies auditing of changes.

Disaster Recovery Planning

Even with the best practices, failures can happen.

- Practice: Have a clear plan for recovering OpenClaw's data and state in case of catastrophic failure. This includes regular backups of persistent volumes and a strategy for restoring them.

- Benefit: Minimizes data loss and speeds up recovery time, ensuring business continuity.

Continuous Integration/Continuous Deployment (CI/CD) Pipelines

Automate everything possible.

- Practice: Implement a CI/CD pipeline that automatically builds OpenClaw Docker images, runs tests, scans for vulnerabilities, and deploys to staging and production environments.

- Benefit: Ensures consistent deployments, rapid iteration, and early detection of issues. A well-oiled CI/CD pipeline is critical for maintaining performance optimization and realizing cost optimization by reducing manual effort and errors.

Leveraging AI for Enhanced Stability and Performance Optimization: Enter XRoute.AI

The challenges of maintaining stable, high-performance applications like OpenClaw, particularly when they involve complex data processing or AI/ML workloads, are continually evolving. As AI models become more integrated into our systems, the reliability of accessing and serving these models becomes paramount. This is where advanced platforms focusing on AI infrastructure play a crucial role, contributing directly to an application's overall stability and efficiency.

Imagine OpenClaw, in its various hypothetical forms, needing to interact with a multitude of large language models (LLMs) for tasks ranging from sentiment analysis to complex data summarization or code generation. Each LLM might have its own API, specific requirements, and performance characteristics. Manually integrating and managing these diverse models—ensuring low latency, high throughput, and cost-effectiveness—can be a significant engineering challenge, potentially leading to bottlenecks or failures that could cascade into application-level issues, including restart loops.

This is precisely the kind of complexity that XRoute.AI is designed to address. XRoute.AI is a cutting-edge unified API platform that streamlines access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers. This means that an application like OpenClaw, which might rely on external AI services for its core functionality, can achieve seamless development of AI-driven applications, chatbots, and automated workflows without the headaches of managing multiple API connections.

How does XRoute.AI contribute to OpenClaw's stability and performance optimization?

- Unified and Simplified Access: Instead of OpenClaw's Docker container needing to manage separate client libraries, API keys, and endpoints for various LLMs (e.g., OpenAI, Anthropic, Google Gemini), it interacts with a single, consistent XRoute.AI endpoint. This reduces the complexity within OpenClaw's code, minimizing potential for configuration errors or dependency conflicts that could trigger restart loops.

- Low Latency AI: XRoute.AI is specifically engineered for low latency AI. For an application like OpenClaw that may require real-time AI inference, consistently low latency is critical. If API calls to LLMs are slow or time out, OpenClaw could become unresponsive, miss processing deadlines, or even crash due to internal timeouts or resource exhaustion. XRoute.AI's optimized routing and infrastructure help prevent these latency-induced failures, so OpenClaw can more reliably get the AI responses it needs. This is a direct contributor to performance optimization.

- Cost-Effective AI: Beyond performance, XRoute.AI helps with cost-effective AI by allowing developers to dynamically route requests to the most optimal model based on cost, performance, and availability. For OpenClaw, this means its AI dependencies are not only robust but also economically managed. An inefficient use of AI resources can quickly escalate costs and potentially lead to resource contention if not managed, which in turn can impact the stability of the entire system. By optimizing which models are used and how, XRoute.AI aids in overall cost optimization for OpenClaw's AI workload.

- Resilience and Fallback Mechanisms: With access to over 60 models from 20+ providers, XRoute.AI can potentially offer built-in resilience. If one AI provider experiences an outage or performance degradation, XRoute.AI could intelligently route requests to an alternative, ensuring continuous operation for OpenClaw. This kind of robust dependency management significantly reduces the risk of external AI service failures causing OpenClaw to enter a restart loop.

- Developer-Friendly Tools: XRoute.AI empowers users to build intelligent solutions without the complexity of managing multiple API connections. This translates to less development overhead for OpenClaw, allowing engineers to focus on OpenClaw's core logic rather than troubleshooting diverse API integrations.

In essence, by offloading the complexities of LLM integration, targeting low latency AI, and providing mechanisms for cost-effective AI, XRoute.AI acts as a robust backend for OpenClaw's AI-driven components. A stable, efficient, and well-managed AI dependency contributes directly to OpenClaw's overall health and helps prevent restart loops, so that the application can consistently deliver its critical functionalities without interruption. It is an example of how specialized platforms contribute to the holistic performance optimization and cost optimization of complex, modern applications.

Conclusion

Navigating the treacherous waters of OpenClaw Docker restart loops can be a daunting experience, but it is far from an insurmountable challenge. As we've explored, these persistent issues stem from a diverse array of root causes, ranging from resource starvation and application-level bugs to Docker engine quirks and external service dependencies. The key to unlocking a stable and efficient OpenClaw deployment lies not in quick fixes, but in a systematic, methodical approach to diagnosis, combined with the implementation of robust, proactive measures.

We began by emphasizing the criticality of OpenClaw's operations and the foundational role Docker plays in its deployment. Understanding the basic mechanics of Docker's restart policies and the tell-tale signs of various exit codes provides the essential groundwork for effective troubleshooting. From there, we delved into the common culprits: the silent devastation of resource constraints like OOM kills, the intricate dance of application configuration and dependencies, the health of the Docker daemon itself, the delicate nature of data persistence, and the precision required in ENTRYPOINT/CMD definitions. Even external services, as demonstrated, can become chokepoints, highlighting the interconnectedness of modern applications.

The comprehensive troubleshooting strategies outlined—from systematic logging and advanced monitoring to meticulous resource management and proactive image building—are not merely reactive solutions. They are blueprints for establishing an infrastructure that fosters performance optimization at every layer. By fine-tuning resource limits, scaling intelligently, validating configurations rigorously, and maintaining lean, secure Docker images, you not only resolve current restart loops but also proactively fortify OpenClaw against future disruptions. This continuous pursuit of efficiency directly translates into significant cost optimization, ensuring that your computational resources are utilized effectively, minimizing waste and maximizing return on investment.

Furthermore, we've touched upon how specialized platforms like XRoute.AI exemplify the cutting edge of infrastructure support for AI-driven applications. By offering a unified API platform for large language models (LLMs) with a focus on low latency AI and cost-effective AI, XRoute.AI can indirectly yet powerfully contribute to the stability of applications like OpenClaw that depend on external AI services. A reliable, efficient, and well-managed AI backend ensures that OpenClaw's critical dependencies are consistently met, preventing a cascade of failures that could lead to debilitating restart loops.

Ultimately, solving OpenClaw Docker restart loops is an ongoing journey of learning, adapting, and refining your operational practices. By embracing these principles of meticulous analysis, proactive prevention, and continuous optimization, you can transform the chaos of a crashing container into the confidence of a resilient, high-performing OpenClaw ecosystem, ready to meet the demands of your most critical workloads.

FAQ: OpenClaw Docker Restart Loops

Q1: What is the first thing I should check when my OpenClaw Docker container is in a restart loop? A1: The absolute first step is to check the container's logs and its exit code. Run docker logs -f <container_name_or_id> to stream real-time logs and look for any error messages, stack traces, or warnings immediately preceding the crash. Simultaneously, use docker inspect <container_name_or_id> | grep -E "ExitCode|Error" to determine the container's final exit code, which provides a critical hint about the type of failure (e.g., 137 for OOM, 1 for application error, 0 for clean exit with restart policy issues).

Q2: My OpenClaw container is restarting with Exit Code 137. What does this typically mean and how can I fix it? A2: Exit Code 137 means the container's main process was killed with SIGKILL (128 + 9). Most often this is the Out Of Memory (OOM) killer acting because the container exceeded its allocated memory limit; docker inspect reports OOMKilled: true in that case. It can also result from docker kill, or from docker stop after the stop timeout expires. To fix an OOM kill, first try increasing the --memory limit for your OpenClaw container in your docker run command or Docker Compose file. For a more sustainable solution, analyze OpenClaw's memory usage with docker stats or a monitoring tool to identify memory leaks or inefficiencies within the application itself. Optimizing OpenClaw's code for better memory management is crucial for performance optimization and cost optimization.

Q3: How can I debug OpenClaw's application-level errors inside a Docker container that keeps restarting? A3: If OpenClaw is crashing due to an application error (often ExitCode: 1), logs are key. If logs aren't sufficient, you can try running a debug version of your container. Launch the container interactively with a shell instead of OpenClaw's main process (e.g., docker run -it --entrypoint /bin/bash openclaw:latest). Once inside, manually try to execute OpenClaw's startup command to observe the exact failure, inspect files, check environment variables, and possibly install debugging tools if needed.

Q4: How do Docker's ENTRYPOINT and CMD relate to restart loops, and what's the difference between shell and exec form? A4: ENTRYPOINT defines the executable that runs when the container starts, and CMD provides default arguments to that ENTRYPOINT. If the command or script specified in ENTRYPOINT/CMD is incorrect, missing, or exits immediately, it can cause a restart loop. In exec form (["executable", "param1"]), the process runs directly, which is generally preferred for proper signal handling (like SIGTERM for graceful shutdown). In shell form (executable param1), the command runs inside a shell (/bin/sh -c "..."), which can affect signal handling and variable expansion. Misusing these can lead to unexpected exits or containers that complete their task and exit cleanly (ExitCode: 0) but are forced to restart by an always policy.

Q5: How can a platform like XRoute.AI contribute to preventing restart loops for OpenClaw, especially if OpenClaw uses AI? A5: If OpenClaw relies on external AI models (like LLMs), issues with accessing those models can cause OpenClaw to crash. XRoute.AI is a unified API platform that provides robust, low latency AI access to over 60 AI models via a single, OpenAI-compatible endpoint. By centralizing and optimizing AI model access, XRoute.AI reduces the complexity of integrating diverse LLMs into OpenClaw. This simplification minimizes the potential for configuration errors, connection issues, or performance bottlenecks related to AI dependencies. Its focus on cost-effective AI also helps ensure efficient resource utilization. A stable and efficient AI backend, managed by XRoute.AI, prevents cascading failures in OpenClaw, thereby enhancing its overall stability and contributing to better performance optimization.

🚀 You can securely and efficiently connect to over 60 large language models with XRoute.AI in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.
2. Upon registration, explore the platform.
3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

    curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
      --header "Authorization: Bearer $apikey" \
      --header 'Content-Type: application/json' \
      --data '{
        "model": "gpt-5",
        "messages": [
          {
            "content": "Your text prompt here",
            "role": "user"
          }
        ]
      }'

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.
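When a request like this is scripted inside a container, a missing API key is a common reason for a process to exit immediately and trigger a restart. The sketch below validates the key up front and builds the request body separately. XROUTE_API_KEY, build_payload, and call_xroute are names chosen for illustration, and build_payload performs no JSON escaping, so it only suits prompts without quotes or backslashes.

```shell
#!/bin/sh
# Build a chat-completions request body for a model and a plain-text prompt.
# No JSON escaping is performed (assumption: prompt has no quotes/backslashes).
build_payload() {
  printf '{"model": "%s", "messages": [{"role": "user", "content": "%s"}]}' "$1" "$2"
}

# Send the request; return non-zero with a clear message if the key is missing.
call_xroute() {
  if [ -z "${XROUTE_API_KEY:-}" ]; then
    echo "XROUTE_API_KEY is not set" >&2
    return 1
  fi
  curl --fail --silent --show-error \
    --location 'https://api.xroute.ai/openai/v1/chat/completions' \
    --header "Authorization: Bearer $XROUTE_API_KEY" \
    --header 'Content-Type: application/json' \
    --data "$(build_payload "$1" "$2")"
}

# Example (requires network access and a valid key):
#   XROUTE_API_KEY=... call_xroute "gpt-5" "Your text prompt here"
```

Returning a clear error instead of letting curl send an empty bearer token makes the cause visible in the container logs on the first failed start.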

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.