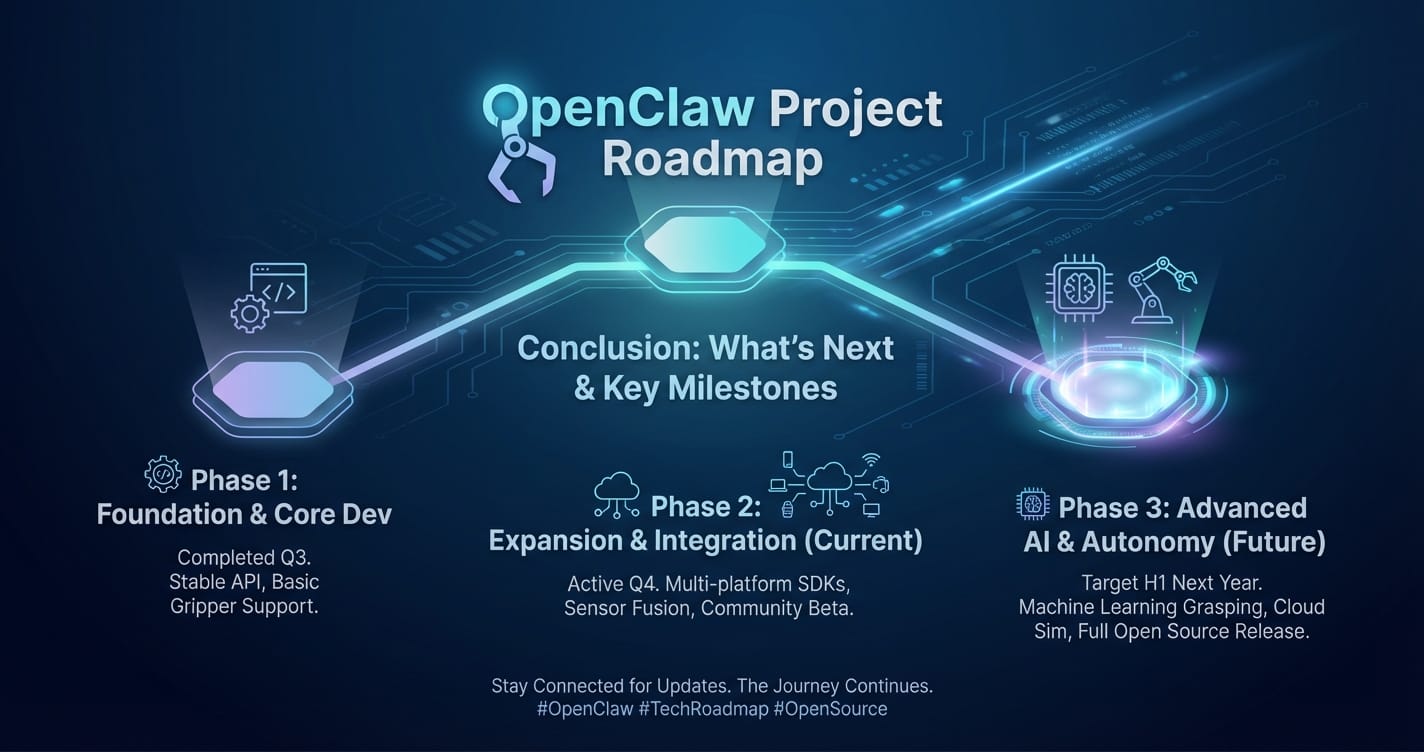

OpenClaw Project Roadmap: What's Next & Key Milestones

The landscape of artificial intelligence is evolving at an unprecedented pace, with Large Language Models (LLMs) emerging as pivotal tools across industries. From automating customer service and generating creative content to revolutionizing data analysis and software development, LLMs are transforming how we interact with technology and information. However, this rapid innovation also brings challenges: a fragmented ecosystem of diverse models, varying APIs, and the complexity of choosing the right model for the right task. This is precisely the void the OpenClaw Project aims to fill, aspiring to become the definitive Unified API platform for accessing the vast and ever-growing universe of LLMs.

OpenClaw is more than just a gateway; it's a vision for simplifying, democratizing, and optimizing the integration of cutting-edge AI into every application. Our mission is to empower developers, researchers, and enterprises alike by providing a seamless, high-performance, and cost-effective solution for leveraging the power of Multi-model support and intelligent LLM routing. This roadmap outlines our ambitious journey, detailing the key phases, technical milestones, and the underlying philosophy driving our development. We believe that by fostering transparency and inviting community collaboration, we can build a platform that truly serves the needs of the global AI community, making advanced AI accessible and manageable for everyone.

I. The Vision Behind OpenClaw: Bridging the AI Divide

In today's AI landscape, developers face a significant dilemma. On one hand, the sheer number of available Large Language Models—ranging from proprietary giants like GPT-4 and Claude to powerful open-source alternatives like Llama and Mixtral—offers unparalleled flexibility and capability. Each model excels in different areas: some are masters of code generation, others excel at creative writing, while others are optimized for specific languages or tasks. On the other hand, integrating these diverse models into applications is a labyrinthine process. Each provider typically offers its own unique API, requiring distinct authentication mechanisms, data formats, error handling procedures, and rate limits. This fragmentation leads to increased development time, maintenance overhead, and a steep learning curve for teams attempting to harness the full potential of AI.

This is the "AI Divide" that OpenClaw is designed to bridge. Our core philosophy centers on abstraction and optimization. We envision a future where developers can interact with any LLM through a single, consistent interface, abstracting away the underlying complexities of individual providers. This Unified API approach is not merely about convenience; it's about fundamentally reshaping the development paradigm for AI applications. Imagine a scenario where switching from one LLM to another, perhaps due to performance gains, cost efficiencies, or the availability of new features, is as simple as changing a single line of code, rather than rewriting entire sections of your integration logic.

Furthermore, the choice of an LLM is rarely static. The optimal model for a given task can change based on the specific prompt, the required output quality, real-time performance metrics, and even dynamic pricing structures. This introduces the critical need for intelligent LLM routing. OpenClaw aims to move beyond static model selection by introducing a dynamic, policy-driven routing engine that automatically directs each request to the most appropriate LLM based on predefined or learned criteria. This improves performance and cost efficiency, and it adds a layer of resilience: applications can fail over to an alternative model if the primary one experiences downtime or performance degradation.

Our commitment to Multi-model support extends beyond merely integrating a handful of popular models. We envision a platform that embraces the full spectrum of LLMs, including those deployed on cloud infrastructure, self-hosted open-source models, and even specialized, fine-tuned variants. This comprehensive approach ensures that OpenClaw remains future-proof, adaptable to emerging AI advancements, and capable of catering to the niche requirements of various industries. By offering a robust foundation built on these principles, OpenClaw seeks to accelerate innovation, reduce operational complexities, and empower a new generation of AI-driven applications that are more intelligent, resilient, and cost-effective than ever before. This roadmap details how we plan to turn this ambitious vision into a tangible reality, phase by phase, milestone by milestone.

II. Phase 1: Foundation and Core Infrastructure (Q3 2024 - Q1 2025)

The initial phase of the OpenClaw Project is dedicated to building a rock-solid foundation, establishing the core technical architecture, and integrating the initial set of foundational LLMs. This period is crucial for validating our Unified API concept, ensuring robust security, and delivering a functional, developer-friendly experience. Our focus will be on creating a stable, scalable, and secure platform that can serve as the bedrock for all future enhancements.

A. Core API Gateway Development

At the heart of OpenClaw lies a sophisticated API Gateway, designed to serve as the single entry point for all LLM interactions. This gateway will be built on a modern microservices architecture, ensuring high availability, fault tolerance, and the ability to scale independently for different components.

- Standardized Request/Response Formats: We will define a universal JSON-based request and response format that is consistent across all integrated LLMs. This abstraction is key to the Unified API promise, allowing developers to send and receive data in a predictable manner regardless of the underlying model. This standardization will cover text generation, embeddings, tokenization, and potentially other emerging LLM functionalities.

- Authentication and Authorization Layer: A robust security framework is paramount. The gateway will implement industry-standard authentication protocols (e.g., API keys, OAuth 2.0, JWT tokens) to secure access. An authorization layer will manage user permissions, allowing granular control over which models and features each user or application can access, including rate limiting to prevent abuse and ensure fair resource allocation.

- Initial Set of Supported LLMs: To demonstrate immediate value and validate the core architecture, we will integrate a select group of leading LLMs. This initial selection will likely include popular commercial models such as OpenAI’s GPT series, Anthropic’s Claude, and Google’s Gemini. This allows us to establish the necessary connectors and translation layers that map our standardized format to each provider's unique API. This early Multi-model support is vital for showcasing the platform's versatility.

- Error Handling and Logging: A comprehensive error handling mechanism will be put in place, providing clear, consistent error codes and messages regardless of the originating LLM. Extensive logging will be implemented to monitor API performance, track usage, and aid in debugging, crucial for maintaining system reliability and informing future optimizations.
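The standardized format and the per-provider translation layers described above can be sketched as follows. This is a minimal illustration, not the OpenClaw specification: the `UnifiedRequest` and `UnifiedError` types, their field names, the two payload shapes, and the error codes are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class UnifiedRequest:
    """Hypothetical provider-neutral request; field names are assumptions."""
    model: str
    prompt: str
    max_tokens: int = 256
    temperature: float = 0.7


@dataclass
class UnifiedError:
    """Hypothetical normalized error, identical in shape for every provider."""
    code: str
    message: str
    provider: str


def to_chat_style_payload(req: UnifiedRequest) -> dict:
    """Translate to an illustrative chat-style body built around a messages list."""
    return {
        "model": req.model,
        "messages": [{"role": "user", "content": req.prompt}],
        "max_tokens": req.max_tokens,
        "temperature": req.temperature,
    }


def to_completion_style_payload(req: UnifiedRequest) -> dict:
    """Translate to an illustrative completion-style body with a bare prompt."""
    return {
        "model": req.model,
        "prompt": req.prompt,
        "max_new_tokens": req.max_tokens,
        "temperature": req.temperature,
    }


def normalize_error(provider: str, status: int, body: str) -> UnifiedError:
    """Map an HTTP status from any provider onto one small set of error codes."""
    known = {401: "auth_failed", 429: "rate_limited"}
    if status in known:
        code = known[status]
    elif status >= 500:
        code = "provider_error"
    else:
        code = "bad_request"
    return UnifiedError(code=code, message=body, provider=provider)
```

The caller only ever builds a `UnifiedRequest`; each connector owns one translation function, so adding a provider would not change application code.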

B. Basic LLM Routing Mechanism

While advanced LLM routing will be a focus of later phases, Phase 1 will establish a foundational routing layer. This initial mechanism will enable basic model selection and provide a primitive level of resilience.

- Rule-Based Routing: Initially, routing will be rule-based. Users will be able to specify a preferred model by name (e.g., `model="gpt-4"`, `model="claude-3-opus"`). In cases where a specific model is not specified, a default model or a least-cost option (where pricing information is available) will be selected.

- Basic Latency Monitoring: The gateway will include rudimentary monitoring of response times from integrated LLM providers. This data will primarily inform future routing decisions and provide an initial understanding of provider performance characteristics.

- Simple Failover Strategies: If the chosen primary LLM provider fails outright or stops responding, the system will attempt to fail over to a pre-configured secondary model. If no secondary model is configured, it will return a clear error message. This basic resilience is a critical first step towards ensuring application continuity.
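The Phase 1 routing rules above could look roughly like this in Python. It is a sketch under stated assumptions: `MODEL_COSTS`, `DEFAULT_MODEL`, `ProviderUnavailable`, and both function names are hypothetical, and the cost figures are placeholders, not real prices.

```python
from typing import Callable, Optional

# Hypothetical catalog: model name -> placeholder cost per 1K tokens (not real prices).
MODEL_COSTS = {"gpt-4": 0.03, "claude-3-opus": 0.015, "small-model": 0.001}
DEFAULT_MODEL = "gpt-4"


class ProviderUnavailable(Exception):
    """Raised by a provider call when the provider fails or does not respond."""


def select_model(requested: Optional[str], prefer_least_cost: bool = False) -> str:
    """Honor an explicit model name, else pick the cheapest or the default model."""
    if requested is not None:
        if requested not in MODEL_COSTS:
            raise ValueError(f"unknown model: {requested}")
        return requested
    if prefer_least_cost:
        return min(MODEL_COSTS, key=MODEL_COSTS.get)
    return DEFAULT_MODEL


def call_with_failover(
    prompt: str,
    primary: str,
    secondary: Optional[str],
    call: Callable[[str, str], str],
) -> str:
    """Try the primary model once; on failure, try the secondary or re-raise."""
    try:
        return call(primary, prompt)
    except ProviderUnavailable:
        if secondary is None:
            raise
        return call(secondary, prompt)
```

Passing the provider call in as `call` keeps the routing logic testable without network access.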

C. Developer Portal and Documentation

A platform is only as good as its usability. A well-designed developer portal and comprehensive documentation are essential for rapid adoption and community engagement.

- Intuitive Developer Portal: The portal will serve as the central hub for developers to manage their API keys, monitor usage, view billing information, and access support resources. It will feature a clean, user-friendly interface designed for ease of navigation.

- Comprehensive API Documentation: Detailed API reference documentation, including endpoint descriptions, request/response examples, authentication guides, and error code explanations, will be provided. This documentation will emphasize the Unified API aspect, highlighting how to interact with different models through a single interface.

- SDKs (Software Development Kits): We will release official SDKs for popular programming languages such as Python and Node.js. These SDKs will simplify interaction with the OpenClaw API, abstracting away HTTP requests and JSON parsing, and allowing developers to integrate LLMs with minimal boilerplate code.

- Tutorials and Quickstart Guides: Practical examples, quickstart guides, and conceptual tutorials will help developers get up and running quickly. These resources will demonstrate how to leverage Multi-model support and basic routing effectively.

- Community Forum Setup: A dedicated community forum will be launched to foster discussions, allow users to share best practices, report issues, and provide feedback. This will be a critical channel for gathering insights and building a vibrant developer ecosystem.

D. Key Milestones for Phase 1

This table summarizes the critical deliverables and expected timelines for the Foundation and Core Infrastructure phase.

| Milestone ID | Description | Target Completion | Status |
|---|---|---|---|
| P1-M1 | Core API Gateway (MVP) Release | Q4 2024 | In Progress |
| P1-M2 | Integration of 3-5 Major LLM Providers | Q4 2024 | In Progress |
| P1-M3 | Standardized API Spec (v1.0) Finalized | Q4 2024 | In Progress |
| P1-M4 | Developer Portal & Documentation Launch | Q1 2025 | Planning |
| P1-M5 | Python & Node.js SDKs (Alpha) Release | Q1 2025 | Planning |
| P1-M6 | Basic Rule-Based LLM Routing Functionality | Q1 2025 | Planning |

Upon successful completion of Phase 1, OpenClaw will provide a robust, secure, and developer-friendly Unified API that simplifies access to a foundational set of LLMs, laying the groundwork for more advanced capabilities in subsequent phases.

III. Phase 2: Enhanced Functionality and Advanced Routing (Q2 2025 - Q4 2025)

With the core infrastructure established, Phase 2 will focus on significantly enhancing OpenClaw's capabilities, particularly in expanding Multi-model support and introducing sophisticated LLM routing mechanisms. This phase is about making OpenClaw a truly intelligent and adaptable platform, moving beyond basic model access to provide optimized and context-aware AI interactions.

A. Expanding Multi-Model Support

The true power of a Unified API lies in its breadth of support. In Phase 2, we will dramatically expand the number and types of LLMs accessible through OpenClaw, embracing both commercial and open-source models.

- Integration of Open-Source Models: A key objective is to integrate popular open-source LLMs. This will involve developing connectors for models available on platforms like Hugging Face, as well as providing guidance and tools for users to integrate their own self-hosted instances of open-source models. This greatly expands the choice and flexibility for developers, reducing vendor lock-in and fostering innovation.

- Support for Specialized Models: Beyond general-purpose LLMs, we will begin integrating models specialized for particular tasks, such as code generation (e.g., StarCoder, CodeLlama), long-context understanding, image captioning, or specific language translation tasks. This caters to a broader range of use cases and allows developers to precisely match model capabilities to application requirements.

- Provider-Agnostic Approach: Our integration philosophy will remain provider-agnostic. We will continuously evaluate new and emerging LLM providers and models, prioritizing those that offer significant advantages in terms of performance, cost, or unique capabilities, ensuring OpenClaw remains at the forefront of AI innovation. This commitment to wide Multi-model support is what differentiates OpenClaw.

B. Intelligent LLM Routing V2

This phase marks a significant leap in our LLM routing capabilities. We will move beyond simple rule-based selection to implement a dynamic, intelligent routing engine that optimizes requests based on a multitude of real-time and historical data points.

- Context-Aware Routing: The routing engine will gain the ability to analyze the content and structure of incoming prompts. For instance, if a prompt clearly indicates a need for code generation, the system could automatically route it to a code-optimized LLM, even if a general-purpose model was initially requested. Similarly, prompts requiring creative writing or summarization could be directed to models known for their proficiency in those areas.

- Performance-Based Routing: Real-time monitoring of LLM provider latency, throughput, and error rates will be integrated directly into the routing decision process. If a primary model experiences high latency or an elevated error rate, requests can be dynamically rerouted to a better-performing alternative, ensuring a seamless user experience and maintaining application responsiveness.

- Cost-Optimization Routing: Pricing varies across LLM providers and even across models from the same provider, so cost becomes a critical routing factor. The routing engine will incorporate cost data, allowing developers to set preferences (e.g., "prioritize lowest cost," "balance cost and performance") and have the system automatically select the most economical model for a given request without sacrificing quality or speed beyond acceptable thresholds.

- Load Balancing Across Providers: For applications with high request volumes, the intelligent routing mechanism will distribute traffic across multiple compatible LLM providers to prevent any single endpoint from becoming a bottleneck, further enhancing reliability and scalability.

- Policy Engine for Custom Routing Rules: Developers will gain the ability to define their own custom routing policies. This could involve rules like "for sensitive data, always use Model A," or "for prompts over 500 tokens, use Model B due to better long-context handling," providing unparalleled control over how their requests are handled.
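One way to combine custom policies with cost- and performance-based selection is sketched below. Every name here (`ModelStats`, `Request`, `route`, the two example policies, the `model-a` and `model-b` labels) is hypothetical, and the weighted score is just one simple choice of objective, not the planned V2 algorithm.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ModelStats:
    name: str
    cost_per_1k: float      # assumed pricing unit
    avg_latency_ms: float   # rolling average from monitoring
    error_rate: float       # fraction of failed calls, 0.0 to 1.0


@dataclass
class Request:
    prompt: str
    token_count: int
    sensitive: bool = False


# A policy returns a model name to force, or None to defer to the next rule.
Policy = Callable[[Request], Optional[str]]


def sensitive_policy(req: Request) -> Optional[str]:
    """Example rule: sensitive data always goes to Model A."""
    return "model-a" if req.sensitive else None


def long_context_policy(req: Request) -> Optional[str]:
    """Example rule: prompts over 500 tokens go to Model B."""
    return "model-b" if req.token_count > 500 else None


def route(
    req: Request,
    candidates: List[ModelStats],
    policies: List[Policy],
    cost_weight: float = 0.5,
    max_error_rate: float = 0.05,
) -> str:
    """Apply custom policies first, then pick the best-scoring healthy model."""
    for policy in policies:
        forced = policy(req)
        if forced is not None:
            return forced
    healthy = [m for m in candidates if m.error_rate <= max_error_rate]
    if not healthy:
        raise RuntimeError("no healthy model available")
    # Normalize cost and latency to 0..1 so the two terms are comparable.
    max_cost = max(m.cost_per_1k for m in healthy) or 1.0
    max_latency = max(m.avg_latency_ms for m in healthy) or 1.0

    def score(m: ModelStats) -> float:
        return (cost_weight * m.cost_per_1k / max_cost
                + (1.0 - cost_weight) * m.avg_latency_ms / max_latency)

    return min(healthy, key=score).name
```

Setting `cost_weight=1.0` corresponds to "prioritize lowest cost", while `0.5` approximates "balance cost and performance"; models above the error-rate threshold are excluded before scoring.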

C. Advanced Caching and Rate Limiting

To further optimize performance and cost, we will implement sophisticated caching and advanced rate-limiting mechanisms.

- Optimized Caching Strategies: Intelligent caching will store responses to frequently asked or identical prompts, reducing the need to call external LLM APIs. This not only speeds up response times but also significantly lowers operational costs by minimizing API usage. Cache invalidation strategies will be carefully designed to ensure data freshness.

- Granular Rate Limiting: Beyond basic per-API key rate limits, we will introduce more granular controls, allowing rate limits to be applied per model, per endpoint, or even tailored to specific user tiers. This ensures system stability and prevents any single user or application from monopolizing resources.
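As an illustration of the two mechanisms above, the sketch below pairs a TTL-based response cache with a token-bucket limiter. The class names, the cache key derivation, and the injectable `clock` parameter are assumptions made for the example; a production design would also need eviction and shared state across gateway instances.

```python
import hashlib
import json
import time


class ResponseCache:
    """Caches responses for identical (model, prompt, params) requests with a TTL."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}  # key -> (expires_at, response)

    @staticmethod
    def make_key(model: str, prompt: str, params: dict) -> str:
        # sort_keys makes the key independent of parameter ordering.
        raw = json.dumps({"model": model, "prompt": prompt, "params": params},
                         sort_keys=True)
        return hashlib.sha256(raw.encode("utf-8")).hexdigest()

    def get(self, key: str):
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, response = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: invalidate on read
            return None
        return response

    def put(self, key: str, response: str) -> None:
        self._store[key] = (self._clock() + self._ttl, response)


class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `refill_per_second`."""

    def __init__(self, capacity: int, refill_per_second: float, clock=time.monotonic):
        self._capacity = capacity
        self._refill = refill_per_second
        self._clock = clock
        self._tokens = float(capacity)
        self._last = clock()

    def allow(self) -> bool:
        now = self._clock()
        elapsed = now - self._last
        self._tokens = min(self._capacity, self._tokens + elapsed * self._refill)
        self._last = now
        if self._tokens >= 1:
            self._tokens -= 1
            return True
        return False
```

Keeping one `TokenBucket` per `(api_key, model)` pair, for example in a dictionary, would give the per-model granularity described above.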

D. Monitoring and Analytics Dashboard

A critical component of managing and optimizing AI workflows is comprehensive visibility. Phase 2 will deliver an advanced monitoring and analytics dashboard.

- Real-time Usage Statistics: Users will have access to detailed, real-time data on their LLM usage, including total requests, token counts (input/output), and cost breakdown by model and provider.

- Performance Metrics per Model/Provider: The dashboard will display key performance indicators (KPIs) such as average latency, success rates, and throughput for each integrated LLM, enabling developers to make informed decisions about model selection and routing policies.

- Error Logging and Debugging Tools: Centralized error logging and advanced debugging tools will help developers quickly identify and resolve issues, understand the root cause of failures, and optimize their prompts or routing rules.

- Cost Analysis and Budget Management: Features to analyze and project costs based on usage patterns, set budget alerts, and generate detailed billing reports will be crucial for enterprises managing significant AI spend.
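The cost breakdown and budget alerts described above reduce to simple aggregation over usage records. The sketch below assumes a hypothetical `UsageRecord` shape and an alert threshold expressed as a fraction of the budget; neither comes from a published OpenClaw schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class UsageRecord:
    """Hypothetical per-request usage entry."""
    provider: str
    model: str
    input_tokens: int
    output_tokens: int
    cost_usd: float


def cost_by_model(records: List[UsageRecord]) -> Dict[Tuple[str, str], float]:
    """Sum cost per (provider, model) pair."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for r in records:
        totals[(r.provider, r.model)] += r.cost_usd
    return dict(totals)


def total_tokens(records: List[UsageRecord]) -> Tuple[int, int]:
    """Return (input_tokens, output_tokens) summed over all records."""
    return (sum(r.input_tokens for r in records),
            sum(r.output_tokens for r in records))


def budget_alert(records: List[UsageRecord], budget_usd: float,
                 alert_fraction: float = 0.8) -> bool:
    """True once spend reaches the given fraction of the budget."""
    spent = sum(r.cost_usd for r in records)
    return spent >= budget_usd * alert_fraction
```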

E. Key Milestones for Phase 2

This table outlines the major milestones for the Enhanced Functionality and Advanced Routing phase.

| Milestone ID | Description | Target Completion | Status |
|---|---|---|---|
| P2-M1 | Integration of 5+ New LLM Providers (Commercial & Open-Source) | Q2 2025 | Planning |
| P2-M2 | Intelligent LLM Routing Engine (V2) Release | Q3 2025 | Planning |
| P2-M3 | Advanced Caching Mechanisms Implementation | Q3 2025 | Planning |
| P2-M4 | Comprehensive Monitoring & Analytics Dashboard | Q4 2025 | Planning |
| P2-M5 | Custom Routing Policy Engine (Beta) | Q4 2025 | Planning |

By the end of Phase 2, OpenClaw will transform into an intelligent and highly optimized Unified API platform, offering extensive Multi-model support and sophisticated LLM routing capabilities that provide significant advantages in performance, cost, and development agility.

IV. Phase 3: Enterprise Features and Ecosystem Expansion (Q1 2026 - Q3 2026)

Phase 3 of the OpenClaw Project elevates the platform to enterprise-grade readiness and focuses on fostering a vibrant, expansive ecosystem. This phase addresses the needs of large organizations around security, compliance, and advanced workflow integration, and it empowers the community to contribute to and extend OpenClaw's capabilities. It's about maturing OpenClaw into a comprehensive AI orchestration layer.

A. Enterprise-Grade Security and Compliance

For enterprises, security, privacy, and regulatory compliance are non-negotiable. OpenClaw will invest heavily in features that meet these stringent requirements.

- Advanced Data Privacy Controls: Implementation of features like data redaction, encryption-at-rest and in-transit, and robust data retention policies to comply with regulations such as GDPR, HIPAA, and CCPA. Users will have explicit control over how their data is handled, processed, and stored.

- Role-Based Access Control (RBAC): Granular RBAC will allow organizations to define specific permissions for different user roles within their OpenClaw accounts, ensuring that sensitive functionalities and data are only accessible to authorized personnel. This includes managing access to API keys, model configurations, and billing information.

- Audit Logging and Reporting: Comprehensive audit trails will track all significant actions performed within the platform, providing transparency and accountability crucial for compliance audits. Detailed reports on usage, security events, and configuration changes will be available.

- Virtual Private Cloud (VPC) & On-Premise Deployment Options: For organizations with strict data sovereignty or network security requirements, OpenClaw will explore dedicated VPC deployments and managed on-premise solutions. These options would allow enterprises to use OpenClaw's intelligent LLM routing and Multi-model support within their private network boundaries.
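The RBAC and audit logging items above fit together naturally: every permission check is also an auditable event. The following sketch assumes hypothetical role names, permission strings, and an in-memory `AuditLog`; a real deployment would persist entries to tamper-evident storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical role-to-permission mapping covering the areas named above.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "admin": {"keys:manage", "models:configure", "billing:view"},
    "developer": {"models:configure"},
    "finance": {"billing:view"},
}


@dataclass
class AuditLog:
    """In-memory audit trail; each entry records who tried what and the outcome."""
    entries: List[dict] = field(default_factory=list)

    def record(self, user: str, action: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "allowed": allowed,
        })


def check_access(user: str, role: str, permission: str, audit: AuditLog) -> bool:
    """Return whether the role grants the permission, logging every decision."""
    allowed = permission in ROLE_PERMISSIONS.get(role, set())
    audit.record(user, permission, allowed)
    return allowed
```

Denied attempts are logged as well as granted ones, since failed access is often what a compliance audit looks for.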

B. Workflow Orchestration and Agent Support

Modern AI applications often involve complex sequences of LLM calls, tool use, and interactions with external systems. OpenClaw will provide tools to simplify the orchestration of these sophisticated workflows.

- No-Code/Low-Code Workflow Builder: A visual interface will allow users to design and deploy multi-step AI workflows, chaining together different LLM calls, external API integrations, and conditional logic. This could include workflows for document summarization, sentiment analysis followed by routing to customer support, or content generation with human review loops.

- Integration with AI Agent Frameworks: OpenClaw will offer first-class integration with popular AI agent frameworks (e.g., LangChain, LlamaIndex). This allows developers to easily leverage OpenClaw's Unified API and intelligent LLM routing as the backend for their AI agents, providing agents with access to a diverse array of models and robust routing capabilities.

- Function Calling and Tool Use Across Models: Extending the standardized API to support sophisticated function calling and tool use, allowing LLMs to interact with external systems (e.g., databases, CRM, web search APIs) through OpenClaw's controlled environment. This enables the creation of more powerful and autonomous AI applications, leveraging the strengths of various models for different tool interactions.

C. Custom Model Integration and Fine-tuning Support

Recognizing that many enterprises have unique data and require highly specialized LLMs, OpenClaw will introduce capabilities for integrating and managing custom models.

- Bring Your Own Model (BYOM) Feature: Enterprises will be able to securely integrate their own fine-tuned or custom-trained LLMs into the OpenClaw platform. This allows these private models to benefit from OpenClaw's Unified API, LLM routing, monitoring, and security features, treating them as first-class citizens alongside public models.

- Managed Fine-tuning Services (Beta): OpenClaw will explore offering managed services for fine-tuning existing LLMs using proprietary datasets. This would simplify the process of creating highly specialized models, which can then be deployed and managed through OpenClaw, benefiting from its optimized routing and performance.

D. Community Contributions and Plugin Architecture

To foster innovation and extend the platform's reach, OpenClaw will open its doors to external contributions and develop a robust plugin architecture.

- OpenClaw Plugin SDK: A Software Development Kit specifically for building plugins will be released, enabling community developers and third-party vendors to create custom connectors for new LLM providers, add new routing algorithms, develop specialized pre-processing or post-processing modules, or integrate with other AI tools.

- Marketplace for Extensions: A curated marketplace within the developer portal will allow users to discover, install, and manage plugins developed by the community and third-party partners. This will significantly expand OpenClaw's functionality and cater to highly specialized use cases without requiring core development team intervention for every integration.

- Open-Source Contributions to Core Components: Selected components of the OpenClaw platform (e.g., specific model connectors, utility libraries) may be open-sourced, inviting direct contributions from the community to improve and expand the core platform itself.
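A plugin architecture of this kind usually rests on a small connector interface plus a registry. The sketch below is an assumption about what such an SDK surface might look like: `ProviderConnector`, `register`, `get_connector`, and the toy `EchoConnector` are invented for illustration.

```python
from abc import ABC, abstractmethod
from typing import Dict, Type


class ProviderConnector(ABC):
    """Hypothetical base class that every provider plugin would implement."""

    name: str  # unique identifier used to look the connector up

    @abstractmethod
    def generate(self, prompt: str, **params) -> str:
        """Send the prompt to the underlying model and return its text output."""


_REGISTRY: Dict[str, Type[ProviderConnector]] = {}


def register(connector_cls: Type[ProviderConnector]) -> Type[ProviderConnector]:
    """Class decorator that makes a connector discoverable by name."""
    if connector_cls.name in _REGISTRY:
        raise ValueError(f"connector already registered: {connector_cls.name}")
    _REGISTRY[connector_cls.name] = connector_cls
    return connector_cls


def get_connector(name: str) -> ProviderConnector:
    """Instantiate a registered connector, or fail with a clear error."""
    try:
        return _REGISTRY[name]()
    except KeyError:
        raise LookupError(f"no connector named {name!r}") from None


@register
class EchoConnector(ProviderConnector):
    """Toy plugin standing in for a real provider; it just uppercases the prompt."""

    name = "echo"

    def generate(self, prompt: str, **params) -> str:
        return prompt.upper()
```

A community plugin would then only need to subclass `ProviderConnector` and apply `@register`; the gateway core never imports the plugin by name.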

E. Key Milestones for Phase 3

This table summarizes the ambitious milestones for the Enterprise Features and Ecosystem Expansion phase.

| Milestone ID | Description | Target Completion | Status |
|---|---|---|---|
| P3-M1 | Advanced RBAC & Audit Logging Release | Q1 2026 | Planning |
| P3-M2 | No-Code Workflow Builder (Alpha) | Q2 2026 | Planning |
| P3-M3 | Bring Your Own Model (BYOM) Feature | Q2 2026 | Planning |
| P3-M4 | Plugin SDK & Initial Marketplace Launch | Q3 2026 | Planning |
| P3-M5 | Integration with Key AI Agent Frameworks | Q3 2026 | Planning |

By the culmination of Phase 3, OpenClaw will have matured into a powerful, secure, and extensible platform, fully equipped to meet the demanding requirements of enterprise clients and capable of driving a rich ecosystem of AI innovations built on its Unified API, advanced LLM routing, and comprehensive Multi-model support.

V. Addressing Challenges and Ensuring Sustainability

Developing a platform as ambitious as OpenClaw is not without its significant challenges. Navigating these complexities and ensuring the long-term sustainability of the project is as critical as delivering innovative features. Our roadmap acknowledges these hurdles and outlines our strategic approach to overcome them.

A. Technical Complexities

The dynamic nature of the LLM landscape presents continuous technical challenges.

- Rapidly Evolving LLM Ecosystem: New models, providers, and functionalities emerge constantly. Maintaining comprehensive Multi-model support requires continuous development of new connectors, adaptation to API changes, and keeping up with evolving best practices for prompt engineering and model interaction. Our strategy involves building a highly modular and extensible architecture, minimizing the effort required to integrate new components and allowing for agile adaptation.

- Latency and Performance Optimization: While LLM routing helps optimize performance, managing end-to-end latency across multiple external APIs, network hops, and internal processing is a complex task. We will invest in advanced distributed systems architecture, edge computing strategies, and continuous performance profiling to ensure minimal overhead and maximum responsiveness. This includes optimizing our caching layers, request queuing, and parallel processing capabilities.

- Data Consistency and Integrity: Ensuring that data flows seamlessly and consistently between disparate LLM APIs and OpenClaw's standardized format, especially when dealing with various tokenization schemes, context windows, and output structures, requires meticulous engineering. Robust validation, transformation, and error recovery mechanisms will be a core part of our data pipeline.

- Scalability Under High Load: As adoption grows, OpenClaw must scale to handle massive volumes of requests. Our microservices architecture, combined with cloud-native principles like containerization and serverless computing, will be designed for elastic scalability. Load balancing, auto-scaling groups, and intelligent resource allocation will be continuously optimized.

B. Economic Models and Sustainable Operations

Building and maintaining a complex platform requires a sustainable economic model that benefits both users and the project.

- Fair and Transparent Pricing: Our goal is to offer a transparent and competitive pricing model that reflects the value provided. This will likely involve a combination of usage-based pricing (e.g., per token, per request), tiered access for different feature sets, and potentially enterprise-level agreements. The cost-effective AI aspect derived from intelligent routing will be a core part of our value proposition.

- Operational Costs Management: Running a high-throughput, low-latency platform with extensive Multi-model support involves significant infrastructure and compute costs. We will continuously optimize our own infrastructure, leverage cloud cost management tools, and implement efficient resource utilization strategies to ensure we can pass on savings to our users.

- Value Proposition Articulation: Clearly communicating the unique value of OpenClaw (simplified integration through a Unified API, optimized performance and cost via LLM routing, and unparalleled flexibility through comprehensive Multi-model support) is essential for adoption and revenue generation.

C. Ethical Considerations and Responsible AI

As a platform facilitating access to powerful AI, OpenClaw has a responsibility to address ethical concerns.

- Bias and Fairness: LLMs can inherit biases from their training data. While OpenClaw does not directly train models, our platform will offer tools and guidance to help developers identify and mitigate bias in their LLM interactions. This could include pre-processing filters, post-processing validation, and recommendations for model selection based on known bias characteristics.

- Transparency and Explainability: Providing visibility into which model was used for a particular request (especially with intelligent LLM routing), why it was chosen, and how the output was generated, is crucial for building trust and enabling explainable AI applications. Our monitoring and analytics dashboard will be designed with transparency in mind.

- Responsible Use Policies: Clear terms of service and acceptable use policies will be enforced to prevent the misuse of OpenClaw for harmful or unethical purposes. We will actively work with the community and experts to evolve these policies as the AI landscape matures.

D. Community Building and Feedback Loop

OpenClaw's success hinges on a vibrant and engaged community.

- Active Engagement: We are committed to fostering an active dialogue with our users through forums, social media, and direct channels. Regular updates, Q&A sessions, and community calls will keep our users informed and involved.

- Feedback Integration: User feedback will be systematically collected, analyzed, and prioritized in our development roadmap. We will maintain an open issue tracker and feature request system, ensuring that the community's voice directly shapes the evolution of OpenClaw.

- Developer Advocacy: A dedicated developer advocacy program will support developers using OpenClaw, create useful content, and represent the community's needs internally.

By proactively addressing these challenges, OpenClaw aims to build not just a technologically advanced platform, but also a resilient, ethically conscious, and community-driven project that can adapt and thrive in the ever-changing world of artificial intelligence.

VI. The Broader Impact: Reshaping AI Development

The OpenClaw Project, with its ambitious roadmap, is poised to create a profound impact on the landscape of AI development. By focusing on simplification, optimization, and extensibility, we aim to fundamentally reshape how developers, businesses, and researchers interact with large language models. The implications of this approach extend far beyond mere technical convenience; they touch upon the very accessibility, efficiency, and future trajectory of AI innovation.

One of the most significant impacts will be the democratization of access to advanced AI. Currently, only well-resourced organizations or highly specialized teams can afford the time and expertise to integrate and manage a diverse portfolio of LLMs. OpenClaw's Unified API breaks down these barriers, offering a single, consistent interface that drastically reduces the complexity of accessing powerful models from various providers. This means startups with limited engineering resources, individual developers, and even non-technical users can leverage state-of-the-art AI capabilities without the steep learning curve and integration headaches. It levels the playing field, ensuring that innovation is not stifled by technical overhead.

Furthermore, OpenClaw will accelerate innovation for startups and enterprises alike. By abstracting away the intricacies of multi-provider integrations, developers can dedicate more time and resources to building core application logic and unique user experiences, rather than wrestling with API variations. The ability to seamlessly switch between models, leverage intelligent LLM routing for performance and cost optimization, and access a vast array of LLMs through comprehensive Multi-model support means faster experimentation, quicker iteration cycles, and the rapid deployment of more sophisticated AI-driven features. This efficiency translates directly into a competitive advantage for businesses and a faster pace of scientific discovery for researchers.

The platform will also foster a more competitive and diverse LLM ecosystem. By providing a neutral, generalized access layer, OpenClaw encourages LLM providers, both commercial and open-source, to compete on the merits of their models – performance, cost, and unique capabilities – rather than relying on API lock-in. This competition drives continuous improvement and innovation across the entire LLM landscape, ultimately benefiting end-users with better, more specialized, and more affordable AI solutions. As our plugin architecture and BYOM features mature, OpenClaw will become a hub where niche models can gain broader exposure and specialized solutions can flourish within a standardized framework.

Consider the example of XRoute.AI, a pioneering platform that embodies many of the principles OpenClaw champions. XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers, enabling seamless development of AI-driven applications, chatbots, and automated workflows. Its focus on low latency AI, cost-effective AI, and developer-friendly tools highlights the tangible benefits of such an approach. Like OpenClaw's vision, XRoute.AI empowers users to build intelligent solutions without the complexity of managing multiple API connections, offering high throughput, scalability, and a flexible pricing model. It serves as a real-world testament to the power of a Unified API with robust Multi-model support and intelligent LLM routing in delivering truly transformative AI capabilities, making advanced AI not just accessible but also practically viable for a wide range of applications and budgets.

In essence, OpenClaw is building the foundational infrastructure for the next generation of AI applications. We are not just creating a tool; we are cultivating an environment where innovation thrives, where the barriers to entry for advanced AI are dramatically lowered, and where the full potential of large language models can be unleashed across every sector. The journey outlined in this roadmap is ambitious, but the potential rewards – a more intelligent, connected, and efficient world – make it a profoundly worthwhile endeavor.

Conclusion

The OpenClaw Project is embarking on an ambitious journey to redefine how we interact with the rapidly evolving world of Large Language Models. Our roadmap, from establishing the foundational Unified API and initial Multi-model support to deploying sophisticated LLM routing and comprehensive enterprise features, represents a deliberate and strategic approach to overcoming the pervasive fragmentation in the AI ecosystem. We envision a future where accessing and optimizing AI models is no longer a complex engineering challenge but a seamless, integrated experience.

By focusing on a single, consistent interface, OpenClaw promises to simplify development, accelerate innovation, and significantly reduce the operational overhead associated with leveraging diverse LLMs. Our commitment to intelligent routing ensures that applications can dynamically select the best model for any given task, optimizing for performance, cost-effectiveness, and reliability. As we expand our Multi-model support to encompass both commercial and open-source solutions, we will ensure that developers have unparalleled flexibility and choice, empowering them to build more resilient, intelligent, and context-aware AI applications.

The path ahead involves navigating technical complexities, establishing sustainable economic models, and adhering to the highest ethical standards. However, with a strong foundation, a clear vision, and a deep commitment to community collaboration, we are confident in our ability to deliver a platform that not only meets but anticipates the future needs of the AI landscape.

We invite developers, researchers, and enterprises to join us on this exciting journey. Your feedback, contributions, and engagement will be instrumental in shaping OpenClaw into the definitive platform for AI orchestration. Together, we can build the future of AI development, making advanced intelligence accessible, manageable, and impactful for everyone.

Frequently Asked Questions (FAQ)

Q1: What is the core problem OpenClaw aims to solve?

A1: OpenClaw addresses the fragmentation in the LLM ecosystem. Developers currently face the challenge of integrating and managing multiple distinct APIs, authentication methods, and data formats for various LLMs. OpenClaw aims to simplify this by providing a single, Unified API endpoint to access a wide range of LLMs, reducing complexity and accelerating development.

Q2: How does OpenClaw ensure optimal performance and cost-effectiveness?

A2: OpenClaw employs intelligent LLM routing mechanisms. This means that based on factors like prompt content, real-time latency, provider costs, and custom policies, OpenClaw can automatically direct your requests to the most suitable LLM. This ensures you're always using the best model for the job, optimizing for both performance (e.g., low latency AI) and cost (e.g., cost-effective AI).

Q3: What kind of Large Language Models will OpenClaw support?

A3: OpenClaw is committed to comprehensive Multi-model support. This includes popular commercial models from providers like OpenAI, Anthropic, and Google, as well as open-source models available on platforms like Hugging Face. We also plan to enable users to integrate their own self-hosted or fine-tuned custom models, making the platform adaptable to virtually any LLM.

Q4: Is OpenClaw suitable for enterprise-level applications?

A4: Absolutely. While OpenClaw benefits all developers, Phase 3 of our roadmap specifically focuses on enterprise-grade features. This includes advanced security (RBAC, audit logging), data privacy controls, VPC deployment options, and tools for complex workflow orchestration. Our goal is to provide a robust and compliant platform that meets the demanding requirements of large organizations.

Q5: How can the community get involved with the OpenClaw Project?

A5: We strongly encourage community involvement! You can participate by providing feedback on our developer portal, contributing to our community forums, and staying updated on our progress. In later phases (Phase 3), we plan to release a Plugin SDK to allow developers to build their own integrations and extensions, and potentially open-source certain core components for direct contributions. We believe a vibrant community is key to OpenClaw's success.
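To make the routing idea from Q2 more concrete, here is a minimal sketch of how a request might be matched to a model using cost, latency, and a simple policy. It is an illustration only, not OpenClaw's actual routing engine: the `ModelStats` type, the `route` function, the model names, and every number below are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelStats:
    """Hypothetical per-model metadata a router might track."""
    name: str
    cost_per_1k_tokens: float   # illustrative price, not a real quote
    latency_ms: float           # illustrative recent latency
    tags: frozenset = frozenset()


def route(models, *, required_tag=None, cost_weight=0.5, latency_weight=0.5):
    """Return the name of the candidate with the lowest weighted score.

    required_tag acts as a simple custom policy: only models carrying
    that tag are considered.
    """
    candidates = [m for m in models
                  if required_tag is None or required_tag in m.tags]
    if not candidates:
        raise ValueError("no model satisfies the routing policy")

    # Normalize each factor by the largest value among the candidates
    # so that cost and latency are comparable.
    max_cost = max(m.cost_per_1k_tokens for m in candidates) or 1.0
    max_latency = max(m.latency_ms for m in candidates) or 1.0

    def score(m):
        return (cost_weight * m.cost_per_1k_tokens / max_cost
                + latency_weight * m.latency_ms / max_latency)

    return min(candidates, key=score).name


# Made-up catalogue used only for demonstration.
MODELS = [
    ModelStats("fast-small", 0.2, 120.0, frozenset({"chat"})),
    ModelStats("balanced", 0.6, 300.0, frozenset({"chat", "code"})),
    ModelStats("large-code", 2.0, 900.0, frozenset({"code"})),
]

if __name__ == "__main__":
    print(route(MODELS))                       # cheapest and fastest overall
    print(route(MODELS, required_tag="code"))  # restricted to code-capable models
```

A production router would presumably also weigh prompt content and live provider health; the weighted score here is just one simple way to combine the factors the answer lists.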

🚀 You can securely and efficiently connect to a broad range of large language models with XRoute.AI in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.
2. Upon registration, explore the platform.
3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

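    # Assumes the shell variable "apikey" holds your XRoute API KEY.
    # The Authorization header uses double quotes so the shell expands $apikey.
    curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
      --header "Authorization: Bearer $apikey" \
      --header 'Content-Type: application/json' \
      --data '{
        "model": "gpt-5",
        "messages": [
          {
            "content": "Your text prompt here",
            "role": "user"
          }
        ]
      }'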
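For applications written in Python, the same request can be assembled with the standard library alone. The sketch below mirrors the curl sample above (same endpoint, headers, and JSON body). The `build_chat_request` helper and the `XROUTE_API_KEY` environment variable name are assumptions made for illustration, and because the response schema is not described here, the script simply prints whatever JSON comes back.

```python
import json
import os
import urllib.request

# Endpoint taken from the curl sample above.
API_URL = "https://api.xroute.ai/openai/v1/chat/completions"


def build_chat_request(api_key, prompt, model="gpt-5"):
    """Build (but do not send) a POST request matching the curl sample."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # XROUTE_API_KEY is a hypothetical variable name; nothing is sent if it is unset.
    key = os.environ.get("XROUTE_API_KEY")
    if key:
        request = build_chat_request(key, "Your text prompt here")
        with urllib.request.urlopen(request, timeout=30) as response:
            print(json.load(response))
```

Keeping request construction separate from sending makes the helper easy to unit test without network access.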

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.
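The failover behavior mentioned above happens on the platform side, and its internals are not documented here. As a rough client-side illustration of the general pattern only, the sketch below tries a list of providers in order and returns the first successful result. The `call_with_failover` function and the stand-in `flaky` and `healthy` providers are hypothetical.

```python
def call_with_failover(providers, prompt):
    """Try each (name, send) pair in order and return (name, result)
    from the first provider that does not raise."""
    errors = []
    for name, send in providers:
        try:
            return name, send(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in providers used only to demonstrate the control flow.
def flaky(prompt):
    raise TimeoutError("simulated timeout")


def healthy(prompt):
    return f"echo: {prompt}"


if __name__ == "__main__":
    print(call_with_failover([("primary", flaky), ("backup", healthy)], "hi"))
```

A real implementation would likely add retries with backoff and distinguish retryable errors (timeouts, rate limits) from permanent ones such as authentication failures.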

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.