OpenClaw Skill Manifest Explained: Setup, Best Practices & Tips

In the rapidly evolving landscape of artificial intelligence, the ability to effectively manage, orchestrate, and deploy diverse AI capabilities is paramount. As large language models (LLMs) grow in sophistication and specialization, developers and enterprises face the intricate challenge of integrating these powerful tools into cohesive, intelligent applications. This is where frameworks designed for structured AI skill management become indispensable. Among these emerging paradigms, the concept of an "OpenClaw Skill Manifest" stands out as a powerful declarative approach to defining and deploying AI-driven functionalities.

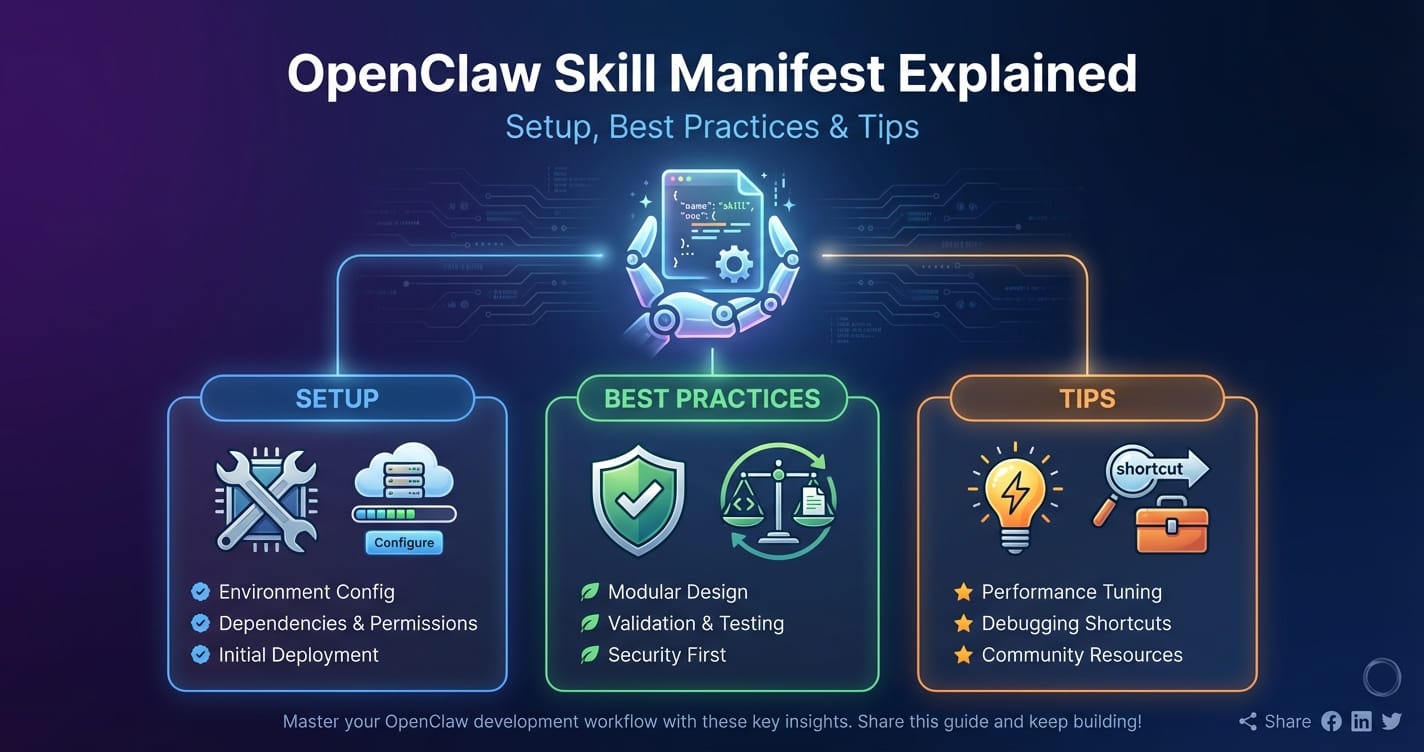

This comprehensive guide delves into the OpenClaw Skill Manifest, dissecting its core components, walking through its setup, and outlining best practices and expert tips to harness its full potential. We will explore how a well-defined manifest acts as the blueprint for an AI skill, enabling seamless integration with underlying Unified LLM API platforms, robust multi-model support, and intelligent LLM routing. By the end of this article, you will possess a profound understanding of how to craft, implement, and optimize OpenClaw Skill Manifests to build scalable, efficient, and highly intelligent AI applications.

Understanding the Core: What is an OpenClaw Skill Manifest?

At its heart, an OpenClaw Skill Manifest is a declarative configuration file—often expressed in YAML or JSON—that meticulously defines an AI skill. Think of it as a comprehensive contract for an AI capability, specifying everything from its identity and purpose to its operational requirements and interaction protocols. It abstracts away the underlying complexities of model selection, API calls, and data handling, allowing developers to focus on the logical function of the skill itself.

The primary purpose of a Skill Manifest is to provide a standardized, machine-readable description of an AI skill, enabling an OpenClaw runtime environment (or a similar AI orchestration platform) to:

- Discover and Understand: Identify available skills, their functionalities, and how they can be invoked.

- Validate Inputs and Outputs: Ensure that data passed to and from the skill conforms to expected schemas, preventing errors and ensuring data integrity.

- Orchestrate Execution: Determine which LLM or sequence of operations is required to fulfill the skill's objective.

- Manage Resources: Understand the computational, cost, and latency requirements of the skill, facilitating optimal LLM routing and resource allocation.

- Enable Multi-model support: Specify dependencies on different LLMs or even external tools, allowing the skill to leverage the strengths of specialized models.

Without a well-structured manifest, managing a growing library of AI skills becomes an ad-hoc, error-prone endeavor. Each skill might require bespoke integration logic, making updates, scaling, and maintenance a nightmare. The Skill Manifest brings order to this potential chaos, establishing a robust framework for building modular, reusable, and scalable AI components.

Why is it Crucial for Scalable AI Applications?

The importance of a Skill Manifest for scalable AI applications cannot be overstated, especially in an era defined by the proliferation of specialized LLMs and diverse AI services.

- Modularity and Reusability: A skill defined by a manifest becomes a self-contained unit. It can be developed, tested, and deployed independently, and then reused across multiple applications or workflows. This fosters a highly modular architecture, accelerating development and reducing redundancy.

- Abstraction and Simplification: Developers don't need to worry about the underlying LLM APIs, model versions, or hosting environments. The manifest defines the "what," and the OpenClaw platform handles the "how," often through a Unified LLM API. This abstraction significantly lowers the barrier to entry for building complex AI features.

- Automated Orchestration: With a clear definition of each skill's inputs, outputs, and dependencies, the OpenClaw system can automatically orchestrate complex workflows. It can chain skills together, manage concurrent executions, and apply intelligent LLM routing decisions without manual intervention.

- Version Control and Evolution: Manifests are text-based configuration files, making them ideal for version control systems. This allows teams to track changes, revert to previous versions, and manage the evolution of skills over time, critical for maintaining stability and enabling continuous improvement.

- Enhanced Reliability and Debugging: Clear input/output schemas and defined error handling mechanisms improve the reliability of skills. When issues arise, the manifest provides a clear reference point, simplifying debugging and problem resolution.

- Cost and Performance Optimization: By declaring model requirements and performance expectations within the manifest, the OpenClaw platform can make informed decisions about LLM routing, selecting the most cost-effective or lowest-latency model for a given task, crucial for managing operational costs in large-scale deployments.

In essence, the OpenClaw Skill Manifest transforms the chaotic integration of disparate AI components into a streamlined, governed, and highly efficient process, paving the way for truly scalable and adaptable AI systems.

The Architecture Behind OpenClaw: How Skills Interact with LLMs

To fully appreciate the power of an OpenClaw Skill Manifest, it's essential to understand the underlying architectural principles that enable skills to interact seamlessly with a multitude of AI models. This interaction hinges on three critical concepts: the Unified LLM API, robust multi-model support, and intelligent LLM routing.

The Backbone: Unified LLM API

The proliferation of LLMs from various providers (OpenAI, Anthropic, Google, Meta, etc.) has introduced significant complexity for developers. Each provider typically offers its own API, with unique authentication methods, endpoint structures, request/response formats, and pricing models. Integrating even a handful of these models often means writing bespoke client code for each, leading to fragmented development efforts and maintenance overhead.

A Unified LLM API addresses this challenge by providing a single, standardized interface to access multiple LLMs from different providers. Instead of interacting directly with dozens of distinct APIs, developers interact with one API endpoint, which then intelligently routes requests to the appropriate backend model.

Key Benefits of a Unified LLM API:

- Simplified Integration: Developers write code once against a common interface, significantly reducing development time and complexity. This is particularly beneficial for applications requiring access to a diverse range of models.

- Interchangeability: Models can be swapped out or updated without requiring changes to the application's core logic. If a new, more performant, or cost-effective model emerges, it can be integrated into the unified API layer, and applications leveraging it can benefit immediately.

- Centralized Management: Authentication, rate limiting, logging, and monitoring can be managed centrally at the unified API layer, providing a single point of control and observability.

- Enhanced Multi-model support: By standardizing interactions, a unified API inherently enables and simplifies the use of multiple models within a single application or workflow.

In the context of OpenClaw, the Skill Manifest often specifies abstract model requirements (e.g., "needs a summarization model," "requires a code generation model"). It's the Unified LLM API layer that translates these abstract requirements into concrete calls to specific models from various providers, acting as a crucial intermediary between the skill's declarative needs and the operational realities of diverse LLM services.
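The adapter idea behind a unified API can be sketched in a few lines. This is a minimal illustration, not OpenClaw's or any vendor's actual interface: `CompletionRequest`, `UnifiedClient`, the `provider/model` naming scheme, and the two fake adapters are all hypothetical stand-ins for real provider calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class CompletionRequest:
    model: str            # hypothetical "provider/model" identifier
    prompt: str
    max_tokens: int = 256


# Each adapter hides one provider's request/response format.
# These are stand-ins: a real adapter would call the provider's HTTP API.
def fake_provider_a(req: CompletionRequest) -> str:
    return f"[provider-a:{req.model}] {req.prompt[:20]}"


def fake_provider_b(req: CompletionRequest) -> str:
    return f"[provider-b:{req.model}] {req.prompt[:20]}"


class UnifiedClient:
    """Single entry point that dispatches requests to provider adapters."""

    def __init__(self) -> None:
        self._adapters: Dict[str, Callable[[CompletionRequest], str]] = {}

    def register(self, provider: str, adapter: Callable[[CompletionRequest], str]) -> None:
        self._adapters[provider] = adapter

    def complete(self, req: CompletionRequest) -> str:
        # Split "provider/model" and hand the request to the matching adapter.
        provider, _, model = req.model.partition("/")
        if provider not in self._adapters:
            raise KeyError(f"no adapter registered for {provider!r}")
        return self._adapters[provider](CompletionRequest(model, req.prompt, req.max_tokens))


client = UnifiedClient()
client.register("a", fake_provider_a)
client.register("b", fake_provider_b)
reply = client.complete(CompletionRequest("a/small", "hello"))
```

Application code only ever calls `client.complete(...)`; swapping a model means changing the `model` string, not the calling code.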

Embracing Diversity: Multi-model Support

The notion that one LLM can do it all is quickly becoming a relic of the past. Different LLMs excel at different tasks due to variations in their training data, architectures, and fine-tuning. For instance, one model might be exceptional at creative writing, another at precise factual recall, and yet another at code generation or sentiment analysis. Building truly sophisticated AI applications necessitates the ability to leverage these specialized strengths.

Multi-model support refers to the capacity of an AI system to seamlessly integrate and utilize various LLMs within a single application or workflow. This goes beyond merely having access to multiple models; it implies an intelligent system that can dynamically select and invoke the most appropriate model for a given sub-task or query.

Why Multi-model Support is Critical for OpenClaw Skills:

- Optimized Performance: By using the best-suited model for each specific task within a skill, overall performance and accuracy are significantly enhanced. A translation skill might use one model for general text and another for technical documents.

- Cost Efficiency: Specialized, smaller models are often more cost-effective for specific tasks than larger, general-purpose models. Multi-model support allows for judicious model selection to optimize expenditure.

- Enhanced Capabilities: Combining the strengths of multiple models unlocks capabilities that no single model could achieve alone. For example, a complex skill might involve a factual query (Model A), followed by creative generation (Model B), and then summarization (Model C).

- Resilience and Fallback: If one model becomes unavailable or performs poorly for a specific query, the system can automatically fall back to an alternative model, improving the robustness of the application.

The OpenClaw Skill Manifest plays a pivotal role in declaring these multi-model dependencies. It can specify not just that an LLM is needed, but potentially which type of LLM, or even a list of preferred models, allowing the underlying platform to enact intelligent routing decisions.

Navigating Complexity: LLM Routing

With a Unified LLM API providing access and multi-model support enabling diversity, the next critical piece of the puzzle is intelligent LLM routing. Routing is the dynamic process of selecting the optimal LLM for a specific request, based on a set of predefined criteria or real-time conditions. This is not merely about choosing any model but choosing the best model for that particular interaction, considering factors like cost, latency, capability, and reliability.

Key Factors Influencing LLM Routing Decisions:

- Cost: Different models have different pricing structures. For non-critical tasks, routing to a cheaper model can significantly reduce operational expenses.

- Latency: Real-time applications demand low-latency responses. Routing to models with faster inference times is crucial for maintaining a responsive user experience.

- Capability/Accuracy: For highly specialized or sensitive tasks, the routing mechanism might prioritize models known for their superior accuracy or domain-specific expertise.

- Token Limits: Some models have stricter input or output token limits. Routing can take this into account to prevent truncation or errors.

- Availability/Reliability: If a primary model or provider is experiencing downtime or degraded performance, intelligent routing can automatically switch to a fallback option.

- Regulatory Compliance: Certain data might need to be processed by models hosted in specific geographical regions or by providers adhering to particular compliance standards.

- User/Application Context: The routing decision might depend on the specific user, the application making the request, or even the current state of a conversation.

Routing Strategies:

- Rule-Based Routing: Simple "if-then" rules (e.g., "if the query is about legal advice, use Legal-LLM-v2").

- Metadata-Based Routing: Using metadata embedded in the request or derived from the Skill Manifest (e.g., "this skill requires high accuracy, so use Model-X").

- Performance-Based Routing: Monitoring real-time latency and success rates of models and routing requests to the best-performing one.

- Cost-Optimized Routing: Continuously evaluating model costs and routing to the cheapest viable option that meets performance criteria.

- Dynamic/Adaptive Routing: More advanced systems might use machine learning to learn optimal routing strategies over time, adapting to changing model performance or cost structures.

The OpenClaw Skill Manifest supplies much of the information needed for intelligent LLM routing. By declaring `modelRequirements` (including limits such as `maxLatency` and `maxCostPerToken`) and `fallbackModels` within the manifest, it lets the OpenClaw platform make informed routing decisions at runtime. This capability is central to achieving both efficiency and resilience in complex AI deployments.
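As a rough sketch of cost-optimized routing under constraints, the function below filters a model catalog by health, capability, latency, and cost, then picks the cheapest survivor. The `ModelInfo` type, the catalog entries, and their numbers are invented for illustration; a real router would draw them from live metrics and provider pricing.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class ModelInfo:
    name: str
    capabilities: Set[str]
    cost_per_token: float   # USD, illustrative values only
    p95_latency_ms: int
    healthy: bool = True


def route(models: List[ModelInfo], required: Set[str],
          max_latency_ms: int, max_cost_per_token: float) -> Optional[ModelInfo]:
    """Return the cheapest healthy model that satisfies every constraint, or None."""
    viable = [
        m for m in models
        if m.healthy
        and required <= m.capabilities            # all required capabilities present
        and m.p95_latency_ms <= max_latency_ms
        and m.cost_per_token <= max_cost_per_token
    ]
    # Cheapest first; latency breaks ties between equally priced models.
    return min(viable, key=lambda m: (m.cost_per_token, m.p95_latency_ms), default=None)


catalog = [
    ModelInfo("model-x", {"summarization", "code"}, 0.000010, 1800),
    ModelInfo("model-y", {"summarization"}, 0.000002, 900),
    ModelInfo("model-z", {"summarization"}, 0.000001, 2500),
]
choice = route(catalog, {"summarization"}, max_latency_ms=2000, max_cost_per_token=0.000005)
```

Here `model-x` is excluded on cost and `model-z` on latency, so `model-y` is selected. Returning `None` when nothing qualifies leaves the caller free to relax constraints or move to a fallback.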

XRoute.AI: An Example of a Unified LLM API Platform

To illustrate these concepts, consider platforms like XRoute.AI. XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers, enabling seamless development of AI-driven applications, chatbots, and automated workflows. With a focus on low latency AI, cost-effective AI, and developer-friendly tools, XRoute.AI empowers users to build intelligent solutions without the complexity of managing multiple API connections. The platform’s high throughput, scalability, and flexible pricing model make it an ideal choice for projects of all sizes, from startups to enterprise-level applications.

XRoute.AI directly exemplifies how a Unified LLM API can abstract away the complexities of numerous providers, enabling robust multi-model support through a single interface, and offering the mechanisms for intelligent LLM routing to optimize for cost, latency, or specific model capabilities. An OpenClaw Skill Manifest integrated with such a platform would declare its needs, and XRoute.AI would handle the intricate process of fulfilling those needs by selecting and invoking the optimal LLM.

This architectural synergy—a declarative Skill Manifest communicating with a powerful, unified API platform capable of intelligent routing—forms the bedrock of scalable and high-performance AI applications.

Deconstructing the OpenClaw Skill Manifest: Key Components and Their Role

The OpenClaw Skill Manifest is more than just a list of parameters; it's a meticulously structured document that defines every aspect of an AI skill. While specific implementations may vary, a robust manifest will typically include the following key components, each playing a crucial role in enabling discoverability, execution, and management of the skill.

Let's imagine a YAML-based manifest structure for a skill designed to summarize text.

1. Metadata and Identification

These fields provide essential information about the skill itself, enabling easy identification and versioning.

- `apiVersion`: Specifies the version of the OpenClaw Skill Manifest schema the document adheres to. This is crucial for compatibility and future-proofing.
  - Example: `openclaw.ai/v1alpha1`

- `kind`: Declares the type of resource, which is `Skill` in this context.
  - Example: `Skill`

- `metadata`: A block containing administrative information.
  - `name`: A unique, human-readable identifier for the skill (e.g., `text-summarizer`, `customer-sentiment-analyzer`). Must be unique within a given scope.
  - `id`: A globally unique identifier (UUID or similar). Useful for tracking across systems.
  - `version`: The version of the skill itself (e.g., `1.0.0`, `2.1-beta`). Essential for managing updates and deployments.
  - `description`: A concise human-readable explanation of what the skill does. Crucial for discoverability and understanding.
  - `author`: The creator or team responsible for the skill.
  - `tags`: A list of keywords for categorization and search (e.g., `["summarization", "nlp", "content-generation"]`).

2. Input/Output Definition (Schemas)

This is perhaps the most critical section for ensuring data integrity and enabling automated validation. It defines what data the skill expects to receive and what it promises to return, and it is often written in JSON Schema so that standard validators can enforce it.

- `inputSchema`: Defines the structure, types, and constraints of the data the skill expects as input. Example (for text summarizer):

```yaml
inputSchema:
  type: object
  required: ["text", "length_preference"]
  properties:
    text:
      type: string
      description: "The input text to be summarized."
      minLength: 50
      maxLength: 10000
    length_preference:
      type: string
      description: "Preferred length of the summary."
      enum: ["short", "medium", "long"]
      default: "medium"
    format:
      type: string
      description: "Output format preference for the summary."
      enum: ["paragraph", "bullet_points"]
      default: "paragraph"
```

- `outputSchema`: Defines the structure, types, and constraints of the data the skill will produce as output. Example (for text summarizer):

```yaml
outputSchema:
  type: object
  required: ["summary", "token_count"]
  properties:
    summary:
      type: string
      description: "The summarized text."
      minLength: 10
    token_count:
      type: integer
      description: "Number of tokens in the generated summary."
      minimum: 1
    quality_score:
      type: number
      description: "An optional quality score for the summary."
      minimum: 0
      maximum: 1
```
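To make the validation role of these schemas concrete, here is a deliberately small validator that uses only the Python standard library and understands just a subset of JSON Schema (`required`, `type`, `enum`, `minLength`, `maxLength`). It is a sketch for illustration; the function name `validate` and its error-message format are assumptions, and a production system would more likely rely on a full JSON Schema implementation.

```python
from typing import Any, Dict, List

# Only the handful of types used in the examples above are supported here.
_TYPES = {"string": str, "integer": int, "number": (int, float),
          "boolean": bool, "object": dict}


def validate(data: Dict[str, Any], schema: Dict[str, Any]) -> List[str]:
    """Check data against a small subset of JSON Schema; return error messages."""
    errors = []
    for name in schema.get("required", []):
        if name not in data:
            errors.append(f"{name}: required field is missing")
    for name, rules in schema.get("properties", {}).items():
        if name not in data:
            continue
        value = data[name]
        type_ok = isinstance(value, _TYPES[rules["type"]])
        # bool is a subclass of int in Python, so reject it for numeric types.
        if isinstance(value, bool) and rules["type"] != "boolean":
            type_ok = False
        if not type_ok:
            errors.append(f"{name}: expected {rules['type']}")
            continue
        if "enum" in rules and value not in rules["enum"]:
            errors.append(f"{name}: must be one of {rules['enum']}")
        if isinstance(value, str):
            if len(value) < rules.get("minLength", 0):
                errors.append(f"{name}: shorter than minLength")
            if "maxLength" in rules and len(value) > rules["maxLength"]:
                errors.append(f"{name}: longer than maxLength")
    return errors


input_schema = {
    "type": "object",
    "required": ["text", "length_preference"],
    "properties": {
        "text": {"type": "string", "minLength": 50, "maxLength": 10000},
        "length_preference": {"type": "string", "enum": ["short", "medium", "long"]},
    },
}

ok = validate({"text": "x" * 60, "length_preference": "short"}, input_schema)
bad = validate({"text": "too short", "length_preference": "tiny"}, input_schema)
```

Collecting every error instead of stopping at the first gives callers one complete report per request.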

3. Execution Configuration

This section specifies how the skill is actually executed, detailing the underlying AI model requirements and any specific parameters.

- `executionConfig`:
  - `type`: The type of execution engine (e.g., `llm-invocation`, `function-call`, `workflow-orchestration`).
  - `modelRequirements`: Defines the requirements for the LLM that will execute this skill. This is crucial for LLM routing and multi-model support.
    - `modelType`: General category of the model (e.g., `text-generation`, `embedding`, `chat`).
    - `capabilities`: A list of specific capabilities the model must possess (e.g., `["summarization", "english-only"]`).
    - `preferredProviders`: An optional list of preferred LLM providers (e.g., `["openai", "anthropic"]`).
    - `minTokens`: Minimum context window size required.
    - `maxLatency`: Maximum acceptable response time in milliseconds (for routing).
    - `maxCostPerToken`: Maximum cost per token allowed (for cost-optimized routing).
    - `modelNameHint`: A hint for a specific model name if known (e.g., `gpt-4-turbo`, `claude-3-opus`).
    - `parameters`: Any specific model parameters to be passed during invocation (e.g., `temperature`, `top_p`, `max_tokens`).
  - `fallbackModels`: A list of alternative model requirements or specific model names to use if the primary routing fails or returns an error. This enhances resilience.
  - `functionCall`: If the skill involves calling an external function or API after LLM invocation (e.g., saving data to a database).
  - `promptTemplate`: A template for constructing the prompt sent to the LLM, often using placeholders for `inputSchema` variables. Example:

```yaml
promptTemplate: |
  Please summarize the following text to a {{length_preference}} length.
  The summary should be presented in {{format}}.
  Text: {{text}}
  Summary:
```
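Placeholder substitution of this kind can be sketched with a single regular expression. The `render_prompt` helper below is hypothetical and assumes the `{{name}}` syntax shown above, with optional spaces inside the braces; how an actual runtime renders templates is not specified here.

```python
import re
from typing import Dict

# Matches {{name}} and {{ name }}; names are limited to word characters.
_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_prompt(template: str, values: Dict[str, str]) -> str:
    """Replace each {{name}} with values[name]; fail loudly on a missing value."""
    def substitute(match):
        key = match.group(1)
        if key not in values:
            raise KeyError(f"no value for placeholder {key!r}")
        return str(values[key])
    return _PLACEHOLDER.sub(substitute, template)


prompt = render_prompt(
    "Please summarize the following text to a {{length_preference}} length.\n"
    "Text: {{ text }}\nSummary:",
    {"length_preference": "short", "text": "LLMs are large neural networks."},
)
```

Raising on a missing value, instead of leaving the placeholder in the prompt, surfaces template and schema mismatches before any tokens are spent.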

4. Operational Parameters

These fields govern the operational aspects of the skill, important for deployment and system-wide management.

- `resourceLimits`: Defines computational resource constraints.
  - `cpu`: CPU allocation.
  - `memory`: Memory allocation.

- `rateLimits`: Specifies how frequently this skill can be invoked (e.g., 100 requests per minute). Prevents abuse and manages load.

- `errorHandling`: Defines strategies for handling errors during skill execution.
  - `retryPolicy`: How many times to retry on transient errors.
  - `timeout`: Maximum execution time before cancellation.
  - `onFailure`: Action to take on persistent failure (e.g., `notify-admin`, `log-error`).

- `securityContext`: Permissions and access controls required for the skill to operate.
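One common way to enforce a rate limit is a token bucket. The sketch below assumes two hypothetical settings, a sustained requests-per-minute rate and a burst size that caps how many requests can be served back to back; the manifest format does not say which algorithm a runtime actually uses. The clock is passed in explicitly so the behaviour is deterministic.

```python
class TokenBucket:
    """Token-bucket limiter: refills at a steady rate, capped at the burst size."""

    def __init__(self, requests_per_minute: int, burst: int, now: float = 0.0) -> None:
        self.rate = requests_per_minute / 60.0   # tokens added per second
        self.capacity = float(burst)
        self.tokens = float(burst)               # start with a full bucket
        self.last = now

    def allow(self, now: float) -> bool:
        # Refill according to elapsed time, never beyond capacity.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


bucket = TokenBucket(requests_per_minute=60, burst=2)
decisions = [bucket.allow(0.0), bucket.allow(0.0), bucket.allow(0.0), bucket.allow(1.0)]
```

With 60 requests per minute and a burst of 2, two immediate calls pass, the third is rejected, and one more is allowed a second later once a token has been refilled.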

Example OpenClaw Skill Manifest (Partial YAML)

Here's how these components might combine into a simplified manifest for our text summarization skill:

```yaml
# apiVersion: Defines the schema version for the manifest
apiVersion: openclaw.ai/v1alpha1
# kind: Specifies the resource type, in this case, a Skill
kind: Skill
# metadata: Contains descriptive and administrative information about the skill
metadata:
  name: text-summarizer-v1 # Unique name for the skill
  id: "a1b2c3d4-e5f6-7890-1234-567890abcdef" # Unique identifier
  version: "1.0.0" # Current version of the skill
  description: "Summarizes input text based on preferred length and format."
  author: "AI Solutions Team"
  tags:
    - summarization
    - nlp
    - content-generation
  createdAt: "2023-10-26T10:00:00Z" # Timestamp of creation
  updatedAt: "2023-10-26T14:30:00Z" # Timestamp of last update
  license: "Apache-2.0" # Licensing information
# inputSchema: Defines the expected structure and validation rules for input data
inputSchema:
  type: object
  required: ["text", "length_preference"]
  properties:
    text:
      type: string
      description: "The input text to be summarized."
      minLength: 50 # Minimum length of input text
      maxLength: 20000 # Maximum length of input text
    length_preference:
      type: string
      description: "Preferred length of the summary ('short', 'medium', 'long')."
      enum: ["short", "medium", "long"]
      default: "medium"
    format:
      type: string
      description: "Output format preference for the summary ('paragraph', 'bullet_points')."
      enum: ["paragraph", "bullet_points"]
      default: "paragraph"
  errorMessage: "Invalid input provided for text summarizer." # Custom error message for validation failure
# outputSchema: Defines the expected structure and validation rules for output data
outputSchema:
  type: object
  required: ["summary", "token_count", "model_used"]
  properties:
    summary:
      type: string
      description: "The generated summarized text."
      minLength: 10 # Minimum length of the summary
    token_count:
      type: integer
      description: "Number of tokens in the generated summary."
      minimum: 1
    model_used:
      type: string
      description: "The name of the LLM model used for summarization."
    quality_score:
      type: number
      description: "An optional subjective quality score for the summary (0-1)."
      minimum: 0
      maximum: 1
      nullable: true # Allows this field to be null if not provided by the model
# executionConfig: Details how the skill is executed, including LLM requirements
executionConfig:
  type: llm-invocation # Specifies that this skill involves invoking an LLM
  # promptTemplate: Defines how the prompt to the LLM should be constructed
  promptTemplate: |
    As an expert summarizer, your task is to condense the provided text.
    The summary should be concise, accurate, and capture the main points.
    Ensure the summary length is {{length_preference}}.
    Present the summary in {{format}}.
    Original Text:
    {{text}}
    Summarize:
  # modelRequirements: Specifies the criteria for selecting an LLM
  modelRequirements:
    modelType: text-generation # General category of the required model
    capabilities:
      - summarization # Specific capability needed
      - english-only # Language constraint
      - high-coherence # Quality preference
    preferredProviders: # Ordered list of preferred providers for LLM routing
      - openai
      - anthropic
    minTokens: 4096 # Minimum context window size required for the LLM
    maxLatency: 2000 # Maximum acceptable response time in milliseconds
    maxCostPerToken: 0.000002 # Maximum cost per token in USD (for cost-optimized routing)
    modelNameHint: "gpt-4-turbo" # A hint for a specific model, if available
    parameters: # Specific parameters to pass to the LLM API
      temperature: 0.7
      top_p: 0.9
      max_tokens: 500
  # fallbackModels: Defines alternative models/requirements in case the primary fails
  fallbackModels:
    - modelType: text-generation
      capabilities:
        - summarization
      preferredProviders:
        - google
      modelNameHint: "gemini-pro"
      maxLatency: 3000
      maxCostPerToken: 0.000001
      parameters:
        temperature: 0.8
        max_tokens: 600
# operationalParameters: Governs the operational aspects of the skill
operationalParameters:
  resourceLimits:
    cpu: "500m" # 0.5 CPU core
    memory: "512Mi" # 512 Megabytes of memory
  rateLimits:
    requestsPerMinute: 60 # Allow up to 60 requests per minute for this skill
    burst: 10 # Allow a burst of 10 requests above the limit momentarily
  timeout: 10000 # Maximum execution time for the skill in milliseconds (10 seconds)
  errorHandling:
    retryPolicy:
      maxRetries: 3 # Retry up to 3 times on transient errors
      initialDelayMs: 500 # Initial delay before first retry
      backoffFactor: 2 # Exponential backoff factor for retries
    onFailure: "log-and-notify" # Action to take on persistent failure
  securityContext:
    requiredRoles:
      - "skill-executor"
      - "data-processor"
    allowNetworkAccess: true
    dataAccessLevel: "restricted" # E.g., no access to PII
```
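The `retryPolicy` values in the manifest (`maxRetries`, `initialDelayMs`, `backoffFactor`) suggest exponential backoff. A minimal sketch of how a runtime might interpret them follows; the helper names are hypothetical, and for brevity it retries on any exception, whereas a real runtime would retry only on errors it classifies as transient.

```python
import time
from typing import Callable, List, TypeVar

T = TypeVar("T")


def backoff_delays_ms(max_retries: int, initial_delay_ms: int,
                      backoff_factor: float) -> List[float]:
    """Delay before each retry: initial, initial*factor, initial*factor**2, ..."""
    return [initial_delay_ms * backoff_factor ** i for i in range(max_retries)]


def call_with_retries(fn: Callable[[], T], max_retries: int = 3,
                      initial_delay_ms: int = 500, backoff_factor: float = 2,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn once, then retry up to max_retries more times with growing delays."""
    for delay in backoff_delays_ms(max_retries, initial_delay_ms, backoff_factor):
        try:
            return fn()
        except Exception:          # assumption: every error is treated as transient
            sleep(delay / 1000)    # time.sleep expects seconds
    return fn()  # final attempt; an exception here propagates to the caller


delays = backoff_delays_ms(max_retries=3, initial_delay_ms=500, backoff_factor=2)
```

With the manifest's values the waits are 500 ms, 1000 ms, and 2000 ms, so retries alone can add 3.5 seconds. That is worth comparing against the 10-second `timeout` when tuning either setting.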

This table summarizes the core components and their significance:

| Component | Description | Key Role | Impact on AI Architecture |
|---|---|---|---|
| `apiVersion` / `kind` | Schema version and resource type. | Standardize manifest structure. | Ensures compatibility and structured understanding by the OpenClaw platform. |
| `metadata` | Identification (name, ID, version), description, author, tags. | Discoverability, version control, human understanding. | Facilitates skill management, search, and collaboration across teams. |
| `inputSchema` | Defines expected input data structure and validation rules (e.g., JSON Schema). | Input validation, data integrity, clear API contract. | Prevents errors, enables automated data preprocessing, simplifies integration for callers. |
| `outputSchema` | Defines expected output data structure and validation rules. | Output validation, data consistency, clear API contract. | Ensures consuming applications receive predictable data, aids post-processing. |
| `executionConfig` | Type of execution, `modelRequirements`, `promptTemplate`, parameters. | LLM routing, multi-model support, dynamic prompt generation. | Enables intelligent model selection, allows switching models without code changes. |
| `modelRequirements` | Specifies LLM capabilities, providers, latency, cost constraints. | Drives LLM routing decisions, ensures model suitability. | Optimizes for cost, performance, and specific task accuracy; key for Unified LLM API utilization. |
| `fallbackModels` | Defines alternative models/requirements for resilience. | Enhances system resilience, ensures continuous operation. | Provides robust error handling, minimizes service disruptions. |
| `promptTemplate` | Template for constructing the LLM prompt. | Consistent and dynamic prompt generation. | Reduces boilerplate and keeps prompts consistent; a fixed structure can help limit prompt injection but does not prevent it on its own. |
| `operationalParameters` | Resource limits, rate limits, error handling, security context. | System stability, resource governance, security enforcement. | Manages system load, prevents abuse, ensures secure execution environment. |

Understanding each of these components is crucial for effectively designing, implementing, and deploying OpenClaw Skill Manifests. They provide the necessary granularity to manage complex AI behaviors in a structured and scalable manner.

Setting Up Your First OpenClaw Skill Manifest: A Step-by-Step Guide

Creating an OpenClaw Skill Manifest might seem daunting initially due to its comprehensive nature, but by following a structured approach, you can systematically define and deploy your AI skills. This section guides you through the process, from conceptualization to initial validation.

Step 1: Conceptualize Your Skill – Define Purpose and Scope

Before writing any code or YAML, clearly define what your AI skill will do. This foundational step ensures clarity and alignment with your application's needs.

- Identify the Problem/Need: What specific problem does this skill solve? (e.g., "Summarize long articles," "Answer customer FAQs," "Generate creative marketing copy").

- Define Core Functionality: What is the primary action the skill performs?

- Inputs: What information does the skill absolutely need to perform its function? What are their types and constraints? (e.g., raw text, a topic, a user query).

- Outputs: What information should the skill definitively return? What is its expected format? (e.g., summarized text, a generated answer, a piece of creative content).

- Dependencies: Does this skill rely on specific knowledge bases, external APIs, or particular LLM capabilities?

Example: Let's define a "Code Reviewer" skill.

- Purpose: Review Python code for common errors, style violations, and suggest improvements.

- Inputs: Python code (string), review type (e.g., "style", "security", "optimization").

- Outputs: Review comments (list of strings, each with line number and suggestion), overall assessment (string).

- Dependencies: An LLM capable of understanding and generating code, potentially a linter tool.

Step 2: Define Input and Output Schemas

Based on your conceptualization, translate your input and output requirements into precise schemas, ideally using JSON Schema standards for robust validation. This forms the contract of your skill.

- For each input parameter: Specify `type` (string, integer, boolean, object, array), `description`, `required` status, and any validation rules (`minLength`, `maxLength`, `pattern`, `enum`, `minimum`, `maximum`).

- For each output parameter: Similarly, define `type`, `description`, `required` status, and relevant validation.

- Consider edge cases: What if inputs are missing or malformed? What are the possible error outputs?

Example (Code Reviewer Input Schema Snippet):

```yaml
inputSchema:
  type: object
  required: ["code", "review_type"]
  properties:
    code:
      type: string
      description: "The Python code snippet to be reviewed."
      minLength: 10
      maxLength: 10000 # Assume max 10,000 characters for a snippet
    review_type:
      type: string
      description: "Type of review requested (style, security, optimization)."
      enum: ["style", "security", "optimization", "general"]
      default: "general"
    file_path:
      type: string
      description: "Optional: Original file path for context."
      nullable: true
```
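One subtlety in this snippet: `review_type` is both `required` and given a `default`. In JSON Schema, `default` is only an annotation, so a standard validator will not fill it in and would reject a request that omits the field. If the default is meant to take effect, the runtime has to apply it before checking `required`. The `apply_defaults` helper below is a hypothetical sketch of that step, not a documented OpenClaw behaviour.

```python
from typing import Any, Dict


def apply_defaults(data: Dict[str, Any], schema: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of data with schema defaults filled in for absent properties."""
    merged = dict(data)
    for name, rules in schema.get("properties", {}).items():
        if name not in merged and "default" in rules:
            merged[name] = rules["default"]
    return merged


code_review_schema = {
    "type": "object",
    "required": ["code", "review_type"],
    "properties": {
        "code": {"type": "string", "minLength": 10, "maxLength": 10000},
        "review_type": {
            "type": "string",
            "enum": ["style", "security", "optimization", "general"],
            "default": "general",
        },
        "file_path": {"type": "string"},
    },
}

# The caller omits review_type; the default is applied before the required check.
request = apply_defaults({"code": "print('hello world')"}, code_review_schema)
missing = [name for name in code_review_schema["required"] if name not in request]
```

If defaults are not applied by the runtime, the simpler fix is to drop `review_type` from `required`.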

Step 3: Craft the Prompt Template

The prompt template is the instruction set your skill will send to the underlying LLM. It's crucial for guiding the LLM's behavior and ensuring consistent, high-quality output.

- Be Clear and Specific: Clearly state the task, desired format, and constraints.

- Use Placeholders: Incorporate variables from your `inputSchema` using a templating syntax (e.g., `{{variable_name}}`).

- Provide Context: Give the LLM necessary background information.

- Specify Output Format: Instruct the LLM on how to structure its response, aligning with your `outputSchema`.

Example (Code Reviewer Prompt Template Snippet):

````yaml
promptTemplate: |
  You are an expert Python code reviewer. Your task is to review the provided Python code
  for {{review_type}} issues and suggest improvements.
  Provide your feedback as a list of clear, actionable comments. Each comment should
  include the approximate line number(s) if applicable, and a concise explanation
  of the issue and suggested fix.
  Review Focus: {{review_type}}
  Python Code:
  ```python
  {{code}}
  ```
  Review Comments:
````

Step 4: Define Model Requirements for LLM Routing

This is where you specify the characteristics of the LLM needed, enabling the OpenClaw platform (or a Unified LLM API like XRoute.AI) to perform intelligent LLM routing and leverage multi-model support.

- `modelType`: What kind of model is required (e.g., `text-generation`, `code-generation`)?

- `capabilities`: List specific features (e.g., `["python-coding", "error-detection"]`).

- `preferredProviders`: Which providers do you prefer, in order of preference? This aids LLM routing in prioritizing.

- `minTokens`: Minimum context window size.

- `maxLatency`, `maxCostPerToken`: Set these for performance and cost optimization during routing.

- `parameters`: Any specific API parameters for the chosen LLM (e.g., `temperature`, `max_tokens`).

- `fallbackModels`: Crucial for resilience. Define a backup set of `modelRequirements` in case the primary routing fails.

Example (Code Reviewer Model Requirements Snippet):

modelRequirements:
  modelType: code-generation
  capabilities:
    - python-coding
    - error-detection
    - code-optimization-suggestions
  preferredProviders:
    - openai
    - google
  minTokens: 8192 # Larger context window needed for code
  maxLatency: 5000 # Up to 5 seconds for complex code review
  maxCostPerToken: 0.00001
  modelNameHint: "gpt-4o"
  parameters:
    temperature: 0.2 # Lower temperature for factual/precise output
    top_p: 0.8
    max_tokens: 2000 # Max output tokens for review comments
  fallbackModels:
    - modelType: code-generation
      capabilities:
        - python-coding
      preferredProviders:
        - anthropic
      modelNameHint: "claude-3-haiku" # A faster, potentially cheaper alternative
      maxLatency: 3000
      maxCostPerToken: 0.000005
      parameters:
        temperature: 0.3
        max_tokens: 1500
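
The following sketch shows one way a router could interpret these requirements: filter a model catalog by the hard constraints, rank the survivors by provider preference and then cost, and move on to each fallbackModels entry when nothing matches. The ModelInfo class, the catalog entries, and every number in them are invented for illustration; this is not the actual routing algorithm of OpenClaw or XRoute.AI.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelInfo:
    # Hypothetical catalog record; field names are assumptions.
    name: str
    provider: str
    model_type: str
    capabilities: frozenset
    context_tokens: int
    latency_ms: int
    cost_per_token: float


def matches(model: ModelInfo, req: dict) -> bool:
    """True when the model satisfies every hard constraint in req."""
    return (
        model.model_type == req["modelType"]
        and set(req.get("capabilities", [])) <= model.capabilities
        and model.context_tokens >= req.get("minTokens", 0)
        and model.latency_ms <= req.get("maxLatency", float("inf"))
        and model.cost_per_token <= req.get("maxCostPerToken", float("inf"))
    )


def provider_rank(model: ModelInfo, req: dict) -> int:
    """Position in preferredProviders; unlisted providers sort last."""
    preferred = req.get("preferredProviders", [])
    return preferred.index(model.provider) if model.provider in preferred else len(preferred)


def route(catalog: list, requirements: dict) -> Optional[ModelInfo]:
    """Try the primary requirements first, then each fallback in order."""
    for req in [requirements, *requirements.get("fallbackModels", [])]:
        candidates = [m for m in catalog if matches(m, req)]
        if candidates:
            return min(candidates, key=lambda m: (provider_rank(m, req), m.cost_per_token))
    return None


requirements = {
    "modelType": "code-generation",
    "capabilities": ["python-coding", "error-detection"],
    "preferredProviders": ["openai", "google"],
    "minTokens": 8192,
    "maxLatency": 5000,
    "maxCostPerToken": 0.00001,
    "fallbackModels": [{
        "modelType": "code-generation",
        "capabilities": ["python-coding"],
        "preferredProviders": ["anthropic"],
        "maxLatency": 3000,
        "maxCostPerToken": 0.000005,
    }],
}

# Made-up catalog: the first model is too expensive for the primary requirements.
catalog = [
    ModelInfo("big-coder", "openai", "code-generation",
              frozenset({"python-coding", "error-detection"}), 128000, 4000, 0.00003),
    ModelInfo("small-coder", "anthropic", "code-generation",
              frozenset({"python-coding"}), 32000, 2000, 0.000004),
]

chosen = route(catalog, requirements)
print(chosen.name if chosen else "no model satisfies the manifest")
```

In this example the primary requirements match nothing, so the router settles on the fallback entry, which is the resilience behavior fallbackModels is meant to provide.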

Step 5: Complete Metadata and Operational Parameters

Fill in the remaining metadata (name, version, description) and operationalParameters (rate limits, timeouts, error handling).

- metadata.name: Choose a clear, descriptive, and unique name (e.g., code-reviewer-python-v1).

- metadata.version: Start with 1.0.0 or 0.1.0 for initial development.

- rateLimits: Consider how often this skill might be called. A code reviewer might be less frequent than a chatbot.

- timeout: Estimate a reasonable execution time. Code review can take longer.

- errorHandling: Define retry logic and what happens on failure.
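
As a rough illustration of these fields, the sketch below represents the metadata and operationalParameters sections as a Python dict and runs a few sanity checks on them. The nested key names (requestsPerMinute, maxRetries, onFailure), the millisecond timeout unit, and the naming pattern are assumptions made for this example rather than confirmed parts of the manifest format.

```python
import re

SEMVER = re.compile(r"^\d+\.\d+\.\d+$")          # plain MAJOR.MINOR.PATCH only
NAME = re.compile(r"^[a-z0-9]+(-[a-z0-9]+)*$")   # lowercase words joined by hyphens


def check_manifest_basics(manifest: dict) -> list:
    """Return a list of human-readable problems; an empty list means no findings."""
    problems = []
    meta = manifest.get("metadata", {})
    ops = manifest.get("operationalParameters", {})
    if not NAME.match(meta.get("name", "")):
        problems.append("metadata.name should be lowercase words joined by hyphens")
    if not SEMVER.match(meta.get("version", "")):
        problems.append("metadata.version should look like MAJOR.MINOR.PATCH")
    if not meta.get("description"):
        problems.append("metadata.description is empty")
    if ops.get("timeout", 0) <= 0:
        problems.append("operationalParameters.timeout must be positive")
    if ops.get("errorHandling", {}).get("maxRetries", 0) < 0:
        problems.append("errorHandling.maxRetries cannot be negative")
    return problems


manifest = {
    "metadata": {
        "name": "code-reviewer-python-v1",
        "version": "0.1.0",
        "description": "Reviews Python code and returns actionable comments.",
    },
    "operationalParameters": {
        "rateLimits": {"requestsPerMinute": 30},   # hypothetical key
        "timeout": 60000,                          # assumed to be milliseconds
        "errorHandling": {"maxRetries": 2, "onFailure": "return-error"},
    },
}

print(check_manifest_basics(manifest))
```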

Step 6: Validate Your Manifest

Once drafted, validate your YAML/JSON manifest against the OpenClaw schema definition (if available) and manually review it.

- Schema Validation: Use a linter or a schema validator tool to ensure structural correctness.

- Logic Review: Read through the manifest. Does it accurately reflect your skill's intent? Are all placeholders in the prompt template correctly mapped to inputSchema fields?

- Test with Mock Data: Imagine calling your skill with various inputs. Would the manifest guide the LLM to produce the desired output?
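
The placeholder check from the logic review is easy to automate. This is a minimal sketch, assuming placeholders use the {{name}} syntax shown earlier and that inputSchema follows the usual JSON Schema layout with properties and required; the helper name placeholder_mismatches is made up for the example.

```python
import re


def placeholder_mismatches(prompt_template: str, input_schema: dict) -> dict:
    """Compare {{placeholders}} in the template with the fields declared in inputSchema."""
    used = set(re.findall(r"\{\{\s*(\w+)\s*\}\}", prompt_template))
    declared = set(input_schema.get("properties", {}))
    required = set(input_schema.get("required", []))
    return {
        # Placeholders the schema never declares: rendering would fail or leak braces.
        "undeclared": sorted(used - declared),
        # Required inputs the prompt never uses: callers must send data that is ignored.
        "unused_required": sorted(required - used),
    }


input_schema = {
    "type": "object",
    "required": ["code"],
    "properties": {
        "code": {"type": "string"},
        "review_type": {"type": "string"},
        "file_path": {"type": "string"},
    },
}
template = "Review this {{language}} code for {{review_type}} issues:\n{{code}}"

report = placeholder_mismatches(template, input_schema)
print(report)
```

Here the report flags language as undeclared, which is exactly the kind of drift between prompt and schema that manual review tends to miss.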

Initial Setup Checklist:

| Stage | Action Item | Importance | Notes |
|---|---|---|---|
| Conceptualization | Define clear purpose, inputs, and outputs. | High | Avoid scope creep. Be specific. |
| Schema Definition | Create detailed inputSchema and outputSchema (JSON Schema). | Critical | Ensures data integrity, enables automated validation. |
| Prompt Engineering | Develop a precise promptTemplate with placeholders. | High | Guides LLM behavior, ensures consistent output format. |
| Model Selection | Specify modelRequirements for LLM routing and multi-model support. | High | Optimizes cost/performance, leverages specialized models. |
| Operational Specs | Set rateLimits, timeout, errorHandling. | Medium | Manages system load, enhances resilience. |
| Validation | Schema validation and logical review. | Critical | Catches errors early, ensures correctness before deployment. |

By following these steps, you lay a solid foundation for your OpenClaw skill, ensuring it is well-defined, robust, and ready for integration into your AI-powered applications. Remember, clarity and precision in your manifest directly translate to the reliability and effectiveness of your AI skill.


Best Practices for Crafting Effective OpenClaw Skill Manifests

Creating a functional OpenClaw Skill Manifest is one thing; crafting an effective one that promotes maintainability, scalability, and optimal performance is another. These best practices will guide you in building robust and future-proof manifests.

1. Modularity and Reusability: Small Skills, Big Impact

Design your skills to be granular and focused on a single, well-defined task. Avoid creating "monolithic" skills that try to do too much.

- Single Responsibility Principle: Each skill should have one primary reason to change. A "generate marketing copy" skill is better than a "create entire campaign" skill.

- Compose Skills: Instead of one large skill, build a workflow by chaining multiple smaller skills. For example, a "Customer Onboarding" workflow might combine:

  - extract-user-info skill
  - personalize-welcome-message skill
  - schedule-follow-up skill

- Benefits: Easier to test, debug, maintain, and reuse individual components across different applications. Improves multi-model support by allowing each micro-skill to use the most specialized LLM.
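
The composition idea can be sketched in a few lines. Each skill below is a plain function standing in for an LLM-backed skill, and the chain helper is a hypothetical orchestration primitive written for this example, not an OpenClaw API.

```python
from functools import reduce
from typing import Callable

Skill = Callable[[dict], dict]


def chain(*skills: Skill) -> Skill:
    """Run skills in order, merging each skill's output into the shared payload."""
    def run(payload: dict) -> dict:
        return reduce(lambda data, skill: {**data, **skill(data)}, skills, payload)
    return run


# Stubs standing in for real skills; a real version would invoke a model.
def extract_user_info(data: dict) -> dict:
    name, _, domain = data["email"].partition("@")
    return {"name": name.capitalize(), "domain": domain}


def personalize_welcome_message(data: dict) -> dict:
    return {"message": f"Welcome, {data['name']}!"}


def schedule_follow_up(data: dict) -> dict:
    return {"follow_up_in_days": 3}


onboard = chain(extract_user_info, personalize_welcome_message, schedule_follow_up)
result = onboard({"email": "ada@example.com"})
print(result)
```

Because every step has the same dict-in, dict-out shape, each one can be tested alone and reused in other workflows.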

2. Clear and Comprehensive Documentation within the Manifest

The manifest itself should serve as a primary source of documentation.

- Descriptive name and description: These fields are critical for human understanding and discoverability. Be explicit about what the skill does and what problem it solves.

- Detailed inputSchema and outputSchema descriptions: Each property in your schemas should have a clear description explaining its purpose, expected values, and any nuances.

- Comments: Use YAML comments to explain complex sections, design decisions, or specific modelRequirements choices. Standard JSON has no comment syntax, so JSON manifests should carry such notes in description fields instead.

- Example Usage: Consider adding an examples field within the manifest (or external documentation linked from metadata) showing typical input and expected output.

3. Robust Input/Output Validation

Leverage the power of JSON Schema within your inputSchema and outputSchema to ensure data quality and prevent errors.

- Mandatory Fields: Clearly mark required fields.

- Type Checking: Always specify type (string, integer, boolean, array, object).

- Format and Patterns: Use format (e.g., email, uri) and pattern (regex) for strings.

- Range Constraints: Apply minLength and maxLength for strings, minItems and maxItems for arrays, and minimum and maximum for numbers.

- Enumerations: Use enum for fields with a fixed set of allowed values.

- Custom Error Messages: Where your validator supports it, provide errorMessage fields within your schema definitions for more user-friendly validation failures. Note that errorMessage is a validator extension (for example, the ajv-errors plugin), not a core JSON Schema keyword.

Robust validation prevents invalid data from reaching your LLM, which can lead to unpredictable responses, higher costs, and security vulnerabilities. It's a critical aspect of building reliable AI systems, especially when working with a Unified LLM API.
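
To show what these keywords do at runtime, here is a deliberately small validator covering only type, required, properties, enum, minLength, and maxLength. It is a teaching sketch that handles a tiny subset of JSON Schema; a production system should rely on a full validator library. The example enum values are illustrative.

```python
# Maps JSON Schema type names to Python types for this limited sketch.
TYPES = {"string": str, "integer": int, "boolean": bool, "array": list, "object": dict}


def validate(value, schema: dict, path: str = "$") -> list:
    """Return a list of error strings for the supported subset of JSON Schema."""
    errors = []
    expected = schema.get("type")
    if expected:
        wrong_type = not isinstance(value, TYPES[expected])
        # bool is a subclass of int in Python, so reject it explicitly for "integer".
        if wrong_type or (expected == "integer" and isinstance(value, bool)):
            return [f"{path}: expected {expected}"]
    if "enum" in schema and value not in schema["enum"]:
        errors.append(f"{path}: must be one of {schema['enum']}")
    if isinstance(value, str):
        if len(value) < schema.get("minLength", 0):
            errors.append(f"{path}: shorter than minLength {schema['minLength']}")
        if "maxLength" in schema and len(value) > schema["maxLength"]:
            errors.append(f"{path}: longer than maxLength {schema['maxLength']}")
    if isinstance(value, dict):
        for name in schema.get("required", []):
            if name not in value:
                errors.append(f"{path}.{name}: required field is missing")
        for name, sub_schema in schema.get("properties", {}).items():
            if name in value:
                errors.extend(validate(value[name], sub_schema, f"{path}.{name}"))
    return errors


schema = {
    "type": "object",
    "required": ["code", "review_type"],
    "properties": {
        "code": {"type": "string", "minLength": 1},
        "review_type": {"type": "string", "enum": ["security", "performance", "style"]},
    },
}

errors = validate({"code": "", "review_type": "spelling"}, schema)
for error in errors:
    print(error)
```

Rejecting this input before the model is called saves a paid request that could only have produced a meaningless review.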

4. Version Control for Skills

Treat your skill manifests like source code.

- Git Integration: Store all manifests in a version control system (e.g., Git).

- Semantic Versioning: Use semantic versioning (e.g., 1.0.0, 2.1.0) for your skill's metadata.version.
  - MAJOR for breaking changes (e.g., changes to inputSchema/outputSchema that require consumers to update).
  - MINOR for new features or non-breaking additions.
  - PATCH for bug fixes or minor adjustments.

- Changelogs: Maintain a changelog for each skill, detailing changes between versions.

- Benefits: Enables tracking evolution, rollbacks, collaboration, and clear communication about breaking changes.
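
A small helper can make version bumps explicit in a release script or CI check. This sketch assumes plain MAJOR.MINOR.PATCH strings without pre-release or build suffixes; the function names are invented for the example.

```python
def parse_version(version: str) -> tuple:
    """Split 'MAJOR.MINOR.PATCH' into a tuple of three integers."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def bump_kind(old: str, new: str) -> str:
    """Classify the change between two versions as MAJOR, MINOR, or PATCH."""
    old_parts, new_parts = parse_version(old), parse_version(new)
    if new_parts <= old_parts:
        raise ValueError(f"{new} is not newer than {old}")
    if new_parts[0] != old_parts[0]:
        return "MAJOR"   # consumers may need to change how they call the skill
    if new_parts[1] != old_parts[1]:
        return "MINOR"   # new, backward-compatible behavior
    return "PATCH"       # fixes only


for old, new in [("1.4.2", "2.0.0"), ("1.4.2", "1.5.0"), ("1.4.2", "1.4.3")]:
    print(old, "->", new, bump_kind(old, new))
```

A check like this pairs well with a schema diff: if inputSchema lost a field but the bump is only PATCH, the release can be blocked.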

5. Smart LLM Routing Strategy and Fallbacks

Intelligent LLM routing is a cornerstone of cost-effective and high-performance AI.

- Specificity in modelRequirements: Don't just ask for "any LLM." Specify modelType, capabilities, minTokens, maxLatency, maxCostPerToken, and preferredProviders.

- Prioritize Cost vs. Performance: For latency-sensitive applications, maxLatency should be strict. For batch processing, maxCostPerToken might be prioritized.

- Leverage Multi-model support: If a sub-task within your skill could be handled by a cheaper, specialized model, consider refactoring into a smaller skill or explicitly defining model requirements for that sub-task.

- Define fallbackModels: Always specify fallback options. This is crucial for resilience. If the primary model or provider is unavailable or fails, the system can automatically switch to an alternative, preventing service interruptions.

- Monitor Routing Effectiveness: Track which models are being used, their costs, latency, and success rates to continually optimize your routing strategy.

Platforms like XRoute.AI thrive on well-defined modelRequirements to execute optimal LLM routing decisions, ensuring you get the best performance for your budget.

6. Security Considerations

Embed security best practices directly into your manifest and skill design.

- Least Privilege: Define securityContext to grant only the necessary permissions to the skill.

- Input Sanitization: While inputSchema validates structure, the underlying skill logic should sanitize and escape inputs before passing them to the LLM to reduce the risk of prompt injection and related vulnerabilities.

- Output Validation: Validate output against outputSchema to catch potentially malicious or malformed responses from the LLM.

- Data Handling: Document how the skill handles sensitive data (dataAccessLevel). Does it store data? Does it redact PII?
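
As one narrow example of the data handling point, the sketch below masks email addresses before text is sent to a model. The regular expression is a simplification chosen for illustration; real PII redaction needs to cover many more categories (names, phone numbers, account identifiers) and is usually handled by dedicated tooling.

```python
import re

# Simplified email pattern; it will miss unusual but valid addresses.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a fixed marker."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


ticket = "Customer ada@example.com asked us to also notify bob@test.org."
safe_ticket = redact_emails(ticket)
print(safe_ticket)
```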

7. Performance Optimization

Beyond LLM routing, other aspects of the manifest contribute to performance.

- Efficient Prompting: Keep prompts concise yet clear. Avoid unnecessarily long or complex prompts, which consume more tokens and increase latency.

- Appropriate max_tokens: Set max_tokens in modelRequirements.parameters to a realistic maximum for the expected output. Requesting too many tokens wastes resources; too few can truncate responses.

- Rate Limits: Configure rateLimits to prevent overloading the system or exceeding provider limits, which can lead to throttling and degraded performance.

- Timeouts: Set realistic timeout values in operationalParameters. A skill that times out gracefully is better than one that hangs indefinitely.
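
For a sense of how a rateLimits setting might be enforced, here is a minimal sliding-window limiter. The class name and the injectable clock are choices made for this sketch (the clock makes the behavior easy to test); how an actual runtime enforces limits is not specified in this article.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most max_calls within any window_seconds interval."""

    def __init__(self, max_calls: int, window_seconds: float, clock=time.monotonic):
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock
        self.calls = deque()   # timestamps of accepted calls, oldest first

    def allow(self) -> bool:
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.calls and now - self.calls[0] >= self.window:
            self.calls.popleft()
        if len(self.calls) < self.max_calls:
            self.calls.append(now)
            return True
        return False


# Drive the limiter with a fake clock so the example is deterministic.
fake_now = [0.0]
limiter = SlidingWindowLimiter(max_calls=2, window_seconds=60, clock=lambda: fake_now[0])
decisions = [limiter.allow() for _ in range(3)]   # third call exceeds the limit
fake_now[0] = 60.0                                # one full window later
decisions.append(limiter.allow())
print(decisions)
```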

By diligently applying these best practices, you can create OpenClaw Skill Manifests that are not only functional but also maintainable, scalable, cost-effective, and resilient, serving as robust building blocks for sophisticated AI applications powered by Unified LLM APIs and intelligent multi-model support.

Advanced Tips for OpenClaw Skill Development

Moving beyond the basics, these advanced tips can help you unlock even greater power, flexibility, and efficiency in your OpenClaw skill development.

1. Dynamic Manifests and Configuration Management

While static YAML/JSON manifests are excellent for declarative definitions, some scenarios require more dynamism.

- Templating for Deployment: For similar skills deployed in different environments (e.g., staging vs. production), use templating engines (like Jinja2 for YAML) to generate manifests dynamically. This allows environment-specific variables (like different modelNameHint or rateLimits) to be injected.

- External Configuration: Instead of embedding all configuration directly, reference external configuration sources (e.g., a Feature Flag service, a dedicated configuration store) in your manifest metadata. The OpenClaw runtime can then fetch additional parameters at runtime. This allows for live updates to skill behavior without redeploying the manifest.

- Version Control for Config: Ensure that dynamic templates and external configurations are also under strict version control.
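
The templating approach can be illustrated with Python's standard-library string.Template, used here as a dependency-free stand-in for Jinja2. The environment values and the requestsPerMinute key are assumptions for the example.

```python
from string import Template

# One manifest skeleton shared by every environment.
MANIFEST_TEMPLATE = Template("""\
metadata:
  name: code-reviewer-python-v1
  version: $version
modelRequirements:
  modelNameHint: "$model_hint"
operationalParameters:
  rateLimits:
    requestsPerMinute: $requests_per_minute
""")

# Environment-specific values; in practice these would live in version control too.
ENVIRONMENTS = {
    "staging": {"model_hint": "claude-3-haiku", "requests_per_minute": 10},
    "production": {"model_hint": "gpt-4o", "requests_per_minute": 120},
}


def render_manifest(environment: str, version: str) -> str:
    """Fill the skeleton; substitute() raises KeyError if a variable is missing."""
    return MANIFEST_TEMPLATE.substitute(version=version, **ENVIRONMENTS[environment])


print(render_manifest("staging", "1.2.0"))
```

Using substitute rather than safe_substitute means a forgotten variable stops the build instead of shipping a manifest with a literal $placeholder in it.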

2. Leveraging Multi-model Support for Nuanced Tasks

True multi-model support extends beyond simply picking the "best" model; it involves strategically combining models.

- Task Chaining with Different Models: Design complex skills as a sequence of smaller, specialized steps, where each step explicitly uses a different model through a Unified LLM API.

  - Example: A "Research Assistant" skill might:
    - Use a fast, cheap model to extract keywords from a query.
    - Pass keywords to a vector database for retrieval-augmented generation (RAG).
    - Send retrieved context and original query to a powerful, accurate model for comprehensive answer generation.
    - Finally, use a smaller, faster model for summarization or rephrasing for conciseness.

- Parallel Execution with Ensemble Methods: For critical decisions or diverse perspectives, send the same input to multiple models concurrently via a Unified LLM API, then use a separate "aggregation" or "voting" mechanism (which could be another skill or a simple function) to synthesize the results.

- Conditional Model Selection: Integrate logic within your skill (or at the LLM routing layer) to dynamically choose models based on input characteristics or runtime conditions. For instance, if input text is over 5000 tokens, use a model with a larger context window; otherwise, use a cheaper, smaller model.
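
The aggregation step of the ensemble approach can be as simple as a majority vote. In this sketch the answers list stands in for responses already collected from several models; the normalization (trim and lowercase) is an assumption that suits short classification labels, not free-form text.

```python
from collections import Counter


def majority_vote(answers: list) -> tuple:
    """Return the most common normalized answer and its share of the votes."""
    normalized = [answer.strip().lower() for answer in answers]
    winner, votes = Counter(normalized).most_common(1)[0]
    return winner, votes / len(normalized)


# Pretend three different models classified the same customer message.
answers = ["Positive", "positive ", "negative"]
label, agreement = majority_vote(answers)
print(label, round(agreement, 2))
```

The agreement share is useful on its own: a low value can trigger escalation to a stronger model or a human reviewer instead of trusting a split decision.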

3. Optimizing LLM Routing for Cost and Latency at Scale

Efficient LLM routing becomes critically important as usage scales, directly impacting operational costs and user experience.

- Real-time Performance Monitoring: Integrate monitoring tools that track the actual latency, error rates, and costs of different LLM providers and models. Use this data to inform and adapt your routing logic.

- Cost-Aware vs. Latency-Aware Toggles: Implement "mode" switches for your application or skill consumers. For example, a priority: low tag in the input could trigger cost-optimized routing, while priority: high triggers latency-optimized routing.

- Caching Layer: For frequently asked questions or predictable inputs, implement a caching layer before the Unified LLM API. This can drastically reduce LLM invocations, saving cost and reducing latency.

- Rate Limit Negotiation: Proactively monitor and potentially adjust rateLimits in your manifests based on anticipated traffic patterns and provider agreements.

- Load Shedding and Graceful Degradation: Define strategies in your errorHandling to gracefully degrade service. If all preferred models are overloaded, perhaps fall back to a less accurate but guaranteed-to-respond model, or return a polite error message.
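
A caching layer of the kind described above can be sketched as a thin wrapper around whatever function calls the model. ResponseCache and fake_model are hypothetical names; the sketch has no expiry or size limit and only makes sense for inputs whose answers are expected to stay the same.

```python
import hashlib
import json


class ResponseCache:
    """Memoize model responses keyed on the skill name and its exact inputs."""

    def __init__(self, call_model):
        self.call_model = call_model   # function (skill, inputs) -> response
        self.store = {}
        self.hits = 0

    def _key(self, skill: str, inputs: dict) -> str:
        # sort_keys makes the key independent of dict insertion order.
        canonical = json.dumps({"skill": skill, "inputs": inputs}, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def invoke(self, skill: str, inputs: dict):
        key = self._key(skill, inputs)
        if key in self.store:
            self.hits += 1
            return self.store[key]
        result = self.call_model(skill, inputs)
        self.store[key] = result
        return result


calls = []

def fake_model(skill: str, inputs: dict) -> str:
    """Stand-in for a real API call; records how often it is invoked."""
    calls.append(skill)
    return f"answer for {inputs['question']}"


cache = ResponseCache(fake_model)
first = cache.invoke("faq-answerer", {"question": "What is the refund policy?"})
second = cache.invoke("faq-answerer", {"question": "What is the refund policy?"})
print(len(calls), cache.hits)
```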

Platforms like XRoute.AI are designed to offer these routing capabilities, emphasizing low latency AI and cost-effective AI through their intelligent orchestration layer. By clearly defining your modelRequirements and fallbackModels in the OpenClaw manifest, you empower such platforms to make the most optimal decisions.

4. Integrating with External Tools and APIs

OpenClaw skills don't have to be purely LLM-driven. They can act as orchestrators.

- Function Calling Integration: Many modern LLMs support function calling. Your executionConfig can specify that after an LLM response, a specific external function should be invoked (e.g., to query a database, send an email, update a CRM). The LLM's role would be to parse the user's intent and generate the parameters for that function.

- Pre- and Post-Processing: Integrate external tools for data preparation (e.g., document parsing, OCR) before passing to the LLM, or for processing LLM output (e.g., sentiment analysis, validation against business rules). Your manifest can declare these external dependencies.

- Webhooks for Asynchronous Tasks: For long-running tasks, the skill can initiate an external process and then use a webhook to receive results asynchronously, updating the status or triggering subsequent actions.
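
The function calling pattern boils down to parsing a structured model response and dispatching it to an allow-listed function. The JSON shape used here ("function" plus "arguments") and the check_order_status stub are assumptions for illustration; real providers each define their own function-call response format.

```python
import json


def check_order_status(order_id: str) -> dict:
    """Stub for an external API call; always reports the order as shipped."""
    return {"order_id": order_id, "status": "shipped"}


# Only functions listed here can ever be invoked on the model's request.
REGISTRY = {"check_order_status": check_order_status}


def dispatch(llm_output: str) -> dict:
    """Parse the model's JSON function call and run the matching function."""
    call = json.loads(llm_output)
    name = call.get("function")
    if name not in REGISTRY:
        raise ValueError(f"model requested unknown function: {name!r}")
    return REGISTRY[name](**call.get("arguments", {}))


llm_output = '{"function": "check_order_status", "arguments": {"order_id": "A-17"}}'
status = dispatch(llm_output)
print(status)
```

Keeping an explicit registry, rather than looking functions up dynamically, limits what a manipulated model response can trigger.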

5. Advanced Error Handling and Observability

Robustness is key for production-ready AI applications.

- Granular errorHandling: Beyond simple retries, define specific actions for different types of errors (e.g., model_unavailable, rate_limit_exceeded, input_validation_failure).

- Semantic Error Codes: Ensure your skill outputs clear, standardized error codes and messages that consuming applications can interpret.

- Comprehensive Logging: The OpenClaw runtime should log all skill invocations, including inputs, outputs, chosen LLM, routing decisions, latency, and any errors. This data is invaluable for debugging, performance analysis, and security auditing.

- Metrics and Monitoring: Expose metrics for skill usage (invocations per minute), success rates, average latency, and cost per invocation. Integrate these with your existing monitoring dashboards.
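
Granular error handling can be expressed as a small policy table that maps error codes to actions. The sketch below uses the three error codes named above; the SkillError class, the action names, and the exponential backoff settings are assumptions made for this example.

```python
import time


class SkillError(Exception):
    """Error raised by a skill invocation, carrying a semantic error code."""

    def __init__(self, code: str):
        super().__init__(code)
        self.code = code


# Hypothetical policy: which action to take for each error code.
POLICY = {
    "model_unavailable": "fallback",
    "rate_limit_exceeded": "retry",
    "input_validation_failure": "fail",
}


def invoke_with_policy(primary, fallback, inputs, max_retries=3,
                       base_delay=0.5, sleep=time.sleep):
    """Call primary; retry, fall back, or re-raise depending on the error code."""
    attempt = 0
    while True:
        try:
            return primary(inputs)
        except SkillError as err:
            action = POLICY.get(err.code, "fail")
            if action == "fallback":
                return fallback(inputs)
            if action == "retry" and attempt < max_retries:
                sleep(base_delay * 2 ** attempt)   # exponential backoff
                attempt += 1
                continue
            raise   # "fail", unknown codes, or retries exhausted


attempts = {"count": 0}

def flaky_primary(inputs):
    """Fails twice with a rate-limit error, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise SkillError("rate_limit_exceeded")
    return {"model": "primary", "echo": inputs}


delays = []   # record backoff delays instead of actually sleeping
result = invoke_with_policy(flaky_primary, lambda i: {"model": "fallback"},
                            {"code": "x = 1"}, sleep=delays.append)
print(result["model"], delays)
```

Retrying an input_validation_failure would only repeat the same failure at extra cost, which is why the policy sends it straight back to the caller.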

By embracing these advanced tips, you can transform your OpenClaw Skill Manifests from simple configuration files into sophisticated blueprints for highly intelligent, robust, and economically efficient AI systems.

Real-World Scenarios: Applying OpenClaw Skills

The declarative power of OpenClaw Skill Manifests, combined with a Unified LLM API, multi-model support, and intelligent LLM routing, opens up a vast array of real-world applications. Let's explore a few illustrative scenarios.

Scenario 1: Intelligent Customer Service Chatbot

Imagine a customer service chatbot that needs to handle diverse queries, from simple FAQs to complex troubleshooting.

- Skills Involved:
  - greeting-skill: Initiates conversation, identifies user intent.
  - faq-answerer: Uses an LLM with strong factual recall for common questions.
  - troubleshooting-guide: For complex issues, might use a specialized LLM for diagnostic steps, potentially integrating with an internal knowledge base.
  - sentiment-analyzer: Detects user frustration to escalate to a human agent if needed.
  - order-status-checker: Calls an external API to retrieve order details, then summarizes the info using an LLM.
  - product-recommender: Suggests products based on user preferences, using an LLM for creative suggestions.

- OpenClaw Manifest Impact:
  - Each skill has its own manifest defining inputs (e.g., user query, order ID), outputs (e.g., answer, troubleshooting steps, sentiment score), and specific modelRequirements.
  - faq-answerer might specify a maxCostPerToken for a cheaper, fast LLM.
  - troubleshooting-guide might prioritize a more powerful, accurate (and potentially slower/pricier) LLM for critical diagnostic advice, with a higher maxLatency tolerance.
  - sentiment-analyzer would use a highly specialized, low-latency sentiment model.
  - LLM routing dynamically selects the optimal model based on the manifest for each sub-task, ensuring cost-efficiency and accuracy.
  - Unified LLM API ensures that all these diverse models are accessed through a single, consistent interface.

Scenario 2: Automated Content Generation Pipeline

A marketing agency wants to automate the generation of blog post outlines, social media updates, and email newsletters based on a single topic.

- Skills Involved:
  - topic-researcher: Takes a general topic, uses an LLM to generate keywords and sub-topics.
  - blog-outline-generator: Generates a structured blog outline from keywords.
  - social-media-copywriter: Crafts short, engaging social media posts.
  - email-newsletter-drafting: Creates a longer, persuasive email draft.
  - image-prompt-generator: From textual content, suggests prompts for an image generation AI.
  - tone-adjuster: Rewrites content to a specific tone (e.g., formal, casual, humorous).

- OpenClaw Manifest Impact:
  - The blog-outline-generator and email-newsletter-drafting skills might specify different modelRequirements for creativity versus structured output.
  - tone-adjuster could leverage a specific LLM known for its stylistic control.
  - Multi-model support allows the pipeline to use the best LLM for each unique content type, ensuring variety and quality.
  - LLM routing ensures that when the system requests "creative writing," it goes to a model known for that, and when it needs "structured bullet points," it goes to another.
  - The inputSchema for social-media-copywriter might include parameters like platform (Twitter, LinkedIn) to tailor output.

Scenario 3: Data Analysis and Summarization for Business Intelligence

A financial analyst needs to quickly summarize quarterly reports, extract key figures, and identify trends from unstructured text data.

- Skills Involved:
  - document-parser: Extracts raw text from PDFs, potentially using OCR tools, then cleans it.
  - key-figure-extractor: Identifies and extracts financial metrics (e.g., revenue, profit, growth rates) from the cleaned text. This might use a highly specialized financial LLM.
  - trend-identifier: Analyzes extracted figures and narrative text to identify positive or negative trends.
  - executive-summarizer: Condenses the findings into a brief, actionable executive summary.
  - report-formatter: Structures the output into a readable report format (e.g., Markdown, JSON).

- OpenClaw Manifest Impact:
  - key-figure-extractor would have strict modelRequirements for accuracy, potentially prioritizing LLMs specifically fine-tuned on financial data, even if they are more expensive (a tight maxCostPerToken cap may not be appropriate here; precise capabilities matter more).
  - executive-summarizer might balance maxLatency and maxCostPerToken for a concise output.
  - LLM routing dynamically selects models based on the sensitivity and specificity of the task: for numerical extraction, a model with strong data handling; for narrative summary, a model with strong generative capabilities.
  - The outputSchema for key-figure-extractor would define a structured JSON object containing numerical values, allowing subsequent skills to easily process this data.

These scenarios highlight how OpenClaw Skill Manifests facilitate the construction of sophisticated AI applications by providing a structured, modular, and dynamic way to define and orchestrate AI capabilities. By leveraging a Unified LLM API like XRoute.AI, with its inherent multi-model support and intelligent LLM routing, developers can efficiently build, deploy, and scale these intelligent solutions without getting bogged down in the complexities of managing individual LLM providers.

The Future of AI Skill Management and the Role of Unified LLM APIs

The journey into sophisticated AI applications is characterized by increasing complexity. As LLMs become more powerful, specialized, and ubiquitous, the challenge shifts from merely accessing these models to managing them effectively within a coherent system. The concept of an OpenClaw Skill Manifest, and the architectural principles it embodies, stands as a critical evolutionary step in this journey.

The future of AI skill management is unequivocally moving towards greater abstraction, intelligence, and interoperability. We are witnessing a clear trend where monolithic AI systems are giving way to modular, composable architectures. In this paradigm, well-defined skill manifests serve as the foundational building blocks, enabling AI systems to operate with unprecedented levels of flexibility and efficiency.

The Enduring Importance of a Unified LLM API:

At the heart of this evolution is the Unified LLM API. As the number of available models continues to explode, each with its unique strengths, weaknesses, and API specifications, the need for a single, consistent interface becomes even more pronounced. Without it, developers would be mired in integration headaches, constantly adapting to new provider changes, and struggling to leverage the best-of-breed models for their specific needs.

Platforms like XRoute.AI represent the vanguard of this movement. By offering an OpenAI-compatible endpoint that consolidates access to over 60 models from more than 20 providers, XRoute.AI directly addresses the fragmentation challenge. Its focus on low latency AI, cost-effective AI, and developer-friendly tools aligns perfectly with the demands of modern AI development. Such a Unified LLM API not only simplifies integration but also acts as the intelligent orchestration layer that makes advanced LLM routing and multi-model support practically achievable at scale.

The Power of Multi-model Support:

The ability to seamlessly integrate and switch between multiple LLMs will no longer be a luxury but a necessity. No single LLM will ever be optimal for all tasks, and specialized models will continue to emerge for niche applications. A robust skill management framework, underpinned by multi-model support, ensures that applications can always leverage the most appropriate tool for the job, optimizing for accuracy, creativity, speed, or cost as required. This flexible model ecosystem will drive innovation and allow AI solutions to tackle increasingly complex and nuanced problems.

The Crucial Role of Intelligent LLM Routing:

As costs and performance become major differentiators in the competitive AI landscape, intelligent LLM routing will evolve into an even more sophisticated capability. Beyond simple rule-based decisions, future routing mechanisms will incorporate real-time performance analytics, predictive cost modeling, adaptive learning, and even A/B testing of models to ensure continuous optimization. Skill Manifests, by providing declarative modelRequirements and operationalParameters, will continue to feed the routing engine with the necessary metadata to make these highly informed decisions, ensuring applications remain performant and economically viable.

The Future Landscape:

In the coming years, we can expect:

- More Sophisticated Skill Orchestration: OpenClaw-like frameworks will evolve to support more complex workflows, including conditional logic, parallel execution, human-in-the-loop interventions, and dynamic adaptation.

- Enhanced Observability and Governance: Tools for monitoring skill performance, cost attribution, model drift, and compliance will become standard, directly integrating with manifest definitions.

- AI-Powered Manifest Generation: AI itself might assist in generating initial skill manifests from natural language descriptions or existing code, further accelerating development.

- Standardization and Interoperability: Efforts towards open standards for AI skill definitions and Unified LLM API interfaces will gain momentum, fostering a more collaborative and interoperable AI ecosystem.

The OpenClaw Skill Manifest represents a visionary approach to managing the inherent complexity of modern AI. By providing a clear, declarative contract for AI capabilities, it empowers developers to build modular, resilient, and intelligent applications. When combined with the power of a Unified LLM API that offers robust multi-model support and intelligent LLM routing, like XRoute.AI, this approach not only simplifies the development process but also ensures that AI solutions can scale efficiently, adapt dynamically, and unlock the full potential of large language models for a smarter future.

Conclusion

The journey through the OpenClaw Skill Manifest has illuminated its profound significance in shaping the future of AI application development. We've established that the manifest is far more than a simple configuration file; it is the definitive contract for an AI skill, meticulously outlining its identity, purpose, input and output expectations, and the critical LLM routing decisions that govern its execution.

By embracing a structured approach to defining skills, leveraging a Unified LLM API to abstract away the complexities of diverse model providers, and intelligently applying multi-model support, developers can construct AI systems that are both powerful and remarkably flexible. The best practices—from modular design and robust validation to intelligent LLM routing and comprehensive documentation—are not mere suggestions but essential pillars for building resilient, cost-effective, and high-performance AI solutions.

In an era where the capabilities of large language models are expanding at an unprecedented pace, managing these powerful tools effectively is paramount. The OpenClaw Skill Manifest provides the clarity, control, and standardization needed to navigate this dynamic landscape, transforming the intricate challenge of AI integration into a streamlined, scalable, and highly efficient process. As you embark on your next AI project, remember the power of a well-crafted manifest – it is your blueprint for success in the intelligent era.

Frequently Asked Questions (FAQ)

1. What is the primary benefit of using an OpenClaw Skill Manifest? The primary benefit is standardization and abstraction. It provides a clear, declarative contract for an AI skill, simplifying its integration, management, and orchestration within an AI system. It hides the complexities of underlying LLM APIs and models, enabling modularity, reusability, and efficient deployment, especially when working with a Unified LLM API.

2. How does the Skill Manifest support "multi-model support"? The Skill Manifest includes modelRequirements where you can specify the type of LLM, its capabilities, preferred providers, and even specific model hints. This allows the OpenClaw runtime (or a platform like XRoute.AI) to intelligently select the most appropriate LLM from a pool of available models for a given task, leveraging the unique strengths of different models for optimal performance and cost.

3. What role does "LLM routing" play in an OpenClaw-based system? LLM routing is the dynamic process of selecting the best LLM for a specific request based on criteria defined in the Skill Manifest, such as maxLatency, maxCostPerToken, capabilities, or preferredProviders. This ensures that each skill invocation is processed by the most suitable model, optimizing for cost, performance, or accuracy, and providing resilience through fallback mechanisms.

4. Can an OpenClaw Skill Manifest integrate with any LLM provider? While the manifest itself defines requirements, its ability to integrate with various providers depends on the underlying AI orchestration platform or Unified LLM API it communicates with. Platforms like XRoute.AI are specifically designed to provide a single, consistent interface to numerous LLMs from multiple providers, making integration seamless for skills defined by a manifest.

5. Is the OpenClaw Skill Manifest a proprietary format, or is it based on open standards? The "OpenClaw Skill Manifest" is presented here as a conceptual framework for structured AI skill definitions. While the precise name might be hypothetical, its design principles (using YAML/JSON, relying on JSON Schema for validation) are inspired by existing open standards and best practices in declarative configuration and API design (e.g., Kubernetes resource definitions, OpenAPI specifications). The aim is to promote interoperability and ease of adoption.

🚀 You can connect to the large language models available through XRoute.AI in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.
2. Upon registration, explore the platform.
3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
--header "Authorization: Bearer $apikey" \
--header 'Content-Type: application/json' \
--data '{
    "model": "gpt-5",
    "messages": [
        {
            "content": "Your text prompt here",
            "role": "user"
        }
    ]
}'

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.