Unified LLM API: Simplify Your AI Development

I. Introduction: Navigating the Labyrinth of Large Language Models

The dawn of large language models (LLMs) has ushered in an era of unprecedented innovation, transforming the landscape of artificial intelligence. From sophisticated chatbots and intelligent content generators to code assistants and data analysis tools, LLMs are reshaping how businesses operate and how individuals interact with technology. The promise is immense: automation, enhanced creativity, and deeper insights, all within reach. Yet as the field grows, so does its complexity. What began as a handful of pioneering models has expanded into a vast, ever-growing ecosystem, with each model offering its own strengths and nuances and, crucially, its own application programming interface (API).

This rapid diversification, while empowering, has inadvertently created a new set of challenges for developers and businesses alike. Integrating a single LLM into an application can be a straightforward task, but what happens when the need arises to leverage the distinct advantages of multiple models? What if one model excels at creative writing, another at factual recall, and yet another at code generation? The immediate consequence is API sprawl: a tangled web of disparate documentation, authentication methods, and integration paradigms. This complexity leads to significant development overhead, slower innovation cycles, increased maintenance costs, and often suboptimal performance, as developers struggle to switch between models manually based on task requirements.

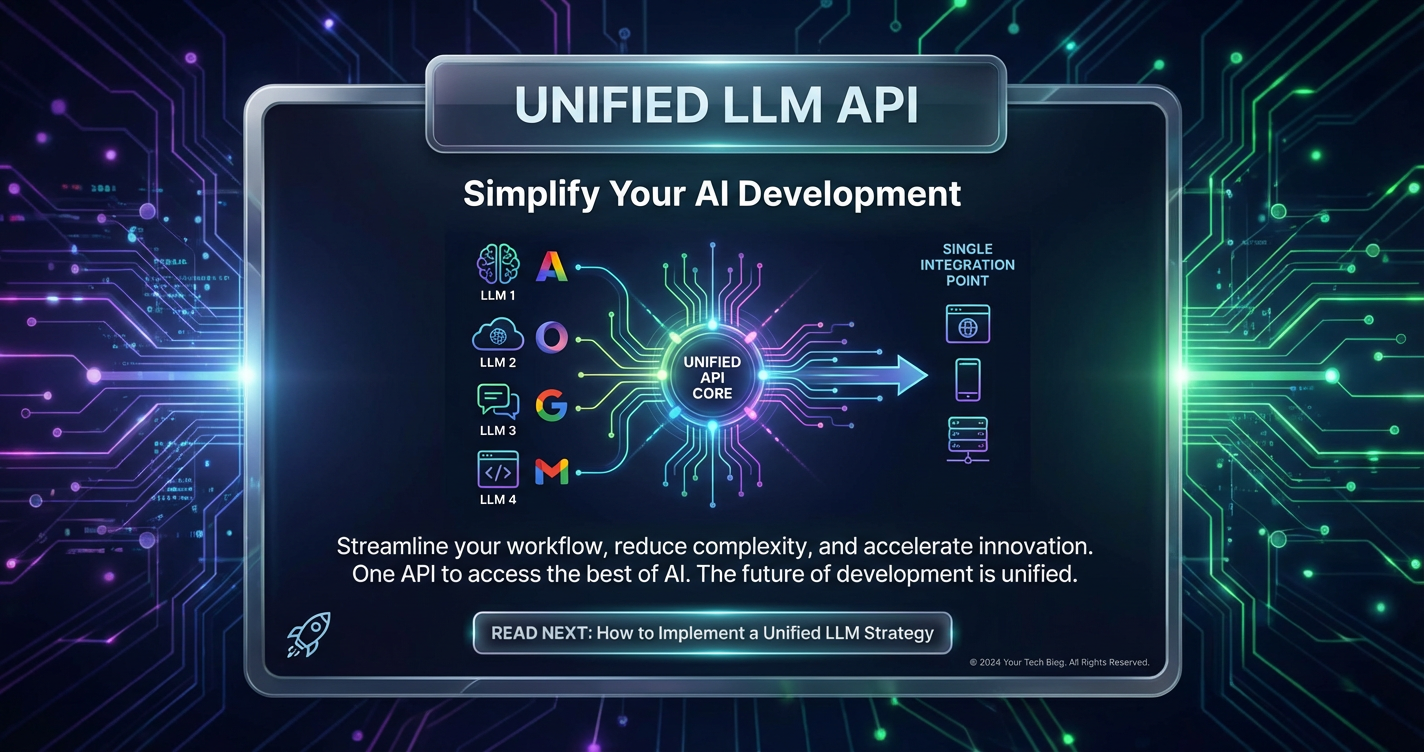

It is precisely this burgeoning complexity that has given rise to an elegant and powerful solution: the Unified LLM API. Imagine a universal remote control for your entire AI ecosystem: a single, standardized interface that grants seamless access to a multitude of large language models, abstracting away their underlying differences. This approach not only simplifies the integration process but also unlocks a new level of flexibility, efficiency, and intelligence in AI-powered applications. By consolidating access and introducing intelligent orchestration layers, a Unified LLM API lets developers focus on building innovative features rather than wrestling with API minutiae, paving the way for more robust, cost-effective, and future-proof AI solutions. This article examines how this technology works, the benefits it offers, and how it is changing AI development.

II. Deconstructing the Unified LLM API: A Gateway to AI Efficiency

At its core, a Unified LLM API represents a paradigm shift in how developers interact with and harness the power of large language models. Rather than directly engaging with the individual APIs of OpenAI, Anthropic, Google, or a host of other providers, developers interact with a single, consistent interface. This abstraction layer acts as a central hub, orchestrating requests to the most appropriate LLM based on predefined rules or dynamic analysis.

What Exactly is a Unified LLM API?

A Unified LLM API is, essentially, a single endpoint and a standardized set of API calls that allow developers to access and utilize a wide array of different large language models from various providers. It serves as an intermediary, translating the generic requests from a developer's application into the specific format required by the chosen underlying LLM, and then translating the LLM's response back into a consistent format for the application.
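To make the translation step concrete, here is a minimal Python sketch of the adapter idea. It assumes simplified payload shapes modeled on OpenAI-style chat completions (system prompt sent as the first message) and Anthropic-style messages (system prompt as a top-level field, with `max_tokens` required). Real requests carry many more fields, and the `ChatRequest` type and helper names are hypothetical.

```python
"""Sketch of the translation layer in a unified LLM API.

Assumption: the payload shapes below are simplified versions modeled on
OpenAI-style chat completions and Anthropic-style messages; real requests
carry more fields (tools, stop sequences, metadata, etc.).
"""
from dataclasses import dataclass


@dataclass
class ChatRequest:
    """Provider-neutral request used by the application (hypothetical)."""
    model: str
    system: str
    user: str
    max_tokens: int = 256


def to_openai_style(req: ChatRequest) -> dict:
    # OpenAI-style chat APIs carry the system prompt as the first message.
    return {
        "model": req.model,
        "max_tokens": req.max_tokens,
        "messages": [
            {"role": "system", "content": req.system},
            {"role": "user", "content": req.user},
        ],
    }


def to_anthropic_style(req: ChatRequest) -> dict:
    # Anthropic-style message APIs take the system prompt as a top-level
    # field and require max_tokens.
    return {
        "model": req.model,
        "max_tokens": req.max_tokens,
        "system": req.system,
        "messages": [{"role": "user", "content": req.user}],
    }


def normalize_response(provider: str, raw: dict) -> str:
    """Extract the generated text so the application always sees a plain string."""
    if provider == "openai":
        return raw["choices"][0]["message"]["content"]
    if provider == "anthropic":
        # Concatenate every text block in the content list.
        return "".join(b["text"] for b in raw["content"] if b["type"] == "text")
    raise ValueError(f"unknown provider: {provider}")
```

The application builds one `ChatRequest`; only the adapter functions know what each provider expects, so adding a provider means adding one `to_*` function and one branch in `normalize_response`.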

Consider the analogy of a universal charging cable for all your electronic devices. Before its advent, you needed a different charger for every phone, tablet, and laptop. The universal cable simplifies this by providing a single interface that adapts to multiple devices. Similarly, a Unified LLM API eliminates the need for developers to learn and implement separate API calls, authentication mechanisms, and response parsing logic for each LLM they wish to use. This consolidation drastically reduces the initial development burden and ongoing maintenance complexity.

Beyond the Basics: How it Works Under the Hood

The operational mechanism of a Unified LLM API involves several sophisticated components working in concert:

- Standardized Endpoints and Abstraction Layers: The most visible aspect is the single API endpoint (e.g., `api.unified-llm-platform.com/v1/chat/completions`). Developers send their requests (e.g., a prompt for text generation) to this endpoint using a consistent data structure, often mimicking popular standards like the OpenAI API specification for ease of adoption. Behind this endpoint lies an abstraction layer that masks the diverse functionalities and data formats of the underlying LLMs. This layer handles the "translation" of developer requests into the specific payload required by, say, OpenAI's GPT-4 or Anthropic's Claude 3, and then normalizes their varied responses into a single, predictable format. This ensures that regardless of which LLM processes the request, the application always receives a consistent output structure, simplifying downstream parsing and processing.

- Proxying and Orchestration: Once a request hits the unified API, the platform acts as an intelligent proxy. It doesn't just forward the request blindly; instead, it orchestrates the entire process. This orchestration includes:

- Authentication and Authorization: Managing API keys and access tokens for various LLM providers securely on behalf of the developer.

- Request Routing: A critical component, this involves determining which specific LLM from the available pool is best suited to handle the incoming request. This decision can be based on a multitude of factors, as we will explore in the "LLM Routing" section.

- Rate Limiting and Load Balancing: Respecting each provider's rate limits and distributing requests across multiple LLM instances or providers to prevent bottlenecks and ensure high availability, especially under heavy load.

- Caching: Storing frequently requested responses to reduce latency and API costs for repetitive queries.

- Monitoring and Logging: Tracking usage, performance metrics, and potential errors across all integrated LLMs, providing valuable insights for optimization and debugging.
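Several of the proxy responsibilities listed above can be sketched in a few lines. In this hypothetical `UnifiedProxy`, providers are plain Python callables rather than real HTTP clients, the cache is an in-memory dictionary keyed on a hash of model and prompt, and the log is a list of dictionaries. Authentication and rate limiting are left out to keep the example short.

```python
"""Minimal sketch of a unified proxy's caching and logging steps.

Assumptions (hypothetical, for illustration): providers are plain Python
callables instead of HTTP clients, and the cache is an in-memory dict.
"""
import hashlib
import json
import time
from typing import Callable, Dict, List

Backend = Callable[[str], str]  # takes a prompt, returns generated text


class UnifiedProxy:
    def __init__(self, backends: Dict[str, Backend]):
        self.backends = backends
        self.cache: Dict[str, str] = {}
        self.log: List[dict] = []

    def _cache_key(self, model: str, prompt: str) -> str:
        # Hash model and prompt together so identical requests share an entry.
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._cache_key(model, prompt)
        start = time.perf_counter()
        if key in self.cache:
            text, cached = self.cache[key], True
        else:
            text, cached = self.backends[model](prompt), False
            self.cache[key] = text
        # Record per-request metrics for monitoring and debugging.
        self.log.append({
            "model": model,
            "cached": cached,
            "latency_s": time.perf_counter() - start,
        })
        return text
```

Exact-match caching like this only pays off for repeated, deterministic requests (for example, with temperature set to zero); some systems use semantic, embedding-based caching instead, which is beyond this sketch.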

By centralizing these functions, a Unified LLM API platform takes on the heavy lifting of managing a complex, multi-LLM environment. It allows developers to declare what they want to achieve (e.g., "generate a marketing slogan") without needing to worry about how that objective will be met or which specific LLM will ultimately power the response. This decoupling of application logic from LLM implementation details is a cornerstone of agile and resilient AI development.

III. The Cornerstone of Flexibility: Multi-Model Support

In the rapidly evolving landscape of generative AI, no single large language model can claim to be a panacea for all tasks. Each model, whether developed by a tech giant or an open-source community, possesses unique architectural designs, training datasets, and fine-tuning methodologies, endowing it with distinct strengths and weaknesses. Recognizing and leveraging this diversity is paramount for building truly sophisticated and adaptable AI applications. This is where the concept of Multi-model support, a core feature of any robust Unified LLM API, becomes indispensable.

The Diverse Landscape of LLMs: Strengths and Specializations

The past few years have witnessed an explosion in the number and capabilities of LLMs. A brief overview reveals their specialized niches:

- OpenAI's GPT Series (e.g., GPT-3.5, GPT-4): Often considered general-purpose powerhouses, renowned for their strong few-shot learning abilities, robust common-sense reasoning, and strong performance across a wide range of tasks, from creative writing to complex problem-solving.

- Anthropic's Claude (e.g., Claude 3 Opus, Sonnet, Haiku): Distinguished by Anthropic's focus on safety and its "helpful, honest, and harmless" (HHH) principles. Claude models excel in tasks requiring nuanced understanding, extensive context windows, and adherence to ethical guidelines, making them well suited to sensitive applications or lengthy document analysis.

- Google's Gemini/PaLM: Engineered for enterprise-grade solutions and multimodal capabilities, often integrating seamlessly with Google Cloud services. Gemini models, in particular, are designed to natively understand and operate across different modalities like text, code, audio, image, and video, offering powerful integrated experiences.

- Open-Source Alternatives (e.g., Llama, Falcon, Mistral): These models provide unprecedented transparency, customizability, and cost-effectiveness. While they might sometimes require more effort in fine-tuning and deployment, their open nature fosters innovation and allows for highly specialized, domain-specific applications without hefty API costs, often appealing to researchers and startups.

Choosing the "best" LLM is rarely a simple decision; it's about selecting the most appropriate LLM for a given task, balancing factors like accuracy, speed, cost, and ethical considerations. For instance, a complex legal document review might demand Claude's extensive context window and safety features, while generating a quick social media caption might be perfectly handled by a more cost-effective model like GPT-3.5 or a smaller open-source model.

The Advantages of Multi-model support

The ability of a Unified LLM API to offer Multi-model support translates into a multitude of tangible benefits for developers and businesses:

- Tailoring Models to Tasks: Precision and Effectiveness: With access to various LLMs, developers can dynamically select the best tool for the job. This "best-of-breed" approach ensures that each specific request is handled by the model most proficient at that particular task, leading to higher accuracy, more relevant outputs, and ultimately, a superior user experience. This flexibility allows for fine-grained control over AI performance across different application modules.

- Mitigating Vendor Lock-in and Enhancing Resilience: Relying on a single LLM provider exposes an application to significant risks: potential API changes, service outages, price increases, or even the discontinuation of a specific model. Multi-model support inherently reduces this risk by providing built-in redundancy. If one provider experiences an issue, requests can be seamlessly rerouted to another available model, ensuring service continuity and preventing costly downtime. It also provides leverage in commercial negotiations and future strategic planning.

- Access to Cutting-Edge Innovation Without Re-coding: The LLM landscape is constantly evolving, with new, more powerful, and specialized models emerging regularly. A Unified LLM API with robust multi-model support ensures that developers can integrate these new innovations into their applications with minimal effort. Instead of writing new integration code for each provider's API, they typically only change the model identifier in their existing calls to the unified platform, which handles the underlying complexity. This significantly accelerates the adoption of new technologies and keeps applications at the forefront of AI capabilities.

- Cost Optimization through Diversification: Different LLMs come with different pricing structures. By having the option to use multiple models, developers can intelligently route less critical or simpler requests to more cost-effective models, reserving premium, more expensive models for tasks that genuinely require their advanced capabilities. This strategic approach can lead to substantial cost savings over time, a critical factor for scaling AI operations.

- Facilitating Experimentation and A/B Testing: The unified interface makes it incredibly easy to experiment with different LLMs for the same task. Developers can quickly compare the performance, output quality, and cost-efficiency of various models, making data-driven decisions about which model best serves their specific needs. This iterative process is crucial for optimizing AI-driven features.

Table 1: A Glimpse at Diverse LLMs and Their Strengths (Accessible via Unified API)

| LLM Family | Primary Strengths | Ideal Use Cases | Typical Cost Profile (Relative) | Key Considerations |
|---|---|---|---|---|
| OpenAI GPT | General-purpose reasoning, creativity, code generation | Chatbots, content generation, summarization, complex tasks | Medium to High | Excellent all-rounder, strong few-shot learning |
| Anthropic Claude | Long context windows, safety, ethical guidelines | Legal review, customer service, sensitive document analysis | Medium to High | Focus on HHH principles, robust for long texts |
| Google Gemini/PaLM | Multimodal capabilities, enterprise integration, scalability | Image/video analysis, cross-modal understanding, large-scale apps | Medium | Deep integration with Google Cloud ecosystem |
| Llama (Meta) / Mistral | Open-source, highly customizable, cost-effective | Fine-tuning for specific domains, on-premise deployment | Low (mainly hosting costs) | Requires self-hosting or a managed service; highly customizable |
| Cohere Command | Text generation, semantic search, RAG, enterprise-focused | Enterprise search, chatbots with knowledge bases, summarization | Medium | Strong for enterprise applications and RAG patterns |

The ability to seamlessly switch between these diverse models through a single API is not merely a convenience; it is a strategic advantage that empowers businesses to build more resilient, intelligent, and cost-efficient AI applications capable of adapting to a dynamic technological landscape.

IV. Intelligent Orchestration: The Art and Science of LLM Routing

While multi-model support provides the raw material—a diverse collection of powerful LLMs—it is LLM routing that transforms this collection into an intelligent, efficient, and dynamic AI system. LLM routing is the sophisticated decision-making layer within a Unified LLM API that determines which specific LLM, from the available pool, is best suited to handle an incoming request. It's the "traffic controller" for your AI workflow, directing each query to its optimal destination based on a set of predefined rules, real-time metrics, or advanced algorithmic analysis.

Understanding LLM Routing: The Brains Behind the Operation

LLM routing is more than just load balancing; it's about intelligent allocation of resources to maximize performance, minimize cost, and ensure the highest quality of output for every interaction.

- Definition: LLM routing refers to the process of programmatically directing an API request for an LLM task (e.g., text generation, summarization, translation) to a specific large language model from a selection of available models, based on criteria such as cost, latency, capability, or user preference.

- Why Routing Matters: Efficiency, Cost, and Performance: Without intelligent routing, developers would either be forced to hardcode a single LLM (sacrificing flexibility and optimization) or implement complex, manual conditional logic within their application to choose between models. This latter approach is brittle, scales poorly, and is prone to errors. LLM routing centralizes this intelligence, allowing for:

- Maximized Efficiency: Ensuring that tasks are performed by the most capable or efficient model for that specific context.

- Optimized Costs: Routing simple, high-volume tasks to cheaper models, reserving premium models for complex, critical queries.

- Enhanced Performance: Directing requests to models that are currently experiencing lower latency or higher throughput, ensuring quicker response times.

- Improved Reliability: Automatically failing over to alternative models if a primary model or provider experiences an outage.
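The definition above (direct each request to one model based on criteria such as cost, latency, or capability) can be expressed as a small scoring function. This is a hypothetical sketch: the `ModelInfo` fields, the normalization, and the equal default weights are illustrative choices rather than a standard algorithm, and the prices and latencies are placeholders.

```python
"""Sketch of an LLM router that scores candidate models.

All model names, prices, latencies, and weights are hypothetical
placeholders, not real provider figures.
"""
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class ModelInfo:
    name: str
    cost_per_1k_tokens: float   # USD, hypothetical
    avg_latency_ms: float       # observed average, hypothetical
    capabilities: Set[str] = field(default_factory=set)


def route(models: List[ModelInfo], required: Set[str],
          cost_weight: float = 0.5, latency_weight: float = 0.5) -> str:
    """Return the best-scoring model that has every required capability."""
    eligible = [m for m in models if required <= m.capabilities]
    if not eligible:
        raise LookupError(f"no model supports {sorted(required)}")
    # Guard against division by zero if every price or latency is 0.
    max_cost = max(m.cost_per_1k_tokens for m in eligible) or 1.0
    max_lat = max(m.avg_latency_ms for m in eligible) or 1.0

    def score(m: ModelInfo) -> float:
        # Normalize each criterion to [0, 1]; lower is better.
        return (cost_weight * m.cost_per_1k_tokens / max_cost
                + latency_weight * m.avg_latency_ms / max_lat)

    return min(eligible, key=score).name
```

Capability acts as a hard filter, while cost and latency are traded off through the weights; raising `latency_weight` shifts traffic toward faster models.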

Key LLM Routing Strategies

A robust Unified LLM API platform will offer a variety of LLM routing strategies, often allowing for complex combinations to meet specific business needs:

- Cost-Based Routing: This is one of the most common and impactful strategies. The system analyzes the expected cost of processing a given request by different LLMs and routes the request to the cheapest option that can still meet the required quality or capability threshold. For example, simple paraphrasing tasks might go to a smaller, less expensive model, while nuanced creative writing would be routed to a premium model. This is a cornerstone of cost-effective AI.

- Latency-Based Routing: For real-time applications where speed is paramount (e.g., live chatbots, interactive voice assistants), requests are routed to the model or provider endpoint currently exhibiting the lowest response time. This ensures a low latency AI experience for end-users, dynamically adapting to network conditions and model load.

- Performance/Accuracy-Based Routing: In scenarios where output quality is non-negotiable, routing can be based on the known performance characteristics or benchmark accuracy of different models for specific task types. A complex legal query might always be routed to a model known for its high factual accuracy and extensive context window, even if it's slightly more expensive.

- Capability-Based Routing: This strategy routes requests based on the specific features or capabilities required by the prompt. If a prompt explicitly asks for an image description, it would be routed to a multimodal LLM. If it's a code generation request, it might go to a model specifically fine-tuned for coding.

- Failover Routing: A crucial strategy for system resilience. If the primary LLM or its provider's API experiences an outage, slowdown, or returns an error, the request is automatically rerouted to a backup LLM from a different provider. This ensures high availability and uninterrupted service, significantly improving the robustness of AI applications.

- Load Balancing: Distributes incoming requests evenly across multiple instances of the same model or across different models if they are interchangeable for a given task. This prevents any single LLM from becoming a bottleneck and helps maintain consistent performance under high traffic.

- User/Context-Based Routing: In more advanced scenarios, routing can depend on the user's subscription tier (e.g., premium users get access to the best models), historical user preferences, or the specific context of the conversation (e.g., routing to a specialized medical LLM if the conversation turns clinical).
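Cost-based routing, the first strategy above, reduces to "the cheapest model that clears a quality bar for this task type." The sketch below assumes a hypothetical catalog in which each model has a price and a per-task quality score (for instance, from an internal evaluation set); the names and numbers are placeholders.

```python
"""Cost-based routing: the cheapest model that clears a quality bar.

Assumption: each model has a hypothetical per-task quality score (for
example, from an internal evaluation set) and a hypothetical price.
"""
from typing import Dict, Tuple

# model name -> (price per 1M tokens in USD, quality score by task type)
CATALOG: Dict[str, Tuple[float, Dict[str, float]]] = {
    "budget-model": (0.50, {"paraphrase": 0.86, "creative": 0.62}),
    "mid-model": (3.00, {"paraphrase": 0.90, "creative": 0.78}),
    "premium-model": (15.00, {"paraphrase": 0.93, "creative": 0.91}),
}


def cheapest_meeting(task: str, min_quality: float) -> str:
    candidates = [
        (price, name)
        for name, (price, quality) in CATALOG.items()
        if quality.get(task, 0.0) >= min_quality
    ]
    if not candidates:
        raise LookupError(f"no model reaches {min_quality} on {task!r}")
    # Tuples sort by price first, so min() picks the cheapest qualifier.
    return min(candidates)[1]
```

With these placeholder numbers, a paraphrasing request with a 0.85 quality bar goes to `budget-model`, while creative writing with the same bar can only be served by `premium-model`, mirroring the example in the text.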

Implementing Routing Logic: From Simple Rules to Dynamic Algorithms

The implementation of routing logic can range from straightforward conditional statements to sophisticated machine learning models:

- Rule-Based Routing: The simplest form, where developers define explicit rules (e.g., "if prompt contains 'code generation', use Model X; otherwise, use Model Y").

- Metadata-Based Routing: Attaching metadata to requests (e.g., `model_preference: 'cost-effective'`, `priority: 'high'`) that the routing engine interprets.

- Dynamic and Algorithmic Routing: The most advanced systems use real-time data (e.g., current latency, model uptime, cost fluctuations) and machine learning algorithms to make routing decisions on the fly, continuously optimizing for the desired outcome (e.g., lowest cost within a performance SLA).
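The rule-based and metadata-based approaches can be combined in a single function: explicit request metadata wins, keyword rules come next, and a default model catches everything else. The keywords, the `model_preference` values, and the model names are hypothetical.

```python
"""Rule-based and metadata-based routing combined.

The keywords, metadata keys, and model names are hypothetical examples.
"""
from typing import Dict, Optional

# Ordered rules: the first matching keyword wins.
KEYWORD_RULES = [
    ("code generation", "code-model"),
    ("translate", "multilingual-model"),
]
DEFAULT_MODEL = "general-model"

# Metadata preferences map directly to a model.
PREFERENCE_MAP = {
    "cost-effective": "budget-model",
    "quality": "premium-model",
}


def route(prompt: str, metadata: Optional[Dict[str, str]] = None) -> str:
    metadata = metadata or {}
    # Explicit metadata takes precedence over keyword rules.
    pref = metadata.get("model_preference")
    if pref in PREFERENCE_MAP:
        return PREFERENCE_MAP[pref]
    lowered = prompt.lower()
    for keyword, model in KEYWORD_RULES:
        if keyword in lowered:
            return model
    return DEFAULT_MODEL
```

Keyword matching is brittle (a prompt that merely mentions translation gets routed as a translation job), which is one reason the more advanced systems move to dynamic or learned routing.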

Table 2: Common LLM Routing Strategies and Their Benefits

| Routing Strategy | Description | Primary Benefit | Ideal Scenario | Example |
|---|---|---|---|---|
| Cost-Based | Routes to the cheapest LLM capable of meeting requirements. | Significant cost savings, cost-effective AI | High-volume, routine tasks (e.g., basic FAQs, social media drafts) | Use GPT-3.5-turbo for general inquiries, GPT-4-turbo for complex analysis. |
| Latency-Based | Routes to the LLM with the fastest response time. | Real-time responsiveness, low latency AI | Interactive chatbots, voice assistants, critical UI responses | If Claude 3 Sonnet is slower than GPT-4 for a given request, route to GPT-4. |
| Performance-Based | Routes to the LLM known for the highest quality/accuracy for the task. | Superior output quality, reliability | Legal document analysis, creative writing, scientific summarization | Always send sensitive medical inquiries to a model optimized for medical knowledge. |
| Capability-Based | Routes to an LLM with specific required features (e.g., multimodal). | Task-specific optimization, specialized output | Image captioning, code debugging, specific language translation | Route multimodal requests to Google Gemini, code generation to GPT-4-turbo-preview. |
| Failover/Redundancy | Reroutes to a backup LLM if the primary fails or is unavailable. | High availability, system resilience | Any mission-critical application, preventing service disruption | If OpenAI API is down, automatically switch to Anthropic Claude. |
| Load Balancing | Distributes requests across multiple models/instances. | Consistent performance, prevents bottlenecks | High-traffic applications with scalable demand | Evenly distribute conversational AI requests across two different GPT-3.5 instances. |
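The Load Balancing row in Table 2 (evenly distributing requests across two instances of the same model) corresponds to a simple round-robin balancer. The deployment names below are hypothetical, and a production balancer would also account for instance health and in-flight requests.

```python
"""Round-robin load balancing across interchangeable deployments.

The deployment names are hypothetical; a production balancer would also
track health and in-flight requests.
"""
import itertools
import threading
from typing import List


class RoundRobinBalancer:
    def __init__(self, deployments: List[str]):
        if not deployments:
            raise ValueError("need at least one deployment")
        self._cycle = itertools.cycle(deployments)
        self._lock = threading.Lock()  # keep selection safe across threads

    def next_deployment(self) -> str:
        with self._lock:
            return next(self._cycle)
```

Round-robin assumes the deployments are truly interchangeable; when they differ in capacity, a weighted variant or a least-outstanding-requests policy spreads load more evenly.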

The intelligent application of LLM routing transforms a collection of individual models into a cohesive, efficient, and adaptable AI system. It allows developers to build future-proof applications that automatically optimize for cost, speed, and quality, making Unified LLM API platforms an indispensable tool in modern AI development.

V. Unlocking Tangible Benefits: Why Your Business Needs a Unified LLM API

The strategic advantages offered by a Unified LLM API extend far beyond mere convenience. For businesses striving to remain competitive and innovative in the AI era, these platforms represent a fundamental shift in how large language models are integrated, managed, and scaled. The benefits cascade through development cycles, operational costs, system reliability, and future adaptability, making them an increasingly critical component of any modern AI strategy.

Simplified Development and Faster Time-to-Market

One of the most immediate and profound benefits of a Unified LLM API is the dramatic simplification of the development process.

- Reduced Integration Complexity: Instead of wrestling with distinct API specifications, authentication flows, and data schemas for each LLM provider, developers interact with a single, consistent interface. This significantly reduces the learning curve and the amount of boilerplate code required to connect to multiple models. The cognitive load on engineering teams is lightened, allowing them to focus on unique application logic rather than integration plumbing.

- Less Boilerplate Code, More Innovation: With a standardized API, developers can write cleaner, more modular code. They abstract away the nuances of individual models, meaning their application logic doesn't need to change even if the underlying LLM provider is swapped or a new model is introduced. This accelerates the pace of innovation, as new features can be built and deployed faster, and existing ones can be enhanced by simply switching to a better-performing or more cost-effective model via the unified API. This agility translates directly into a faster time-to-market for new AI-powered products and services.

Significant Cost Optimization (Cost-effective AI)

The financial benefits of a Unified LLM API are substantial and often realized through intelligent routing.

- Leveraging Cheaper Models for Routine Tasks: Not every task requires the most advanced, and thus most expensive, LLM. A Unified LLM API with sophisticated LLM routing allows businesses to direct simpler, high-volume requests (e.g., basic FAQs, content rephrasing) to more economical models, while reserving premium models for complex reasoning, nuanced summarization, or highly creative tasks. This dynamic allocation ensures that resources are always optimized, preventing overspending on LLM inferences.

- Dynamic Routing to Reduce API Spend: Beyond static rule-sets, advanced routing engines can make real-time decisions based on current pricing tiers, token usage, and even promotional offers from different providers. This dynamic optimization ensures that businesses are continuously leveraging the most cost-effective path for each request, driving down overall API expenditure and enhancing cost-effective AI strategies.
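Routing on cost starts with estimating what a request will cost on each candidate model, which is simple arithmetic on token counts and per-token prices. The prices below are hypothetical placeholders; real prices vary by provider, model, and date, and output tokens are often priced higher than input tokens.

```python
"""Estimating per-request cost from token counts and per-token prices.

The prices are hypothetical placeholders; real prices vary by provider,
model, and date.
"""
from typing import Dict, Tuple

# model -> (USD per 1M input tokens, USD per 1M output tokens), hypothetical
PRICES: Dict[str, Tuple[float, float]] = {
    "budget-model": (0.50, 1.50),
    "premium-model": (10.00, 30.00),
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def cheapest(input_tokens: int, output_tokens: int) -> str:
    # Pick the model with the lowest estimated cost for this request size.
    return min(PRICES, key=lambda m: estimate_cost(m, input_tokens, output_tokens))
```

At these placeholder prices, a request with 1,000 input and 500 output tokens costs $0.00125 on `budget-model` versus $0.025 on `premium-model`, a 20x difference that compounds quickly at high volume. A dynamic router would refresh `PRICES` from the providers' current price lists rather than hardcoding them, and would combine the estimate with a quality threshold.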

Enhanced Reliability and Redundancy

In mission-critical applications, the availability and reliability of AI services are paramount. A Unified LLM API significantly bolsters these aspects.

- Protection Against Outages and API Changes: By integrating multiple LLM providers, the platform creates inherent redundancy. If a primary provider experiences an outage, performance degradation, or introduces breaking API changes, the Unified LLM API can automatically reroute traffic to an alternative, stable model without manual intervention. This dramatically minimizes downtime and ensures business continuity.

- Seamless Failover Mechanisms: Automated failover is a cornerstone of enterprise-grade reliability. The unified platform continuously monitors the health and performance of connected LLMs. In the event of an issue, it seamlessly switches to a healthy backup model, providing an uninterrupted service experience for end-users, often without them even noticing a disruption.
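A basic failover loop tries providers in priority order and moves on when a call fails. In this sketch, providers are hypothetical callables and `ProviderError` stands in for whatever timeout, server-error, or rate-limit exceptions a real client raises.

```python
"""Failover across providers in priority order.

Providers are hypothetical callables; ProviderError stands in for whatever
timeout, 5xx, or rate-limit errors a real client raises.
"""
from typing import Callable, List, Tuple


class ProviderError(Exception):
    """Raised by a provider call that failed and is safe to retry elsewhere."""


def complete_with_failover(
    prompt: str, providers: List[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Try each (name, call) in order; return (provider_name, text)."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why, then try the next
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

A production platform would typically add health checks or a circuit breaker so that it stops sending traffic to a provider known to be down, instead of paying the failure latency on every request.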

Superior Performance (Low Latency AI)

For applications requiring real-time interaction, latency is a critical performance metric.

- Choosing the Fastest Model for Time-Sensitive Applications: LLM routing strategies can prioritize latency, directing requests to the model or provider currently offering the fastest response times. This might involve considering geographical proximity to data centers, current server load, or specific model architectures known for their speed. This dynamic optimization ensures that users experience low latency AI, leading to smoother interactions in chatbots, virtual assistants, and other interactive applications.

- Optimized API Calls and Connection Management: The unified platform often handles connection pooling, persistent connections, and optimized data serialization, further reducing the overhead associated with individual API calls to various providers. These subtle optimizations contribute to an overall faster and more responsive AI infrastructure.
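One common way to track "the fastest model right now" is an exponentially weighted moving average (EWMA) of observed latencies, which favors recent measurements while smoothing out single spikes. The model names, the starting estimate, and the smoothing factor `alpha` are hypothetical choices.

```python
"""Latency-based routing using an exponentially weighted moving average (EWMA).

Model names and latency samples are hypothetical.
"""
from typing import Dict, Iterable


class LatencyRouter:
    def __init__(self, models: Iterable[str], alpha: float = 0.3,
                 initial_ms: float = 500.0):
        # alpha controls how quickly the average reacts to new samples.
        self.alpha = alpha
        self.ewma: Dict[str, float] = {m: initial_ms for m in models}

    def record(self, model: str, latency_ms: float) -> None:
        # Blend the new sample with the previous estimate.
        prev = self.ewma[model]
        self.ewma[model] = self.alpha * latency_ms + (1 - self.alpha) * prev

    def fastest(self) -> str:
        return min(self.ewma, key=self.ewma.get)
```

For example, with `alpha=0.5`, a single 900 ms sample moves a 500 ms estimate to 700 ms rather than all the way to 900 ms, so one slow response does not immediately divert all traffic.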

Future-Proofing Your AI Infrastructure

The AI landscape is characterized by its rapid pace of change. What is cutting-edge today might be obsolete tomorrow.

- Adapting to New Models and Innovations Effortlessly: A Unified LLM API acts as a crucial buffer between your application and the evolving world of LLMs. When a new, superior model emerges, or an existing one is updated, you can integrate it into your unified platform without needing to modify your core application code. This allows businesses to seamlessly adopt the latest advancements, keeping their AI capabilities state-of-the-art.

- Staying Agile in a Rapidly Evolving Landscape: This flexibility means that businesses are not locked into any single provider's technology stack. They can experiment with and migrate to different models or providers based on performance, cost, or strategic advantages, ensuring their AI strategy remains agile and responsive to market changes.

Empowering Experimentation and A/B Testing

Innovation thrives on experimentation. A Unified LLM API facilitates this at a fundamental level.

- Easily Swap Models to Compare Results: The abstracted interface makes it trivial to swap one LLM for another in an A/B test environment. Developers can quickly compare output quality, response times, and cost implications across different models for specific tasks, gathering crucial data to inform their choices.

- Accelerated Iteration Cycles: This ease of experimentation accelerates the iterative development process. Teams can quickly prototype, test, and deploy improvements to their AI features, leading to faster product cycles and continuous enhancement of AI-driven functionalities.
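For A/B tests, users are usually assigned to an arm deterministically so that the same person keeps seeing the same model across sessions. The sketch hashes the user ID together with a hypothetical experiment name; the model names and the 50/50 split are placeholders.

```python
"""Deterministic A/B assignment of users to models.

The experiment name, model names, and 50/50 split are hypothetical.
"""
import hashlib


def assign_model(user_id: str, experiment: str = "summarizer-test",
                 treatment_share: float = 0.5,
                 control: str = "model-a", treatment: str = "model-b") -> str:
    # Hash user and experiment so each user always lands in the same arm.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    # Keep 53 bits so the division is exact and the bucket stays in [0, 1).
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53
    return treatment if bucket < treatment_share else control
```

Including the experiment name in the hash means a user's arm in one test does not determine their arm in the next, which keeps experiments independent.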

In essence, a Unified LLM API transforms a complex, fragmented LLM ecosystem into a streamlined, resilient, and intelligent resource. It is not just about simplifying development; it's about building a sustainable, adaptable, and highly optimized AI future for any organization.

XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers (including OpenAI, Anthropic, Mistral, Llama 2, Google Gemini, and more), enabling seamless development of AI-driven applications, chatbots, and automated workflows.

VI. Real-World Applications: Where Unified LLM APIs Shine

The versatility and efficiency provided by a Unified LLM API make it an invaluable asset across a broad spectrum of industries and use cases. By offering seamless access to diverse models and intelligent routing capabilities, these platforms empower developers to build sophisticated AI applications that adapt to specific needs, optimize for various constraints, and deliver superior user experiences.

Customer Service & Support: Advanced Chatbots and Virtual Assistants

This is perhaps one of the most visible applications. Businesses can leverage a Unified LLM API to create highly intelligent and responsive customer service agents.

- Scenario: A company wants to build a chatbot that can answer FAQs, provide product information, and escalate complex issues.

- Unified API Advantage:

- Cost-Based Routing: Simple, high-volume FAQs can be routed to a cost-effective model (e.g., GPT-3.5-turbo) for quick responses.

- Capability-Based Routing: More complex queries requiring nuanced understanding or sentiment analysis can be routed to a model known for its empathetic tone and longer context windows (e.g., Anthropic's Claude).

- Failover: If one provider experiences an outage, the chatbot automatically switches to an alternative model, ensuring uninterrupted support for customers.

- Multi-model support: For generating personalized follow-up emails, a different, more creative model might be used than the one for answering factual questions.

Content Creation & Marketing: Dynamic Generation, Summarization, Translation

The demand for high-quality, scalable content is insatiable, and LLMs are powerful tools in this domain.

- Scenario: A marketing team needs to generate blog post outlines, social media captions, email subject lines, and multilingual product descriptions.

- Unified API Advantage:

- Capability-Based Routing: For creative blog outlines, a model like GPT-4 might be preferred. For quick, punchy social media captions, a faster, cheaper model could be used.

- Cost Optimization: Automated summarization of internal documents can be handled by a more economical model, while a premium model is reserved for crafting nuanced marketing copy that directly impacts sales.

- Multi-model support: Integrating models specialized in different languages ensures accurate and culturally appropriate translations for global campaigns.

Software Development: Code Generation, Debugging, Documentation

Developers themselves are increasingly benefiting from LLM assistance.

- Scenario: A development team wants to integrate AI into their IDE for code completion, bug fixing suggestions, and automated documentation generation.

- Unified API Advantage:

- Capability-Based Routing: Code generation and debugging assistance can be routed to models specifically trained on vast codebases (e.g., code-focused versions of PaLM or GPT models).

- Latency Optimization: For real-time code completion, routing prioritizes the lowest latency model to maintain a smooth developer experience.

- Experimentation: Easily test different models' efficacy in generating idiomatic code for various programming languages without changing the core IDE integration.

Data Analysis & Business Intelligence: Extracting Insights, Automating Reports

LLMs can unlock insights from unstructured data, a crucial capability for business intelligence.

- Scenario: A business analyst needs to extract key sentiment from customer reviews, summarize lengthy research papers, or generate natural language reports from structured data.

- Unified API Advantage:

- Performance Routing: For critical reports requiring high accuracy, the best-performing summarization or extraction model is used.

- Multi-model support: One model might excel at named entity recognition from legal documents, while another is better at synthesizing financial reports.

- Cost-Effective AI: Processing vast archives of historical data for trend analysis might utilize a cheaper model for initial passes, with a more advanced model handling deeper, targeted queries.
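The cheap-first, escalate-later pattern described above is often called a cascade. In this minimal sketch, `cheap_screen` and `deep_analyze` are hypothetical stand-ins for calls to an economical and a premium model respectively; here they are plain Python functions so the control flow can be run without any API.

```python
from typing import Callable


def two_pass_analysis(
    documents: dict[str, str],
    cheap_screen: Callable[[str], bool],
    deep_analyze: Callable[[str], str],
) -> dict[str, str]:
    """Run a cheap first pass over every document and send only the
    documents it flags to the more capable (and more expensive) model."""
    results: dict[str, str] = {}
    for doc_id, text in documents.items():
        if cheap_screen(text):                    # first pass: economical model
            results[doc_id] = deep_analyze(text)  # second pass: premium model
    return results


archive = {
    "2019-q1": "Revenue flat, churn rising in EMEA.",
    "2019-q2": "Routine quarter, no notable changes.",
    "2019-q3": "Churn stabilized after pricing change.",
}
flagged = two_pass_analysis(
    archive,
    cheap_screen=lambda text: "churn" in text.lower(),  # stand-in for a cheap model
    deep_analyze=lambda text: f"deep analysis ({len(text)} chars)",  # stand-in for a premium model
)
print(sorted(flagged))  # ['2019-q1', '2019-q3']
```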

Education & Research: Personalized Learning, Information Retrieval

The potential for LLMs to revolutionize learning and research is immense.

- Scenario: An educational platform wants to provide personalized tutoring, generate quiz questions, and help researchers synthesize complex scientific literature.

- Unified API Advantage:

- Capability-Based Routing: Models specialized in factual retrieval can answer student questions, while creative models generate diverse quiz formats.

- Long Context Windows: For summarizing research papers, models like Anthropic's Claude, known for their large context windows, can be specifically targeted via routing.

- Cost Optimization: Lower-cost models can handle routine vocabulary drills, while premium models assist with complex problem-solving.
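Routing on context length can be sketched as picking the cheapest model whose context window fits the prompt plus an output budget. The model names, window sizes, and the four-characters-per-token estimate below are assumptions for illustration; a real implementation would count tokens with the target model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSpec:
    name: str
    context_tokens: int  # maximum prompt + completion tokens (hypothetical figures)
    cost_rank: int       # lower means cheaper


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token for English text.
    return max(1, len(text) // 4)


def pick_model(text: str, models: list[ModelSpec], reserve_for_output: int = 1_000) -> ModelSpec:
    """Return the cheapest model whose context window fits the prompt plus an output budget."""
    needed = estimate_tokens(text) + reserve_for_output
    fitting = [m for m in models if m.context_tokens >= needed]
    if not fitting:
        raise ValueError(f"no model can fit ~{needed} tokens; consider chunking the input")
    return min(fitting, key=lambda m: m.cost_rank)


CATALOG = [
    ModelSpec("small-context-model", 8_000, cost_rank=1),
    ModelSpec("long-context-model", 200_000, cost_rank=2),
]
print(pick_model("Define photosynthesis.", CATALOG).name)  # small-context-model
print(pick_model("x" * 100_000, CATALOG).name)             # long-context-model
```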

Healthcare: Clinical Documentation, Diagnostic Support (with caveats)

While requiring rigorous safeguards, LLMs show promise in healthcare.

- Scenario: Assisting doctors with transcribing patient notes, summarizing medical literature, or generating preliminary diagnostic ideas (always under human supervision).

- Unified API Advantage:

- Security and Compliance: A Unified LLM API can enforce strict data handling and privacy protocols, potentially routing sensitive information only to models or providers with specific certifications.

- Performance Routing: High-stakes tasks like diagnostic support would prioritize models known for the highest accuracy in medical reasoning.

- Failover: Ensuring continuous access to critical AI tools, as downtime in healthcare can have serious consequences.
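Restricting sensitive requests to certified providers can be expressed as a filter applied before any routing decision. In this sketch the provider names and certification labels are hypothetical, and the `contains_phi` flag is assumed to come from an upstream classifier; whether a provider is actually suitable for health data is a legal and contractual question that code cannot answer.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Provider:
    name: str
    certifications: frozenset[str] = field(default_factory=frozenset)


def eligible_providers(
    providers: list[Provider], contains_phi: bool, required_cert: str = "HIPAA"
) -> list[Provider]:
    """Keep only certified providers for requests containing protected health
    information. An empty result means the request must not be sent (fail closed)."""
    if not contains_phi:
        return list(providers)
    return [p for p in providers if required_cert in p.certifications]


PROVIDERS = [
    Provider("provider-a", frozenset({"SOC 2", "HIPAA"})),
    Provider("provider-b", frozenset({"SOC 2"})),
]
print([p.name for p in eligible_providers(PROVIDERS, contains_phi=True)])   # ['provider-a']
print([p.name for p in eligible_providers(PROVIDERS, contains_phi=False)])  # ['provider-a', 'provider-b']
```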

These examples illustrate that a Unified LLM API is not merely a technical abstraction but a strategic enabler, allowing organizations to deploy more intelligent, resilient, and cost-effective AI solutions across their entire operational footprint.

VII. Choosing Your Unified LLM API Platform: Key Considerations

As the market for Unified LLM API platforms grows, selecting the right one becomes a critical decision for any organization aiming to leverage AI efficiently. The choice will profoundly impact your development velocity, operational costs, system reliability, and future scalability. It's not just about which models are supported, but how well the platform orchestrates them, the developer experience it offers, and its long-term viability.

Here are the key considerations when evaluating a Unified LLM API platform:

- Breadth of Multi-model support:

- Which LLMs are available? Does the platform support the leading models (OpenAI, Anthropic, Google, etc.) as well as relevant open-source alternatives?

- How quickly are new models integrated? The pace of LLM innovation is rapid; a good platform will keep up.

- Support for fine-tuned or custom models? Can you bring your own fine-tuned models or even integrate proprietary models through the unified API? This is crucial for domain-specific applications.

- Sophistication of LLM routing:

- What routing strategies are supported? Look for cost-based, latency-based, performance-based, capability-based, and failover routing as standard.

- How customizable is the routing logic? Can you define your own complex rules, or does it offer dynamic, AI-driven routing optimization?

- Granularity of routing? Can you route at the level of specific prompts, users, or application modules?

- Transparency: Does the platform provide insights into why a particular model was chosen for a request?

- Latency and Throughput:

- How fast is the platform itself? Does the abstraction layer add significant latency, or is it optimized so that the extra hop adds little overhead?

- Can it handle high volumes of requests? Evaluate its scalability and load-balancing capabilities under peak demand.

- Regional availability: Are there data centers close to your user base to minimize network latency?

- Pricing Model:

- Transparency: Is the pricing clear and predictable? Are there hidden costs?

- Flexibility: Does it offer pay-as-you-go, tiered pricing, or enterprise-level agreements? Can it dynamically route to cheaper models to keep costs down?

- Cost Tracking: Does it provide detailed analytics on costs per model, per request, or per user?

- Security and Compliance:

- Data privacy: How is your data handled? Is it logged, stored, or passed through? What are the data retention policies?

- Encryption: Is data encrypted in transit and at rest?

- Access control: Does it offer robust API key management, role-based access control (RBAC), and other enterprise-grade security features?

- Compliance certifications: Does it meet industry standards like SOC 2, ISO 27001, HIPAA, GDPR, etc., particularly if dealing with sensitive data?

- Developer Experience:

- SDKs and Libraries: Are there well-maintained SDKs for popular programming languages?

- Documentation: Is the documentation clear, comprehensive, and easy to follow, with examples?

- Monitoring and Analytics: Does it provide dashboards for usage, performance, errors, and cost?

- Community and Support: What kind of support channels are available (forums, dedicated support)?

- Observability and Analytics:

- Beyond basic usage, can you monitor model-specific performance, error rates, and latency?

- Are there tools to analyze prompt effectiveness across different models?

- Can you track costs down to individual API calls or user sessions?
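To make the cost-tracking questions above concrete, here is roughly what per-request cost accounting computes: token counts multiplied by per-model prices, aggregated by model and by user. The prices below are hypothetical placeholders (quoted per million tokens, a common convention), not any provider's actual rates.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1M tokens: (input, output).
PRICES: dict[str, tuple[float, float]] = {
    "fast-cheap-model": (0.50, 1.50),
    "premium-model": (10.00, 30.00),
}


class CostTracker:
    """Accumulate spend per model and per user from token counts."""

    def __init__(self, prices: dict[str, tuple[float, float]]) -> None:
        self.prices = prices
        self.by_model: dict[str, float] = defaultdict(float)
        self.by_user: dict[str, float] = defaultdict(float)

    def record(self, user: str, model: str, input_tokens: int, output_tokens: int) -> float:
        """Compute the cost of one call, add it to the running totals, and return it."""
        in_price, out_price = self.prices[model]
        cost = (input_tokens * in_price + output_tokens * out_price) / 1_000_000
        self.by_model[model] += cost
        self.by_user[user] += cost
        return cost


tracker = CostTracker(PRICES)
tracker.record("alice", "fast-cheap-model", 2_000, 500)  # about $0.00175
tracker.record("alice", "premium-model", 1_000, 1_000)   # about $0.04
print(round(tracker.by_user["alice"], 5))                # about 0.04175
```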

Introducing XRoute.AI: Your Partner in Simplified AI Development

In the competitive landscape of Unified LLM API platforms, XRoute.AI stands out as a cutting-edge solution meticulously designed to address the aforementioned considerations. It is a powerful unified API platform built to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts alike.

XRoute.AI distinguishes itself by offering a single, OpenAI-compatible endpoint. This means if you're already familiar with OpenAI's API, integrating with XRoute.AI is incredibly straightforward, dramatically simplifying your development process. Through this unified endpoint, XRoute.AI provides seamless multi-model support, granting access to an impressive array of over 60 AI models from more than 20 active providers. This extensive coverage includes leading models from OpenAI, Anthropic, Google, and many others, ensuring you always have the right tool for any task, mitigating vendor lock-in, and enabling advanced LLM routing strategies.

The platform places a strong emphasis on low latency AI and cost-effective AI, understanding that performance and budget are critical. Its intelligent LLM routing capabilities dynamically direct your requests to the optimal model based on real-time factors like cost, latency, and performance, ensuring you get the best possible outcome at the best possible price. With high throughput, robust scalability, and a flexible pricing model, XRoute.AI is designed to support projects of all sizes, from innovative startups to demanding enterprise-level applications. Its developer-friendly tools and comprehensive documentation further empower users to build intelligent solutions without the complexity of managing multiple API connections, solidifying its position as an ideal choice for the future of AI development.

VIII. Implementation Best Practices and Advanced Strategies

Successfully integrating and maximizing the benefits of a Unified LLM API requires more than just connecting to an endpoint. Thoughtful implementation, continuous monitoring, and strategic optimization are key to unlocking its full potential and ensuring your AI-powered applications are robust, efficient, and future-proof.

Start Small, Scale Smart

When introducing a Unified LLM API into your ecosystem, resist the urge to overhaul everything at once.

- Pilot Project Approach: Begin with a clearly defined, manageable pilot project. This could be a specific feature within an application (e.g., an internal summarization tool, a specific chatbot module).

- Gradual Model Integration: Start by integrating a few key LLMs that directly address your initial use case. Once comfortable, gradually expand your multi-model support as new requirements emerge or better models become available.

- Iterative Routing Rules: Start with simple, rule-based LLM routing (e.g., "always use model A for task X, model B for task Y"). As you gather data and gain confidence, progressively introduce more sophisticated, dynamic routing strategies that optimize for cost, latency, or performance.

Monitor and Optimize Your Routing Rules

LLM routing is not a set-and-forget mechanism; it requires continuous attention and optimization.

- Establish Key Performance Indicators (KPIs): Define what success looks like for your AI integrations. This might include:

- Cost per API call/token: To track budget adherence and identify opportunities for cost savings.

- Average latency: To ensure user-facing applications stay responsive.

- Output quality/accuracy: Using human evaluation or automated metrics.

- Failover success rate: To measure system resilience.

- Utilize Platform Analytics: Leverage the monitoring and analytics dashboards provided by your Unified LLM API platform (like XRoute.AI). These tools offer invaluable insights into how your models are performing, which routing rules are being triggered, and where inefficiencies or errors might lie.

- A/B Test Routing Strategies: Continuously experiment. Run A/B tests on different routing rules or model combinations for specific tasks. For example, direct 50% of creative writing prompts to GPT-4 and 50% to Claude 3, then compare the output quality and cost. This data-driven approach allows for ongoing refinement and optimization.
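The 50/50 split in the A/B example can be made deterministic by hashing a request or user ID, so the same user always sees the same arm. This is a sketch with placeholder arm names; logging and comparing per-arm quality and cost is left to your analytics pipeline.

```python
import hashlib


def assign_arm(
    request_id: str,
    arms: tuple[str, str] = ("model-a", "model-b"),
    share_a: float = 0.5,
) -> str:
    """Deterministically assign an ID to an arm; share_a is the fraction sent to arms[0]."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big")  # roughly uniform over [0, 2**64)
    threshold = share_a * 2**64                # int-vs-float comparison is exact in Python
    return arms[0] if value < threshold else arms[1]


counts = {"model-a": 0, "model-b": 0}
for i in range(1_000):
    counts[assign_arm(f"request-{i}")] += 1
print(counts)  # roughly an even split
```

Hashing rather than random sampling keeps assignments stable across retries and sessions, which avoids mixing both models' outputs for one user.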

Leverage Caching for Repetitive Tasks

Many LLM requests are repetitive, especially in applications with frequent common queries.

- Implement an intelligent caching layer: For prompts that are likely to generate the same or very similar responses (e.g., common FAQs, standard boilerplate text), cache the LLM's output. This dramatically reduces API calls, minimizes latency, and saves costs.

- Consider Cache Invalidation: Design a robust cache invalidation strategy to ensure that cached responses are updated when underlying information changes or models are updated.
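A minimal exact-match cache with time-based expiry might look like the sketch below. Including the model name in the cache key means a model switch naturally bypasses old entries, and `invalidate_all` covers changes to underlying information. The normalization (collapsing whitespace, lowercasing) is an assumption that suits FAQ-style prompts; matching merely similar prompts would require semantic comparison, which this sketch does not attempt.

```python
import hashlib
import time
from typing import Callable, Optional


class ResponseCache:
    """Exact-match cache for LLM responses with time-to-live expiry."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without waiting
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        normalized = " ".join(prompt.split()).lower()  # collapse whitespace, ignore case
        return hashlib.sha256(f"{model}\x00{normalized}".encode("utf-8")).hexdigest()

    def get(self, model: str, prompt: str) -> Optional[str]:
        key = self._key(model, prompt)
        entry = self._store.get(key)
        if entry is None:
            return None
        stored_at, response = entry
        if self.clock() - stored_at > self.ttl:  # expired: drop it and report a miss
            del self._store[key]
            return None
        return response

    def put(self, model: str, prompt: str, response: str) -> None:
        self._store[self._key(model, prompt)] = (self.clock(), response)

    def invalidate_all(self) -> None:
        """Call when the information behind cached answers changes."""
        self._store.clear()


now = [0.0]
cache = ResponseCache(ttl_seconds=3600, clock=lambda: now[0])
cache.put("faq-model", "What are your opening hours?", "9am-5pm, Mon-Fri.")
print(cache.get("faq-model", "what are your  opening hours?"))  # 9am-5pm, Mon-Fri.
now[0] = 7200.0
print(cache.get("faq-model", "What are your opening hours?"))   # None
```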

Security First: API Keys and Access Control

Integrating with multiple AI services means managing multiple points of access.

- Secure API Key Management: Store your LLM provider API keys securely within the Unified LLM API platform (or in a secure vault), not directly in your application code. Use environment variables and robust secrets management practices.

- Least Privilege Principle: Grant only the necessary permissions to your Unified LLM API platform for accessing various LLMs.

- Network Security: Ensure secure network configurations, including firewalls and private connections where possible, especially for enterprise deployments.

- Data Masking/Sanitization: If dealing with sensitive data, implement mechanisms to mask or sanitize Personally Identifiable Information (PII) before sending it to LLMs, particularly those not specifically certified for sensitive data handling.
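Two of the practices above, keeping keys out of source code and masking PII before it leaves your system, can be sketched in a few lines. The environment variable name `XROUTE_API_KEY` is an assumption, and the regular expressions are deliberately simple: they catch common email and phone formats only, can over-match other digit runs, and miss names, addresses, and other identifiers that a production masking step would need to handle.

```python
import os
import re

# Deliberately simple patterns (assumption): common email and phone formats only.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text: str) -> str:
    """Replace email addresses and phone-number-like strings with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def load_api_key(var: str = "XROUTE_API_KEY") -> str:
    """Read the API key from the environment instead of hard-coding it."""
    key = os.environ.get(var)
    if not key:
        raise RuntimeError(f"set the {var} environment variable")
    return key


print(mask_pii("Contact jane.doe@example.com or +1 (555) 123-4567."))
# Contact [EMAIL] or [PHONE].
```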

Staying Informed About Model Updates

The LLM landscape is dynamic, with models constantly being updated, fine-tuned, or replaced.

- Subscribe to Provider Updates: Stay informed about new model releases, deprecations, and API changes from your chosen LLM providers.

- Monitor Unified API Platform Updates: Your Unified LLM API provider will also regularly update its platform to integrate new models and enhance features. Keep abreast of these changes to leverage the latest capabilities and avoid compatibility issues.

- Plan for Migrations: Factor in potential model migrations or updates into your development roadmap. The beauty of a unified API is that these migrations are often much simpler, but they still require testing and validation.
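One way to keep migrations simple is to have application code refer to stable aliases and resolve them to concrete model IDs in one place. The alias names, model IDs, and deprecation table in this sketch are hypothetical.

```python
import warnings

# Hypothetical alias table: application-facing names -> concrete model IDs.
# Migrating to a new model means editing this table, not every call site.
MODEL_ALIASES = {
    "default-chat": "chat-model-v2",
    "summarizer": "summary-model-v1",
}
# Hypothetical deprecations: old concrete ID -> its replacement.
DEPRECATED = {"chat-model-v1": "chat-model-v2"}


def resolve_model(name: str) -> str:
    """Map an alias to a concrete model ID, upgrading deprecated IDs with a warning."""
    model = MODEL_ALIASES.get(name, name)
    if model in DEPRECATED:
        replacement = DEPRECATED[model]
        warnings.warn(
            f"{model} is deprecated; using {replacement}", DeprecationWarning, stacklevel=2
        )
        model = replacement
    return model


print(resolve_model("default-chat"))  # chat-model-v2
```

Centralizing the mapping does not remove the need to test a new model; it only confines the code change to one table.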

By adhering to these best practices, organizations can build highly performant, resilient, and adaptable AI applications that not only deliver significant business value today but are also well-positioned to evolve with the rapid advancements in the field of artificial intelligence.

IX. The Road Ahead: The Future of Unified LLM APIs

The journey of Unified LLM API platforms is only just beginning. As large language models become more ubiquitous, specialized, and integral to business operations, the need for intelligent orchestration layers will grow accordingly. The future of these platforms promises even greater sophistication, deeper integration, and more autonomous capabilities, shaping the very architecture of AI-powered applications.

Increasing Specialization and Hybrid Architectures

While current Unified LLM APIs excel at routing to general-purpose models, the future will see a significant increase in the integration of highly specialized, domain-specific models.

- Hyper-Specialized Models: Expect to see models trained exclusively on medical texts, legal precedents, financial data, or scientific papers becoming first-class citizens within unified platforms. LLM routing will evolve to recognize subtle semantic cues in prompts, directing them to the precise specialist model required.

- Hybrid Architectures: The distinction between traditional LLMs and other AI modalities will blur. Unified APIs will seamlessly integrate not just different LLMs but also other AI services like image generation models (diffusion models), voice synthesis, knowledge graphs, and even traditional machine learning models. This will enable complex, multi-stage AI workflows where a single API call orchestrates a sequence of diverse AI tasks. Imagine a single prompt generating an image, describing it in multiple languages, and then crafting social media posts for each.

Enhanced Autonomous Agent Orchestration

The rise of AI agents, capable of complex, multi-step reasoning and interaction with external tools, will push Unified LLM APIs to new levels of intelligence.

- Advanced Agent Routing: Instead of just routing a single prompt, unified platforms will route entire agentic workflows. This involves choosing the best LLM to act as the "brain" for an agent, considering its planning capabilities, tool-use proficiency, and reasoning power.

- Dynamic Tool Selection: Future platforms might not only choose the LLM but also dynamically select which external tools (e.g., search engines, databases, calculators, proprietary APIs) the LLM agent should leverage for a given task, based on the prompt and desired outcome.

- Self-Optimizing Workflows: Imagine a Unified LLM API that can observe the performance of an AI agent, identify bottlenecks or suboptimal model choices, and automatically reconfigure the agent's workflow or switch underlying LLMs to improve efficiency or accuracy without human intervention. This would extend latency and cost optimization from individual requests to the system as a whole.

Broader Ecosystem Integration

The value of a Unified LLM API will be amplified by its ability to integrate seamlessly with existing enterprise systems and developer tools.

- Enterprise System Connectors: Deeper integrations with CRM, ERP, data warehouses, and identity management systems will be standard, making it easier for businesses to embed AI into their core operations.

- Observability and MLOps Tools: Tight integration with MLOps platforms, logging services, and monitoring tools will provide end-to-end visibility into the entire AI pipeline, from prompt to response, across all models and routing decisions.

- Low-Code/No-Code Platforms: As AI becomes more accessible, Unified LLM APIs will be crucial backbones for low-code/no-code platforms, enabling citizen developers to build sophisticated AI applications by simply dragging and dropping components that inherently leverage multi-model, routed intelligence.

Ethical AI and Governance Through Unified Platforms

As AI becomes more powerful, ethical considerations and robust governance frameworks are paramount. Unified LLM APIs can play a crucial role in enforcing these.

- Centralized Policy Enforcement: Future platforms could allow organizations to centrally define and enforce ethical guidelines, content moderation policies, and data privacy rules across all LLMs accessed through the API.

- Bias Detection and Mitigation: Routing rules could be designed to identify and mitigate biases by routing certain types of prompts to models known for their fairness or by passing outputs through bias-checking filters.

- Traceability and Auditability: The unified platform, by acting as a central hub, can provide comprehensive logs and audit trails, showing which model processed which request, with what inputs, and what the output was, which is critical for compliance and accountability.

In conclusion, the evolution of Unified LLM APIs will parallel the rapid advancements in AI itself. They will transform from mere API aggregators into intelligent, autonomous orchestration layers that are fundamental to building scalable, reliable, ethical, and highly effective AI solutions across every facet of technology and business. The future is one where developers are empowered not by a single powerful model, but by an intelligently managed collective, accessible through a single, powerful gateway.

X. Conclusion: Embracing the Future of AI Development with Unity

The landscape of artificial intelligence, particularly concerning large language models, is one of exhilarating innovation coupled with increasing complexity. The initial enthusiasm around a handful of pioneering LLMs has matured into a nuanced understanding that no single model is a universal solution. Instead, the power lies in leveraging the diverse strengths of many. However, this diversification brought with it the formidable challenge of API sprawl, integration headaches, and the constant struggle to optimize for cost, performance, and reliability.

This is where the Unified LLM API emerges not just as a convenience, but as an indispensable architectural component for modern AI development. We’ve explored how these platforms act as intelligent conduits, abstracting away the myriad differences between various LLMs and presenting developers with a single, consistent interface. At their core, they embody two transformative capabilities: multi-model support and LLM routing.

Multi-model support empowers businesses to escape vendor lock-in, tap into the specialized capabilities of different LLMs (whether for creativity, factual recall, or code generation), and ensure resilience against service outages. It allows for a "best-of-breed" approach, where the right tool is always available for the right job, fostering agility and continuous innovation.

Complementing this, LLM routing is the intelligent brain of the operation. It dynamically directs requests to the optimal model based on criteria such as cost, latency, accuracy, or specific capabilities. This intelligent orchestration ensures that resources are allocated efficiently, minimizing operational expenses while maximizing output quality and responsiveness. The tangible benefits are clear: faster development cycles, significant cost savings, enhanced reliability through redundancy, superior performance, and, crucially, a future-proofed AI infrastructure capable of adapting to the relentless pace of technological change.

Platforms like XRoute.AI exemplify this vision, offering a cutting-edge unified API platform that simplifies access to over 60 LLMs via a single, OpenAI-compatible endpoint. By focusing on low latency AI, cost-effective AI, and developer-friendly tools, XRoute.AI empowers teams to build sophisticated AI applications, chatbots, and automated workflows without the complexities of managing numerous API connections.

The inevitable shift towards unified solutions is driven by a practical imperative: to simplify, optimize, and scale AI. For developers and businesses navigating the intricate world of large language models, embracing a Unified LLM API is no longer an optional enhancement; it is a strategic imperative. It empowers them to move beyond the plumbing of integration and truly focus on building smarter, faster, and more impactful AI-driven innovations that will define the next generation of digital experiences. The future of AI development is unified, efficient, and brilliantly orchestrated.

XI. FAQ (Frequently Asked Questions)

Q1: What is the primary benefit of using a Unified LLM API? A1: The primary benefit is vastly simplified development and integration. Instead of managing multiple APIs from different LLM providers, you interact with a single, consistent API endpoint. This reduces complexity, accelerates development cycles, lowers maintenance overhead, and enables seamless multi-model support and LLM routing for optimized performance and cost.

Q2: How does LLM routing save costs? A2: LLM routing saves costs by intelligently directing requests to the most cost-effective AI model that can still meet the required quality and performance standards. For example, simpler tasks might be routed to cheaper models, while more complex or critical tasks are handled by premium, more expensive models. This dynamic optimization ensures you're not overpaying for routine inferences.

Q3: Can a Unified LLM API support proprietary or fine-tuned models? A3: Many advanced Unified LLM API platforms, like XRoute.AI, are designed to be flexible. While they primarily offer access to public, commercially available models, some platforms do provide mechanisms to integrate and route requests to your own proprietary, fine-tuned, or privately hosted models, often through custom connectors or private endpoint configurations.

Q4: Is a Unified LLM API suitable for small projects or just enterprises? A4: While enterprises gain significant benefits from managing complex multi-model environments, a Unified LLM API is also highly beneficial for small projects and startups. It lowers the barrier to entry for leveraging advanced AI, allows for quick experimentation with different models, and helps manage latency and costs from the outset, enabling small teams to build scalable and future-proof AI applications efficiently.

Q5: How does XRoute.AI differentiate itself in the Unified LLM API space? A5: XRoute.AI differentiates itself as a cutting-edge unified API platform by providing a single, OpenAI-compatible endpoint for over 60 AI models from more than 20 providers, ensuring broad multi-model support. It prioritizes low latency AI and cost-effective AI through intelligent LLM routing capabilities, high throughput, and flexible pricing. Its focus on developer-friendly tools simplifies integration, empowering users to build scalable AI solutions without managing multiple complex API connections.

🚀 You can start using XRoute.AI's unified API in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.
2. Upon registration, explore the platform.
3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

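```bash
# Assumes the shell variable apikey holds your XRoute API KEY.
# The Authorization header uses double quotes so the shell expands $apikey;
# inside single quotes it would be sent literally.
curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
  --header "Authorization: Bearer $apikey" \
  --header 'Content-Type: application/json' \
  --data '{
    "model": "gpt-5",
    "messages": [
      {
        "content": "Your text prompt here",
        "role": "user"
      }
    ]
  }'
```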
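For applications written in Python, the same request can be built with only the standard library. This sketch mirrors the curl call above (same endpoint, headers, and body) and keeps building the request separate from sending it, so the request can be inspected without network access. The environment variable name `XROUTE_API_KEY` is an assumption.

```python
import json
import os
import urllib.request
from typing import Optional

# Endpoint taken from the curl example.
URL = "https://api.xroute.ai/openai/v1/chat/completions"


def build_chat_request(
    prompt: str, model: str = "gpt-5", api_key: Optional[str] = None
) -> urllib.request.Request:
    """Build (but do not send) the same POST request as the curl example."""
    key = api_key or os.environ["XROUTE_API_KEY"]  # env var name is an assumption
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

Sending would then look like `send(build_chat_request("Your text prompt here"))`. Because the endpoint is OpenAI-compatible, the reply text would be expected at `["choices"][0]["message"]["content"]` of the decoded response.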

With this setup, your application can instantly connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput (handling 891.82K tokens per month globally). XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.