What AI API is Free? Top Picks for Developers

The rapid advancement of Artificial Intelligence has ushered in an era where intelligent capabilities are no longer confined to academic labs but are readily accessible to developers worldwide. From natural language processing and computer vision to speech recognition and generative AI, the tools to build groundbreaking applications are more powerful and user-friendly than ever before. At the heart of this accessibility are AI APIs (Application Programming Interfaces) – gateways that allow developers to integrate complex AI models into their own software with just a few lines of code.

However, as the demand for AI grows, so do curiosity and concern about its cost. Startups, individual developers, researchers, and hobbyists often find themselves asking, "What AI API is free?", or searching for a "free AI API." For those looking to scale or optimize their budgets, the question quickly becomes "What is the cheapest LLM API?" This comprehensive guide aims to demystify the landscape of free and cost-effective AI APIs, offering practical insights and top picks for developers looking to harness AI's power without breaking the bank.

The Nuance of "Free" in AI APIs: Understanding Free Tiers and Open-Source Models

Before diving into specific recommendations, it's crucial to understand what "free" truly means in the context of AI APIs. In most cases, a "free AI API" doesn't imply an unlimited, perpetually free service for heavy commercial use. Instead, it typically refers to one of three scenarios:

- Free Tiers/Credits: Many commercial AI API providers offer a "free tier" or initial free credits to new users. This allows developers to experiment, prototype, and conduct small-scale testing without incurring immediate costs. These free tiers usually come with specific usage limits (e.g., a certain number of requests per month, a maximum token count for LLMs, or a time limit). Exceeding these limits transitions the usage into a paid model.

- Community or Research-Oriented Access: Some platforms, particularly those focused on open science or academic research, provide free access to their AI models or APIs for non-commercial use, often with generous but still capped limits.

- Open-Source Models via Third-Party Platforms: While the AI model itself might be open-source (meaning its weights, and usually its code, are publicly released), hosting and running that model still incurs infrastructure costs. However, some platforms or community initiatives offer free inference endpoints for popular open-source models, again usually with rate limits or only for non-commercial purposes. Alternatively, developers can self-host open-source models, where "free" refers to the model license, but the cost shifts to hardware and operational expenses.

Understanding these distinctions is vital for setting realistic expectations and planning your development journey. The goal for many developers is to find APIs that offer sufficient "free" usage to validate an idea, build a proof-of-concept, or support a small, non-commercial project, then transition gracefully to a cost-effective solution as their needs grow.

Why Developers Seek Free and Cheap AI APIs

The motivations behind seeking free or low-cost AI APIs are multifaceted and often intersect with the typical developer workflow:

- Prototyping and Experimentation: For initial ideation and rapid prototyping, developers need tools that allow them to test concepts quickly without financial commitment. Free tiers are perfect for this "build fast, break fast" approach.

- Learning and Skill Development: As AI fields evolve rapidly, developers need hands-on experience with various models and APIs. Free access lowers the barrier to entry, enabling learning and experimentation with new technologies like large language models or advanced computer vision techniques.

- Budget Constraints: Startups, independent developers, and students often operate on tight budgets. Free and cheap APIs are essential for launching projects without significant upfront investment.

- Non-Commercial Projects and Hobbies: For personal projects, open-source contributions, or academic research where monetization isn't the primary goal, free resources are a godsend.

- Comparative Analysis: Developers often evaluate multiple AI APIs to determine which one best fits their specific use case in terms of performance, accuracy, and cost. Free tiers facilitate this comparison without financial risk.

- Cost Optimization for Scale: Even established businesses constantly look for ways to optimize operational costs. Identifying the "cheapest LLM API" for specific tasks can lead to substantial savings at scale.

Top Picks for Free AI APIs: A Developer's Toolkit

Let's explore some of the most accessible and generous free AI API offerings across various AI domains.

1. Natural Language Processing (NLP) and Large Language Models (LLMs)

NLP is arguably the most in-demand AI capability, encompassing tasks from text generation and sentiment analysis to translation and summarization. LLMs are at the forefront of this revolution.

a. Hugging Face Transformers & Inference API

- What it offers: Hugging Face is an unparalleled hub for open-source machine learning models, particularly for NLP and, more recently, multimodal AI. Their transformers library allows developers to access thousands of pre-trained models. Crucially, their Inference API provides a way to run many of these models directly, often with a generous free tier for non-commercial use or small-scale prototyping.

- Free Tier/Access: Many models on the Hugging Face Hub can be tested directly in your browser. For programmatic access, their free tier allows a limited number of inference calls. For heavier use, they offer paid plans or the option to self-host models on your own infrastructure.

- Use Cases: Text generation, summarization, translation, sentiment analysis, named entity recognition, question answering, text classification, and more. It's an excellent resource for experimenting with cutting-edge LLMs and other NLP models without managing infrastructure.

- Why it's great: Unmatched breadth of models, strong community support, and the ability to fine-tune and share models. It democratizes access to state-of-the-art NLP.

b. Google Cloud AI Platform (Free Tier)

- What it offers: Google Cloud provides a suite of powerful AI services, including Natural Language, Translation, Vision AI, Speech-to-Text, and Text-to-Speech. Many of these services come with a monthly free tier.

- Free Tier/Access:
  - Natural Language API: Free for 5,000 units/month for features like syntax, sentiment, entity, and content classification.
  - Translation API: Free for 500,000 characters/month.
  - Speech-to-Text API: Free for 60 minutes/month.
  - Text-to-Speech API: Free for 1 million characters/month for WaveNet voices (standard voices get 4 million).
  - Vision AI: Free for 1,000 units/month for features like label detection, OCR, and face detection.

- Use Cases: Adding intelligent text analysis, language translation, voice interfaces, or image analysis to your applications.

- Why it's great: Part of a robust cloud ecosystem, high accuracy, and integrated with other Google Cloud services. The free tiers are substantial enough for many learning and small project needs.
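Staying inside these allowances is mostly bookkeeping. A minimal sketch in Python, assuming you collect monthly usage yourself (for example, from the Cloud Console billing reports); the metric names and the 80% warning threshold are our own choices, and the allowances are the figures listed above, which Google can change at any time.

```python
# Monthly free allowances quoted above for Google Cloud AI APIs (verify current values).
FREE_TIER = {
    "natural_language_units": 5_000,
    "translation_characters": 500_000,
    "speech_to_text_minutes": 60,
    "text_to_speech_characters": 1_000_000,
    "vision_units": 1_000,
}


def billable_overage(usage: dict[str, float]) -> dict[str, float]:
    """Return how much of each metric exceeds its free allowance (0.0 if within it)."""
    overage = {}
    for metric, used in usage.items():
        if metric not in FREE_TIER:
            raise KeyError(f"unknown metric: {metric}")
        overage[metric] = float(max(0, used - FREE_TIER[metric]))
    return overage


def near_limit(usage: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Metrics that have consumed at least `threshold` of their free allowance."""
    return sorted(m for m, used in usage.items() if used >= threshold * FREE_TIER[m])


if __name__ == "__main__":
    month = {"translation_characters": 620_000, "vision_units": 850}
    print(billable_overage(month))  # {'translation_characters': 120000.0, 'vision_units': 0.0}
    print(near_limit(month))        # ['translation_characters', 'vision_units']
```

Running near_limit on a schedule and alerting on its output is a cheap complement to the provider's own budget alerts.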

c. OpenAI (Free Credits for New Users)

- What it offers: While not a perpetually free AI API, OpenAI has offered initial free credits to new users who sign up for its platform. These credits can be used to experiment with its models, including GPT-3.5 Turbo, GPT-4 and GPT-4o, DALL-E (image generation), and Whisper (speech-to-text).

- Free Tier/Access: New users have typically received a small amount of free credit (e.g., $5 or $18 at different times) that expires after a few months. The offer changes and may not be available to every account, so check the current terms. When available, it is enough for meaningful experimentation and prototyping, especially with more cost-effective models like gpt-3.5-turbo.

- Use Cases: Advanced text generation, complex reasoning, code generation, summarization, creative writing, image generation from text, and highly accurate speech transcription.

- Why it's great: Access to industry-leading models that set benchmarks for performance and capability. It's an essential platform for anyone serious about building AI applications.

d. Cohere (Free Tier)

- What it offers: Cohere focuses on enterprise-grade NLP models for generation, understanding, and search. They offer models like Command (for generation) and Embed (for embeddings).

- Free Tier/Access: Cohere provides a generous free tier for developers, which allows a significant number of requests per month for non-commercial and prototyping use. Specific limits vary by model but are designed to allow ample experimentation.

- Use Cases: Building sophisticated chatbots, content generation, semantic search, text classification, and understanding user intent.

- Why it's great: Strong focus on practical enterprise applications, excellent documentation, and robust models designed for high-quality text processing.

e. Mistral AI (Open Models via Community Platforms)

- What it offers: Mistral AI has quickly emerged as a formidable competitor in the LLM space, known for powerful yet efficient open-source models such as Mistral 7B and Mixtral 8x7B. Its flagship Mistral Large is available only through its commercial API, but the open models can be accessed for free via community platforms or self-hosted.

- Free Tier/Access:
  - Hugging Face: Many Mistral models are available on Hugging Face, often with free inference API endpoints for limited use.
  - Local Deployment: Developers can download and run Mistral's open-source models on their own hardware, effectively making the API "free" (aside from hardware and electricity costs). Tools like Ollama simplify this.

- Use Cases: Text generation, coding assistance, summarization, chat applications, and tasks requiring strong reasoning capabilities, often with better efficiency than larger models.

- Why it's great: High performance for their size, open-source flexibility, and growing community support. They offer an excellent balance of capability and resource efficiency.
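For the local route, Ollama exposes pulled models through a small HTTP API on localhost. A minimal sketch using only Python's standard library, assuming Ollama is running on its default port (11434) and the model was fetched beforehand with "ollama pull mistral":

```python
import json
import urllib.request

# Ollama's default local endpoint; change it if the server runs elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(prompt: str, model: str = "mistral") -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "mistral", timeout: float = 120.0) -> str:
    """Send the request to the local server and return the generated text."""
    with urllib.request.urlopen(build_generate_request(prompt, model), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Calling generate("Explain a context window in one sentence.") returns the text. Setting "stream" to False makes Ollama return a single JSON object whose response field holds the whole answer, instead of a stream of partial chunks.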

2. Computer Vision APIs

Computer vision APIs allow applications to "see" and interpret images and videos.

a. Google Cloud Vision AI (Free Tier)

- What it offers: Google's Vision AI API enables applications to understand the content of an image, performing tasks like label detection, optical character recognition (OCR), face detection, landmark detection, and safe search detection.

- Free Tier/Access: Provides 1,000 units/month for common features (label detection, landmark detection, OCR, face detection, and SafeSearch detection of explicit content).

- Use Cases: Image content moderation, automated tagging of photos, text extraction from images, detecting faces in photos (Vision AI locates faces but does not identify individuals), and building smart photo galleries.

- Why it's great: Highly accurate and comprehensive, integrated with Google's vast knowledge graph, and easy to use.

b. AWS Rekognition (Free Tier)

- What it offers: Amazon Rekognition offers advanced image and video analysis, including object and scene detection, facial analysis, celebrity recognition, unsafe content detection, and text detection in images.

- Free Tier/Access: Provides 5,000 images/month for image analysis (object, scene, and facial analysis) and 5000 minutes/month for video analysis (streaming video events).

- Use Cases: Content moderation for user-uploaded images/videos, identity verification, retail analytics, and creating searchable video archives.

- Why it's great: Scalable, integrated with the AWS ecosystem, and offers both image and video analysis capabilities.

3. Speech APIs

Speech APIs convert spoken language to text (Speech-to-Text) and text to spoken language (Text-to-Speech).

a. Google Cloud Speech-to-Text (Free Tier)

- What it offers: Highly accurate speech recognition across various languages and dialects, capable of transcribing both real-time audio and pre-recorded files.

- Free Tier/Access: Free for 60 minutes of audio processing per month.

- Use Cases: Voice assistants, call center analytics, dictation software, transcribing meetings, and adding voice search to applications.

- Why it's great: Excellent accuracy, support for many languages, and features like speaker diarization.

b. AWS Polly (Free Tier)

- What it offers: Amazon Polly turns text into lifelike speech, offering a wide selection of natural-sounding voices across many languages.

- Free Tier/Access: Provides 5 million characters per month for standard voices and 1 million characters per month for neural voices, for the first 12 months.

- Use Cases: Creating audio content, voice user interfaces, accessibility features for applications, and voice branding.

- Why it's great: High-quality, natural-sounding voices, extensive language support, and integration with other AWS services.

4. General Purpose AI and Machine Learning Platforms

These platforms offer a broader range of AI capabilities or tools to build and deploy custom models.

a. TensorFlow.js / PyTorch Mobile

- What it offers: These are not "APIs" in the traditional sense but rather libraries that allow you to run machine learning models directly in web browsers (TensorFlow.js) or on mobile devices (PyTorch Mobile). Many pre-trained models are available.

- Free Tier/Access: Entirely free, as the computation happens on the user's device. The "cost" is the model download size and client-side processing power.

- Use Cases: Client-side inference for real-time applications (e.g., augmented reality filters, local voice commands, image classification without cloud roundtrips), ensuring data privacy, and reducing server costs.

- Why it's great: Ultimate privacy and control, no server-side costs, and enables offline AI capabilities.

What is the Cheapest LLM API? Strategies for Cost-Effective AI

Once you've outgrown the free tiers or need to deploy AI commercially, cost becomes a significant factor. For Large Language Models (LLMs), pricing can vary dramatically based on several factors. The question, "what is the cheapest llm api?" doesn't have a single answer, as the "cheapest" solution depends heavily on your specific use case, required performance, and usage patterns.

Key Factors Influencing LLM API Cost

- Token Count (Input vs. Output): Most LLM APIs charge per token. A token can be a word, a part of a word, or punctuation. Crucially, input tokens (your prompt) and output tokens (the model's response) are often priced differently, with output tokens typically being more expensive.

- Strategy: Optimize prompts to be concise, minimize unnecessary context, and specify desired output formats to reduce token usage. Cache common responses where appropriate.

- Model Size and Capability: Larger, more capable models (e.g., GPT-4, Claude 3 Opus) are significantly more expensive per token than smaller, faster models (e.g., GPT-3.5 Turbo, Claude 3 Haiku, Gemini 1.5 Flash).

- Strategy: Always choose the smallest model that can effectively accomplish your task. Don't use a GPT-4 level model for simple text summarization if GPT-3.5 Turbo suffices.

- Context Window Size: Models with larger context windows (the amount of text they can process at once) might seem more expensive, but they can handle more complex, longer prompts, potentially reducing the need for multiple API calls.

- Strategy: Evaluate if a larger context window prevents multiple API calls, which might be cheaper overall for complex tasks.

- API Provider: Different providers have different pricing strategies and competitive rates.

- Region/Latency: While less common for direct token pricing, some providers might have regional pricing or latency considerations that indirectly impact the overall cost of operations if you're deploying globally.

- Usage Volume and Tiers: Many providers offer volume discounts or tiered pricing, where the cost per token decreases as your usage increases.

- Strategy: Monitor your usage and consider negotiating custom plans if your volume is very high.
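To see how these factors combine, here is a rough per-request estimate in Python. It assumes the common rule of thumb of about four characters per token for English text (your provider's tokenizer gives exact counts) and that prompt and response share the context window; the prices in the example are the gpt-3.5-turbo figures quoted in this article and are only illustrative.

```python
# Rule of thumb for English text: about 4 characters per token.
# For exact counts use the provider's tokenizer (e.g. tiktoken for OpenAI models).
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count (0 for empty text, else at least 1)."""
    if not text:
        return 0
    return max(1, round(len(text) / CHARS_PER_TOKEN))


def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one call when input and output tokens are priced per 1M tokens."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


def fits_context(input_tokens: int, max_output_tokens: int, context_window: int) -> bool:
    """Assumes prompt and completion share the window, as with most chat models."""
    return input_tokens + max_output_tokens <= context_window


if __name__ == "__main__":
    # Illustrative prices: $0.50 in / $1.50 out per 1M tokens (gpt-3.5-turbo-like).
    long_prompt = request_cost(2_000, 500, 0.50, 1.50)
    short_prompt = request_cost(1_000, 500, 0.50, 1.50)
    print(f"per call: ${long_prompt:.5f} vs ${short_prompt:.5f}")
    print(f"per 100k calls: ${long_prompt * 100_000:.2f} vs ${short_prompt * 100_000:.2f}")
```

In this example, halving the prompt from 2,000 to 1,000 tokens lowers the cost of 100,000 calls from $175 to $125; the 500 output tokens, priced three times higher per token, account for $75 of what remains.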

Strategies for Cost Reduction with LLMs

- Model Tiering: Implement a strategy where your application tries the cheapest suitable model first (e.g., gpt-3.5-turbo) and only falls back to a more expensive, powerful model (e.g., gpt-4o) if the simpler model fails or the task explicitly requires advanced capabilities.

- Prompt Engineering for Efficiency:
  - Few-shot Learning: Instead of asking an LLM to "figure out" a task, include a few examples in the prompt to guide its behavior. The examples add input tokens, but they often produce a usable answer on the first attempt, avoiding retries and rambling output.
  - Output Constraints: Specify clear output formats (e.g., "return only JSON," "give a one-sentence answer") to prevent verbose, unnecessary output tokens.
  - Token Estimation: Use libraries or tools (such as OpenAI's tiktoken tokenizer) to estimate token counts before sending requests, especially for long prompts or expected long responses.

- Batching and Caching:
  - Batching: For many independent requests, a provider's batch interface, where one exists, can be cheaper than individual calls. OpenAI's Batch API, for example, charges 50% less in exchange for results within 24 hours.
  - Caching: For static or frequently requested information, cache the LLM's responses. Don't regenerate content that hasn't changed.

- Fine-tuning (Long-term Strategy): For highly specific, repetitive tasks, fine-tuning a smaller model on your custom data can make it perform better than a general-purpose larger model, often at a lower inference cost per token. However, fine-tuning itself involves costs.

- Open-Source Models (Self-hosting): If you have the expertise and infrastructure, self-hosting open-source LLMs (like Llama 3, Mistral, Gemma) on your own hardware or cloud instances can be extremely cost-effective for high-volume usage, shifting the cost from per-token API fees to fixed infrastructure costs.
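The tiering and caching strategies above combine naturally. A minimal sketch in Python, assuming a hypothetical call_model(model, prompt) function that wraps whichever SDK you use, plus a validator that decides whether an answer is good enough (here, "is it valid JSON?", matching the "return only JSON" output constraint). Model names are illustrative, and the cache is an in-memory dict; use a persistent store if responses should survive restarts.

```python
import hashlib
import json
from typing import Callable

# Hypothetical signature: call_model(model_name, prompt) -> response text.
CallModel = Callable[[str, str], str]


class TieredClient:
    """Try cheaper models first, escalate only when the output fails validation,
    and cache accepted answers so identical prompts are not paid for twice."""

    def __init__(self, call_model: CallModel, tiers: list[str],
                 is_acceptable: Callable[[str], bool]):
        self.call_model = call_model
        self.tiers = tiers                  # ordered cheapest -> most capable
        self.is_acceptable = is_acceptable
        self.cache: dict[str, str] = {}

    @staticmethod
    def _key(prompt: str) -> str:
        return hashlib.sha256(prompt.encode("utf-8")).hexdigest()

    def complete(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self.cache:
            return self.cache[key]          # cache hit: no API call, no cost
        last = ""
        for model in self.tiers:
            last = self.call_model(model, prompt)
            if self.is_acceptable(last):
                self.cache[key] = last      # only cache answers that passed validation
                return last
        return last                         # every tier failed; the caller decides what to do


def is_json(text: str) -> bool:
    """Example validator for prompts that ask the model to return only JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False
```

A cheap model that handles most requests keeps the average cost close to its price, while the fallback preserves quality for the requests it cannot handle.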

Comparing Pricing for Popular LLM APIs

Let's look at a comparative table of some popular LLM APIs, focusing on their most cost-effective models. Note: Pricing is subject to change and should always be verified on the provider's official website.

| LLM Provider | Model Name | Input Price (per 1M tokens) | Output Price (per 1M tokens) | Context Window | Key Strengths |
|---|---|---|---|---|---|
| OpenAI | gpt-3.5-turbo | ~$0.50 | ~$1.50 | 16K | Fast, cost-effective for many tasks, good generalist. |
| OpenAI | gpt-4o | ~$5.00 | ~$15.00 | 128K | Advanced reasoning, multimodal, higher quality. |
| Anthropic | Claude 3 Haiku | ~$0.25 | ~$1.25 | 200K | Extremely fast, compact, large context for its cost. |
| Anthropic | Claude 3 Sonnet | ~$3.00 | ~$15.00 | 200K | Balanced intelligence and speed for enterprise. |
| Google | Gemini 1.5 Flash | ~$0.35 | ~$1.05 | 1M | Very large context window, cost-optimized, multimodal. |
| Google | Gemini 1.5 Pro | ~$3.50 | ~$10.50 | 1M | Enhanced reasoning, multimodal, for more complex tasks. |
| Mistral AI | Mistral Tiny | ~$0.14 | ~$0.42 | 32K | Very low cost, good for simpler tasks, efficient. |
| Mistral AI | Mistral Large | ~$8.00 | ~$24.00 | 32K | Top-tier performance, strong reasoning, multilingual. |
| Meta | Llama 3 8B (via API)* | Varies by host | Varies by host | 8K | Open-source, strong performance for its size. |

Note on Llama 3: As an open-source model, its API cost depends on the hosting provider (e.g., AWS, Azure, Hugging Face, or self-hosting). These costs typically reflect the underlying infrastructure usage rather than a direct per-token fee from Meta itself.

From this table, models like Claude 3 Haiku, Gemini 1.5 Flash, and Mistral Tiny clearly stand out as contenders for the "cheapest LLM API" title for basic tasks, offering excellent performance for their price point. However, if your task requires advanced reasoning or multimodal capabilities, the higher-tier models from OpenAI, Anthropic, or Google might be more cost-effective in terms of achieving the desired outcome even if their per-token cost is higher. The true "cheapest" is often a function of cost per unit of valuable output.
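One way to make "cost per unit of valuable output" concrete: if you can detect a bad answer and retry, the expected number of attempts per good answer is 1 divided by the success rate, so the expected spend is the per-attempt cost divided by that rate. The sketch below applies this to the Claude 3 Haiku and Claude 3 Sonnet prices from the table; the token counts and success rates are invented placeholders, and it ignores costs such as latency or human review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    name: str
    input_per_m: float   # dollars per 1M input tokens
    output_per_m: float  # dollars per 1M output tokens


def cost_per_attempt(m: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    return (input_tokens * m.input_per_m + output_tokens * m.output_per_m) / 1_000_000


def cost_per_success(m: ModelPrice, input_tokens: int, output_tokens: int,
                     success_rate: float) -> float:
    """Expected spend per acceptable answer when failures are detected and retried.
    With independent attempts, the expected number of attempts is 1 / success_rate."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt(m, input_tokens, output_tokens) / success_rate


HAIKU = ModelPrice("Claude 3 Haiku", 0.25, 1.25)
SONNET = ModelPrice("Claude 3 Sonnet", 3.00, 15.00)

if __name__ == "__main__":
    # Success rates are made-up placeholders; measure your own on real tasks.
    for task, (p_haiku, p_sonnet) in {"easy": (0.90, 0.98), "hard": (0.05, 0.90)}.items():
        haiku = cost_per_success(HAIKU, 1_000, 300, p_haiku)
        sonnet = cost_per_success(SONNET, 1_000, 300, p_sonnet)
        print(f"{task}: Haiku ${haiku:.5f} vs Sonnet ${sonnet:.5f} per good answer")
```

With these placeholder numbers, Haiku wins the "easy" task by an order of magnitude, while on the "hard" task Sonnet costs about $0.0083 per good answer against Haiku's $0.0125.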

The Role of Unified AI API Platforms: Optimizing Access and Cost

Navigating the myriad of AI APIs, each with its own pricing, documentation, and latency profiles, can be daunting. Developers often find themselves juggling multiple integrations to find the optimal balance of cost, performance, and model availability. This is where unified API platforms become invaluable. They abstract away the complexity of integrating with individual providers, offering a single endpoint to access a diverse range of AI models.

For instance, XRoute.AI is a cutting-edge unified API platform designed to streamline access to large language models (LLMs) for developers, businesses, and AI enthusiasts. By providing a single, OpenAI-compatible endpoint, XRoute.AI simplifies the integration of over 60 AI models from more than 20 active providers, enabling seamless development of AI-driven applications, chatbots, and automated workflows. With a focus on low latency AI, cost-effective AI, and developer-friendly tools, XRoute.AI empowers users to build intelligent solutions without the complexity of managing multiple API connections. The platform’s high throughput, scalability, and flexible pricing model make it an ideal choice for projects of all sizes, from startups to enterprise-level applications, effectively helping developers find the "cheapest LLM API" by allowing dynamic routing and model selection based on performance and cost criteria. Such platforms reduce development overhead, mitigate vendor lock-in, and provide a centralized way to monitor and optimize AI API usage and costs across different models and providers.


Best Practices for Using Free and Cheap AI APIs

To make the most of free tiers and cost-effective solutions, developers should adopt several best practices:

- Read the Terms of Service Carefully: Understand the limits of free tiers, usage policies, and what happens when you exceed those limits. Some free tiers may prohibit commercial use.

- Monitor Your Usage: Most API providers offer dashboards to track your consumption. Regularly check these dashboards to ensure you stay within free limits or manage your paid usage effectively. Set up alerts for approaching thresholds.

- Implement Rate Limiting and Backoff: Protect your application from accidentally making too many requests and incurring unexpected costs or hitting API rate limits. Implement exponential backoff for retries.

- Secure Your API Keys: Treat API keys like passwords. Do not hardcode them in your client-side code, commit them to public repositories, or share them unnecessarily. Use environment variables or secure key management services.

- Start Small and Iterate: Begin with the cheapest, simplest model that can potentially solve your problem. Only upgrade to more powerful or expensive models if the task truly demands it.

- Containerize and Self-Host When Appropriate: For open-source models, if your usage is high enough to justify the overhead, containerizing (e.g., with Docker) and self-hosting can be a cost-effective long-term strategy, especially for models like Llama 3 or Mistral.

- Optimize Network Traffic: If you're paying for data transfer, ensure your application sends and receives only necessary data. Compress data where possible.

- Stay Updated: The AI landscape evolves rapidly. New models emerge, pricing changes, and new free tiers are introduced. Regularly check provider documentation and industry news.
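A minimal sketch of retries with exponential backoff and "full jitter" (a random delay between zero and a cap that doubles on each attempt), in Python. RateLimitError is a hypothetical placeholder: substitute the exception your SDK raises for HTTP 429 responses. The sleep parameter exists only so the function can be tested without actually waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Hypothetical stand-in for your SDK's rate-limit (HTTP 429) exception."""


def with_backoff(fn: Callable[[], T], max_retries: int = 5, base_delay: float = 1.0,
                 max_delay: float = 30.0, sleep: Callable[[float], None] = time.sleep) -> T:
    """Call fn(), retrying on RateLimitError with exponential backoff and full jitter."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_retries:
                raise  # out of retries: surface the error to the caller
            # The delay window doubles each attempt (1s, 2s, 4s, ...) up to max_delay;
            # picking a random point in it spreads out retries from many clients.
            window = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, window))
    raise AssertionError("unreachable")
```

Capping both the number of retries and the delay keeps a misbehaving integration from looping indefinitely against a quota.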

Challenges and Limitations of Relying on Free/Cheap APIs

While invaluable, free and cheap AI APIs come with their own set of challenges:

- Rate Limits and Quotas: Free tiers are inherently limited. For applications requiring high throughput or real-time performance, these limits can be quickly hit, necessitating a move to paid plans.

- Scalability: Free tiers are not designed for large-scale production deployments. Scaling an application built on a free tier will invariably lead to increased costs or the need to re-architect.

- Commercial Use Restrictions: Many free tiers explicitly forbid or restrict commercial use. Developers must transition to a paid plan for any revenue-generating application.

- Data Privacy and Security: While reputable providers have strong security measures, sending sensitive data through any third-party API requires due diligence. Understand their data handling policies. For highly sensitive data, self-hosting open-source models or using on-premise solutions might be necessary.

- Support: Free tier users typically receive limited or no dedicated technical support. Community forums are often the primary resource.

- Model Obsolescence: AI models are constantly being updated. A free model you rely on might be deprecated or superseded by a paid version.

- Vendor Lock-in (Even with Free Tiers): Building heavily on one provider's free tier can make it harder to switch later, even if a cheaper or better alternative emerges, due to integration efforts. Unified platforms like XRoute.AI mitigate this risk by offering multiple providers through a single interface.

The Future of Free and Cost-Effective AI APIs

The trend towards more accessible and affordable AI is likely to continue. We can anticipate:

- Increased Competition: As more players enter the AI API market, competitive pricing and more generous free tiers will likely become standard.

- Specialized Models: We'll see more highly specialized, smaller models designed for specific tasks, offering high performance at a lower cost than general-purpose LLMs.

- Open-Source Growth: The open-source AI community will continue to flourish, leading to powerful models that can be self-hosted or accessed via very low-cost community-driven inference services.

- Edge AI Expansion: Running AI models directly on devices (edge AI) will become more prevalent, reducing cloud API calls and enhancing privacy.

- Unified Platforms: The demand for platforms like XRoute.AI, which simplify access, optimize costs, and manage multiple AI providers, will grow significantly. They offer a future where developers can dynamically choose the best model for their needs based on real-time performance and cost.

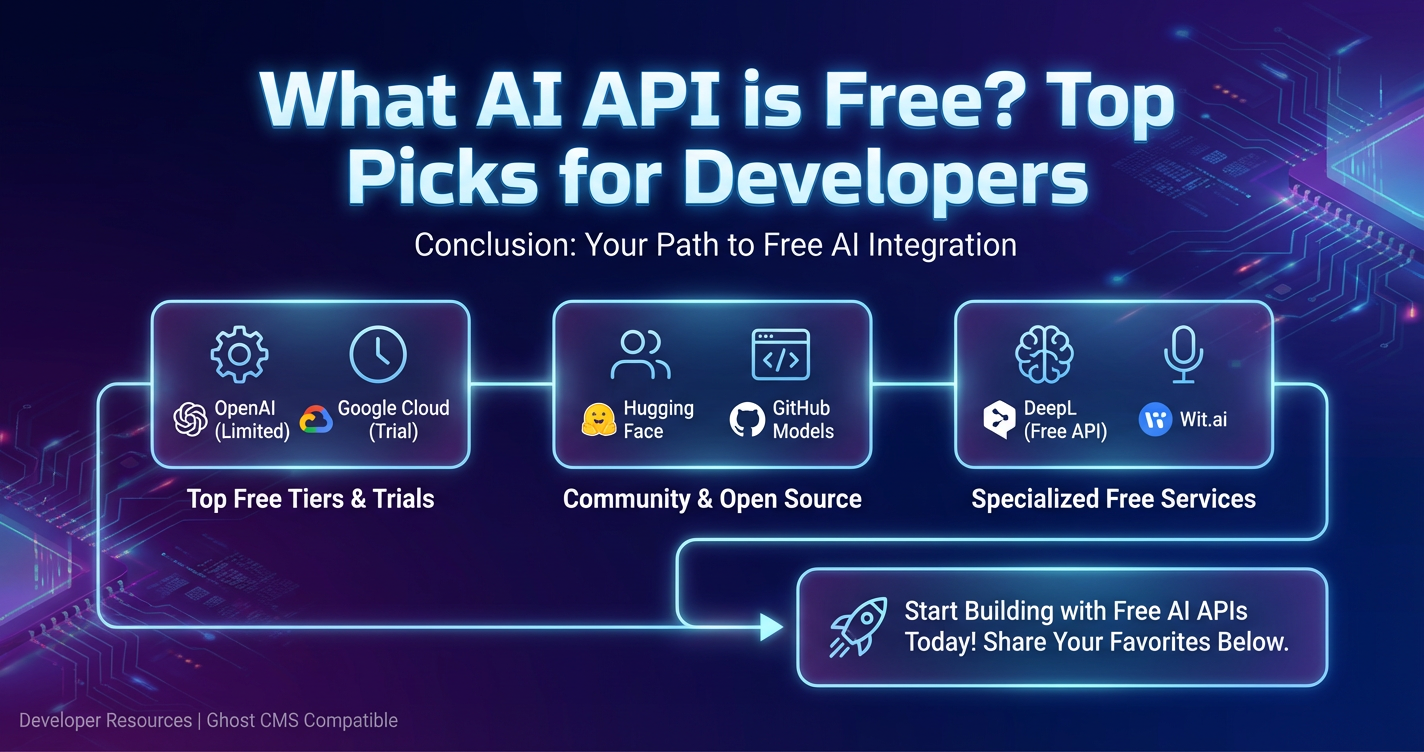

Conclusion

The journey to building AI-powered applications doesn't have to start with a hefty investment. For developers asking "what ai api is free?" or seeking the "cheapest llm api," a wealth of options exist, ranging from generous free tiers of commercial services to the flexibility of open-source models. By understanding the nuances of "free," strategically choosing the right model for the task, and implementing best practices for cost optimization, developers can leverage the transformative power of AI to innovate, learn, and create groundbreaking solutions.

Platforms like XRoute.AI are further simplifying this landscape, offering a unified gateway to a vast array of LLMs and facilitating choices that balance performance, latency, and cost-effectiveness. As the AI ecosystem matures, the tools to build intelligent applications will only become more accessible, empowering a new generation of developers to shape the future.

Frequently Asked Questions (FAQ)

Q1: Are truly free AI APIs suitable for commercial products? A1: Generally, no. While many AI API providers offer free tiers, these are typically designed for prototyping, experimentation, and non-commercial use. They come with strict usage limits (rate limits, token counts) and often exclude commercial applications in their terms of service. For commercial products, you will almost certainly need to transition to a paid plan to ensure scalability, reliability, and compliance with usage agreements.

Q2: How can I monitor my usage to stay within free tiers? A2: Most major AI API providers (like Google Cloud, AWS, OpenAI, Cohere) offer dedicated dashboards or billing sections in their developer consoles where you can track your API usage in real-time. It's crucial to check these regularly. Additionally, you can often set up budget alerts or usage notifications that will inform you when you're approaching your free tier limits or a specific spending threshold.

Q3: What's the main difference between a "free API" and a "free tier API"? A3: A "free API" often implies a service that is perpetually free, typically for specific, limited functionality or for non-commercial projects. These are rarer in the commercial AI space. A "free tier API," on the other hand, is a specific usage allowance provided by a commercial API service (like Google Cloud or OpenAI) that allows you to use a limited amount of their paid service for free for a certain period or up to a specific usage limit. Once you exceed these limits, you'll be charged, or your access may be temporarily suspended.

Q4: Besides cost, what other factors should I consider when choosing an LLM API? A4: Beyond cost, critical factors include:

- Performance and Accuracy: How well does the model perform on your specific task?
- Latency: How quickly does the API respond? Crucial for real-time applications.
- Scalability: Can the API handle your projected load as your application grows?
- Context Window Size: The maximum amount of text (prompt plus response) the model can handle at once.
- Multimodality: Does it support other data types like images or audio?
- Developer Experience: Quality of documentation, SDKs, and community support.
- Data Privacy and Security: How does the provider handle your data?
- Customization/Fine-tuning: Can you fine-tune the model with your data?
- Vendor Lock-in: How easy is it to switch providers if needed? Platforms like XRoute.AI can help mitigate this.

Q5: How do unified API platforms like XRoute.AI help with cost optimization? A5: Unified API platforms like XRoute.AI assist with cost optimization by:

1. Centralized Access: Providing a single endpoint to access multiple LLMs from various providers, allowing you to easily switch between models to find the most cost-effective one for a given task.
2. Dynamic Routing: Some platforms can intelligently route your requests to the cheapest or most performant available model based on your criteria.
3. Simplified Management: Reducing the operational overhead and development time associated with integrating and maintaining separate connections to many different APIs.
4. Competitive Pricing: Some platforms may secure better rates through aggregated volume or offer their own optimized pricing structures.
5. Monitoring and Analytics: Providing tools to monitor usage across all integrated models, making it easier to identify cost-saving opportunities and manage budgets effectively.
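The routing idea in point 2 can be sketched as "pick the cheapest model that is capable enough and whose context window fits the request." This is a hypothetical illustration, not XRoute.AI's actual routing logic: the model labels, capability scores, and the choice to count output tokens against the context window are assumptions, and the prices are the approximate figures from the comparison table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    label: str            # illustrative label, not an exact provider model ID
    input_per_m: float    # dollars per 1M input tokens
    output_per_m: float   # dollars per 1M output tokens
    context_window: int   # tokens
    capability: int       # hypothetical score from your own evals (higher = stronger)

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        return (input_tokens * self.input_per_m + output_tokens * self.output_per_m) / 1_000_000


# Approximate list prices quoted in this article; verify before use.
CANDIDATES = [
    Candidate("gpt-3.5-turbo", 0.50, 1.50, 16_000, 1),
    Candidate("claude-3-haiku", 0.25, 1.25, 200_000, 1),
    Candidate("claude-3-sonnet", 3.00, 15.00, 200_000, 2),
    Candidate("gpt-4o", 5.00, 15.00, 128_000, 3),
]


def route(candidates: list[Candidate], input_tokens: int, output_tokens: int,
          min_capability: int) -> Candidate:
    """Pick the cheapest model that is capable enough and whose context window fits."""
    eligible = [
        c for c in candidates
        if c.capability >= min_capability and input_tokens + output_tokens <= c.context_window
    ]
    if not eligible:
        raise LookupError("no model satisfies the constraints")
    return min(eligible, key=lambda c: c.cost(input_tokens, output_tokens))
```

For a 1,000-token prompt with a 300-token reply, route(CANDIDATES, 1_000, 300, min_capability=1) selects claude-3-haiku, raising the bar to 2 selects claude-3-sonnet, and a 150,000-token prompt that needs capability 3 raises LookupError because gpt-4o's 128K window is too small.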

🚀 You can connect to the models available through XRoute in just two steps:

Step 1: Create Your API Key

To start using XRoute.AI, the first step is to create an account and generate your XRoute API KEY. This key unlocks access to the platform’s unified API interface, allowing you to connect to a vast ecosystem of large language models with minimal setup.

Here’s how to do it:

1. Visit https://xroute.ai/ and sign up for a free account.
2. Upon registration, explore the platform.
3. Navigate to the user dashboard and generate your XRoute API KEY.

This process takes less than a minute, and your API key will serve as the gateway to XRoute.AI’s robust developer tools, enabling seamless integration with LLM APIs for your projects.

Step 2: Select a Model and Make API Calls

Once you have your XRoute API KEY, you can select from over 60 large language models available on XRoute.AI and start making API calls. The platform’s OpenAI-compatible endpoint ensures that you can easily integrate models into your applications using just a few lines of code.

Here’s a sample configuration to call an LLM:

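```bash
# Assumes your XRoute API key is stored in the shell variable $apikey.
# Double quotes on the Authorization header let the shell expand it.
curl --location 'https://api.xroute.ai/openai/v1/chat/completions' \
  --header "Authorization: Bearer $apikey" \
  --header 'Content-Type: application/json' \
  --data '{
    "model": "gpt-5",
    "messages": [
      {
        "content": "Your text prompt here",
        "role": "user"
      }
    ]
  }'
```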
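The same request can be made from Python with only the standard library. A minimal sketch, assuming the endpoint accepts the OpenAI chat-completions format as described above; the XROUTE_API_KEY environment variable name is our own choice, and reading the key from the environment follows the advice on securing API keys.

```python
import json
import os
import urllib.request
from typing import Optional

# Endpoint and model name taken from the curl example above.
XROUTE_URL = "https://api.xroute.ai/openai/v1/chat/completions"


def build_chat_request(prompt: str, model: str = "gpt-5",
                       api_key: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    key = api_key or os.environ["XROUTE_API_KEY"]  # hypothetical env var name
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        XROUTE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {key}", "Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str = "gpt-5", timeout: float = 60.0) -> str:
    """Send the request and return the first reply's text."""
    with urllib.request.urlopen(build_chat_request(prompt, model), timeout=timeout) as resp:
        data = json.loads(resp.read())
    # Assumes the standard OpenAI chat-completions response shape.
    return data["choices"][0]["message"]["content"]
```

chat("Explain rate limiting in one sentence.") sends the request; build_chat_request can be inspected on its own without touching the network.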

With this setup, your application can connect to XRoute.AI’s unified API platform, leveraging low latency AI and high throughput. XRoute.AI manages provider routing, load balancing, and failover, ensuring reliable performance for real-time applications like chatbots, data analysis tools, or automated workflows. You can also purchase additional API credits to scale your usage as needed, making it a cost-effective AI solution for projects of all sizes.

Note: Explore the documentation on https://xroute.ai/ for model-specific details, SDKs, and open-source examples to accelerate your development.